Sample Touch Research Paper.

This research paper describes a sensory modality that underlies the most common everyday activities: maintaining one’s posture, scratching an itch, or picking up a spoon. As a topic of psychological research, touch has received far less attention than vision has. However, the substantial literature that is available covers topics from neurophysiology, through basic psychophysics, to cognitive issues such as memory and object recognition. All these topics are reviewed in the current research paper.

We begin by defining the modality of touch as comprising different submodalities, characterized by their neural inputs. A brief review of neurophysiological and basic psychophysical findings follows. The research paper then pursues a number of topics concerning higher-level perception and cognition. Touch is emphasized as an active modality in which the perceiver seeks information from the world by exploratory movements. We ask how properties of objects and surfaces, such as roughness or size, are perceived through contact and movement. We discuss the accuracy of haptic space perception and why movement might introduce systematic errors or illusions. Next comes an evaluation of touch as a pattern-recognition system, where the patterns range from two-dimensional arrays like Braille to real, free-standing objects. In everyday perception, touch and vision operate together; this research paper offers a discussion of how these modalities interact. Higher-level cognition, including attention and memory, is considered next. The research paper concludes with a review of some applications of research on touch.

A number of common themes underlie these topics. One is the idea that perceptual modalities are similar with respect to general functions they attempt to serve, such as conveying information about objects and space. Another is that by virtue of having distinct neural structures and relying on movement for input, touch has unique characteristics. The research paper makes the point that touch and vision interact cooperatively in extracting information about the world, but that the two modalities represent different priorities, with touch emphasizing information about material properties and vision emphasizing spatial and geometric properties. Thus there is a remarkable balance between redundant and complementary functions across vision and touch. A final theme of the present research paper is that research on touch has exciting applications to everyday problems.

Touch Defined as an Active, Multisensory System

The modality of touch encompasses several distinct sensory systems. Most researchers have distinguished among three systems—cutaneous, kinesthetic, and haptic—on the basis of the underlying neural inputs. In the terminology of Loomis and Lederman (1986), the cutaneous system receives sensory inputs from mechanoreceptors—specialized nerve endings that respond to mechanical stimulation (force)—that are embedded in the skin. The kinesthetic system receives sensory inputs from mechanoreceptors located within the body’s muscles, tendons, and joints. The haptic system uses combined inputs from both the cutaneous and kinesthetic systems. The term haptic is associated in particular with active touch. In an everyday context, touch is active; the sensory apparatus is intertwined with the body structures that produce movement. By virtue of moving the limbs and skin with respect to surfaces and objects, the basic sensory inputs to touch are enhanced, allowing this modality to reveal a rich array of properties of the world.

When investigating the properties of the peripheral sensory system, however, researchers have often used passive, not active, displays. Accordingly, a basic distinction has arisen between active and passive modes of touch. Unfortunately, over the years the meaning and use of these terms have proven to be somewhat variable. On occasion, J. J. Gibson (1962, 1966) treated passive touch as restricted to cutaneous (skin) inputs. However, at other times Gibson described passive touch as the absence of motor commands to the muscles (i.e., efferent commands) during the process of information pickup. For example, if an experimenter shaped a subject’s hands so as to enclose an object, it would be a case of active touch by the first criterion, but passive touch by the second one. We prefer to use Loomis and Lederman’s (1986) distinctions between types of active versus passive touch. They combined Gibson’s latter criterion, the presence or absence of motor control, with the three-way classification of sensory systems by the afferent inputs used (i.e., cutaneous, kinesthetic, and haptic). This conjunction yielded five different modes of touch: (a) tactile (cutaneous) perception, (b) passive kinesthetic perception (kinesthetic afferents respond without voluntary movement), (c) passive haptic perception (cutaneous and kinesthetic afferents respond without voluntary movement), (d) active kinesthetic perception, and (e) active haptic perception. The observer only has motor control over the touch process in modes d and e.
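The five-way classification above can be written down as a small lookup structure. The following is only an illustrative sketch: the names `TouchMode`, `TOUCH_MODES`, and `active_modes` are hypothetical and do not come from Loomis and Lederman (1986). It encodes each mode by its afferent inputs and by whether the observer has motor control.

```python
from typing import NamedTuple


class TouchMode(NamedTuple):
    """One mode of touch (hypothetical container, for illustration only)."""
    label: str
    afferents: str       # "cutaneous", "kinesthetic", or "haptic" (both combined)
    motor_control: bool  # True when the observer controls the movement


# The five modes listed in the text, in order (a) through (e).
TOUCH_MODES = [
    TouchMode("tactile (cutaneous) perception", "cutaneous", False),
    TouchMode("passive kinesthetic perception", "kinesthetic", False),
    TouchMode("passive haptic perception", "haptic", False),
    TouchMode("active kinesthetic perception", "kinesthetic", True),
    TouchMode("active haptic perception", "haptic", True),
]


def active_modes(modes=TOUCH_MODES):
    """Return the labels of the modes in which the observer has motor control."""
    return [m.label for m in modes if m.motor_control]


if __name__ == "__main__":
    for label in active_modes():
        print(label)
```

Filtering on `motor_control` recovers modes (d) and (e), the only two in which the observer controls the touch process.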

In addition to mechanical stimulation, the inputs to the touch modality include heat, cooling, and various stimuli that produce pain. Tactile scientists distinguish a person’s subjective sensations of touch per se (e.g., pressure, spatial acuity, position) from those pertaining to temperature and pain. Not only is the quality of sensation different, but so too are the neural pathways. This research paper primarily discusses touch and, to a lesser extent, thermal subsystems, inasmuch as thermal cues provide an important source of sensory information for purposes of haptic object recognition. Overviews of thermal sensitivity have been provided by Sherrick and Cholewiak (1986) and by J. C. Stevens (1991). The topic of pain is not extensively discussed here, but reviews of pain responsiveness by Sherrick and Cholewiak (1986) and, more recently, by Craig and Rollman (1999) are recommended.

The Neurophysiology of Touch

The Skin and Its Receptors

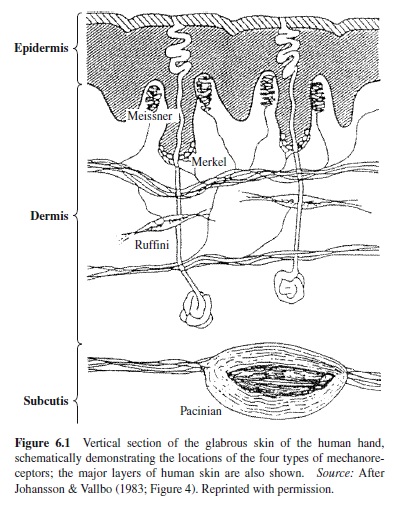

The skin is the largest sense organ in the body. In the average adult, it covers close to 2 m² and weighs about 3–5 kg (Quilliam, 1978). As shown in Figure 6.1, it consists of two major layers: the epidermis (outer) and the dermis (inner). The encapsulated endings of the mechanoreceptor units, which are believed to be responsible for transducing mechanical energy into neural responses, are found in both layers, as well as at the interface between the two. A third layer lies underneath the dermis and above the supporting structures made up of muscle and bone. Although not considered part of the formal medical definition of skin, this additional layer (the hypodermis) contains connective tissue and subcutaneous fat, as well as one population of mechanoreceptor end organs (Pacinian corpuscles).

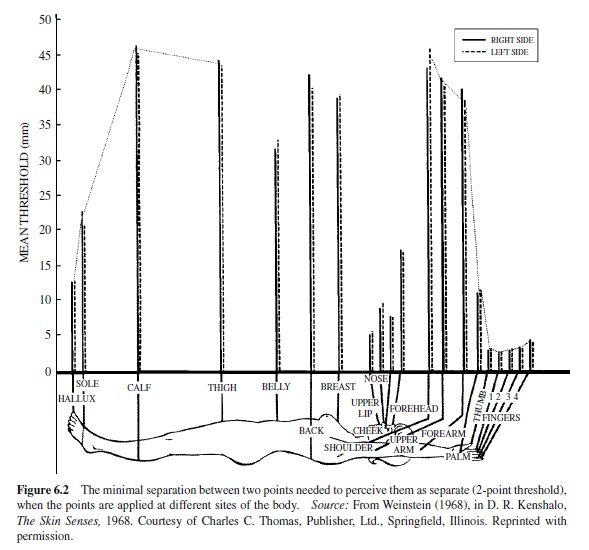

We focus here on the volar portion of the human hand, because the remainder of this research paper considers interactions of the hand with the world. This skin, which is described as glabrous (hairless), contains four different populations of cutaneous mechanoreceptor afferent units. These populations are differentiated in terms of both relative receptive field size and adaptation responses to sustained and transient stimulation (see Table 6.1).

The two fast-adapting populations (FA units) show rapid responses to the onset, and sometimes the offset, of skin deformation. In addition, FAI (fast adapting type I) units have very small, well-defined receptive fields, whereas FAII (fast adapting type II) units have large receptive fields with poorly defined boundaries. FAI units respond particularly well to rate of skin deformation, and they are presumed to end in Meissner’s corpuscles. FAII units respond reliably to both the onset and offset of skin deformation, particularly acceleration and higher-derivative components, and have been shown to terminate in Pacinian corpuscles. The two slow-adapting populations (SA units) show a continuous response to sustained skin deformation. SAI (slow adapting type I) units demonstrate a strong dynamic sensitivity, as well as a somewhat irregular response to sustained stimulation. They are presumed to end in Merkel cell neurite complexes (see Figure 6.1). SAII (slow adapting type II) units show less dynamic sensitivity but a more regular sustained discharge, as well as spontaneous discharge sometimes in the absence of skin deformation; they are presumed to end in Ruffini endings. Bolanowski, Gescheider, Verrillo, and Checkosky (1988) have developed a four-channel model of mechanoreception, which associates psychophysical functions with the tuning curves of mechanoreceptor populations. Each of the four mechanoreceptors is presumed to produce different psychophysical responses, constituting a sensory channel, so to speak.

Response to thermal stimulation is mediated by several peripheral cutaneous receptor populations that lie near the body surface. Researchers have documented the existence of separate “warm” and “cold” thermoreceptor populations in the skin; such receptors are thought to be primarily responsible for thermal sensations. Nociceptor units respond only to extremes (noxious) in temperature (or sometimes mechanical) stimulation, but these are believed to be involved in pain rather than temperature sensation.

Response to noxious stimulation has received an enormous amount of attention. Here, we simply note that two populations of peripheral afferent fibers (high-threshold nociceptors) in the skin have been shown to contribute to pain transmission: the larger, myelinated A-delta fibers and the narrow, unmyelinated C fibers.

Mechanoreceptors in the muscles, tendons, and joints (and in the case of the hand, in skin as well) contribute to the kinesthetic sense of position and movement of the limbs. With respect to muscle, the muscle spindles contain two types of sensory endings: Large-diameter primary endings code for rate of change in the length of the muscle fibers, dynamic stretch, and vibration; smaller-diameter secondary endings are primarily sensitive to the static phase of muscle activity. It is now known that joint angle is coded primarily by muscle length. Golgi tendon organs are spindle-shaped receptors that lie in series with skeletal muscle fibers. These receptors code muscle tension. Finally, afferent units of the joints are now known to code primarily for extreme, but not intermediate, joint positions. As they do not code for intermediate joint positions, it has been suggested that they serve mainly a protective function—detecting noxious stimulation. The way in which the kinesthetic mechanoreceptor units mediate perceptual outcomes is not well understood, especially in comparison to cutaneous mechanoreceptors. For further details on kinesthesis, see reviews by Clark and Horch (1986) and by Jones (1999).

Pathways to Cortex and Major Cortical Areas

Peripheral units in the skin and muscles congregate into single nerve trunks at each vertebral level as they are about to enter the spinal cord. At each level, their cell bodies cluster together in the dorsal root ganglion. These ganglia form chains along either side of the spinal cord. The proximal ends of the peripheral units enter the dorsal horn of the spinal cord, where they form two major ascending pathways: the dorsal column-medial lemniscal system and the anterolateral system. The dorsal column-medial lemniscal system carries information about tactile sensation and limb kinesthesis. Of the two systems, it conducts more rapidly because it ascends directly to the cortex with few synapses. The anterolateral system carries information about temperature and pain and, to a considerably lesser extent, touch. This route is slower than the dorsal column-medial lemniscal system because it involves many synapses between the periphery and the cortex. The two pathways remain segregated until they converge at the thalamus, although even there the separation is preserved. The primary cortical receiving area for the somatic senses, S-I, lies in the postcentral gyrus and in the depths of the central sulcus. It consists of four functional areas, which when ordered from the central sulcus back to the posterior parietal lobe, are known as Brodmann’s areas 3a, 3b, 1, and 2. Lateral and somewhat posterior to S-I is S-II, the secondary somatic sensory cortex, which lies in the upper bank of the lateral sulcus. S-II receives its main inputs from S-I. The posterior parietal lobe (Brodmann’s areas 5 and 7) also receives somatic inputs. It serves higher-level associative functions, such as relating sensory and motor processing, and integrating the various somatic inputs (for further details, see Kandel, Schwartz, & Jessell, 1991).

Sensory Aspects of Touch

Cutaneous Sensitivity and Resolution

Tests of absolute and relative sensitivity to applied force describe people’s threshold responses to intensive aspects of mechanical deformation (e.g., the depth of penetration of a probe into the skin). In addition, sensation magnitude has been scaled as a function of stimulus amplitude, in order to reveal the relation between perceptual response and stimulus variables at suprathreshold levels. Corresponding psychophysical experiments have been performed to determine sensitivity to warmth and cold, and to pain.

The spatial resolving capacity of the skin has been measured in a variety of ways, including the classical two-point discrimination method, which determines the minimum separation at which two punctate stimuli are perceived as two points rather than one. However, Johnson and Phillips (1981; see also Craig & Johnson, 2000; Loomis, 1979) have argued persuasively that grating orientation discrimination provides a more stable and valid assessment of the human capacity for cutaneous spatial resolution. Using spatial gratings, the spatial acuity of the skin has been found to be about 1 mm.

The temporal resolving capacity of the skin has been evaluated with a number of different methods (see Sherrick & Cholewiak, 1986). For example, it has been assessed in terms of sensitivity to vibratory frequency. Experiments have shown that human adults are able to detect vibrations up to about 700 Hz, which suggests that they can resolve temporal intervals as small as about 1.4 ms (e.g., Verrillo, 1963). A more conservative estimate (5.5 ms) was obtained when determining the minimum separation time between two 1-ms pulse stimuli that is required for an observer to perceive them as successive.
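The step from the upper frequency limit to a temporal interval is just the period of the vibration. As a quick check on the figure quoted above (the symbols $T$ and $f$ are introduced here for illustration only):

$$
T = \frac{1}{f} = \frac{1}{700\ \text{Hz}} \approx 1.43 \times 10^{-3}\ \text{s} \approx 1.4\ \text{ms}
$$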

Overall, the experimental data suggest that the hand is poorer than the eye and better than the ear in resolving fine spatial details. On the other hand, it has proven to be better than the eye and poorer than the ear in resolving fine temporal details.

Effects of Body Site and Age on Cutaneous Thresholds

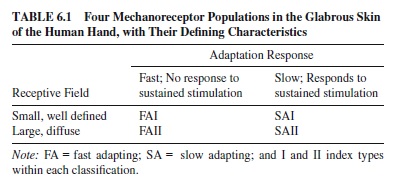

It has long been known that the sensitivity, acuity, and magnitude of tactile and thermal sensations can vary quite substantially as a function of the body locus of stimulation (for details, see van Boven & Johnson, 1994; Stevens, 1991; Weinstein, 1968; Wilska, 1954). For example, the face (i.e., upper lip, cheek, and nose) is best able to detect a low-level force, whereas the fingers are most efficient at processing spatial information. The two-point threshold is shown for various body sites in Figure 6.2.

More recently, researchers have addressed the effect of chronological age on cutaneous thresholds (for details, see Verrillo, 1993). One approach to studying aging effects is to examine the vibratory threshold (the skin displacement at which a vibration becomes detectable) as a function of age. A number of studies converge to indicate that aging particularly affects thresholds for vibrations in the range detected by the Pacinian corpuscles (i.e., at frequencies above 40 Hz; see Gescheider, Bolanowski, Verrillo, Hall, & Hoffman, 1994; Verrillo, 1993). The rise in the threshold with age has been attributed to the loss of receptors. By this account, the Pacinian threshold is affected more than are other channels because it is the only one whose response depends on summation of receptor outputs over space and time (Gescheider, Edwards, Lackner, Bolanowski, & Verrillo, 1996). Although the ability to detect a vibration in the Pacinian range is substantially affected by age, the difference limen (the change in amplitude needed to produce a discriminable departure from a baseline value) varies little after the baseline values are adjusted for the age-related differences in detection threshold (i.e., the baselines are equated for magnitude of sensation relative to threshold; Gescheider et al., 1996).

Cutaneous spatial acuity has also been demonstrated to decline with age. Stevens and Patterson (1995) reported an approximate 1% increase in threshold per year over the ages of 20 to 80 years for each of four acuity measures. The measures were thresholds, as follows: minimum separation of a 2-point stimulus that allows discrimination of its orientation on the finger (transverse vs. longitudinal), minimum separation between points that allows detection of gaps in lines or disks, minimum change in locus that allows discrimination between successive touches on the same or different skin site, and difference limen for length of a line stimulus applied to the skin.

The losses in cutaneous sensitivity that have been described can have profound consequences for everyday life in older persons because the mechanoreceptors function critically in basic processes of grasping and manipulation.

Sensory-Guided Grasping and Manipulation

Persons who have sustained peripheral nerve injury to their hands are often clumsy when grasping and manipulating objects. Such persons will frequently drop the objects; moreover, when handling dangerous tools (e.g., a knife), they can cut themselves quite badly. Older adults, whose cutaneous thresholds are elevated, tend to grip objects more tightly than is needed in order to manipulate them (Cole, 1991). Experiments have now confirmed what these observations suggest: Namely, cutaneous information plays a critical role in guiding motor interactions with objects following initial contact.

Neurophysiological evidence by Johansson and his colleagues (see review by Johansson & Westling, 1990) has clearly shown that the mechanoreceptor populations present in glabrous skin of the hand, particularly the FAI receptors, contribute in vital ways to the skill with which people are able to grasp, lift, and manipulate objects using a precision grip (a thumb-forefinger pinch). The grasp-lift action requires that people coordinate the grip and load forces (i.e., forces perpendicular and tangential to the object grasped, respectively) over a sequence of stages. The information from cutaneous receptors enables people to grasp objects highly efficiently, applying force just sufficient to keep them from slipping. In addition to using cutaneous inputs, people use memory for previous experience with the weight and slipperiness of an object in order to anticipate the forces that must be applied. Johansson and Westling have suggested that this sensorimotor form of memory involves programmed muscle commands. If the anticipatory plan is inappropriate—for example, if the object slips from the grasp or it is lighter than expected and the person overgrips—the sensorimotor trace must be updated. Overt errors can often be prevented, however, because the cutaneous receptors, particularly the FAIs, signal when slip is about to occur, while the grip force can still be corrected.
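The relation between grip force and load force can be made concrete with a simple friction calculation. The sketch below assumes a Coulomb friction model and a two-contact pinch grip. These are simplifying assumptions introduced here, not taken from Johansson and Westling, and the function names and example values are hypothetical.

```python
def min_grip_force(load_n: float, friction_coeff: float, n_contacts: int = 2) -> float:
    """Smallest grip (normal) force, in newtons, that prevents slip.

    Assumes Coulomb friction: each contact can resist a tangential force of
    at most friction_coeff * grip, so the total load must not exceed
    n_contacts * friction_coeff * grip.
    """
    if friction_coeff <= 0 or n_contacts <= 0:
        raise ValueError("friction_coeff and n_contacts must be positive")
    return load_n / (n_contacts * friction_coeff)


def will_slip(grip_n: float, load_n: float, friction_coeff: float, n_contacts: int = 2) -> bool:
    """True when the applied grip force is too small to hold the load."""
    return grip_n < min_grip_force(load_n, friction_coeff, n_contacts)


if __name__ == "__main__":
    load = 2.0  # hypothetical load force in newtons
    for mu in (0.5, 0.25):  # hypothetical friction coefficients; lower is more slippery
        print(f"mu={mu}: minimum grip = {min_grip_force(load, mu):.1f} N")
```

Under these assumptions, halving the friction coefficient doubles the grip force needed for the same load, which is consistent with the observation that slipperier objects call for a tighter grip.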

Haptic Perception of Properties of Objects and Surfaces

Up to this point, this research paper has discussed the properties of touch that regulate very early processing. The paper now turns to issues of higher-level processing, including representations of the perceived world, memory and cognition about that world, and interactions with other perceptual modalities. A considerable amount of work has been done in these areas since the review of Loomis and Lederman (1986). We begin with issues of representation. What is it about the haptically perceived world—its surfaces, objects, and their spatial relations—that we represent through touch?

Klatzky and Lederman (1999a) pointed out that the haptic system begins extracting attributes of surfaces and objects from the level of the most peripheral units. This contrasts with vision, in which the earliest output from receptors codes the distribution of points of light, and considerable higher-order processing ensues before fundamental attributes of objects become defined.

The earliest output from mechanoreceptors and thermal receptors codes attributes of objects directly through various mechanisms. There may be different populations of peripheral receptors, each tuned to a particular level of some dimension along which stimuli vary. An example of this mechanism can be found in the two populations of thermoreceptors, which code different (but overlapping) ranges of heat flow. Another example can be found in the frequency-based tuning functions of the mechanoreceptors (Johansson, Landstrom, & Lundstrom, 1982), which divide the continuum of vibratory stimuli. Stimulus distinctions can be made within single units as well: for example, by phase locking of the unit’s output to a vibratory input (i.e., the unit fires at some multiple of the input frequency). The firing rate of a single unit can indicate a property such as the sharpness of a punctate stimulus (Vierck, 1979). Above the level of the initial receptor populations are populations that combine inputs from the receptors to produce integrative codes. As is later described, the perception of surface roughness appears to result from the integration at cortical levels of inputs from populations of SAI receptors. Multiple inputs from receptors may also be converted to maps that define spatial features of surfaces pressed against the fingertip, such as curvature (LaMotte & Srinivasan, 1993; Vierck, 1979).

Ultimately, activity from receptors to the brain leads to a representation of a world of objects and surfaces, defined in spatial relation to one another, each bound to a set of enduring physical properties. We now turn to the principal properties that are part of that representation.

Haptically Perceptible Properties

Klatzky and Lederman (1993) suggested a hierarchical organization of object properties extracted by the haptic system. At the highest level, a distinction is made between geometric properties of objects and material properties. Geometric properties are specific to particular objects, whereas material properties are independent of any one sampled object.

At the next level of the hierarchy, the geometric properties are divided into size and shape. There are two natural scales for these properties within the haptic system, differentiated by the role of cutaneous versus kinesthetic receptors, which we call micro- and macrogeometric. At the microgeometric level, an object is small enough to fall within a single region of skin, such as the fingertip. This produces a spatial deformation pattern on the skin that is coded by the mechanoreceptors (particularly the SAIs) and functions essentially as a map of the object’s spatial layout. This map might be called 2-1/2 D, after Marr (1982), in that the coding pertains only to the surfaces that are in contact with the finger. The representation extends into depth because the fingertip accommodates so as to have differential pressure from surface planes lying at different depths. At the macrogeometric level, objects do not fall within a single region of the skin, but rather are enveloped in hands or limbs, bringing in the contribution of kinesthetic receptors and skin sites that are not somatotopically continuous, such as multiple fingers. Integration of these inputs must be performed to determine the geometry of the objects.

The hierarchical organization of Klatzky and Lederman further differentiates material properties into texture, hardness (or compliance), and apparent temperature. Texture comprises many perceptually distinct properties, such as roughness, stickiness, and spatial density. Roughness has been the most extensively studied, and we treat it in some detail in a following section. Compliance perception has both cutaneous and kinesthetic components, the relative contributions of which depend on the rigidity of the object’s surface (Srinivasan & LaMotte, 1995). For example, a piano key is rigid on the surface but compliant, and kinesthesis is a necessary input to the perception that it is a hard or soft key to press. Although cutaneous cues are necessary, they are not sufficient, because the skin bottoms out, so to speak, whether the key is resistant or compliant. On the other hand, a cotton ball deforms as it is penetrated, causing a cutaneous gradient that may be sufficient by itself to discriminate compliance. Another property of objects is weight, which reflects geometry and material. Although an object’s weight is defined by its total mass, which reflects density and volume, we will see that perceived weight can be affected by the object’s material, shape, and identity.

A complete review of the literature on haptic perception of object properties would go far beyond the scope of this research paper. Here, we treat three of the most commonly studied properties in some detail: texture, weight, and curvature. Each of these properties can be defined at different scales, although the meaning of scale varies with the particular dimension of interest. The mechanisms of haptic perception may be profoundly affected by scale.

Roughness

A textured surface has protuberant elements arising from a relatively homogeneous substrate. The surface can be characterized as having macrotexture or microtexture, depending on the spacing between surface elements. Different mechanisms appear to mediate roughness perception at these two scales. In a microtexture, the elements are spaced at intervals on the order of microns (thousandths of a millimeter); in a macrotexture, the spacing is one or two orders of magnitude greater, or more. When the elements get too sparse, on the order of 3–4 mm apart or so, people tend to be reluctant to characterize the surface as textured. Rather, it appears to be a smooth surface punctuated by irregularities.

Early research determined some of the primary physical determinants of perceived roughness with macrotextures (i.e., ≥ 1 mm spacing between elements). For example, Lederman (Lederman, 1974, 1983; Lederman & Taylor, 1972; see also Connor, Hsiao, Phillips, & Johnson, 1990; Connor & Johnson, 1992; Sathian, Goodwin, John, & Darian-Smith, 1989; Sinclair & Burton, 1991; Stevens & Harris, 1962), using textures that took the form of grooves with rectangular profiles, found that perceived roughness strongly increased with the spacing between the ridges (groove width). Increases in ridge width (that is, the size of the peaks rather than the troughs in the surface) had a relatively modest effect, tending to decrease perceived roughness. Although roughness was principally affected by the geometry of the surface, the way in which the surface was explored also had some effect. Increasing applied fingertip force increased the magnitude of perceived roughness, and the speed of relative motion between hand and surface had a small but systematic effect on perceived roughness. Finally, conditions of active versus passive control over the speed-of-hand motion led to similar roughness judgments, suggesting that kinesthesis plays a minimal role, and that the manner in which the skin is deformed is critical.

Taylor and Lederman (1975) constructed a model of perceived roughness, based on a mechanical analysis of the skin deformation resulting from changes in groove width, fingertip force, and ridge width. Their model suggested that perceived roughness of gratings was based on the total amount of skin deformation produced by the stimulus. Taylor and Lederman described the representation of roughness in terms of this proximal stimulus as “intensive” because the deformation appeared to be integrated over the entire area of contact, resulting in an essentially unidimensional percept.

The neural basis for coding roughness has been modeled by Johnson, Connor, and associates (Connor et al., 1990; Connor & Johnson, 1992). The model assumes that initial coding of the textured surface is in terms of the relative activity rates of spatially distributed SAI mechanoreceptors. The spatial map is preserved in S-I, the primary somatosensory cortex (specifically, area 3b), which computes differences in activity of adjacent (1 mm apart) SAI units. These differences in spatially distributed activity are passed along to neurons in S-II, another somatosensory cortical area that integrates the information from the primary cortex (Hsiao, Johnson, & Twombly, 1993).
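The idea of coding roughness through differences in the activity of neighboring SAI units can be illustrated with a toy calculation. The sketch below is not the published model: the function name `spatial_variation`, the use of a mean absolute difference, and the firing rates are all assumptions chosen only to show how a spatially patterned response differs from a uniform one.

```python
def spatial_variation(rates):
    """Mean absolute difference in firing rate between adjacent units.

    `rates` is a sequence of firing rates (spikes/s) from units assumed to be
    spaced about 1 mm apart along the skin. The mean absolute difference is an
    illustrative stand-in for the difference computation described in the text.
    """
    if len(rates) < 2:
        raise ValueError("need at least two units to compare neighbors")
    diffs = [abs(b - a) for a, b in zip(rates, rates[1:])]
    return sum(diffs) / len(diffs)


if __name__ == "__main__":
    smooth = [10, 10, 10, 10]  # hypothetical uniform response to a smooth surface
    coarse = [0, 20, 0, 20]    # hypothetical alternating response to a coarse grating
    print(spatial_variation(smooth), spatial_variation(coarse))
```

Both hypothetical populations have the same mean firing rate, yet only the patterned one yields a nonzero measure. On this kind of account it is the spatial variation in activity, rather than its overall level, that carries the roughness signal.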

Although vibratory signals exist, psychophysical studies suggest that humans tend not to use vibration to judge macrotextures presented to the bare skin. Roughness judgments were unaffected by the spatial period of stimulus gratings (Lederman, 1974, 1983) and minimally affected by movement speed (Katz, 1925/1989; Lederman, 1974, 1983), both of which should alter vibration; they were also unaffected by either low- or high-frequency vibrotactile adaptation (Lederman, Loomis, & Williams, 1982). Vibratory coding of roughness does, however, occur with very fine microtextures. LaMotte and Srinivasan (1991) found that observers could discriminate a featureless surface from a texture with height .06–.16 microns and interelement spacing ~100 microns. Subjects reported attending to the vibration from stroking the texture. Moreover, measures of mechanoreceptor activity in monkeys passively exposed to the same surfaces implicated the FAII (or PC) units, which respond to relatively high-frequency vibrations (peak response ~250 Hz; Johansson & Vallbo, 1983). Vibrotactile adaptation affected perceived roughness of fine but not coarse surfaces (Hollins, Bensmaia, & Risner, 1998).

Somewhat surprisingly, the textural scale where spatial coding of macrotexture changes to vibratory coding of microtexture appears to be below the limit of tactile spatial resolution (.5–1.0 mm). Dorsch, Yoshioka, Hsiao, and Johnson (2000) reported that SAI activity, which implicates spatial coding, was correlated with roughness perception over a range of gratings that began with a .1-mm groove width. Using particulate textures, Hollins and Risner (2000) found evidence for a transition between vibratory and spatial coding at a similar particle size.

Weight

The perception of weight has been of interest for a time approaching two centuries, since the work of Weber (1834/1978). Weber pointed out that the impression of an object’s heaviness was greater when it was wielded than when it rested passively on the skin, suggesting that the perception of weight was not entirely determined by its objective value. In the late 1800s (Charpentier, 1891; Dresslar, 1894), the discovery of the size-weight illusion—that given equal objective weight, a smaller object seems heavier—pointed to the fact that multiple physical factors determine heaviness perception. Recently, Amazeen and Turvey (1996) have integrated a body of work on the size-weight illusion and weight perception by accounting for perceived weight in terms of resistance to the rotational forces imposed by the limbs as an object is held and wielded. Their task requires the subject to wield an object at the end of a rod or handle, precluding volumetric shape cues. Formally, resistance to wielding is defined by an entity called the inertia tensor, a three-by-three matrix whose elements represent the resistance to rotational acceleration about the axes of a three-dimensional coordinate system that is imposed on the object around the center of rotation. Although the inertia tensor will vary with the coordinate system that is imposed on the object, its eigenvalues are invariant. (The eigenvalues of a matrix are scalars that, together with a set of eigenvectors—essentially, coordinate axes—can be used to reconstruct it.) They correspond to the principal moments of inertia: that is, the resistances to rotation about a nonarbitrary coordinate system that uses the primary axes of the object (those around which the mass is balanced). 
In a series of experiments in which the eigenvalues were manipulated and the seminal data on the size-weight illusion were analyzed (Stevens & Rubin, 1970), Amazeen and Turvey found that heaviness was directly related to the product of power functions of the eigenvalues (specifically, the first and third). This finding explains why weight is not dictated simply by mass alone; the reliance of heaviness perception on resistance to rotation means that it will also be affected by geometric factors.
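The invariance of the eigenvalues can be checked numerically. The following is a minimal sketch, not Amazeen and Turvey's procedure: the point masses, their positions, and the function names are hypothetical, and the exponents passed to `heaviness_index` are placeholders rather than fitted values from the source. It builds the inertia tensor of the same object in two coordinate systems and compares the eigenvalues.

```python
import math

def inertia_tensor(masses, points):
    """Inertia tensor of point masses about the origin (the rotation point)."""
    tensor = [[0.0] * 3 for _ in range(3)]
    for m, r in zip(masses, points):
        r2 = sum(c * c for c in r)
        for i in range(3):
            for j in range(3):
                delta = 1.0 if i == j else 0.0
                tensor[i][j] += m * (r2 * delta - r[i] * r[j])
    return tensor

def eigenvalues_sym3(a):
    """Eigenvalues of a symmetric 3x3 matrix, largest first (trigonometric closed form)."""
    p1 = a[0][1] ** 2 + a[0][2] ** 2 + a[1][2] ** 2
    if p1 == 0.0:  # matrix is already diagonal
        return sorted((a[0][0], a[1][1], a[2][2]), reverse=True)
    q = (a[0][0] + a[1][1] + a[2][2]) / 3.0
    p2 = sum((a[i][i] - q) ** 2 for i in range(3)) + 2.0 * p1
    p = math.sqrt(p2 / 6.0)
    b = [[(a[i][j] - (q if i == j else 0.0)) / p for j in range(3)] for i in range(3)]
    det_b = (b[0][0] * (b[1][1] * b[2][2] - b[1][2] * b[2][1])
             - b[0][1] * (b[1][0] * b[2][2] - b[1][2] * b[2][0])
             + b[0][2] * (b[1][0] * b[2][1] - b[1][1] * b[2][0]))
    r = max(-1.0, min(1.0, det_b / 2.0))  # guard against rounding outside [-1, 1]
    phi = math.acos(r) / 3.0
    e1 = q + 2.0 * p * math.cos(phi)
    e3 = q + 2.0 * p * math.cos(phi + 2.0 * math.pi / 3.0)
    e2 = 3.0 * q - e1 - e3
    return [e1, e2, e3]

def rotate_z(point, angle):
    """Express a point in axes rotated about z (equivalent to turning the object)."""
    x, y, z = point
    c, s = math.cos(angle), math.sin(angle)
    return (c * x - s * y, s * x + c * y, z)

def heaviness_index(i1, i3, a, b):
    """Product of power functions of the first and third eigenvalues.
    The exponents a and b are placeholders, not values reported in the source."""
    return (i1 ** a) * (i3 ** b)

# Hypothetical object: three point masses (kg) at positions (m) from the rotation point.
masses = [0.2, 0.1, 0.3]
points = [(0.30, 0.02, 0.00), (0.10, -0.03, 0.01), (0.45, 0.00, -0.02)]

tensor_a = inertia_tensor(masses, points)
tensor_b = inertia_tensor(masses, [rotate_z(p, 0.7) for p in points])

eig_a = eigenvalues_sym3(tensor_a)
eig_b = eigenvalues_sym3(tensor_b)
print("tensor entries differ:", tensor_a[0][0] != tensor_b[0][0])
print("eigenvalues (frame A):", eig_a)
print("eigenvalues (frame B):", eig_b)
```

The individual tensor entries change with the choice of axes, whereas the three eigenvalues agree to within rounding error, which is what makes them candidates for a frame-independent description of resistance to wielding.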

But the story is more complicated, it seems, as weight perception is also affected by the material from which an object is made and the way in which it is gripped. A material-weight relation was documented by Wolfe (1898), who covered objects of equal mass with different surface materials and found that objects having surface materials that were more dense were judged lighter than those with surfaces that were less dense (e.g., comparing brass to wood). Flanagan and associates (Flanagan, Wing, Allison, & Spencely, 1995; Flanagan & Wing, 1997; see also Rinkenauer, Mattes, & Ulrich, 1999) suggested that material affected perceived weight because objects that were slipperier required a greater grip force in order to be lifted, and a more forceful grip led to a perception of greater weight (presumably because heavier objects must be gripped more tightly to lift them). Ellis and Lederman (1999) reported a material-weight illusion, however, that could not be entirely explained by grip force, because the slipperiest object was not felt to be the heaviest. Moreover, they demonstrated that the effects of material on perceived heaviness vanished when (a) objects of high mass were used, or (b) even low-mass objects were required to be gripped tightly. The first of these effects, an interaction between material and mass, is a version of scale effects in haptic perception to which we previously alluded.

However, cognitive factors cannot be entirely excluded either, as demonstrated by an experiment by Ellis and Lederman (1998) that describes the so-called golf-ball illusion, a newly documented misperception of weight. Experienced golfers and nongolfers were visually shown practice and real golf balls that looked alike, but that were adjusted to be of equal mass. The golfers judged the practice balls to be heavier than the real balls, in contrast to the nongolfers, who judged them to be the same apparent weight. These results highlight the contribution of a cognitive component to weight perception, inasmuch as only experienced golfers would know that practice balls are normally lighter than real golf balls.

Collectively, this body of studies points to a complex set of factors that affect the perception of weight via the haptic system. Resistance to rotation is important, particularly when an object is wielded (as opposed, e.g., to being passively held). Grip force and material may reflect cognitive expectancies (i.e., the expectation that more tightly gripped objects and denser objects should be heavier), but they may also affect more peripheral perceptual mechanisms. A pure cognitive-expectancy explanation for these factors would suggest equivalent effects when vision is used to judge weight, but such effects are not obtained (Ellis & Lederman, 1999). Nor would a pure expectancy explanation explain why the effects of material on weight perception vanish when an object is gripped tightly. Still, a cognitive expectancy explanation does explain the differences in the weight percepts of the experienced golfers versus the nongolfers. As for lower-level processes that may alter the weight percept, Ellis and Lederman (1999) point out that a firm grip may saturate mechanoreceptors that usually provide information about slip. And Flanagan and Bandomir (2000) have found that weight perception is affected by the width of the grip, the number of fingers involved, and the contact area, but not the angle of the contacted surfaces; these findings suggest the presence of additional complex interactions between weight perception and the motor commands for grasping.

Curvature

Curvature is the rate of change in the angle of the tangent line to a curve as the tangent point moves along it. Holding shape constant, curvature decreases as scale increases; for example, a circle with a larger radius has a smaller curvature. As with other haptically perceived properties, the scale of a curve is important. A curved object may be small enough to fall within the area of a fingertip, or large enough to require a movement of the hand across its surface in order to touch it all. If the curvature of a surface is large (e.g., a pearl), then the entire surface may fall within the scale of a fingertip. A surface with a smaller curvature may still be presented to a single finger, but the changes in the tangent line over the width of the fingertip may not make it discriminable from a flat surface.
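The verbal definition above can be written compactly. The symbols are notation introduced here for illustration and do not appear in the source: \(\theta\) is the angle of the tangent line, \(s\) is distance along the curve, \(R\) is the radius of a circle, and \(\kappa\) is curvature.

```latex
\kappa = \frac{d\theta}{ds},
\qquad
\kappa_{\text{circle}} = \frac{1}{R}
```

For a circle, doubling the radius therefore halves the curvature, which is the sense in which curvature decreases as scale increases.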

One clear point is that curvature perception is subject to error from various sources. One is manner of exploration. For example, when curved edges are actively explored, curvature away from the explorer may lead to the perception that the edge is straight (Davidson, 1972; Hunter, 1954). Vogels, Kappers, and Koenderink (1996) found that the curvature of a surface was affected by another surface that had been touched previously, constituting a curvature aftereffect. The apparent curvature of a surface also depends on whether it lies along or across the fingers (Pont, Kappers, & Koenderink, 1998), or whether it touches the palm or upper surface of the hand (Pont, Kappers, & Koenderink, 1997).

When small curved surfaces, which have relatively high curvature, are brought to the fingertip, slowly adapting mechanoreceptors provide an isomorphic representation of the pressure gradient on the skin (LaMotte & Srinivasan, 1993; Srinivasan & LaMotte, 1991; Vierck, 1979). This map is sufficient to make discriminations between curved surfaces on the basis of a single finger’s touch. Goodwin, John, and Marceglia (1991) found that a curvature equivalent to a circle with a radius of .2 m could be discriminated from a flat surface when passively touched by a single finger.

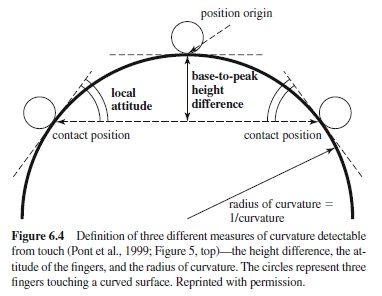

When larger surfaces (smaller curvature) are presented, they may be explored by multiple fingers of a static hand or by tracing along the edge. Pont et al. (1997) tested three models to explain curvature perception when static, multifinger exposure was used.

To understand the models, consider a stimulus shaped like a semicircle, the flat edge of which lies on a tabletop with the curved edge pointing up. This situation is illustrated in Figure 6.4. Assume that the stimulus is felt by three fingers, with the middle finger at the highest point (i.e., the midpoint) of the curve. There are then three parameters to consider. The first is height difference: The middle finger is higher (i.e., at a greater distance from the tabletop) than the other fingers by some height. The second is the difference in the angles at which the two outer fingers lie: These fingers’ contact points have tangent lines tilted toward one another, with the difference in their slopes constituting an attitude difference, so to speak. In addition, the semicircle has some objective curvature. All three parameters will change as the semicircle’s radius changes size. For example, as the radius increases and the surface gets flatter, the curvature will decrease, the difference in height between the middle and outer fingers will decrease, and the attitudes of the outer fingers approach the horizontal from opposing directions, reducing the attitude difference. The question is, which of these parameters (height difference, attitude difference, or curvature) determines the discriminability between edges of different curvature? Pont et al. concluded that subjects compared the difference in attitudes between surfaces and used that difference to discriminate them. That is, for each surface, subjects considered the difference in the slope at the outer points of contact. For example, this model predicts that as the outer fingers are placed further apart along a semicircular edge of some radius, the value of the radius at which there is a threshold level of curvature (i.e., where a curved surface can just be discriminated from a flat one) will increase. 
As the fingers move farther apart, only by increasing the radius of the semicircle can the attitude difference between them be maintained.
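The geometry of the three-finger situation can be sketched in a few lines. This is an illustration under stated assumptions, not the model fitting of Pont et al.: the stimulus is idealized as a circular arc, the outer fingers are assumed to sit at equal horizontal offsets (`half_span`, a hypothetical parameter) on either side of the apex, the attitude difference is taken to be the angle between the two outer tangent lines, and the numerical values are arbitrary.

```python
import math

def three_finger_parameters(radius, half_span):
    """Height difference, attitude difference (rad), and curvature for a circular
    arc touched at its apex and at two points offset by +/- half_span."""
    if not 0 < half_span < radius:
        raise ValueError("need 0 < half_span < radius")
    height_difference = radius - math.sqrt(radius ** 2 - half_span ** 2)
    tilt = math.asin(half_span / radius)   # slope angle at each outer contact
    attitude_difference = 2.0 * tilt       # the two tangents tilt in opposite senses
    curvature = 1.0 / radius
    return height_difference, attitude_difference, curvature

def radius_for_attitude_difference(attitude_difference, half_span):
    """Radius at which fingers half_span from the apex show the given attitude difference."""
    return half_span / math.sin(attitude_difference / 2.0)

# Arbitrary illustrative values (metres): all three parameters shrink as the arc flattens.
for radius in (0.1, 0.2, 0.4):
    h, att, k = three_finger_parameters(radius, half_span=0.03)
    print(f"R={radius:.1f}  height={h:.5f}  attitude={att:.4f}  curvature={k:.1f}")

# For a fixed (placeholder) attitude difference, a wider finger spacing
# corresponds to a larger radius.
for half_span in (0.02, 0.04):
    print(half_span, radius_for_attitude_difference(0.05, half_span))
```

Under these assumptions, holding the attitude difference constant while widening the finger spacing requires a proportionally larger radius, which is the prediction described in the text.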

As we report in the following section, when a stimulus has an extended contour, moving the fingers along its edge is the only way to extract its shape; static contact does not suffice. For simple curves, at least, it appears that this is not the case, and static and dynamic curvature detection are similar. Pont (1997) reported that when subjects felt a curved edge by moving their index finger along it, from one end to the other of a window of exposure, the results were similar to those with static touch. She again concluded that it was the difference in local attitudes, the changing local gradients touched by the finger as it moved along the exposed edge, that was used for discrimination. A similar conclusion was reached by Pont, Kappers, and Koenderink (1999) in a more extended comparison of static and dynamic touch. It should be noted that the nature of dynamic exploration of the stimulus was highly constrained in these tasks, and that the manner in which a curved surface is touched may affect the resulting percept (Davidson, 1972; Davidson & Whitson, 1974). We now turn to the general topic of how manual exploration affects the extraction of the properties of objects through haptic perception.

Role of Manual Exploration in Perceiving Object Properties

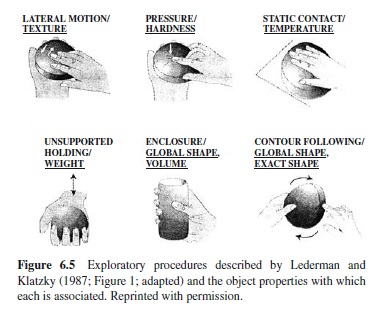

The sensory receptors under the skin, and in muscles, tendons, and joints, become activated not only through contact with an object but through movement. Lederman and Klatzky (1987) noted the stereotypy with which objects are explored when people seek information about particular object properties. For example, when people seek to know which of two objects is rougher, they typically rub their fingers along the objects’ surfaces. Lederman and Klatzky called such an action an “exploratory procedure,” by which they meant a stereotyped pattern of action associated with an object property.

The principal set of exploratory procedures they described is as follows (see Figure 6.5):

Lateral motion—associated with texture encoding; characterized by production of shearing forces between skin and object.

Static contact—associated with temperature encoding; characterized by contact with maximum skin surface and without movement, also without effort to mold to the touched surface.

Enclosure—associated with encoding of volume and coarse shape; characterized by molding to touched surface but without high force.

Pressure—associated with encoding of compliance; characterized by application of forces to object (usually, normal to surface), while counterforces are exerted (by person or external support) to maintain its position.

Unsupported holding—associated with encoding of weight; characterized by holding object away from supporting surface, often with arm movement (hefting).

Contour following—associated with encoding of precise contour; characterized by movement of exploring effector (usually, one or more fingertips) along edge or surface contour.

The association between these exploratory procedures and the properties they are used to extract has been documented in a variety of tasks. One paradigm (Lederman & Klatzky, 1987) required blindfolded participants to pick the best match, among three comparison objects, to a standard object. The match was to be based on a particular property, like roughness, with others being ignored. The hand movements of the participants when exploring the standard object were recorded and classified as exploratory procedures. In another task, blindfolded participants were asked to sort objects into categories defined by haptically perceptible properties, as quickly as possible (Klatzky, Lederman, & Reed, 1989; Lederman, Klatzky, & Reed, 1993; Reed, Lederman, & Klatzky, 1990). The objects were custom fabricated and varied systematically (across several sets) in shape complexity, compliance, size, hardness, and surface roughness. In both of these tasks, subjects were observed to produce the exploratory procedure associated with the targeted object property.

Haptic exploratory procedures are also observed when vision is available, although they occur only for a subset of the properties, and then only when the judgment is relatively difficult (i.e., vision does not suffice). In particular (Klatzky, Lederman, & Matula, 1993), individuals who were asked which of two objects was greater along a designated property—size, weight, and so on—used vision alone to make judgments of size or shape, whether the judgments were easy or difficult. However, they used appropriate haptic exploratory procedures to make difficult judgments of material properties, such as weight and roughness.

One might ask what kind of exploration occurs when people try to identify common objects. Klatzky, Lederman, and Metzger (1985) observed a wide variety of hand movements when participants tried to generate the names of 100 common objects, as each object was placed in their hands in turn. Lederman and Klatzky (1990) probed for the hand movements used in object identification more directly, by placing an object in the hands of a blindfolded participant and asking for its identity with one of two kinds of cues. The cue referred either to the object’s basic-level name (e.g., Is this writing implement a pencil?) or to a name at a subordinate level (e.g., Is this pencil a used pencil?). An initial phase of the experiment determined what property or properties people thought were most critical to identifying the named object at each level; in this phase, a group of participants selected the most diagnostic attributes for each name from a list of properties that was provided. This initial phase revealed that shape was the most frequent diagnostic attribute for identifying objects at the basic level, although texture was often diagnostic as well. At the subordinate level, however, the set of object names was designed to elicit a wider variety of diagnostic attributes; for example, whereas shape is diagnostic to identify a food as a noodle, compliance is important when identifying a noodle as a cooked noodle. In the main phase of the experiment, when participants were given actual exemplars of the named object and probed at the basic or subordinate level, their hand movements were recorded and classified. Most identifications began with a grasp and lift of the object. This initial exploration was often followed by more specific exploratory procedures, and those procedures were the ones that were associated with the object’s most diagnostic attributes.

Why are dedicated exploratory procedures used to extract object properties? Klatzky and Lederman (1999a) argued that each exploratory procedure optimizes the input to an associated property-computation process. For example, the exploratory procedure associated with the property of apparent temperature (i.e., static contact) uses a large hand surface. Spatial summation across the thermal receptors means that a larger surface provides a stronger signal about rate of heat flow. As another example, lateral motion (the scanning procedure associated with the property of surface roughness) has been found to increase the firing rates of slowly adapting receptors (Johnson & Lamb, 1981), which appear to be the input to the computation of roughness for macrotextured surfaces (see Hsiao et al., 1993, for review). (For a more complete analysis of the function of exploratory procedures, see Klatzky & Lederman, 1999a.)

The idea that the exploratory procedure associated with an object property optimizes the extraction of that property is supported by an experiment of Lederman and Klatzky (1987, Experiment 2). In this study, participants were constrained to use a particular exploratory procedure while a target property was to be compared. Across conditions, each exploratory procedure was associated with each target property, not just the property with which the procedure spontaneously emerged. The accuracy and speed of the comparison were determined for each combination of procedure and property. When performance on each property was assessed, the optimal exploratory procedure in this forced-exploration task (based on accuracy, with speed used to disambiguate ties) was found to be the same one that emerged when subjects freely explored to compare the given property. That is, the spontaneously executed procedure was in fact the best one to use, indicating that the procedure maximizes the availability of relevant information. The use of contour following to determine precise shape was found not only optimal, but also necessary in order to achieve accurate performance.

Turvey and associates, in an extensive series of studies, have examined a form of exploration that they call “dynamic touch,” to contrast it with both cutaneous sensing and haptic exploration, in which the hand actively passes over the surface of an object (for review, see Turvey, 1996; Turvey & Carello, 1995). With dynamic touch, the object is held in the hand and wielded, stimulating receptors in the tendons and muscles; thus it can be considered to be based on kinesthesis. The inertia tensor, described previously in the context of weight perception, has been found to be a mediating construct in the perception of several object properties from wielding. We have seen that the eigenvalues of the inertia tensor—that is, the resistance to rotation around three principal axes (the eigenvectors)—appear to play a critical role in the perception of heaviness. The eigenvalues and eigenvectors also appear to convey information about the geometric properties of objects and the manner in which they are held during wielding, respectively. Among the perceptual judgments that have been found to be directly related to the inertia tensor are the length of a wielded object (Pagano & Turvey, 1993; Solomon & Turvey, 1988), its width (Turvey, Burton, Amazeen, Butwill, & Carello, 1998), and the orientation of the object relative to the hand (Pagano & Turvey, 1992). A wielded object can also be a tool for finding out about the external world; for example, the gap between two opposing surfaces can be probed by a handheld rod (e.g., Barac-Cikoja & Turvey, 1993).

Relative Availability of Object Properties

Lederman and Klatzky (1997) used a variant of a visual search task (Treisman & Gormican, 1988) to investigate which haptically perceived properties become available at different points in the processing stream. In their task, the participant searched for a target that was defined by some haptic property and presented to a single finger, while other fingers were presented with distractors that did not have the target property. For example, the target might be rough, and the distractors smooth. From one to six fingers were stimulated on any trial, by means of a motorized apparatus. The participant indicated target presence or absence by pressing a thumb switch, and the response time (from presentation of the stimuli to the response) was recorded. The principal interest was in the search function; that is, the function relating response time to the number of fingers that were stimulated. Two such functions could be calculated, one for target-present trials and the other for target-absent trials. The functions were generally strongly linear.

Twenty-five variants on this task were performed, representing different properties. The properties fell into four broad classes. One was material properties: for example, rough-smooth (a target could be rough and distractors smooth, or vice versa), hard-soft, and cool-warm (copper vs. pine). A second class required subjects to search for the presence or absence of abrupt surface discontinuities, such as detecting a surface with a raised bar among flat surfaces. A third class of discriminations was based on planar or three-dimensional spatial position. For example, subjects might be asked to search for a vertical edge (i.e., a raised bar aligned along the finger) among horizontal-edge distractors, or they might look for a raised dot to the right of an indentation among surfaces with a dot to the left of an indentation (Experiments 8–11). Finally, the fourth class of searches required subjects to discriminate between continuous three-dimensional contours, such as seeking a curved surface among flat surfaces.

From the resulting response-time functions, the slope and intercept parameters were extracted. The slope indicates the additional cost, in terms of processing time, of adding a single finger to the display. The intercept includes one-time processes that do not depend on the number of fingers, such as adjusting the orientation of the hand so as to better contact the display. Note that although the processes entering the intercept do not depend on the number of fingers, they may depend on the particular property that is being discriminated. The intercept will include the time to extract information about the object property being interrogated, to the extent the process of information extraction is done in parallel and it does not use distributed capacity across the fingers (in which case, the processing time would affect the slope).
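Extracting the slope and intercept of a search function amounts to fitting a straight line to response time as a function of the number of stimulated fingers. The sketch below uses ordinary least squares on invented response times; the data, the function name, and the choice of least squares are assumptions for illustration and are not taken from Lederman and Klatzky's analysis.

```python
def fit_search_function(set_sizes, response_times_ms):
    """Least-squares line through (number of fingers, response time).
    Returns (slope in ms per finger, intercept in ms)."""
    n = len(set_sizes)
    mean_x = sum(set_sizes) / n
    mean_y = sum(response_times_ms) / n
    sxx = sum((x - mean_x) ** 2 for x in set_sizes)
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(set_sizes, response_times_ms))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Hypothetical mean response times (ms) for displays of one to six fingers.
fingers = [1, 2, 3, 4, 5, 6]
rts = [430, 460, 490, 520, 550, 580]

slope, intercept = fit_search_function(fingers, rts)
print(f"slope = {slope:.1f} ms per finger, intercept = {intercept:.1f} ms")
```

In this made-up case each added finger costs a fixed increment of time (the slope), while the intercept collects the processing that does not depend on how many fingers are stimulated.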

The relative values of the slope and intercept indicate the availability ordering among properties. A property whose discrimination produces a higher slope extracts a higher finger-by-finger cost and hence is slower to extract; a property producing a higher intercept takes longer for one-time processing and hence is slower to be extracted. Both the slopes and intercepts of this task told a common story about the relative availability among haptically accessible properties. There was a progression in availability from material properties, to surface discontinuities, to spatial relations. The slopes for material properties tended to be low (≤ 36 ms), and several were approximately equal to zero. Similarly, the intercepts of material-property search functions tended to be among the lowest, except for the task in which the target was cool (copper) and the distractors warm (pine). This exception presumably reflects the time necessary for heat to flow from the subject’s skin to the stimulus, activating the thermoreceptors. In contrast, the slopes and intercepts for spatially defined properties tended to be among the highest.

Why should material properties and abrupt spatial discontinuities be more available than properties that are spatially defined? Lederman and Klatzky (1997) characterized the material and discontinuity properties as unidimensional or intensive: That is, they can be represented by a scalar magnitude that indicates the intensity of the perceptual response. In contrast, spatial properties are, by definition, related to the two- or three-dimensional layout of points in a reference system. A spatial discrimination task requires that a distinction be made between stimuli that are equal in intensity but vary in spatial placement. For example, a bar can be aligned with or across the fingertip, but exerts the same amount of pressure in either case.

The relative unavailability of spatial properties demonstrated in this research is consistent with a more general body of work suggesting that spatial information is relatively difficult to extract by the haptic system, in comparison both to spatial coding by the visual system and to haptic coding of nonspatial properties (e.g., Cashdan, 1968; Johnson & Phillips, 1981; Lederman, Klatzky, Chataway, & Summers, 1990).

Haptic Space Perception

Whereas a large body of theoretical and empirical research has addressed visual space perception, there is no agreed-upon definition of haptic space. Lederman, Klatzky, Collins, and Wardell (1987) made a distinction between manipulatory and ambulatory space, the former within reach of the hands and the latter requiring exploration by movements of the body. Both involve haptic feedback, although to different effectors. Here, we consider manipulatory space exclusively.

A variety of studies have established that the perception of manipulatory space is nonveridical. The distortions have been characterized in various ways. One approach is to attempt to determine a distance metric for lengths of movements made on a reached surface. Brambring (1976) had blind and sighted individuals reach along two sides of a right triangle and estimate the length of the hypotenuse. Fitting the hypotenuse to a general distance metric revealed that estimates departed from the Euclidean value by using an exponent less than 2. Brambring concluded that the operative metric was closer to a city block. Subsequent work suggests, however, that no one metric will apply to haptic spatial perception, because distortions arise from several sources, and perception is not uniform over the explored space; that is, haptic spatial perception is anisotropic.
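One common form of a general distance metric is the Minkowski family, in which an exponent of 2 gives the Euclidean distance and an exponent of 1 gives the city-block distance. The sketch below assumes that form; whether it matches Brambring's exact fitting procedure is not stated in the text, and the leg lengths and the judged length are invented for illustration.

```python
def minkowski_hypotenuse(a, b, r):
    """Length of the hypotenuse for legs a and b under a Minkowski metric with exponent r."""
    return (a ** r + b ** r) ** (1.0 / r)

def estimate_exponent(a, b, judged, lo=1.0, hi=10.0):
    """Exponent whose predicted hypotenuse matches a judged length, found by bisection.
    Relies on the prediction shrinking as the exponent grows."""
    if not minkowski_hypotenuse(a, b, hi) <= judged <= minkowski_hypotenuse(a, b, lo):
        raise ValueError("judged length is outside the range spanned by lo and hi")
    for _ in range(100):
        mid = (lo + hi) / 2.0
        if minkowski_hypotenuse(a, b, mid) > judged:
            lo = mid   # prediction still too long: the exponent must be larger
        else:
            hi = mid
    return (lo + hi) / 2.0

print(minkowski_hypotenuse(3, 4, 2))   # Euclidean prediction for legs 3 and 4
print(minkowski_hypotenuse(3, 4, 1))   # city-block prediction for the same legs
print(estimate_exponent(3, 4, 6.0))    # hypothetical judged length between the two
```

A judged length that falls between the Euclidean and city-block predictions yields an exponent between 1 and 2, which is the pattern the text describes.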

One of the indications of anisotropy is the vertical-horizontal illusion. Well known in vision, although observed long ago in touch as well (e.g., Burtt, 1917), this illusion takes the form of vertical lines’ being overestimated relative to length-matched horizontals. Typically, the illusion is tested by presenting subjects with a T-shaped or L-shaped form and asking them to match the lengths of the components. The T-shaped stimulus introduces another source of judgment error, however, in that the vertical line is bisected (making it perceptually shorter) and the horizontal is not. The illusion in touch is not necessarily due to visual mediation (i.e., imagining how the stimulus would look), because it has been observed in congenitally blind people as well as sighted individuals (e.g., Casla, Blanco, & Travieso, 1999; Heller & Joyner, 1993). Heller, Calcaterra, Burson, and Green (1997) demonstrated that the patterns of arm movement used by subjects had a substantial effect on the illusion. Use of the whole arm in particular augmented the magnitude of the illusion. Millar and Al-Attar (2000) found that the illusion was affected by the position of the display relative to the body, which would affect movement and, potentially, the spatial reference system in which the display was represented.

Another anisotropy is revealed by the radial-tangential effect in touch. This refers to the fact that movements directed toward and away from the body (radial motions) are overestimated relative to side-to-side (tangential) motions of equal extent (e.g., Cheng, 1968; Marchetti & Lederman, 1983). Like the vertical-horizontal illusion, this appears to be heavily influenced by motor patterns. The perception of distance is greater when the hand is near the body, for example (Cheng, 1968; Marchetti & Lederman, 1983). Wong (1977) found that the slower the movement, the greater the judged extent; he suggested that the difference between radial and tangential distance judgments may reflect different execution times. Indeed, when Armstrong and Marks (1999) controlled for movement duration, the difference between estimates of radial and tangential extents vanished.

A third manifestation of anisotropy in haptic space perception is the oblique effect, also found in visual perception (e.g., Appelle & Countryman, 1986; Gentaz & Hatwell, 1995, 1996, 1998; Lechelt, Eliuk, & Tanne, 1976). When people are asked to reproduce the orientation of a felt rod, they do worse with obliques (e.g., 45°) than with horizontal or vertical lines. As with the other anisotropies that have been described, the pattern in which the stimulus is explored appears to be critical to the effect. Gentaz and Hatwell (1996) had subjects reproduce the orientation of a rod when the gravitational force was either natural or nulled by a counterweight. The oblique effect was greater when the natural gravitational forces were present. In a subsequent experiment with blind subjects (Gentaz & Hatwell, 1998), it appeared that the variability of the gravitational forces, rather than their magnitude, was critical: The oblique effect was not found in the horizontal plane, even with an unsupported arm; in this plane the gravitational forces do not vary with the direction of movement. In contrast, the oblique effect was found in the frontal plane, where gravitational force impedes upward and facilitates downward movements, regardless of arm support.

A study by Essock, Krebs, and Prather (1997) points to the fact that anisotropies may have multiple processing loci. Although effects of movement and gravity point to the involvement of muscle-tendon-joint systems, the oblique effect was also found for gratings oriented on the finger pad. This is presumably due to the filtering of the cutaneous system. The authors suggest a basic distinction between low-level anisotropies that arise at a sensory level, and ones that arise from higher-level processing of spatial relations.

The influence of high-level processes can be seen in a phenomenon described by Lederman, Klatzky, and Barber (1985), which they called “length distortion.” In their studies, participants were asked to trace a curved line between two endpoints, and then to estimate the direct (Euclidean) distance between them. The estimates increased directly with the length of the curved line, in some cases amounting to a 2:1 estimate relative to the correct value. High errors were maintained, even when subjects kept one finger on the starting point of their exploration and maintained it until they came to the endpoint. Under these circumstances, they had simultaneous sensory information about the positions of the fingers before making the judgment; still, they were pulled off by the length of the exploratory path. Because the indirect path between endpoints adds to both the extent and duration of the travel between them by the fingers, Lederman et al. (1987) attempted to disambiguate these factors by having subjects vary movement speed. They found that although the duration of the movement affected responses, the principal factor was the pathway extent. In short, it appears that the spatial pattern of irrelevant movement is taken into account when the shortest path is estimated.

Bingham, Zaal, Robin, and Shull (2000) suggested that haptic distortion might actually be functional: namely, as a means of compensating for visual distortion in reaching. They pointed out that although visual distances are distorted by appearing greater in depth than in width, the same appears to be true of haptically perceived space (Kay, Hogan, & Fasse, 1996). Given an error in vision, then, the analogous error in touch leads the person to the same point in space. Suppose that someone reaching to a target under visual guidance perceives it to be 25% further away than it is—for example, at 1.25 m rather than its true location of 1 m. If the haptic system also feels it to be 25% further away than it is, then haptic feedback from reaching will guide a person to land successfully on the target at 1 m while thinking it is at 1.25 m. However, the hypothesis that haptic distortions usefully cancel the effects of visual distortions was not well supported. Haptic feedback in the form of touching the target after the reach compensated to some extent, but not fully, for the visual distortion.
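The cancellation argument can be written out as simple arithmetic. The symbols are notation introduced here, not the authors': \(d\) is the true target distance, \(g_v\) and \(g_h\) are the factors by which vision and touch scale perceived distance, and \(d_{\text{reach}}\) is the physical extent of the reach, which is assumed to end when the felt distance matches the seen distance.

```latex
g_h \, d_{\text{reach}} = g_v \, d
\quad\Longrightarrow\quad
d_{\text{reach}} = \frac{g_v}{g_h}\, d

% Example from the text: d = 1\,\text{m},\; g_v = g_h = 1.25
d_{\text{reach}} = \frac{1.25}{1.25} \times 1\,\text{m} = 1\,\text{m}

% Illustrative contrast (not from the source): veridical touch, g_h = 1
d_{\text{reach}} = \frac{1.25}{1} \times 1\,\text{m} = 1.25\,\text{m}
```

When the two scale factors are equal the reach lands on the target, and any mismatch between them leaves a residual error, which is consistent with the finding that haptic feedback compensated only partly for the visual distortion.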

Virtually all of the anisotropies that have been described are affected by the motor patterns used to explore haptic space. The use of either the hand or arm, the position of the arm when the hand explores, the gravitational forces present, and the speed of movement, for example, are all factors that have been identified as influencing the perception of a tangible layout in space. What is clearly needed is research that clarifies the processes by which a representation of external space is derived from sensory signals provided by muscle-tendon-joint receptors, which in turn arise from the kinematics (positional change of limbs and effectors) and dynamics (applied forces) of exploration. This is clearly a multidimensional problem. Although it may turn out to have a reduced-dimensional solution, the solution seems likely to be relatively complex, given the evidence that high-level cognitive processes mediate the linkages between motor exploration, cutaneous and kinesthetic sensory responses, and spatial representation.

Haptic Perception of Two- and Three-Dimensional Patterns

Perception of pattern by the haptic system has been tested within a number of stimulus domains. The most common stimuli are vibrotactile patterns, presented by vibrating pins. Other two-dimensional patterns that have been studied are Braille, letters, unfamiliar outlines, and outline drawings of common objects. There is also work on fully three-dimensional objects.

Vibrotactile Patterns

A vibrotactile pattern is formed by repeatedly stimulating some part of the body (usually the finger) at a set of contact points. Typically, the points are a subset of the elements in a matrix. The most commonly used stimulator, the Optacon (for optical-to-tactile converter), is an array with 24 rows and 6 columns; it measures 12.7 × 29.2 mm (Cholewiak & Collins, 1990). The row vibrators are separated by approximately 1.25 mm and the column pins by approximately 2.5 mm. The pins vibrate approximately 230 times per second. Larger arrays were described by Cholewiak and Sherrick (1981) for use on the thigh and the palm.
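
As a rough check on these figures, the following sketch lays out the pin grid from the spacings quoted above. It assumes that the 1.25-mm row spacing runs along the long axis of the array and that the pins are equally spaced; the constant and function names are hypothetical.

```python
ROWS, COLS = 24, 6          # array size given in the text
ROW_PITCH_MM = 1.25         # approximate spacing between adjacent rows
COL_PITCH_MM = 2.5          # approximate spacing between adjacent columns
VIBRATION_HZ = 230          # approximate vibration rate of the pins


def array_span_mm(n_elements, pitch_mm):
    """Centre-to-centre distance from the first to the last of n pins."""
    return (n_elements - 1) * pitch_mm


def pin_positions_mm():
    """(y, x) coordinates in mm of every pin, with the origin at one corner pin."""
    return [(r * ROW_PITCH_MM, c * COL_PITCH_MM)
            for r in range(ROWS) for c in range(COLS)]


def vibration_period_ms():
    """Duration of one vibration cycle in milliseconds."""
    return 1000.0 / VIBRATION_HZ


if __name__ == "__main__":
    print(len(pin_positions_mm()))               # 144 pins in total
    print(array_span_mm(ROWS, ROW_PITCH_MM))     # 28.75 mm along the rows
    print(array_span_mm(COLS, COL_PITCH_MM))     # 12.5 mm along the columns
    print(round(vibration_period_ms(), 2))       # about 4.35 ms per cycle
```

The computed spans are close to, though slightly smaller than, the quoted 12.7 × 29.2 mm, which is unsurprising given that the spacings are stated only approximately and the spans are measured between pin centres.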

A substantial body of research has examined the effects of temporal and spatial variation on pattern perception with vibrating pin arrays (see Craig & Rollman, 1999; Loomis & Lederman, 1986). When two temporally separated patterns are presented, they may sum to form a composite, or they may produce two competing responses; these mechanisms of temporal interaction appear to be distinct (Craig, 1996; Craig & Qian, 1997). These temporal effects can occur even when the patterns are presented to spatial locations on two different fingers (Craig & Qian, 1997).

Spatial interactions between vibratory patterns may occur because the patterns stimulate common areas of skin, or because they involve a common stimulus identity but are not necessarily at the same skin locus. The term communality (Geldard & Sherrick, 1965) refers to the extent to which two patterns have active stimulators in the same spatial location, whether the pattern identities are the same or different. The ability to discriminate patterns has been found to be inversely related to their communality at the finger, palm, and thigh (Cholewiak & Collins, 1995; see that paper also for a review). The extent to which two patterns occupy common skin sites has also been found to affect discrimination performance. Horner (1995) found that when subjects were asked to make same-different judgments of vibrotactile patterns, irrespective of the area of skin that was stimulated, they performed best when the patterns were presented to the same site, in which case the absolute location of the stimulation could be used for discrimination. As the locations were more widely separated, performance deteriorated, suggesting a cost for aligning the patterns within a common representation when they were physically separated in space.
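
One simple way to operationalize communality is to count the stimulator sites that are active in both patterns. The sketch below takes that approach; treating communality as a raw count of shared sites is an assumption made for illustration, and the function name and example patterns are hypothetical.

```python
def communality(pattern_a, pattern_b):
    """Number of stimulator sites active in both patterns.

    Each pattern is a collection of (row, column) sites in the array.
    Counting shared sites is an illustrative definition, assumed here.
    """
    return len(set(pattern_a) & set(pattern_b))


if __name__ == "__main__":
    # Two hypothetical three-element patterns on a small array.
    pattern_a = {(0, 0), (0, 1), (1, 0)}
    pattern_b = {(0, 1), (1, 0), (2, 2)}
    print(communality(pattern_a, pattern_b))  # 2 shared sites
    print(communality(pattern_a, pattern_a))  # 3: identical patterns share every site
```

On this measure, pairs of patterns with higher counts would be expected to be harder to discriminate, in line with the inverse relation described above.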

Two-Dimensional Patterns and Freestanding Forms

Another type of pattern that has been used in a variety of studies is composed of raised lines or points. Braille constitutes the latter type of pattern. Loomis (1990) modeled the perception of characters presented to the fingertip: not only Braille patterns, but also modified Braille with adjacent connected dots, raised letters of English and Japanese, and geometric forms. Confusion errors in identifying members of these pattern sets, tactually and visually when seen behind a blurring filter (to simulate filtering properties of the skin), were compiled. The data supported a model in which the finger acts like a low-pass filter, essentially blurring the input; the intensity is also compressed. Loomis has pointed out that given the filtering imposed by the skin, the Braille patterns that have been devised for use by the blind represent a useful compromise between the spatial extent of the finger and its acuity: A larger pattern would have points whose relative locations were easier to determine, but it would then extend beyond the fingertip.
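
The two stages of such a model, spatial blurring followed by intensity compression, can be illustrated with a minimal sketch. The Gaussian kernel, its width, the zero padding at the edges, and the power-law exponent are all assumptions chosen for illustration; they are not the functions or parameters fitted by Loomis (1990).

```python
import math


def gaussian_kernel(sigma, radius):
    """Normalized one-dimensional Gaussian weights (assumed blur function)."""
    weights = [math.exp(-(x * x) / (2.0 * sigma * sigma))
               for x in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def blur_rows(image, kernel):
    """Convolve each row with the kernel, treating outside values as zero."""
    radius = len(kernel) // 2
    width = len(image[0])
    blurred = []
    for row in image:
        new_row = []
        for x in range(width):
            acc = 0.0
            for k, weight in enumerate(kernel):
                xx = x + k - radius
                if 0 <= xx < width:
                    acc += weight * row[xx]
            new_row.append(acc)
        blurred.append(new_row)
    return blurred


def transpose(image):
    return [list(column) for column in zip(*image)]


def skin_filter(image, sigma=1.0, radius=3, exponent=0.5):
    """Low-pass filter the pattern, then compress intensity with a power law."""
    kernel = gaussian_kernel(sigma, radius)
    # Separable blur: filter along rows, then along columns.
    blurred = transpose(blur_rows(transpose(blur_rows(image, kernel)), kernel))
    return [[value ** exponent for value in row] for row in blurred]


if __name__ == "__main__":
    # Two raised dots separated by one empty cell on a 7 x 7 grid.
    pattern = [[0.0] * 7 for _ in range(7)]
    pattern[3][2] = 1.0
    pattern[3][4] = 1.0
    for row in skin_filter(pattern):
        print(" ".join(f"{value:.2f}" for value in row))
```

In the printed output the gap between the two dots is no longer empty, which shows how blurring makes the relative locations of closely spaced points harder to determine.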

The neurophysiological mechanisms underlying perception of raised, two-dimensional patterns at the fingertip have been investigated by Hsiao, Johnson, and associates (see Hsiao, Johnson, Twombly, & DiCarlo, 1996). The SAI mechanoreceptors appear to be principally involved in form perception. These receptors have small receptive fields (about 2 mm diameter), respond better to edges than to continuous surfaces (Phillips & Johnson, 1981), and given their sustained response, collectively produce an output that preserves the shape of embossed patterns presented to the skin. Hsiao et al. (1996) have traced the processing beyond the SAI mechanoreceptors to cortical areas SI and SII in succession. Isomorphism is preserved in area SI, whereas SII neurons have larger receptive fields and show more complex responses that are not consistently related to the attributes of the stimulus.

Larger two-dimensional shapes, felt with the fingers of one or more hands, have also been used to test the pattern-recognition capabilities of the haptic system. These larger stimuli introduce demands of memory and integration (see following paragraphs), and often, performance is poor. Klatzky, Lederman, and Balakrishnan (1991) found chance performance in a successive matching task with irregularly shaped planar forms (like wafers) on the order of 15 cm in diameter. Strategic exploration may be used to reduce the memory demands and detect higher-order properties of such stimuli. Klatzky et al. found that subjects explored as symmetrically as possible, often halting exploration with one hand so that the other, slowed by a more complex contour, could catch up, so to speak, to the same height in space. Ballesteros, Manga, and Reales (1997) and Ballesteros, Millar, and Reales (1998) found that such bimanual exploration facilitated the ability to detect the property of symmetry in raised-line shapes scaled well beyond the fingertip.

Two-Dimensional Outline Drawings of Common Objects

If unfamiliar forms that require exploration beyond the fingertip are difficult to identify and compare, one might expect better performance with familiar objects. Studies that examine object-identification performance with raised, two-dimensional depictions of objects have led to the conclusion that performance is considerably below that with real objects (see following discussion), but well above chance. Lederman et al. (1990) found that sighted individuals recognized only 34% of raised-line drawings of objects, even when they were allowed up to 2 minutes of exploration. The blind participants did substantially worse (10% success). Loomis, Klatzky, and Lederman (1991) implicated memory and integration processes as limiting factors in two-dimensional haptic picture recognition. This study compared visual and tactual recognition with identical line drawings of objects. In one condition with visual presentation, the contours of the object were revealed through an aperture scaled to have the same proportion, relative to the size of the object, as the fingertip. As the participant moved his or her hand on a digital pad, the contours of the object were continuously revealed through the aperture. Under these viewing conditions, performance with visual recognition, which was completely accurate when the whole object was simultaneously exposed, deteriorated to the level of the tactual condition, despite high familiarity with the object categories.

There is evidence that given the task of recognizing a two-dimensional picture by touch, people who have had experience with sight attempt to form a visual image of the object and recognize it by visual mediation. Blind people with some visual experience do better on the task than those who lacked early vision (Heller, 1989a), and among sighted individuals, measures of imagery correlate with performance (Lederman et al., 1990). However, Heller also reported a study in which blind people with some visual experience outperformed sighted, blindfolded individuals. This demonstrates that visual experience and mediation by means of visual images are not prerequisites for successful picture identification. (Note that spatial images, as compared to visual images, may be readily available to those lacking in visual experience.) D’Angiulli, Kennedy, and Heller (1998) also found that when active exploration of raised pictures was used, performance by blind children (aged 8–13) was superior to that of a matched group of sighted children; moreover, the blind children’s accuracy averaged above 50%. They suggested that the blind had better spontaneous strategies for exploring the pictures; the sighted children benefited from having their hands passively guided by the experimenter. A history of instruction for the blind individuals may contribute to this effect (Heller, Kennedy, & Joyner, 1995).

The studies just cited clearly show that persons who lack vision can recognize raised drawings of objects at levels that, although they do not approach visual recognition, nonetheless point to a strong capacity to interpret kinesthetic variation in the plane as a three-dimensional spatial entity. This ability is consistent with demonstrations that blind people often create drawings that illustrate pictorial conventions such as perspective and metaphorical indications of movement (Heller, Calcaterra, Tyler, & Burson, 1996; Kennedy, 1997).

Three-Dimensional Objects

Real, common objects are recognized very well by touch. Klatzky et al. (1985) found essentially perfect performance in naming common objects placed in the hands, with a modal response time of 2 s. This level of performance contrasts with the corresponding data for raised-line portrayals of common objects (i.e., low accuracy even with 2 minutes of exploration), raising the question as to what is responsible for the difference. No doubt there are several factors. Experience is likely to be one; note that experience is implicated in previously described studies with raised-line objects.

Another relevant factor is three-dimensionality. A two-dimensional object follows a convention of projecting variations in depth to a picture plane, from which the third dimension must be constructed. This is performed automatically by visual processes, but not, apparently, in the domain of touch. Lederman et al. (1990) found that portrayals of objects that have variations in depth led to lower performance than was found with flat objects that primarily varied in two dimensions (e.g., a bowl vs. a fork). Shimizu, Saida, and Shimura (1993) used a pin-element display to portray objects as two-dimensional outlines or three-dimensional relief forms. Ratings of haptic legibility were higher for the three-dimensional objects, and their identification by early blind individuals was also higher. Klatzky, Loomis, Lederman, Wake, and Fujita (1993) asked participants to identify real objects while wearing heavy gloves and exploring with only a single finger, which reduced the objects’ information content primarily to three-dimensional contour (although some surface information, such as coefficient of friction, was no doubt available). Performance was approximately 75% accurate, well above the level achieved when exploring raised-line depictions of the same objects.

Lakatos and Marks (1999) investigated whether, when individuals explore three-dimensional objects, they emphasize the local features or the global form. The task was to make similarity judgments of unfamiliar geometric forms (e.g., cube; column) that contained distinctive local features such as grooves and spikes (see Figure 6.6). The data suggested a greater salience for local features in early processing, with global features becoming more equal in salience as processing time increased. Objects with different local features but similar in overall shape were judged less similar when explored haptically than when vision was available. Longer exposure time (increasing from 1 s to 16 s) produced greater similarity ratings for objects that were locally different but globally similar, indicating the increasing salience for global shape over time.

When people do extract local features of three-dimensional objects, they appear to have a bias toward encoding the back of the object, the reverse of vision. Newell, Ernst, Tian, and Bülthoff (2001) documented this phenomenon using objects made of Lego blocks. The participants viewed or haptically explored the objects, and then tried to recognize the ones to which they had been exposed. On some trials, the objects were rotated 180° (back-to-front) between exposure and the recognition test. When exposure and test were in the same modality (vision or touch), performance suffered if the objects were rotated. When the modality changed between exposure and test, however, performance was better when the objects were rotated as well: In this case, the surface that was felt at the back of the object was viewed at the front. Moreover, when exploration and testing were exclusively by touch, performance was better for objects explored from the back than for those explored from the front.