Consciousness is an inclusive term for a number of central aspects of our personal existence. It is the arena of self-knowledge, the ground of our individual perspective, the realm of our private thoughts and emotions. It could be argued that these aspects of mental life are more direct and immediate than any perception of the physical world; indeed, according to Descartes, the fact of our own thinking is the only empirical thing we know with mathematical certainty. Nevertheless, the study of consciousness within science has proven both challenging and controversial, so much so that some have doubted the appropriateness of addressing it within the tradition of scientific psychology.

In recent years, however, new methods and technologies have yielded striking insights into the nature of consciousness. Neuroscience in particular has begun to reveal detailed connections between brain events, subjective experiences, and cognitive processes. The effect of these advances has been to give consciousness a central role both in integrating the diverse areas of psychology and in relating them to developments in neuroscience. In this research paper we survey what has been discovered about consciousness; but because of the unique challenges that the subject poses, we also devote a fair amount of discussion to methodological and theoretical issues and consider the ways in which prescientific models of consciousness exert a lingering (and potentially harmful) influence.

Two features of consciousness pose special methodological challenges for scientific investigation. First, and best known, is its inaccessibility. A conscious experience is directly accessible only to the one person who has it, and even for that person it is often not possible to express precisely and reliably what has been experienced. As an alternative, psychology has developed indirect measures (such as physiological measurements and reaction time) that permit reliable and quantitative measurement, but at the cost of raising new methodological questions about the relationship between these measures and consciousness itself.

The second challenging feature is that the single word consciousness is used to refer to a broad range of related but distinct phenomena (Farber & Churchland, 1995). Consciousness can mean not being knocked out or asleep; it can mean awareness of a particular stimulus, as opposed to unawareness or implicit processing; it can mean the basic functional state that is modulated by drugs, depression, schizophrenia, or REM sleep. It is the higher order self-awareness that some species have and others lack; it is the understanding of one’s own motivations that is gained only after careful reflection; it is the inner voice that expresses some small fraction of what is actually going on below the surface of the mind. On one very old interpretation, it is a transcendent form of unmediated presence in the world; on another, perhaps just as old, it is the inner stage on which ideas and images present themselves in quick succession.

Where scientists are not careful to focus their inquiry or to be explicit about what aspect of consciousness they are studying, this diversity can lead to confusion and talking at cross-purposes. On the other hand, careful decomposition of the concept can point the way to a variety of solutions to the first problem, the problem of access. As it has turned out, the philosophical problems of remoteness and subjectivity need not always intrude in the study of more specific forms of consciousness such as those just mentioned; some of the more prosaic senses of consciousness have turned out to be quite amenable to scientific analysis. Indeed, a few of these—such as “awareness of stimuli” and “ability to remember and report experiences”—have become quite central to the domain of psychology and must now by any measure be considered well studied.

In what follows we provide a brief history of the early development of scientific approaches to consciousness, followed by more in-depth examinations of the two major strands in twentieth-century research: the cognitive and the neuroscientific. In this latter area especially, the pace of progress has accelerated sharply in the last decade; though no single model has yet won broad acceptance, it has become possible for theorists to advance hypotheses with a degree of empirical support and fine-grained explanatory power that was undreamed-of 20 years ago. In the concluding section we offer some thoughts about the relationship between scientific progress and everyday understanding.

Brief History of the Study of Consciousness

Ebbinghaus (1908, p. 3) remarked that psychology has a long past and a short history. The same could be said for the study of consciousness, except that the past is even longer and the scientific history even shorter. The concept that the soul is the organ of experience, and hence of consciousness, is ancient. It is a fundamental idea in the Platonic dialogues, as well as in the Upanishads, which were written about 600 years before Plato and record a tradition of thinking that was already ancient.

We could look at the soul as part of a prescientific explanation of mental events and their place in nature. In the mystical traditions the soul is conceived as a substance different from the body that inhabits the body, survives its death (typically by traveling to a supernatural realm), and is the seat of thought, sensation, awareness, and usually the personal self. This doctrine is also central to Christian belief, and for this reason it has had enormous influence on Western philosophical accounts of mind and consciousness. The doctrine of soul or mind as an immaterial substance separate from body is not universal. Aristotle considered but did not accept the idea that the soul might leave the body and reenter it (De Anima, 406; see Aristotle, 1991). His theory of the different aspects of soul is rooted in the functioning of the biological organism. The pre-Socratic philosophers for the most part had a materialistic theory of soul, as did Lucretius and the later materialists, and the conception of an immaterial soul is foreign to the Confucian tradition. The alternative prescientific conceptions of consciousness suggest that many problems of consciousness we are facing today are not inevitable consequences of a scientific investigation of awareness. Rather, they may result from the specific assumption that mind and matter are entirely different substances.

The mind-body problem is the legendary and most basic problem posed by consciousness. The question asks how subjective experience can be created by matter, or in more modern terms, by the interaction of neurons in a brain. Descartes (1596–1650; see Descartes, 1951) provided an answer to this question, and his answer shaped the modern debate. Descartes's famous solution to the problem is that body and soul are two different substances. Of course, this solution is a version of the religious doctrine that soul is immaterial and has properties entirely different from those of matter. This position is termed dualism, and it assumes that consciousness does not arise from matter at all. The question then becomes not how matter gives rise to mind, because these are two entirely different kinds of substance, but how the two different substances can interact. If dualism is correct, a scientific program to understand how consciousness arises from neural processes is clearly a lost cause, and indeed any attempt to reconcile physics with experience is doomed. Even if consciousness is not thought to be an aspect of "soul-stuff," its concept has inherited properties from soul-substance that are not compatible with our concepts of physical causality. These include free will, intentionality, and subjective experience. Further, any theorist who seeks to understand how mind and body "interact" is implicitly assuming dualism. To those who seek a unified view of nature, consciousness under these conceptions creates insoluble problems. The philosopher Schopenhauer called the mind-body problem the "world-knot" because of the seeming impossibility of reconciling the facts of mental life with deterministic physical causality. Writing for a modern audience, Chalmers (1996) termed the problem of explaining subjective experience with physical science the "hard problem."

Gustav Fechner, a physicist and philosopher, attempted to establish (under the assumption of dualism) the relationship between mind and body by measuring mathematical relations between physical magnitudes and subjective experiences of magnitudes. While no one would assert that he solved the mind-body problem, the methodologies he devised to measure sensation helped to establish the science of psychophysics.

The tradition of structuralism in the nineteenth century, in the hands of Wundt and Titchener and many others (see Boring, 1942), led to very productive research programs. The structuralist research program could be characterized as an attempt to devise laws for the psychological world that have the power and generality of physical laws, clearly a dualistic project. Nevertheless, many of the “laws” and effects they discovered are still of interest to researchers.

The publication of John Watson’s (1925; see also Watson, 1913, 1994) book Behaviorism marked the end of structuralism. Methodological and theoretical concerns about the current approaches to psychology had been brewing, but Watson’s critique, essentially a manifesto, was thoroughgoing and seemingly definitive. For some 40 years afterward, it was commonly accepted that psychological research should study only publicly available measures such as accuracy, heart rate, and response time; that subjective or introspective reports were valueless as sources of data; and that consciousness itself could not be studied. Watson’s arguments were consistent with views of science being developed by logical positivism, a school of philosophy that opposed metaphysics and argued that statements were meaningful only if they had empirically verifiable content. His arguments were consistent also with ideas (later expressed by Wittgenstein, 1953, and Ryle, 1949) that we do not have privileged access to the inner workings of our minds through introspection, and thus that subjective reports are questionable sources of data. The mind (and the brain) was considered a black box, an area closed to investigation, and all theories were to be based on examination of observable stimuli and responses.

Research conducted on perception and attention during World War II, the development of the digital computer and information theory, and the emergence of linguistics as the scientific study of mind led to changes in every aspect of the field of psychology. It was widely concluded that the behavioristic strictures on psychological research had led to extremely narrow theories of little relevance to any interesting aspect of human performance. Chomsky's blistering attack on behaviorism (reprinted as Chomsky, 1996) might be taken as the 1960s equivalent of Watson's (1913, 1994) earlier behavioristic manifesto. Henceforth, researchers in psychology had to face the very complex mental processes demanded by linguistic competence, which were totally beyond the reach of methods countenanced by behaviorism. The mind was no longer a black box; a wide variety of techniques were used to develop rather complex theories of what went on in the mind. New theories and new methodologies emerged with dizzying speed in what was termed the cognitive revolution (Gardner, 1985).

We could consider ourselves, at the turn of the century, to be in the middle of a second phase of this revolution, or possibly in a new revolution built on the shoulders of the earlier one. This second revolution results from the progress made possible by techniques that allow researchers to observe processing in the brain, such as electroencephalography (EEG), event-related electrical measures, positron-emission tomography (PET) imaging, magnetic resonance imaging (MRI), and functional MRI. This last black box, the brain, is being opened. This revolution has the unusual distinction of being cited in a joint resolution of the United States Senate and House of Representatives on January 1, 1990, declaring the 1990s the "Decade of the Brain." Neuroscience may be the only scientific revolution to have the official authorization of the federal government.

Our best chance of resolving the difficult problems of consciousness, including the world-knot of the mind-body problem, would seem to come from our newfound and growing ability to relate matter (neural processing) and mind (psychological measures of performance). The actual solution of the hard problem may await conceptual change, or it may remain always at the edge of knowledge, but at least we are in an era in which the pursuit of questions about awareness and volition can be considered a task of normal science, addressed with wonderful new tools.

What We Have Learned from Measures of Cognitive Functioning

Research on consciousness using strictly behavioral data has a history that long predates the present explosion of knowledge derived from neuroscience. This history includes sometimes controversial experiments on unconscious or subliminal perception and on the influences of consciously unavailable stimuli on performance and judgment. A fresh observer looking over the literature might note wryly that the research is more about unconsciousness than consciousness. Indeed, this is a fair assessment of the research, but it is that way for a good reason.

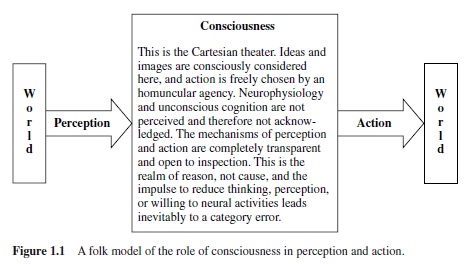

The motivation for this direction of research can be framed as a test of the folk theory of the role of consciousness in perception and action. A sketch of such a folk theory is presented in Figure 1.1. This model—mind as a container of ideas, with windows to the world for perception at one end and for action at the other—is consistent with a wide range of metaphors about mind, thought, perception, and intention (cf. Lakoff, 1987; Lakoff & Johnson, 1980). The folk model has no room for unconscious thought, and any evidence for unconscious thought would be a challenge to the model. The approach of normal science would be to attempt to disconfirm its assumptions and thus search for unconscious processes in perception, thought, and action.

The folk theory has enormous power because it defines common sense and provides the basis for intuition. In addition, its assumptions are typically implicit and unexamined. For these reasons, the folk model can be very tenacious. Indeed, as McCloskey and colleagues showed (e.g., McCloskey & Kohl, 1983), it can be very difficult to break free of a folk theory. They found that a large proportion of educated people, including engineering students enrolled in college physics courses, answered questions about physical events by using a folk model closer to Aristotelian physics than to Newtonian.

Many intuitive assumptions can be derived from the simple outline in Figure 1.1. For example, the idea that perception is essentially a transparent window on the world, unmediated by nonconscious physiological processes, sometimes termed naive realism, is seen in the direct input from the world to consciousness. The counterpart to naive realism, which we might call naive conscious agency, is that actions have as their sufficient cause the intentions generated in consciousness and, further, that the intentions arise entirely within consciousness on the basis of consciously available premises.

We used the container metaphor in the earlier sentence when we referred to "intentions generated in consciousness." This is such a familiar metaphor that we forget that it is a metaphor. Within this container, the "Cartesian theater," so named by Dennett (1991), is a dominant metaphor for the way thinking takes place. We say that we see an idea (on the stage), that we have an idea in our mind, that we are putting something out of mind, that we are holding an image in our mind's eye, and so on. Perceptions or ideas or intentions are brought forth in the conscious theater, and they are examined and dispatched in the "light of reason." In another common folk model, the machine model of mental processing (Lakoff & Johnson, 1980), the "thought-processing machine" takes the place of the Cartesian stage. The transparency of perception and action is retained, but in that model the process of thought is hidden in the machine and may not be available to consciousness. Both folk models require an observer (homunculus) to supervise operations and make decisions about action.

As has been pointed out by Churchland (1986, 1996) and Banks (1993), this mental model leads to assumptions that make consciousness an insoluble problem. For example, the connection among ideas in the mind is not causal in this model but logical, so that reducing cognitive processing to causally related biological processes is impossible; philosophically, it would be a category error. Further, the model leads to a distinction between reason (in the mind) and cause (in matter) and thus is another route to dualism. The homunculus has free will, which is incompatible with deterministic physical causality. In short, a host of seemingly undeniable intuitions about the biological irreducibility of cognitive processes derives from comparing this model of mind with intuitive models of neurophysiology (which themselves may have unexamined folk-neurological components).

Given that mental processes are in fact grounded in neural processes, an important task for cognitive science is to provide a substitute for the model of Figure 1.1 that is compatible with biology. Such a model will likely be as different from the folk model as relativity theory is from Aristotelian physics. Next we consider a number of research projects that in essence are attacks on the model of Figure 1.1.

Unconscious Perception

It goes without saying that a great deal of unconscious processing must take place between registration of stimulus energy on a receptor and perception. This alone should cast doubt on the naive realism of the folk model, which views the entire process as transparent. We do not consider these processes in general here, but only studies that have looked for evidence of a possible route from perception to memory or response that does not go through the central theater. We begin with this topic because it raises a number of questions and arguments that apply broadly to studies of unconscious processing.

The first experimentally controlled study of unconscious perception is apparently that of Peirce and Jastrow (1884). They found that differences between lifted weights that were not consciously noticeable were nonetheless discriminated at an above-chance level. Another early study showing perception without awareness is that of Sidis (1898), who found above-chance accuracy in naming letters on cards that were so far away from the observers that they complained that they could see nothing at all. This has been a very active area of investigation. The early research history on unconscious perception was reviewed by Adams (1957). More recent reviews include Dixon (1971, 1981), Bornstein and Pittman (1992), and Baars (1988, 1997). The critical review of Holender (1986), along with the commentary in the same issue of Behavioral and Brain Sciences, contains arguments and evidence that are still of interest.

A methodological issue that plagues this area of research is that of ensuring that the stimulus is not consciously perceived. This should be a simple technical matter, but many studies have set exposures at durations brief enough to prevent conscious perception and then neglected to reset them as the threshold lowered over the session because the participants dark-adapted or improved in the task through practice. Experiments that presumed participants were not aware of stimuli out of the focus of attention often did not have internal checks to test whether covert shifts in attention were responsible for perception of the purportedly unconscious material.

Even with perfect control of the stimulus there is the substantive issue of what constitutes the measure of unconscious perception. One argument would deny unconscious perception by definition: The very finding that performance was above chance demonstrates that the stimuli were not subliminal. The lack of verbal acknowledgement of the stimuli by the participant might come from a withholding of response, from a very strict personal definition of what constitutes “conscious,” or have many other interpretations. A behaviorist would have little interest in these subjective reports, and indeed it might be difficult to know what to make of them because they are reports on states observable only to the participant. The important point is that successful discrimination, whatever the subjective report, could be taken as an adequate certification of the suprathreshold nature of the stimuli.

The problem with this approach is that it takes consciousness out of the picture altogether. One way of getting it back in was suggested by Cheesman and Merikle (1984). They proposed a distinction between the objective threshold, which is the point at which performance, by any measure, falls to chance, and the subjective threshold, which is the point at which participants report that they are guessing or otherwise have no knowledge of the stimuli. Unconscious perception would be above-chance performance with stimuli presented at levels falling between these two thresholds. Satisfying this definition amounts to finding a dissociation between consciousness and response. For this reason Kihlstrom, Barnhardt, and Tataryn (1992) suggested that a better term than unconscious perception would be implicit perception, in analogy with implicit memory. Implicit memory is an influence of memory on performance without conscious recollection of the material itself. Analogously, implicit perception is an effect of a stimulus on a response without awareness of the stimulus. The well-established findings of implicit memory in neurological cases of amnesia make it seem less mysterious that perception could also be implicit.
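The two-threshold definition can be made concrete as a small decision rule. The sketch below is purely illustrative and rests on assumptions not in the original: a two-alternative task with chance at 0.5, a fixed `margin` standing in for a proper statistical test of above-chance accuracy, and a yes/no "reports guessing" flag standing in for the subjective-threshold judgment. The names `Condition` and `classify` are hypothetical.

```python
from dataclasses import dataclass

# Assumption: a two-alternative forced-choice task, so chance accuracy is 0.5.
CHANCE = 0.5


@dataclass
class Condition:
    label: str
    accuracy: float         # proportion correct on the discrimination task
    reports_guessing: bool  # participant says they saw nothing or were guessing


def classify(cond: Condition, margin: float = 0.05) -> str:
    """Place a stimulus condition relative to the objective and subjective thresholds.

    `margin` is a hypothetical stand-in for a significance test of above-chance accuracy.
    """
    above_objective = cond.accuracy > CHANCE + margin
    if not above_objective:
        # Performance at chance: no evidence the stimulus was registered at all.
        return "below objective threshold"
    if cond.reports_guessing:
        # Above chance, yet below the subjective threshold: implicit perception.
        return "implicit perception"
    return "conscious perception"


for c in [Condition("very brief", 0.51, True),
          Condition("brief", 0.68, True),
          Condition("long", 0.93, False)]:
    print(c.label, "->", classify(c))
```

The middle case, above-chance accuracy combined with a report of guessing, is the dissociation that Cheesman and Merikle's definition identifies as unconscious (implicit) perception.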

The distinction between objective and subjective threshold raises a new problem: the measurement of the “subjective” threshold. Accuracy of response can no longer be the criterion. We are then in the position of asking the person if he or she is aware of the stimulus. Just asking may seem a dubious business, but several authors have remarked that it is odd that we accept the word of people with brain damage when they claim that they are unaware of a stimulus for which implicit memory can be demonstrated, but we are more skeptical about the reports of awareness or unawareness by normal participants with presumably intact brains. There is rarely a concern that the participant is untruthful in reporting on awareness of the stimulus. The problem is more basic than honesty: It is that awareness is a state that is not directly accessible by the experimenter. A concrete consequence of this inaccessibility is that it is impossible to be sure that the experimenter’s definition of awareness is shared by the participant. Simply asking the participant if he or she is aware of the stimulus amounts to embracing the participant’s definition of awareness, and probably aspects of the person’s folk model of mind, with all of the problems such an acceptance of unspoken assumptions entails. It is therefore important to find a criterion of awareness that will not be subject to experimental biases, assumptions by the participants about the meaning of the instructions, and so on.

Some solutions to this problem are promising. One is to present a stimulus in a degraded form such that the participant reports seeing nothing at all, then test whether some attribute of that stimulus is perceived or otherwise influences behavior. This approach has the virtue of using a simple and easily understood criterion of awareness while testing for a more complex effect of the stimulus. Not seeing anything at all is a very conservative criterion, but it is far less questionable than more specific criteria.

Another approach to the problem has been to look for a qualitative difference between effects of the same stimulus presented above and below the subjective threshold. Such a difference would give converging evidence that the subjective threshold has meaning beyond mere verbal report. In addition, the search for differences between conscious and unconscious processing is itself of considerable interest as a way of assessing the role of consciousness in processing. Finding such differences is one way of answering the important question, What is consciousness for? This approach amounts to applying the contrastive analysis advocated by Baars (1988; see also James, 1983).

Holender's (1986) criticism of the unconscious perception literature points out, among other things, that in nearly all of the findings of unconscious perception the response to the stimulus, for example the choice of the heavier weight in the Peirce and Jastrow (1884) study, is the same for both the conscious and the putatively unconscious case. The only difference then is the subjective report that the stimulus was not conscious. Because this report is not independently verifiable, the result is on uncertain footing. If the pattern of results is different below the subjective threshold, this criticism has less force.

A dramatic difference between conscious and unconscious influences is seen in the exclusion experiments of Merikle, Joordens, and Stolz (1995). The exclusion technique, devised by Jacoby (1991; cf. Debner & Jacoby, 1994; Jacoby, Lindsay, & Toth, 1992; Jacoby, Toth, & Yonelinas, 1993), requires a participant not to use some source or type of information in responding. If the information nevertheless influences the response, there seems to be good evidence for a nonconscious effect.

The Merikle et al. (1995) experiment presented individual words, such as spice, one at a time on a computer screen for brief durations ranging up to 214 ms. After each presentation, participants were shown a word stem such as spi___ and asked to complete it with any word other than the one just presented. Thus, if spice had been presented, it was the only word they could not use to complete spi___ (spin, spite, spill, etc. were acceptable, but not spice). They were told that sometimes the presentation would be too brief for them to see anything, but they were asked to do their best. When nothing at all was shown, the stem was completed with the prohibited word 14% of the time; this is the baseline rate. At 29 ms the rate was 13.3%, essentially at baseline. This indicates that 29 ms is below the objective threshold, because the exposure was too brief to have any effect at all, and therefore also below the subjective threshold, which lies above the objective threshold.

The important finding is that at the longer durations of 43 ms and 57 ms, use of the word that was to be excluded rose above baseline. At 214 ms it fell below baseline, to 8%. The interpretation of this result is that at 43 ms and 57 ms the word was above the objective threshold, so it was registered at some level by the nervous system and primed the completion spice. However, at these durations it was below the subjective threshold, so its registration was not conscious and it could not be excluded. Finally, at the still longer duration of 214 ms, it was frequently above the subjective threshold and could be excluded.
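The logic of the exclusion test comes down to comparing each condition's rate of prohibited completions with the no-stimulus baseline. The sketch below illustrates that comparison using only the rates reported above (14% baseline, 13.3% at 29 ms, 8% at 214 ms). The 43 ms and 57 ms conditions are left out because their exact rates are not given here. The `tolerance` band is a hypothetical simplification, not the significance testing a real analysis would use, and `interpret` is a hypothetical name.

```python
# Baseline: % of stems completed with the prohibited word when nothing was shown.
BASELINE = 14.0

# Rates reported in the text; 43 ms and 57 ms are omitted (exact values not given).
REPORTED = {29: 13.3, 214: 8.0}


def interpret(rate: float, baseline: float = BASELINE, tolerance: float = 1.0) -> str:
    """Read an exclusion-failure rate relative to baseline.

    `tolerance` is a hypothetical band treated as "no difference"; it replaces
    a proper statistical comparison for the sake of illustration.
    """
    diff = rate - baseline
    if diff > tolerance:
        # Word registered (primed the completion) but could not be excluded.
        return "registered but not excluded (unconscious influence)"
    if diff < -tolerance:
        # Word seen consciously and deliberately avoided.
        return "consciously excluded"
    return "no detectable effect"


for ms, rate in REPORTED.items():
    print(f"{ms} ms: {rate}% -> {interpret(rate)}")
```

Above-baseline use of the prohibited word is the signature of registration without control. Below-baseline use shows that conscious perception allowed the word to be withheld.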

This set of findings suggests an important hypothesis about the function of consciousness that we will see applied in many domains, namely, that with consciousness of a stimulus comes the ability to control how it is used. This could only be discovered in cases in which there was some registration of the stimulus below the subjective threshold, as was the case here.

The only concern with this experiment is that the subjective threshold was not independently measured. To make the argument complete, we should have a parallel measurement of the subjective threshold. It would be necessary to show independently that the threshold for conscious report is between 57 ms and 214 ms. This particular criticism does not apply to some similar experiments, such as Cheesman and Merikle’s (1986).

Finally, whatever the definition of consciousness, or of the subjective threshold, there is the possibility that the presented material was consciously perceived, if only for an instant, and then the fact that it had been conscious was forgotten. If this were the case, the folk model in which conscious processing is necessary for any cognitive activity to take place is not challenged. It is very difficult to test the hypothesis that there was a brief moment of forgotten conscious processing that did the cognitive work being attributed to unconscious processing. It may be that this hypothesis is untestable; but testable or not, it seems implausible as a general principle. Complex cognitive acts like participating in a conversation and recalling memories take place without awareness of the cognitive processing that underlies them. If brief moments of immediately forgotten consciousness were nonetheless the motive power for all cognitive processing, it would be necessary to assume that we were all afflicted with a dense amnesia, conveniently affecting only certain aspects of mental life. It seems more parsimonious to assume that these mental events were never conscious in the first place.

Acquiring Tacit Knowledge

One of the most remarkable accomplishments of the human mind is learning and using extremely complex systems of knowledge without conscious effort. Natural language is a prime example: it is a system so complex that linguists continue to argue over its structure, yet children all over the world "pick it up" in the normal course of development. Further, most adults use language fluently with little or no attention to explicit grammatical rules.

There are several traditions of research on implicit learning. One example is the learning of what is often termed miniature languages. Braine (1963; for other examples of tacit learning see also Brooks, 1987; Lewicki & Czyzewska, 1994; Lewicki, Czyzewska, & Hill, 1997a, 1997b; Reber, 1992) presented people with sets of material with simple but arbitrary structure: strings of letters such as “abaftab.” In this one example “b” and “f” can follow “a,” but only “t” can follow “f.” Another legal string would be “ababaf.” In no case were people told that there was a rule. They thought that they were only to memorize some strings of arbitrary letters.
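A miniature language of this kind can be written down as a table of allowed letter-to-letter transitions, which makes it clear how "legal" and "illegal" strings differ. The sketch below is a hypothetical reconstruction, not Braine's actual rule set. Only a → {b, f} and f → {t} come from the text. The rules b → {a} and t → {a} are assumptions inferred from the two example strings, and `ALLOWED` and `is_legal` are illustrative names.

```python
# Allowed successors for each letter.
# From the text: "b" and "f" can follow "a"; only "t" can follow "f".
# ASSUMPTION: b -> a and t -> a, inferred from the examples "abaftab" and "ababaf".
ALLOWED = {
    "a": {"b", "f"},
    "f": {"t"},
    "b": {"a"},
    "t": {"a"},
}


def is_legal(s: str) -> bool:
    """Return True if every adjacent pair of letters is an allowed transition."""
    return all(nxt in ALLOWED.get(cur, set()) for cur, nxt in zip(s, s[1:]))


print(is_legal("abaftab"))  # True
print(is_legal("ababaf"))   # True
print(is_legal("afb"))      # False: only "t" may follow "f"
```

Participants were never shown such a table. The memory-test results described next suggest that they nevertheless came to behave as if they had learned something like it.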

Braine’s (1963) experimental strategy used an ingenious kind of implicit testing. In his memory test some strings of letters that had never been presented in the learning phase were assembled according to the rules he had used to create the stimulus set. Other strings in the memory test were actually presented in the learning material but were (rare) exceptions to the rules. The participants were asked to select which of these were actually presented. They were more likely to think that the legal but nonpresented strings were presented than that the illegal ones that actually had been presented were. This is evidence that they had learned a system rather than a set of strings. Postexperimental interviews in experiments of this type generally reveal that most participants had no idea that there were any rules at all.

Given the much more complex example of natural language learning, this result is not surprising, but research of this type is valuable because, in contrast to natural language acquisition, the conditions of learning are controlled, as well as the exact structure of the stimulus set. Implicit learning in natural settings is not limited to language learning. Biederman and Shiffrar (1987), for example, studied the implicit learning of workers determining the sex of day-old chicks. Chicken sexers (as they are called) become very accurate with practice without, apparently, knowing exactly how they do it.

Polanyi (1958), in discussing how scientists learn their craft, argued that such tacit learning is the core of ability in any field requiring skill or expertise. Polanyi made a useful distinction between a “tool” and an “object” in thought. Knowledge of how to do something is a tool, and it is tacitly learned and used without awareness of its inner structure. The thing being thought about is the “object” in this metaphor, and this “object” is that of which we are aware. Several investigators have said the same thing in slightly different terms, namely, that we are aware not of the mechanisms of cognitive processing but only of their results or objects (Baars, 1988; Peacocke, 1986). What and where are these “objects”?

Perceptual Construction

We tend to think of an object of perception—the thing we are looking at or hearing—as an entity with coherence, a single representation in the mind. However, this very coherence has become a theoretical puzzle because the brain does not represent an object as a single entity. Rather, various aspects of the object are separately analyzed by appropriate specialists in the brain, and a single object or image is nowhere to be found. How the brain keeps parts of an object together is termed the binding problem, as will be discussed later in the section on neurophysiology. Here we cover some aspects of the phenomenal object and what it tells us about consciousness.

Rock (1983) presented a case for a “logic of perception,” a system of principles by which perceptual objects are constructed. The principles are themselves like “tools” and are not available to awareness. We can only infer them by observing the effects of appropriate displays on perception. One principle we learn from ambiguous figures is that we can see only one interpretation at a time. There exist many bistable figures, such that one interpretation is seen, then the other, but never both. Logothetis and colleagues (e.g., Logothetis & Sheinberg, 1996) have neurological evidence that the unseen version is represented in the brain, but consciousness is exclusive: Only one of the two is seen at a given time.

Rock suggested that unconscious assumptions determine which version of an ambiguous figure is seen, and, by extension, he would argue that this is a normal component of the perception of unambiguous objects. Real objects seen under normal viewing conditions typically have only one interpretation, and there is no way to show the effect of interpretation so obvious with ambiguous figures. Because the “logic of perception” is not conscious, the folk model of naive realism does not detect a challenge in this process; all that one is aware of is the result, and its character is attributed to the object rather than to any unconscious process that may be involved in its representation.

The New Look in perceptual psychology (Erdelyi, 1972; McGinnies, 1949) attempted to show that events that are not registered consciously, as well as unconscious expectations and needs, can influence perceptions or even block them, as in the case of perceptual defense. Bruner (1992) pointed out that the thrust of the research was to demonstrate higher level cognitive effects in perception, not to establish that there were nonconscious ones. However, unacknowledged constructive or defensive processes would necessarily be nonconscious.

The thoroughgoing critiques of the early New Look research program (Eriksen, 1958, 1960, 1962; Fuhrer & Eriksen, 1960; Neisser, 1967) cast many of its conclusions in doubt, but they had the salubrious effect of forcing subsequent researchers to avoid many of the methodological problems of the earlier research. Better controlled research by Shevrin and colleagues (Bunce, Bernat, Wong, & Shevrin, 1999; Shevrin, 2000; Wong, Bernat, Bunce, & Shevrin, 1997) suggests that briefly presented words that trigger defensive reactions (established in independent tests) are registered but that the perception is delayed, in accord with the older definition of perceptual defense.

One of the theoretical criticisms (Eriksen, 1958) of perceptual defense was that it required a “superdiscriminating unconscious” that could prevent frightening or forbidden images from being passed on to consciousness. Perceptual defense was considered highly implausible because it would be absurd to have two complete perceptual systems, especially if the only function of one of them were to protect the other from emotional distress. If a faster unconscious facility existed, so the argument goes, there would have been evolutionary pressure for it to be the single organ of perception and thus of awareness. The problem with this argument is that it assumes the folk model summarized in Figure 4.1, in which consciousness is essential for perception to be accomplished. If consciousness is not needed for every act of perception in the first place, then material can be processed fully without awareness, acted upon in some manner, and only selectively made available to consciousness.

Bruner (1992) suggested as an alternative to the superdiscriminating unconscious the idea of a judas eye, a term for the peephole a speakeasy bouncer uses to screen out the police and other undesirables. The judas eye would be a process that filters perception on the basis of a single feature, just as the bouncer needs only the sight of a uniform or a badge. However, there is evidence that unconscious detection can rely on relatively deep analysis. For example, Mack and Rock (1998) found that words presented without warning while participants were judging line lengths (a difficult task) were rarely seen. This is one of several phenomena they termed “inattentional blindness.” On the other hand, when the participant’s name or a word with strong emotional content was presented, it was reported much more frequently than were neutral words. (Detection of one’s name from an unattended auditory source has been reported in much-cited research; see Cowan & Wood, 1997; Wood & Cowan, 1995a, 1995b.) Because words like “rape” were seen and visually similar words like “rope” were not, the superficial visual analysis of a judas eye does not seem adequate to explain perceptual defense and related phenomena. A better hypothesis is that there is much parallel processing in the nervous system, most of it unconscious, and that some products become conscious only after fairly deep analysis.

Another “object” to consider is the result of memory construction. In the model of Figure 1.1, the dominant metaphor for memory is recalling an object that is stored in memory. It is as though one goes to a “place” in memory where the “object” is “stored,” and then brings it into consciousness. William James referred to the “object” retrieved in such a manner as being “as fictitious . . . as the Jack of Spades.” There is an abundance of modern research supporting James. Conscious memory is best viewed as a construction based on pieces of stored information, general knowledge, opinion, expectation, and so on (one excellent source to consult on this is Schacter, 1995). Neisser (1967) likened the process of recall to the work of a paleontologist who constructs a dinosaur from fragments of fossilized bone, using knowledge derived from other reconstructions. The construction aspect of the metaphor is apt, but in memory as in perception we do not have a good model of what the object being constructed is, or what the neural correlate is. The folk concept of a mental “object,” whether in perception or memory, may not have much relation to what is happening in the nervous system when something is perceived or remembered.

Subliminal Priming and Negative Priming

Current interest in subliminal priming derives from Marcel’s work (1983a, 1983b). His research was based on earlier work showing that perception of one word can “prime” a related word (Meyer & Schvaneveldt, 1971). The primed word is processed more quickly or accurately than in control conditions without priming.

Marcel reported a series of experiments in which he obtained robust priming effects in the absence of perception of the prime. His conclusion was that priming, and therefore perception of the prime word, proceeds automatically and associatively, without any necessity for awareness. The conscious model (cf. Figure 1.1) would be that the prime is consciously registered, serves as a retrieval cue for items like the probe, and thus speeds processing for probe items. Marcel presented a model in which consciousness serves more as a monitor of psychological activity than as a critical path between perception and action. Holender (1986) and others have criticized this work on a number of methodological grounds, but subsequent research has addressed most of his criticisms (see Kihlstrom et al., 1992, for a discussion and review of this work).

Other evidence for subliminal priming includes Greenwald, Klinger, and Schuh’s (1995) finding that the magnitude of affective priming does not approach zero as d′ for detection of the priming word approaches zero (see also Draine & Greenwald, 1998). Shevrin and colleagues demonstrated classical conditioning of the galvanic skin response (GSR) to faces presented under conditions that prevented detection of the faces (Bunce et al., 1999; Wong et al., 1997).
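The argument of Greenwald, Klinger, and Schuh rests on d′, the signal detection measure of how well participants can tell prime-present from prime-absent trials. For reference, the standard equal-variance definition is given below; it is supplied here as background and is not a detail taken from their report.

```latex
d' = z(H) - z(F), \qquad z(p) = \Phi^{-1}(p)

% Worked example: H = 0.60, F = 0.40
d' = z(0.60) - z(0.40) \approx 0.253 - (-0.253) \approx 0.51
```

Here H is the hit rate (the proportion of prime-present trials on which a prime is reported), F is the false-alarm rate (the proportion of prime-absent trials on which a prime is reported), and Φ⁻¹ is the inverse of the standard normal cumulative distribution function. When H = F, d′ = 0, which is chance-level detection.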

Cheesman and Merikle (1986) reported an interesting dissociation of conscious and unconscious priming effects using a variation of the Stroop (1935) interference effect. In the Stroop effect a color word such as “red” is printed in a color different from the one named, for example, blue. When presented with this stimulus (“red” printed in blue), the participant must say “blue.” Interference is measured as a much longer time to pronounce “blue” than if the word did not name a conflicting color.

Cheesman and Merikle (1986) used a version of the Stroop effect in which a word printed in black is presented briefly on a computer screen, then removed and replaced with a colored rectangle that the participant is to name. Naming of the rectangle’s color was slowed if the word named a different color. They first showed that naming of the color was still slowed even when the color word was presented so briefly that the participant reported having seen nothing. This would be classified as a case of unconscious perception, but because the effect goes in the same direction whether the word is perceived consciously or not, there would be no real dissociation between conscious and unconscious processing. Holender (1986) and other critics could reasonably argue that this result shows only that the Stroop effect is fairly robust at very brief durations, and that the participant’s report of awareness is unrelated to processing.

Cheesman and Merikle (1986) devised a clever way to answer this criticism. They arranged the word-color pairs so that the word “red” would be followed most of the time by the color blue, the word “blue” by yellow, and so on. This created a predictive relationship between the word and the color that participants could strategically exploit to make the task easier, and they apparently did use these relationships in naming the colors. With clearly supraliminal presentation of the word, the Stroop effect was reversed: the blue rectangle was named faster when “red” came before it than when “blue” was the word before it.

However, this reversal was found only when the words were presented for longer than the duration needed to perceive them. When the same participants saw the same sequence of stimuli with words that were presented too briefly for conscious perception, they showed only the normal Stroop effect. The implication of this result is that the sort of interference found in the Stroop effect is an automatic process that does not require conscious perception of the word. What consciousness of the stimulus adds is control. Only when there was conscious registration of the stimulus could the participants use the stimulus information strategically.

Negative priming is interference, measured in reaction time or accuracy, in processing a stimulus that was previously presented but not attended. It was first discovered by Dalrymple-Alford and Budayr (1966) in the context of the Stroop effect (see also Neill & Valdes, 1996; Neill, Valdes, & Terry, 1995; Neill & Westberry, 1987). Dalrymple-Alford and Budayr showed that if a participant was given, say, the word “red” printed in blue, and the color red then appeared in the next item, that color took longer to name than other colors.

Negative priming has been found in many experiments in which the negative prime, while presented supraliminally, is not consciously perceived because it is not attended. Tipper (1985) presented overlapping line drawings, one drawn in red and the other in green. Participants were told to name only the item in one of the colors and not the other. After participants had processed one of the drawings, the one they had excluded was sometimes presented on the next trial. In these cases the previously unattended drawing was slower to name than in a control condition in which it had not been previously presented. Banks, Roberts, and Ciranni (1995) presented pairs of words simultaneously to the left and to the right ears. Participants were instructed to repeat aloud only the word presented to one of the ears. If a word that had been presented to the unattended ear was presented in the next pair to be repeated, the response was delayed.

As mentioned in the previous section (cf. Cowan & Wood, 1997; Goldstein & Fink, 1981; Mack & Rock, 1998; Rock & Gutman, 1981), material that is perceptually available but not attended is often subject to “inattentional blindness”; that is, it seems to be excluded from awareness. The finding of negative priming suggests that ignored material is perceptually processed and represented in the nervous system but is evidenced only by its negative consequences for later perception, not by any consciously available record.

A caveat regarding the implication of the negative priming findings for consciousness is that a number of researchers have found negative priming for fully attended stimuli (MacDonald & Joordens, 2000; Milliken, Joordens, Merikle, & Seiffert, 1998). These findings imply that negative priming cannot by itself be used as evidence that the perception of an item took place without awareness.

Priming studies have been used to address the question of whether the unconscious is, to put it bluntly, “smart” or “dumb.” This is a fundamental question about the role of consciousness in processing; if unconscious cognition is dumb, the function of consciousness is to provide intelligence when needed. If the unconscious is smart—capable of doing a lot on its own—it is necessary to find different roles for consciousness.

Greenwald (1992) argued that the unconscious is dumb because it could not combine pairs of words in his subliminal priming studies. He found that some pairs of consciously presented words primed other words on the basis of a meaning that could be obtained only by combining them. For example, words like “KEY” and “BOARD,” presented together consciously, primed “COMPUTER.” When presented for durations too brief for awareness, they primed “LOCK” and “WOOD,” but not “COMPUTER.” On the other hand, Shevrin and Luborsky (1961) found that subliminally presenting pictures of a pen and a knee resulted in subsequent free associations in which “penny” was represented far above chance levels. The difference between these results may be methodological, but there are other indications that unconscious processing may in some ways be fairly smart even if unconscious perception is sometimes a bit obtuse. Kihlstrom (1987) reviews many other examples of relatively smart unconscious processing.

A number of subliminal priming effects have lingered at the edge of experimental psychology for perhaps no better reason than that they make hardheaded experimentalists uncomfortable. One of these is subliminal psychodynamic activation (SPA; Silverman, 1983). Silverman and others (see Weinberger, 1992, for a review) have found that subliminal presentation of the single sentence, “Mommy and I are one,” has a number of objectively measurable positive emotional effects (when compared to controls such as “People are walking” or “Mommy is gone”). A frequent criticism is that the studies did not make sure that the stimulus was presented unconsciously. However, many of us would be surprised if the effects were found even with clearly consciously perceived stimuli. It is possible, in fact, that the effects depend on unconscious processing, and it would be interesting to see if the effects were different when subliminal and clearly supraliminal stimuli are compared.

Implicit Memory

Neurological cases brought this topic to the forefront of memory research, with findings of preserved memory in people with amnesia (Schacter, 1987). The preserved memory is termed implicit because it is tacit (i.e., discovered in use) rather than consciously retrieved or observed. People with amnesia would, for example, work each day at a Tower of Hanoi puzzle, assert each day that they had never seen it before, and yet complete it faster each day (Cohen, Eichenbaum, Deacedo, & Corkin, 1985). The stem completion task of Merikle et al. (1995) is another type of implicit task: after the word spice was presented, the probability that it would be used to complete a word stem increased even though the word was not consciously registered. In a memory experiment, both people with amnesia and control participants who could not recall the word spice would nevertheless be more likely to use it to complete the stem than if it had not been presented.

Nonconscious Basis of Conscious Content

We discussed earlier how the perceptual object is a product of complex sensory processes and probably of inferential processes as well. Memory has also been shown to be a highly inferential skill, and the material “retrieved” from memory contains as much inference as retrieval. These results violate an assumption of the folk model, by which objects are not constructed but are simply brought into the central arena, whether from perception or from memory. Errors of commission serve much the same evidentiary function in memory as ambiguous figures do in perception, except that they are much more common and easier to induce. The sorts of error we make in eyewitness testimony, or as a result of a number of documented memory illusions (Loftus, 1993), are particularly troublesome because they are made, and believed, with certainty. Legitimacy is granted to a memory on the basis of its clarity, completeness, quantity of detail, and other internal properties, and these internal properties are taken to rule out the possibility that it is the result of suggestion, association, or other processes (Henkel, Franklin, & Johnson, 2000). Completely bogus memories induced by an experimenter can be believed with tenacity (cf. Schacter, 1995; see also Roediger & McDermott, 1995).

The Poetzl phenomenon is the reappearance of unconsciously presented material in dreams, often transformed so that the dream reports must be searched for evidence of relation to the material. The phenomenon has been extended to reappearance in free associations, fantasies, and other forms of output, and a number of studies appear to have found Poetzl effects with appropriate controls and methodology (Erdelyi, 1992; Ionescu & Erdelyi, 1992). Still, the fact that reports must be interpreted and that base rates for certain topics or words are difficult to assess casts persistent doubt over the results, as do concerns about experimenter expectations, the need for double-blind procedures in all studies, and other methodological issues.

Consciousness, Will, and Action

In the folk model of consciousness (see Figure 1.1), a major point of conflict with any scientific analysis is the free or autonomous willing of the homunculus. The average person will report having free will, and it is often a sign of mental disorder when a person complains that his or her actions are constrained or controlled externally. The problem of will is as much a hard problem (Chalmers, 1996) as is the problem of conscious experience. How can willing be put in a natural-science framework?

One approach comes from measurements of the timing of willing in the brain. Libet and colleagues (Libet, 1985, 1993; Libet, Alberts, & Wright, 1967; Libet et al., 1964) found that changes in EEG potentials recorded from the frontal cortex began 200 ms to 500 ms before the participant was aware of deciding to begin an action (flexion of the wrist) that was to be done freely. One interpretation of this result is that the perception we have of freely willing is simply an illusion, because by these measurements it comes after the brain has already begun the action.

Other interpretations do not lead to this conclusion. The intention that ends with the motion of the hand must have its basis in neurological processes, and it is not surprising that the early stages are not present in consciousness. Consciousness has a role in willing because the intention to move can be arrested before the action takes place (Libet, 1993) and because participation in the entire experimental performance is a conscious act. The process of willing would seem to be an interplay among executive processes, memory, and monitoring, some of which we are conscious of and some of which we are not. Only the dualistic model of a completely autonomous will controlling the process from the top, like the Cartesian soul fingering the pineal gland from outside of material reality, is rejected. Having said this, we must add that a great deal of theoretical work is needed in this area.

The idea of unconscious motivation dates to Freud and before. Freudian slips (Freud, 1965), in which unconscious or suppressed thoughts intrude on speech in the form of action errors, should constitute a challenge to the simple folk model by which action is transparently the consequence of intention. However, the commonplace cliché that one has made a Freudian slip seems to be more of a verbal habit than a recognition of unconscious determinants of thought because unconscious motivation is not generally recognized in other areas.

Wegner (1994) and his colleagues have studied some paradoxical (but embarrassingly familiar) effects that result from attempting to suppress ideas. In what they term ironic thought suppression, they find that the suppressed thought can pose a problem for control of action. Participants trying to suppress a word were likely to blurt it out when speeded in a word association task. Exciting thoughts (about sex) could be suppressed with effort, but they tended to burst into awareness later. The irony of trying to suppress a thought is that the attempt at suppression primes it, and then more control is needed to keep it hidden than if it had not been suppressed in the first place. The intrusion of unconscious ideation in a modified version of the Stroop task (see Baldwin, 2001) indicates that the suppressed thoughts can be completely unconscious and still have an effect on processing.

Attentional Selection

In selective attention paradigms the participant is instructed to attend to one source of information and not to others that are presented at the same time. For example, in the shadowing paradigm the participant hears one verbal message in one ear and a completely different one in the other. “Shadowing” means repeating a message verbatim as one hears it, and that is what the participants do with one of the two messages. This paradigm has generated a large body of research and helped establish attention as one of the most important areas in cognitive psychology.

People generally have little awareness of the message in the ear not shadowed (Cherry, 1957; Cowan & Wood, 1997). What happens to that message? Is it lost completely, or is some processing performed on it unconsciously? Treisman (1964; see also Moray, 1969) showed that participants responded to their name on the unattended channel and would follow the shadowed material when it switched to the other ear. Both of these results suggest that unattended material is processed to at least some extent. In the visual modality Mack and Rock (1998) reported that in the “inattentional blindness” paradigm a word presented unexpectedly while a visual discrimination is being made is noticed infrequently, but if that word spells the participant’s name or is an emotional word, it is noticed much more often. For there to be a discrimination between one word and another on the basis of meaning rather than any superficial feature such as length or initial letter, the meaning must have been extracted.

Theories of attention differ on the degree to which unattended material is processed. Early selection theories assume that the rejected material is stopped at the front gate, as it were (cf. Broadbent, 1958). Unattended material can be monitored only by switching or time-sharing. Late selection theories (Deutsch & Deutsch, 1963) assume that unattended material is processed to some depth, perhaps completely, but that limitations of capacity prevent it from being perceived consciously or remembered. The results of the processing are available for a brief period and can serve to summon attention, bias the interpretation of attended stimuli, or have other effects. One of these effects would be negative priming, as discussed earlier. Another would be the noticing of one’s own name or an emotionally charged word from an unattended source.

An important set of experiments supports the late selection model, although there are alternative explanations. In an experiment that required somewhat intrepid participants, Corteen and Wood (1972) associated electric shocks with words to produce a conditioned galvanic skin response. After the conditioned response was established, the participants performed a shadowing task in which the shock-associated words were presented to the unattended ear. The conditioned response was still obtained, and galvanic skin responses were also obtained for words semantically related to the conditioned words. This latter finding is particularly interesting because the analysis of the words would have to go deeper than just the sound to elicit these associative responses. Other reports of analysis of unattended material include those of Corteen and Dunn (1974); Forster and Govier (1978); MacKay (1973); and Von Wright, Anderson, and Stenman (1975). On the other hand, Wardlaw and Kroll (1976), in a careful series of experiments, did not replicate the effect.

Replicating this effect may be less of an issue than the concern over whether it implies unconscious processing. This is one situation in which momentary conscious processing of the nontarget material is not implausible. Several lines of evidence support momentary conscious processing. For example, Dawson and Schell (1982), in a replication of Corteen and Wood’s (1972) experiment, found that if participants were asked to name the conditioned word in the nonselected ear, they were sometimes able to do so. This suggests that there was attentional switching, or at least some awareness, of material on the unshadowed channel. Corteen (1986) agreed that this was possible. Treisman and Geffen (1967) found that there were momentary lapses in shadowing of the primary message when specified targets were detected in the secondary one. MacKay’s (1973) results were replicated by Newstead and Dennis (1979) only if single words were presented on the unshadowed channel and not if words were embedded in sentences. This finding suggests that occasional single words could attract attention and give rise to the effect, while the continuous stream of words in sentences did not create the effect because they were easier to ignore.

Dissociation Accounts of Some Unusual and Abnormal Conditions

The majority of psychological disorders, if not all, have important implications for consciousness, unconscious processing, and so on. Here we consider only disorders that are primarily disorders of consciousness, that is, dissociations and other conditions that affect the quality or the continuity of consciousness, or the information available to consciousness.

Problems in self-monitoring or in integrating one’s mental life around a single personal self occur in a variety of disorders. Frith (1992) described many of the symptoms that individuals with schizophrenia exhibit as a failure to attribute their actions to their own intentions or agency. In delusions of control, for example, a patient may assert that an outside force made him do something like strip off his clothes in public. By Frith’s account this assertion would result from the patient’s being unaware that he had willed the action, in other words, from a dissociation between the executive function and self-monitoring. The source of motivation is attributed to an outside force (“the Devil made me do it”), when in fact it lies outside only the self system of the individual. For another example, individuals with schizophrenia are often found to be subvocalizing the very voices that they hear as hallucinations (Frith, 1992, 1996); hearing recordings of these vocalizations does not cause them to abandon the illusion. There are many ways in which the monitoring could fail (see Proust, 2000), but the result is that the self system does not “own” the action, to use Kihlstrom’s (1992, 1997) felicitous term.

This lack of ownership could be as simple as being unable to remember that one willed the action, but that seems too simple to cover all cases. Frith’s theory is sophisticated and more general. He hypothesized that the self system and the source of willing are separate neural functions that are normally closely connected. When an action is willed, motor processes execute the willed action directly, and a parallel process (similar to feedforward in control of eye movements; see Festinger & Easton, 1974) informs the self system about the action. In certain dissociative states, the self system is not informed. Then, when the action is observed, it comes as a surprise, requiring explanation. Alien hand syndrome (Chan & Liu, 1999; Inzelberg, Nisipeanu, Blumen, & Carasso, 2000) is a radical dissociation of this sort, often connected with neurologic damage consistent with a disconnection between motor planning and monitoring in the brain. In this syndrome the patient’s hand will sometimes perform complex actions, such as unbuttoning his or her shirt, while the individual watches in horror.
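The parallel-notification arrangement in Frith’s account can be illustrated with a toy model. This is a minimal sketch under stated assumptions, not Frith’s own formalism: the names `SelfSystem`, `perform`, and `attribute` are hypothetical, and “owning” an action is reduced to whether the self system received a copy of the intention before the action was observed.

```python
from dataclasses import dataclass, field


@dataclass
class SelfSystem:
    """Hypothetical self system that records the intentions it is told about."""
    announced: set = field(default_factory=set)


def perform(action: str, self_system: SelfSystem, copy_to_self: bool = True) -> None:
    # Motor processes would execute the action here regardless of monitoring.
    # In parallel, a copy of the intention is normally sent to the self system;
    # copy_to_self=False stands in for the hypothesized dissociation.
    if copy_to_self:
        self_system.announced.add(action)


def attribute(action: str, self_system: SelfSystem) -> str:
    """Return who is credited with an observed action."""
    # An action the self system was told about is owned; an unannounced one
    # arrives as a surprise and has to be explained some other way.
    return "self" if action in self_system.announced else "external"


if __name__ == "__main__":
    intact, dissociated = SelfSystem(), SelfSystem()
    perform("unbutton shirt", intact)
    perform("unbutton shirt", dissociated, copy_to_self=False)
    print(attribute("unbutton shirt", intact))       # self
    print(attribute("unbutton shirt", dissociated))  # external
```

The action is identical in both runs; only the information reaching the self system differs, and that difference alone decides whether the action is owned or attributed to something outside the self.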

Classic dissociative disorders include fugue states, in which at the extreme the affected person will leave home and begin a new life with amnesia for the previous one, often after some sort of trauma (this may happen more often in Hollywood movies than in real life, but it does happen). In all of these cases the self is isolated from autobiographical memory. Dissociative identity disorder is also known as multiple personality disorder. There has been doubt about the reality of this disorder, but there is evidence that some of the multiple selves do not share explicit knowledge with the others (Nissen et al., 1994), although implicit memories acquired by one personality seem to be available to the others.

Hysterical dissociations such as blindness or paralysis, now termed conversion disorders, are very common in times of war or other civil disturbance. One example is a group of 200 Cambodian refugees found to have psychogenic blindness (Cooke, 1991). It was speculated that this specific form of conversion disorder resulted from their having seen terrible things before they escaped from Cambodia. Whatever the cause, the disorder could be described as a blocking of the self system’s access to visual information, that is, a dissociation between the self and perception. One piece of evidence for this interpretation is the finding that a patient with hysterical analgesia in one arm reported no sensations when stimulated with strong electrical shocks but did show normal changes in physiological indexes as the shocks were administered (Kihlstrom, et al., 1992). Thus the pain messages were transmitted through the nervous system and had many of their normal effects, but the conscious monitoring system did not “own” them, and so they were not consciously felt.

Anosognosia (Galin, 1992; Ramachandran, 1995, 1996; Ramachandran, et al., 1996) is a denial of deficits after neurological injury. This denial can take the form of a rigid delusion that is defended with tenacity and resourcefulness. Ramachandran et al. (1996) reported the case of Mrs. R., a right-hemisphere stroke patient who denied the paralysis of her left arm. Ramachandran asked her to point to him with her right hand, and she did. When asked to point with her paralyzed left hand, the hand remained immobile, but she insisted that she was following the instruction. When challenged, she said, “I have severe arthritis in my shoulder, you know that doctor. It hurts.”

Bisiach and Geminiani (1991) reported the case of a woman suddenly stricken with paralysis of the left side who complained on the way to the hospital that another patient had forgotten a left hand and left it on the ambulance bed. She was able to agree that the left shoulder and the upper arm were hers, but she became evasive about the forearm and continued to deny the hand altogether.

Denials of this sort are consistent with a dissociation between the representation of the body part or function (Anton’s syndrome, for example, is denial of loss of vision) and the representation of the self. Because anosognosia is specific to particular neurological conditions (almost always right-hemisphere damage), it is difficult to argue that the denial comes from an unwillingness to admit the deficit. Anosognosia is rarely found with equally severe paralysis resulting from left-hemisphere strokes (see the section titled “Data from Human Pathology” for more on the neurological basis for anosognosia and related dissociations).

Vaudeville and circus sideshows are legendary venues for extreme and ludicrous effects of hypnotic suggestion, such as blisters caused by pencils hypnotically transformed into red-hot pokers, or otherwise respectable people clucking like chickens and protecting eggs they thought they had laid on stage. It is tempting to dismiss these performances as faked, but extreme sensory modifications can be replicated under controlled conditions (Hilgard, 1968). The extreme pain of cold-pressor stimulation can be completely blocked by hypnotic suggestion in well-controlled experimental situations. Recall of a short list of words learned under hypnosis can also be blocked completely by posthypnotic suggestion. In one experiment Kihlstrom (1994) found that large monetary rewards were ineffective in inducing recall, much to the bewilderment of the participants, who recalled the items quite easily once the suggestion was released, by which time the reward was no longer available.

Despite several dissenting voices (Barber, 2000), hypnotism does seem to be a real phenomenon involving extraordinary and verifiable modifications of consciousness. Hilgard’s (1992) neodissociation theory treats hypnosis as a form of dissociation whereby the self system can be functionally disconnected from other sources of information, or even divided internally into a reporting self and a hidden observer.

One concern with the dissociative theory, or with any other theory of hypnosis, is explaining the power of the hypnotist. By what mechanism does the hypnotist gain such control over susceptible individuals? Without a good explanation of the mechanism of hypnotic control, the theory is incomplete, and any results are open to dismissive speculation. We suggest that the mechanism may lie in a receptivity to control by others that is part of our nature as social animals. By this account, hypnotic techniques are shortcuts to manipulating, briefly but with great force, the social levers and strings that are engaged by leaders, demagogues, peers, and groups in many situations.

What Is Consciousness For? Why Aren’t We Zombies?

Baars (1988, 1997) suggested that a contrastive analysis is a powerful way to discover the function of consciousness. If unconscious perception does take place, what are the differences between perception with and without consciousness? We can ask the same question about memory with and without awareness. To put it another way, what does consciousness add? As Searle (1992, 1993) pointed out, consciousness is an important aspect of our mental life, and it stands to reason that it must have some function. What is it?

A few regularities emerge when the research on consciousness is considered. One is that strategic control over action and over the use of information seems to come with awareness. Thus, in the experiments of Cheesman and Merikle (1986) and Merikle et al. (1995), material presented below the subjective threshold of awareness still produced priming, but participants could not exclude it from their responses as well as they could when it was presented above that threshold. As Shiffrin and Schneider (1977) showed, when enough practice is given to make detection of a given target automatic (i.e., unconscious), the system becomes locked into that target and requires relearning if the target identity is changed. Automaticity and unconscious processing conserve capacity when they are appropriate, but the cost is inflexibility. These results also suggest that consciousness is a limited-capacity medium and that the choice in processing is between awareness, control, and limited capacity, on the one hand, and automaticity, unconsciousness, and large capacity, on the other.

Another generalization is that consciousness and the self are intimately related. Dissociation from the self can lead to unconsciousness; conversely, unconscious registration of material can cause it not to be “owned” by the self. This is well illustrated in the comparison between implicit and explicit memory. Implicit memory performance is automatic and is not accompanied by the sense that “I did it.” Thus, after seeing a list containing the word “motorboat,” an individual with amnesia may completely forget the list, or even the fact that he saw a list, yet when asked to write a word starting with “mo___,” he writes “motorboat” rather than a more common response such as “mother” or “moth.” When asked why he chose “motorboat,” he would say, “I don’t know. It just popped into my mind.” A person with normal memory who supplies a stem completion primed by a word that is no longer recallable would say the same thing: “It just popped into my head.” The more radical lack of ownership in anosognosia is a striking example of the disconnection between the self and perceptual stimulation. Hypnosis may be a method of creating similar dissociations in unimpaired people, so that they cannot control their actions, find their recall of certain words blocked, or do not feel pain when electrically shocked, all because of an induced separation between the self system and action or sensation.

We could say that consciousness is needed to bring material into the self system so that it is owned and put under strategic control. Conversely, it might be said that consciousness emerges when the self is involved with cognition. In the latter case, consciousness is not “for” anything but reflects the fact that what we call conscious experience is the product of engagement of the self with cognitive processing, which could otherwise proceed unconsciously. This leaves us with the difficult question of defining the self.

Conclusions

Probably the most important advance in the study of consciousness would be to replace the model of Figure 1.1 with something more compatible with findings on the function of consciousness. There are several candidates to consider. Schacter (1987) proposed a parallel system with a conscious monitoring function. Marcel’s (1983a, 1983b) model is similar in that the conscious processor is a monitoring system. Baars’s (1988) global workspace model seems to be the most fully developed model of this type (see Franklin & Graesser, 1999, for a similar artificial intelligence model), with parallel processors doing much of the cognitive work and a self system that has executive functions. We will not attempt a revision of Figure 1.1 more in accord with the current state of knowledge, but any such revision would include parallel processes, some of which are accessible to consciousness and some of which are not. The function of consciousness in such a picture would be to control processes, monitor activities, and coordinate the activities of disparate processors. Such an intuitive model might be a better starting point, but we are far from having a rigorous, widely accepted model of consciousness.

Despite the continuing philosophical and theoretical difficulties in defining the role of consciousness in cognitive processing, the study of consciousness may be the one area that offers some hope of integrating the diverse field of cognitive psychology. Virtually every topic in the study of cognition, from perception to motor control, has an important connection with the study of consciousness. A unified theory of consciousness could provide a way of expressing how these different functions are integrated. In the next section we examine the impact of the revolution in neuroscience on the study of consciousness and cognitive functioning.

Neuroscientific Approaches to Consciousness

Data from Single-Cell Studies

One of the most compelling lines of research grew out of Nikos Logothetis’s discovery that there are single cells in macaque visual cortex whose activity is well correlated with the monkey’s conscious perception (Logothetis, 1998; Logothetis & Schall, 1989). Logothetis’s experiments were a variant on the venerable feature detection paradigm. Traditional feature detection experiments involve presenting various visual stimuli to a monkey while recording (via an implanted electrode) the activity of a single cell in some particular area of visual cortex. Much of what is known about the functional organization of visual cortex was discovered through such studies; to determine whether a given area is sensitive to, say, color or motion, experimenters vary the relevant parameter while recording from single cells and look for cells that show consistently greater response to a particular stimulus type.

Of course, the fact that a single cell represents some visual feature does not necessarily imply anything about what the animal actually perceives; many features extracted by early visual areas (such as center-surround patches) have no direct correlate in conscious perception, and much of the visual system can remain quite responsive to stimuli in an animal anesthetized into unconsciousness. The contribution of Logothetis and his colleagues was to explore the distinction between what is represented by the brain and what is perceived by the organism. They did so by presenting monkeys with “rivalrous” stimuli, that is, stimuli that support multiple, conflicting interpretations of the visual scene. One common rivalrous stimulus involves two fields of lines flowing past each other; humans exposed to this stimulus report that the lines fuse into a grating that is either upward-moving or downward-moving and that the perceived direction of motion tends to reverse approximately once per second.

In area MT, which is known to represent visual motion, some cells will respond continuously to a particular stimulus (e.g., an upward-moving grating) for as long as it is present. Within this population, a subpopulation was found that showed a fluctuating response to rivalrous stimuli, and it was shown that the activity of these cells was correlated with the monkey’s behavioral response. For example, within the population of cells that responded strongly to upward-moving gratings, there was a subpopulation whose activity fluctuated (approximately once per second) in response to a rivalrous grating, and whose periods of high activity were correlated with the monkey’s behavioral reports of seeing an upward-moving grating.

This discovery was something of a watershed in that it established that the activity of sensory neurons is not always explicable solely in terms of distal stimulus properties. When trials on which a given neuron is highly active are compared with those on which it is less active, no difference can be found in the external stimulus or in the experimental condition. The only difference that tracks the activity of the cell is the monkey’s report about its perception of motion. One might propose that the cells are somehow tracking the monkey’s motor output or intention, but this would be hard to support given their location and connectivity. The most natural interpretation is that these neurons reflect (and perhaps form the neural basis for) the monkey’s awareness of visual motion.

Some single-cell research seems to show a direct effect of higher level processes, perhaps related to awareness or intentionality, on lower level processes. For example, Moran and Desimone (1985) showed that a visual cell’s response is modified by the monkey’s allocation of attention within that cell’s receptive field.

Data from Human Pathology