Sample Conditioning and Learning Research Paper.

Earth’s many microenvironments change over time, often creating conditions less hospitable to current life-forms than conditions that existed prior to the change. Initially, life-forms adjusted to these changes through the mechanisms now collectively called evolution. Importantly, evolution improves a life-form’s functionality (i.e., so-called biological fitness as measured in terms of reproductive success) in the environment across generations. It does nothing directly to enhance an organism’s fit to the environment within the organism’s life span. However, animals did evolve a mechanism to improve their fit to the environment within each animal’s life span. Specifically, animals have evolved the potential to change their behavior as a function of experienced relationships among events, with events here referring to both events under the control of the animal (i.e., responses) and events not under the direct control of the animal (i.e., stimuli). Changing one’s behavior as a function of prior experience is what we mean by conditioning and learning (used here synonymously). The observed behavioral changes frequently are seemingly preparatory for an impending, often biologically significant event that is contingent upon immediately preceding stimuli, and sometimes the behavioral changes serve to modify the impending event in an adaptive way.

In principle, there are many possible sets of rules by which an organism might modify its behavior to increase its biological fitness (preparing for and modifying impending events) as a result of prior exposure to specific event contingencies. However, organisms use only a few of these sets of rules; these constitute what we call biological intelligence. Here we summarize, at the psychological level, the basic principles of elementary biological intelligence: conditioning and elementary learning. At the level of the basic learning described here, research has identified a set of rules (laws) that appear to apply quite broadly across many species, including humans. Moreover, within subjects these laws appear to apply, with only adjustments of parameters being required, across motivational systems and tasks (e.g., Domjan, 1983; Logue, 1979). Obviously, as we look at more complex behavior, species and task differences have greater influence, which seemingly reflects the differing parameters previously mentioned interacting with one another. For example, humans as well as dogs readily exhibit conditioned salivation or conditioned fear, whereas social interactions are far more difficult to describe through a general set of laws.

Learning is the intervening process that mediates between an environmental experience and a change in the behavior of the organism. More precisely, learning is ordinarily defined as a relatively permanent change in a subject’s response potential, resulting from experience, that is specific to the presence of stimuli similar to those from that experience, and cannot be attributed entirely to changes in receptors or effectors. Notably, the term response potential allows for learning that is not necessarily immediately expressed in behavior (i.e., latent learning), and the requirement that a stimulus from the experience be present speaks to learning being stimulus specific as opposed to a global change in behavior. Presumably, more complex changes in behavior are built from a constellation of such elementary learned relationships (hereafter called associations).

Interest in the analysis of basic learning began a century ago with its roots in several different controversies. Among these was the schism between empiricism, represented by the British empiricist philosophers, Hume and J. S. Mill, and rationalism, represented by Descartes and Kant. The empiricists assumed that knowledge about the world was acquired through interaction with events in the world, whereas rationalists argued that knowledge was inborn (at least in humans) and experience merely helped us organize and express that knowledge. Studies of learning were performed in part to determine the degree to which beliefs about the world could be modified by experience. Surely demonstrations of behavioral plasticity as a function of experience were overtly more compatible with the empiricist view, but the rationalist position never denied that experience influenced knowledge and behavior. It simply held that knowledge arose within the organism, rather than directly from the experiencing of events. Today, this controversy (reflected in more modern terms as the nature vs. nurture debate) has faded due to the realization that experience provides the content of knowledge about the world, but extracting relationships between events from experience requires a nervous system that is predisposed to extract these relationships. Predispositions to identify relationships between events, although strongly modulated during development by experience, are surely influenced by genetic composition. Hence, acquired knowledge, as revealed through a change in behavior, undoubtedly reflects an interaction of genes (rationalism-nature) and experience (empiricism-nurture).

The second controversy that motivated studies of learning was a desire to understand whether acquired thought and behavior could better be characterized by mechanism, which left the organism as a vessel in which simple laws of learning operated, or by mentalism, which often attributed to the organism some sort of conscious control of its thought and behavior. The experimental study of learning that began in the early twentieth century was partly in reaction to the mentalism implicit in the introspective approach to psychology that prevailed at that time (Watson, 1913). Mechanism was widely accepted as providing a compelling account of simple reflexes. The question was whether it also sufficed to account for behaviors that were more complex and seemingly volitional. Mechanism has been attacked for ignoring the (arguably obvious) active role of the organism in determining its behavior, whereas mentalism has been attacked for passing the problem of explaining behavior to a so-called homunculus. Mentalism starts out with a strong advantage in this dispute because human society, culture, and religion are all predicated on people’s being free agents who are able to determine and control their behavior. In contrast, most theoretical accounts of learning (see Tolman, e.g., 1932, as an exception) are mechanistic and try to account for acquired behavior uniquely in terms of (a) past experience, which is encoded in neural representations; (b) present stimulation; and (c) genetic predispositions (today at least), notably excluding any role for free will. To some degree, the mechanism-mentalism controversy has been confounded with levels of analysis, with mechanistic accounts of learning tending to be more molecular. Obviously, different levels of analysis may be complementary rather than contradictory.

The third controversy that stimulated interest in learning was the relationship of humans to other species. Human culture and religion have traditionally treated humans as superior to animals on many dimensions. At the end of the nineteenth century, however, acceptance of Darwin’s theory of evolution by natural selection challenged the uniqueness of humans. Defenders of tradition looked at learning capacity as a demonstration of the superiority of humans over animals, whereas Darwinians looked to basic learning to demonstrate continuity across species. A century of research has taught us that, although species do differ appreciably in behavioral plasticity, with parametric adjustment a common set of laws of learning appears to apply across at least all warm-blooded animals (Domjan, 1983). Moreover, these parametric adjustments do not always reflect a greater learning capacity in humans than in other species. As a result of evolution in concert with species-specific experience during maturation, each species is adept at dealing with the tasks that the environment commonly presents to that particular species in its ecological niche. For example, Clark’s nutcrackers (birds that cache food) are able to remember where they have stored thousands of edible items (Kamil & Clements, 1990), a performance that humans would be hard-pressed to match.

The fourth factor that stimulated an interest in the study of basic learning was a practical one. Researchers such as Thorndike (1949) and Guthrie (1938) were particularly concerned with identifying principles that might be applied in our schools and toward other needs of our society. Surely this goal has been fulfilled at least in part, as can be seen for example in contemporary use of effective procedures for behavior modification.

Obviously, the human-versus-animal question (third factor listed) required that nonhuman animals be studied, but the other questions in principle did not. However, animal subjects were widely favored for two reasons. First, the behavior of nonhuman subjects was assumed by some researchers to be governed by the same basic laws that apply to human behavior, but in a simpler form which made them more readily observable. Although many researchers today accept the assumption of evolutionary continuity, research has demonstrated that the behavior of nonhumans is sometimes far from simple. The second reason for studying learning in animals has fared better. When seeking general laws of learning that obtain across individuals, individual differences can be an undesirable source of noise in one’s data. Animals permit better control of irrelevant differences in genes and prior experience, thereby reducing individual differences, than is ethically or practically possible with humans.

The study of learning in animals within simple Pavlovian situations (stimulus-stimulus learning) had many parallels with the study of simple associative learning in humans that was prevalent from the 1880s to the 1960s. The so-called cognitive revolution that began in the 1960s largely ended such research with humans and caused the study of basic learning in animals to be viewed by some as irrelevant to our understanding of human learning. The cognitive revolution was driven largely by (a) a shift from trying to illuminate behavior with the assistance of hypothetical mental processes, to trying to understand mental processes through the study of behavior, and (b) the view that the simple tasks that were being studied until that time told us little about learning and memory in the real world (i.e., lacked ecological validity). However, many of today’s cognitive psychologists often return to the constructs that were initially developed before the advent of the field now called cognitive psychology (e.g., McClelland, 1988). Of course, issues of ecological validity are not to be dismissed lightly. The real question is whether complex behavior in natural situations can better be understood by reducing the behavior into components that obey the laws of basic learning, or whether a more molar approach will be more successful. Science would probably best be served by our pursuing both approaches. Clearly, the approach of this research paper is reductionist. Representative of the potential successes that might be achieved through application of the laws of basic learning, originally identified in the confines of the sterile laboratory, are a number of quasi-naturalistic studies of seemingly functional behaviors. Some examples are provided by Domjan’s studies of how Pavlovian conditioning improves the reproductive success of Japanese quail (reviewed in Domjan & Hollis, 1988), Kamil’s studies of how the laws of learning facilitate the feeding systems of different species of birds (reviewed in Kamil, 1983), and Timberlake’s studies of how different components of rats’ behavior, each governed by general laws of learning, are organized to yield functional feeding behavior in quasi-naturalistic settings (reviewed in Timberlake & Lucas, 1989).

Although this research paper focuses on the content of learning and the conditions that favor its occurrence and expression rather than the function of learning, it is important to emphasize that the capacity for learning evolved because it enhances an animal’s biological fitness (reviewed in Shettleworth, 1998). The vast majority of instances of learning are clearly functional. However, there are many documented cases in which specific instances of learned behavior are detrimental to the well-being of an organism (e.g., Breland & Breland, 1961; Gwinn, 1949). Typically, these instances arise in situations with contingencies contrary to those prevailing in the animal’s natural habitat or inconsistent with its past experience (see this research paper’s section entitled “Predispositions: Genetic and Experiential”). An increased understanding of when learning will result in dysfunctional behavior is currently contributing to contemporary efforts to design improved forms of behavior therapy.

This research paper selectively reviews research on both Pavlovian (i.e., stimulus-stimulus) and instrumental (response-stimulus) learning. In many respects, an organism’s response may be functionally similar to a discrete stimulus, as demonstrated by the fact that most phenomena identified in Pavlovian conditioning have instrumental counterparts. However, one important difference is that Pavlovian research has generally studied qualitative relationships (e.g., whether the frequency or magnitude of an acquired response increases or decreases with a specific treatment). In contrast, much instrumental research has sought quantitative relations between the frequency of a response and its (prior) environmental consequences. Readers interested in the preparations that have traditionally been used to study acquired behavior should consult Hearst’s (1988) excellent review, which in many ways complements this research paper.

Empirical Laws Of Pavlovian Responding

Given appropriate experience, a stimulus will come to elicit behavior that is not characteristic of responding to that stimulus, but is characteristic for a second stimulus (hereafter called an outcome). For example, in Pavlov’s (1927) classic studies, dogs salivated at the sound of a bell if previously the bell had been rung before food was presented. That is, the bell acquired stimulus control over the dogs’ salivation. Here we summarize the relationships between stimuli that promote such acquired responding, although we begin with changes in behavior that occur to a single stimulus.

Single-Stimulus Phenomena

The simplest type of learning is that which results from exposure to a single stimulus. For example, if you hear a loud noise, you are apt to startle. But if that noise is presented repeatedly, the startle reaction will gradually decrease, a process called habituation. Occasionally, responding may increase with repeated presentations of a stimulus, a phenomenon called sensitization. Habituation is far more common than sensitization, with sensitization ordinarily being observed only with very intense stimuli. Habituation is regarded as a primitive form of learning, and is sometimes studied explicitly because researchers have thought that its simplicity might allow the essence of the learning process to be observed more readily than in situations involving multiple stimuli. Consistent with this view, habituation exhibits many of the same characteristics of learning seen with multiple stimuli (Thompson & Spencer, 1966). These include (a) decelerating acquisition per trial over increasing numbers of trials; (b) a so-called spontaneous loss of habituation over increasing retention intervals; (c) more rapid reacquisition of habituation over repeated series of habituation trials; (d) slower habituation over trials if the trials are spaced, but slower spontaneous loss of habituation thereafter (rate sensitivity); (e) slower spontaneous loss of habituation when further habituation trials are given after behavioral change over trials has ceased (i.e., overtraining results in some sort of initially latent learning); (f) generalization to other stimuli in direct relation to the similarity of the habituated stimulus to the test stimulus; and (g) temporary masking by an intense stimulus (i.e., strong responding to a habituated stimulus is observed if the stimulus is presented immediately following presentation of an intense novel stimulus). As we shall see, these phenomena are shared with learning involving multiple events.

Traditionally, sensitization was viewed as simply the opposite of habituation. But as noted by Groves and Thompson (1970), habituation is highly stimulus-specific, whereas sensitization is not. Stimulus specificity is not an all-or-none matter; however, sensitization clearly generalizes more broadly to relatively dissimilar stimuli than does habituation. Because of this difference in stimulus specificity and because different neural pathways are apparently involved, Groves and Thompson suggested that habituation and sensitization are independent processes that summate for any test stimulus. Habituation is commonly viewed as nonassociative. However, Wagner (1978) has suggested that long-term habituation (that which survives long retention intervals) is due to an association between the habituated stimulus and the context in which habituation occurred (but see Marlin & Miller, 1981).

Phenomena Involving Two Stimuli: Single Cue–Single Outcome

Factors Influencing Acquired Stimulus Control of Behavior

Stimulus Salience and Attention. The rate at which stimulus control by a conditioned stimulus (CS) is achieved (in terms of number of trials) and the asymptote of control attained are both positively related to the salience of both the CS and the outcome (e.g., Kamin, 1965). Salience here refers to a composite of stimulus intensity, size, contrast with background, motion, and stimulus change, among other factors. Salience is not only a function of the physical stimulus, but also a function of the state of the subject (e.g., food is more salient to a hungry than a sated person). Ordinarily, the salience of a cue has greater influence on the rate at which stimulus control of behavior develops (as a function of number of training trials), whereas the salience of the outcome has greater influence on the ultimate level of stimulus control that is reached over many trials. Clearly, the hybrid construct of salience as used here has much in common with what is commonly called attention, but we avoid that construct because of its additional implications. Stimulus salience is not only important during training; conditioned responding is directly influenced by the salience of the test stimulus, a point long ago noted by Hull (1952).

Predispositions: Genetic and Experiential. The construct of salience speaks to the ease with which a cue will come to control behavior, but it does not take into account the nature of the outcome. In fact, some stimuli more readily become cues for a specific outcome than do other stimuli. For example, Garcia and Koelling (1966) gave thirsty rats access to flavored water that was accompanied by sound and light stimuli whenever they drank. For half the animals, drinking was immediately followed with foot shock, and for the other half it was followed by an agent that induced gastric distress. Although all subjects received the same audiovisual-plus-flavor compound stimulus, the subjects that received the foot shock later exhibited greater avoidance of the audiovisual cues, whereas the subjects that received the gastric distress exhibited greater avoidance of the flavor. These observations cannot be explained in terms of the relative salience of the cues. Although Garcia and Koelling interpreted this cue-to-consequence effect in terms of genetic predispositions reflecting the importance of flavor cues with respect to gastric consequences and audiovisual cues with respect to cutaneous consequences, later research suggests that pretraining experience interacts with genetic factors in creating predispositions that allow stimulus control to develop for some stimulus dyads more readily than for others. For example, Dalrymple and Galef (1981) found that rats forced to make a visual discrimination for food were more apt to associate visual cues with an internal malaise.

Spatiotemporal Contiguity (Similarity). Stimulus control of acquired behavior is a strong direct function of the proximity of a potential Pavlovian cue to an outcome in space (Rescorla & Cunningham, 1979) and time (Pavlov, 1927). Contiguity is so powerful that some researchers have suggested that it is the only nontrivial determinant of stimulus control (e.g., Estes, 1950; Guthrie, 1935). However, several conditioning phenomena appear to violate the so-called law of contiguity. One long-standing challenge arises from the observation that simultaneous presentation of a cue and outcome results in weaker conditioned responding to the cue than when the cue slightly precedes the outcome. However, this simultaneous conditioning deficit has now been recognized as reflecting a failure to express information acquired during simultaneous pairings rather than a failure to encode the simultaneous relationship (i.e., most conditioned responses are anticipatory of an outcome, and are temporally inappropriate for a cue that signals that the outcome is already present). For example, Matzel, Held, and Miller (1988) demonstrated that simultaneous pairings do in fact result in robust learning, but that this information is behaviorally expressed only if an assessment procedure sensitive to simultaneous pairings is used.

A second challenge to the law of contiguity has been based on the observation that conditioned taste aversions yield stimulus control even when cues (flavors) and outcome (internal malaise) are separated by hours (Garcia, Ervin, & Koelling, 1966). However, even with conditioned taste aversions, stimulus control (i.e., aversion to the flavor) decreases as the interval between the flavor and internal malaise increases. All that differs here from other conditioning preparations is the rate of decrease in stimulus control as the interstimulus interval in training increases. Thus, conditioned taste aversion is merely a parametric variation of the law of contiguity, not a violation of it.

Another challenge to the law of contiguity that is not so readily dismissed is based on the observation that the effect of interstimulus interval is often inversely related to the average interval between outcomes (e.g., an increase in the CS-US interval has less of a decremental effect on conditioned responding if the intertrial interval is correspondingly increased). That is, stimulus control appears to depend not so much on the absolute interval between a cue and an outcome (i.e., absolute temporal contiguity) as on the ratio of this interval to that between outcomes (i.e., relative contiguity; e.g., Gibbon, Baldock, Locurto, Gold, & Terrace, 1977). A further challenge to the law of contiguity is discussed in this research paper’s section entitled “Mediation.”
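The notion of relative contiguity described above can be illustrated with a small calculation. The following is a minimal sketch, not the formal model of Gibbon et al. (1977); the function name `relative_contiguity` and the interval values are hypothetical and chosen only for illustration. It simply computes the ratio of the cue-outcome (CS-US) interval to the average interval between outcomes, so that smaller values correspond to better relative contiguity.

```python
def relative_contiguity(cs_us_interval: float, inter_outcome_interval: float) -> float:
    """Ratio of the cue-outcome interval to the average interval between outcomes.

    Both intervals must be in the same time unit (e.g., seconds).
    A smaller ratio means the cue is relatively closer to the outcome.
    """
    if cs_us_interval <= 0 or inter_outcome_interval <= 0:
        raise ValueError("intervals must be positive")
    return cs_us_interval / inter_outcome_interval


if __name__ == "__main__":
    # Hypothetical schedules: doubling the CS-US interval while also
    # doubling the interval between outcomes leaves the ratio unchanged.
    short = relative_contiguity(cs_us_interval=10.0, inter_outcome_interval=100.0)
    long = relative_contiguity(cs_us_interval=20.0, inter_outcome_interval=200.0)
    print(short, long, short == long)
```

On this view, the two hypothetical schedules would be expected to support similar stimulus control despite their different absolute CS-US intervals, which is the pattern the text describes.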

According to the British empiricist philosophers, associations between elements were more readily formed when the elements were similar (Berkeley, 1710/1946). More recently, well-controlled experiments have confirmed that development of stimulus control is facilitated if paired cues and outcome are made more similar (e.g., Rescorla & Furrow, 1977). The neural representations of paired stimuli seemingly include many attributes of the stimuli, including their temporal and spatial relationships. This is evident in conditioned responding reflecting not only an expectation of a specific outcome, but the outcome occurring at a specific time and place (e.g., Saint Paul, 1982; Savastano & Miller, 1998). If temporal and spatial coordinates are viewed as stimulus attributes, contiguity can be viewed as similarity on the temporal and spatial dimensions, thereby subsuming spatiotemporal contiguity within a general conception of similarity. Thus, the law of similarity appears able to encompass the law of contiguity.

Objective Contingency. When a cue is consistently followed by an outcome and these pairings are punctuated by intertrial intervals in which neither the cue nor the outcome occurs, stimulus control of behavior ordinarily develops over trials. However, when cues or outcomes sometimes occur by themselves during the training sessions, conditioned responding to the cue (reflecting the outcome) is often slower to develop (measured in number of cue-outcome pairings) and is asymptotically weaker (Rescorla, 1968).

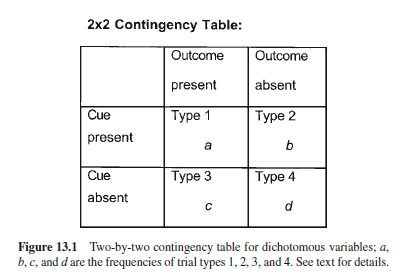

There are four possibilities for each trial in which a dichotomous cue or outcome might be presented, as shown in Figure 13.1:

1. Cue–outcome.

2. Cue–no outcome.

3. No cue–outcome.

4. No cue–no outcome.

The frequencies of trials of type 1, 2, 3, and 4 are a, b, c, and d, respectively. The objective contingency is usually defined in terms of the difference in conditional probabilities of the outcome in the presence (a/[a + b]) and in the absence (c/[c + d]) of the cue. If the conditional probability of the outcome is greater in the presence rather than absence of the cue, the contingency is positive; conversely, if the conditional probability of the outcome is less in the presence than absence of the cue, the contingency is negative. Alternatively stated, contingency increases with the occurrence of a- and d-type trials and decreases with b- and c-type trials. In terms of stimulus control, excitatory responding is observed to increase and behavior indicative of conditioned inhibition (see this research paper’s later section on that topic) is seen to decrease with increasing contingency, and vice versa with decreasing contingency. Empirically, the four types of trials are seen to have unequal influence on stimulus control, with Type 1 trials having the greatest impact and Type 4 trials having the least impact (e.g., Wasserman, Elek, Chatlosh, & Baker, 1993). Note that although we previously described the effect of spaced versus massed cue-outcome pairings as a qualifier of contiguity, such trial spacing effects are readily subsumed under objective contingency because long intertrial intervals are the same as Type 4 trials, provided these intertrial intervals occur in the training context.
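The definition of objective contingency given above can be made concrete with a short computation. The following is a minimal sketch, assuming the unweighted difference of conditional probabilities, a/(a + b) minus c/(c + d); the function name `objective_contingency` and the trial counts are hypothetical and serve only as an illustration.

```python
def objective_contingency(a: int, b: int, c: int, d: int) -> float:
    """Return P(outcome | cue) - P(outcome | no cue).

    a: cue-outcome trials (Type 1)
    b: cue-no outcome trials (Type 2)
    c: no cue-outcome trials (Type 3)
    d: no cue-no outcome trials (Type 4)
    """
    if min(a, b, c, d) < 0:
        raise ValueError("trial counts must be non-negative")
    if a + b == 0 or c + d == 0:
        raise ValueError("need at least one trial with the cue and one without it")
    p_outcome_given_cue = a / (a + b)
    p_outcome_given_no_cue = c / (c + d)
    return p_outcome_given_cue - p_outcome_given_no_cue


if __name__ == "__main__":
    # Hypothetical trial counts, chosen only for illustration.
    print(objective_contingency(a=20, b=0, c=0, d=20))    # positive contingency
    print(objective_contingency(a=10, b=10, c=10, d=10))  # zero contingency
    print(objective_contingency(a=0, b=20, c=20, d=0))    # negative contingency
    # Adding Type 4 trials (e.g., longer intertrial intervals in the
    # training context) raises the contingency, all else being equal.
    print(objective_contingency(a=10, b=10, c=5, d=5))
    print(objective_contingency(a=10, b=10, c=5, d=15))
```

Note that this sketch weights all four trial types equally. As the text points out, the trial types are empirically observed to have unequal influence on stimulus control, so the computed value describes the objective contingency in the training schedule rather than predicting behavior.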

Conditioned responding can be attenuated by presentations of the cue alone before the cue-outcome pairings, intermingled with the pairings, or after the pairings. If they occur before the pairings, the attenuation is called the CS-preexposure (also called latent inhibition) effect (Lubow & Moore, 1959); if they occur during the pairings, they (in conjunction with the pairings) are called partial reinforcement (Pavlov, 1927); and if they occur after the pairings, the attenuation is called extinction (Pavlov, 1927). Notably, the operations that produce the CS-preexposure effect and habituation (i.e., presentation of a single stimulus) are identical; the difference is in how behavior is subsequently assessed. Additionally, based on the two phenomena being doubly dissociable, Hall (1991) has argued that habituation and the CS-preexposure effect arise from different underlying processes. That is, a change in context between treatment and testing attenuates the CS-preexposure effect more than it does habituation, whereas increasing retention interval attenuates habituation more than it does the CS-preexposure effect.

Conditioned responding can also be attenuated by presentations of the outcome alone before the cue-outcome pairings, with the pairings, or after the pairings. If they occur before the pairings, the attenuation is called the US-preexposure effect (e.g., Randich & LoLordo, 1979); if they occur during the pairings, it (in conjunction with the pairings) is called the degraded contingency effect (in the narrow sense, as any presentation of the cue or outcome alone degrades the objective contingency, Rescorla, 1968); and if they occur after the pairings, it is an instance of retrospective revaluation (e.g., Denniston, Miller, & Matute, 1996). The retrospective revaluation effect has proven far more elusive than any of the other five means of attenuating excitatory conditioned responding through degraded contingency, but it occurs at least under select conditions (Miller & Matute, 1996).
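The six contingency-degrading treatments described in the two preceding paragraphs differ only in which event is presented alone and when it is presented relative to the cue-outcome pairings. The following lookup table is a minimal sketch of that taxonomy; the names `ATTENUATION_TREATMENTS` and `name_treatment` are hypothetical and introduced only for illustration.

```python
# Keys: (event presented alone, timing relative to the cue-outcome pairings).
# Values: the name used in the text for the resulting treatment or effect.
ATTENUATION_TREATMENTS = {
    ("cue", "before"): "CS-preexposure effect (latent inhibition)",
    ("cue", "during"): "partial reinforcement",
    ("cue", "after"): "extinction",
    ("outcome", "before"): "US-preexposure effect",
    ("outcome", "during"): "degraded contingency effect (narrow sense)",
    ("outcome", "after"): "retrospective revaluation",
}


def name_treatment(event: str, timing: str) -> str:
    """Look up the name of a contingency-degrading treatment.

    event: "cue" or "outcome" (the event presented by itself)
    timing: "before", "during", or "after" the cue-outcome pairings
    """
    try:
        return ATTENUATION_TREATMENTS[(event, timing)]
    except KeyError:
        raise ValueError(f"unknown combination: {event!r}, {timing!r}") from None


if __name__ == "__main__":
    for (event, timing), name in ATTENUATION_TREATMENTS.items():
        print(f"{event} alone {timing} the pairings: {name}")
```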

If compounded, these different types of contingency-degrading treatments have a cumulative effect on conditioned responding that is at least summative (Bonardi & Hall, 1996) and possibly greater than summative (Bennett, Wills, Oakeshott, & Mackintosh, 2000). A prime example of such a compound contingency-degrading treatment is so-called learned irrelevance, in which cue and outcome presentations truly random with respect to one another precede a series of cue-outcome pairings (Baker & Mackintosh, 1977). This pretraining treatment has a decremental effect on conditioned responding greater than either CS preexposure or US preexposure.

Objective contingency effects are not merely a function of the frequency of different types of trials depicted in Figure 13.1. Two important factors that influence contingency effects are (a) trial order and spacing, and (b) modulatory stimuli. When contingency-degrading Type 2 and 3 trials are administered phasically (rather than interspersed with cue-outcome pairings), recency effects are pronounced. The trials that occur closest to testing have a relatively greater impact on responding; such recency effects fade with time (i.e., longer retention intervals, or at least as a function of the intervening events that occur during longer retention intervals). Additionally, if there are stimuli that are present during the pairings but not the contingency-degrading treatments (or vice versa), presentation of these stimuli immediately prior to or during testing with the target cue causes conditioned responding to better reflect the trials that occurred in the presence of the stimuli. These modulatory stimuli can be either contextual stimuli (i.e., the static environmental cues present during training: the so-called renewal effect, Bouton & Bolles, 1979) or discrete stimuli (e.g., Brooks & Bouton, 1993). Such modulatory stimuli appear to have much in common with so-called priming cues in cognitive research.

Modulatory effects can be obtained even when the cue-outcome pairings are interspersed with the contingency-degrading events. For example, if stimulus A always precedes pairings of cue X and an outcome, and does not precede presentations of cue X alone, subjects will come to respond to the cue if and only if it is preceded by stimulus A; this effect is called positive occasion setting (Holland, 1983a). If stimulus A only precedes the nonreinforced presentations of cue X, subjects will come to respond to cue X only when it has not been preceded by stimulus A; this effect is called negative occasion setting. Surprisingly, behavioral modulation by contexts appears to be acquired in far fewer trials than with discrete stimuli, perhaps reflecting the important role of contextual modulation of behavior in each species’ ecological niche.

Attenuation of stimulus control through contingency-degrading events is often at least partially reversible without further cue-outcome pairings. This is most evident in the case of extinction, for which (so-called) spontaneous recovery from extinction and external disinhibition (i.e., temporary release from extinction treatment as a result of presenting an unrelated intense stimulus immediately prior to the extinguished stimulus) are examples of recovery of behavior indicative of the cue-outcome pairings without the occurrence of further pairings (e.g., Pavlov, 1927). Similarly, spontaneous recovery from the CS-preexposure effect has been well documented (e.g., Kraemer, Randall, & Carbary, 1991). These phenomena suggest that the pairings of cue and outcome are encoded independently of the contingency-degrading events, but the behavioral expression of information regarding the pairings can be suppressed by additional learning during the contingency-degrading events.

Cue and Outcome Duration. Cue and outcome durations have great impact on stimulus control of behavior. The effects are complex, but generally speaking, increased cue or outcome duration reduces behavioral control (provided one controls for any greater hedonic value of the outcome due to increased duration). What makes these variables complex is that different components of a stimulus can contribute differentially to stimulus control. The onset, presence, and termination of a cue can each influence behavior through its own relationship to the outcome; this tendency towards fragmentation of behavioral control appears to increase with the duration of the cue (e.g., Romaniuk & Williams, 2000). Similarly, outcomes have components that can differentially contribute to control by a stimulus. As an outcome is prolonged, its later components are further removed in time from the cue and presumably are less well associated with the cue.

Response Topology and Timing

The hallmark of conditioned responding is that the observed response to the cue reflects the nature of the outcome. For example, pigeons peck an illuminated key differently depending on whether the key signals delivery of food or water, and their manner of pecking is similar to that required to ingest the specific outcome (Jenkins & Moore, 1973). However, the nature of the signal also may qualitatively modulate the conditioned response. For instance, Holland (1977) has described how rats’ conditioned responses to a light and an auditory cue differ, despite their having been paired with the same outcome.

Conditioned responding not only indicates that the cue and outcome have been paired, but also reflects the spatial and temporal relationships that prevailed between the cue and outcome during those pairings (giving rise to the mentalistic view that subjects anticipate, so to speak, when and where the outcome will occur). If a cue has been paired with a rewarding outcome in a particular location, subjects are frequently observed to approach the location at which the outcome had been delivered (so-called goal tracking). For example, Burns and Domjan (1996) observed that Japanese quail, as part of their conditioned response to a cue for a potential mate, oriented to the absolute location in which the mate would be introduced, independent of their immediate location in the experimental apparatus. The temporal relationship between a cue and outcome that existed in training is evidenced in two ways. First, with asymptotic training, the conditioned response ordinarily is emitted just prior to the time at which the outcome would occur based on the prior pairings (Pavlov, 1927). Second, the nature of the response often changes with different cue-outcome intervals. In some instances, when an outcome (e.g., food) occurs at regular intervals, during the intertrial interval subjects emit a sequence of behaviors with a stereotypic temporal structure appropriate for that outcome in the species’ ecological niche (e.g., Staddon & Simmelhag, 1970; Timberlake & Lucas, 1991).

Pavlovian conditioned responding often closely resembles a diminished form of the response to the unconditioned outcome (e.g., conditioned salivation with food as the outcome). Such a response topology is called mimetic. However, conditioned responding is occasionally diametrically opposed to the unconditioned response (e.g., conditioned freezing with pain as the outcome, or a conditioned increase in pain sensitivity with delivery of morphine as the outcome; Siegel, 1989). Such a conditioned response topology is called compensatory. We do not yet have a full understanding of when one or the other type of responding will occur (but see this research paper’s section entitled “What Is a Response?”).

Stimulus Generalization

No perceptual event is ever exactly repeated because of variation in both the environment and in the nervous system. Thus, learning would be useless if organisms did not generalize from stimuli in training to stimuli that are perceptually similar. Therefore, it is not surprising that conditioned responding is seen to decrease in an orderly fashion as the physical difference between the training and test stimuli increases. This reduction in responding is called stimulus generalization decrement. Response magnitude or frequency plotted as a function of training-to-test stimulus similarity yields a symmetric curve that is called a generalization gradient (e.g., Guttman & Kalish, 1956). Such gradients resulting from simple cue-outcome pairings can be made steeper by introducing trials with a second stimulus that is not paired with the outcome. Such discrimination training not only steepens the generalization gradient between the reinforced stimulus and nonreinforced stimulus, but often shifts the stimulus value at which maximum responding is observed from the reinforced cue in the direction away from the value of the nonreinforced stimulus (the so-called peak shift; e.g., Weiss & Schindler, 1981). With increasing retention intervals between the end of training and a test trial, stimulus generalization gradients tend to grow broader (e.g., Riccio, Richardson, & Ebner, 1984).
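The shape of a generalization gradient and the direction of a peak shift can be illustrated with a small numerical sketch. The code below assumes, purely for illustration, that response tendency falls off as a Gaussian function of distance from the trained stimulus value, and that discrimination training adds a weaker, subtractive gradient centered on the nonreinforced stimulus. This gradient-subtraction scheme is one classic style of account swapped in here for demonstration; it is not presented in the text above as the mechanism of peak shift, and all stimulus values, widths, and heights are hypothetical.

```python
import math


def gradient(x, center, width, height=1.0):
    """Gaussian-shaped response tendency centered on a trained stimulus value."""
    return height * math.exp(-((x - center) ** 2) / (2 * width ** 2))


# Hypothetical values on an arbitrary stimulus dimension.
S_PLUS = 550.0   # reinforced stimulus value
S_MINUS = 560.0  # nonreinforced stimulus value
WIDTH = 20.0     # assumed spread of each gradient


def net_response(x):
    """Excitatory gradient minus a weaker inhibitory gradient (assumed rule)."""
    return gradient(x, S_PLUS, WIDTH) - gradient(x, S_MINUS, WIDTH, height=0.6)


test_values = [500.0 + i for i in range(101)]  # test stimuli from 500 to 600

# After simple cue-outcome pairings the gradient peaks at the trained value.
simple_peak = max(test_values, key=lambda x: gradient(x, S_PLUS, WIDTH))

# After discrimination training the peak moves away from the nonreinforced value.
shifted_peak = max(test_values, key=net_response)

print(simple_peak, shifted_peak)
```

Under these assumed parameters the maximum of the net curve lies on the side of the reinforced value opposite the nonreinforced value, which is the qualitative pattern that defines peak shift.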

Phenomena Involving More Than Two Stimuli: Competition, Interference, Facilitation, and Summation

When more than two stimuli are presented in close proximity during training, one might expect that the representation of each stimulus-outcome dyad would be treated independently according to the laws described above. Surely these laws do apply, but the situation becomes more complex because interactions between stimuli also occur. That is, when stimuli X, Y, and Z are trained together, behavioral control by X based on X’s relationship to Y is often influenced by the presence of Z during training. Although these interactions (described in the following sections) are often appreciable, they are neither ubiquitous (i.e., they are more narrowly parameter dependent) nor generally as robust as any of the phenomena described under “Phenomena Involving Two Stimuli.”

Multiple Cues With a Common Outcome

Cues Trained Together and Tested Apart: Competition and Facilitation. For the last 30 years, much attention has been focused on cue competition between cues trained in compound, particularly overshadowing and blocking. Overshadowing refers to the observed attenuation in conditioned responding to an initially novel cue (X) paired with an outcome in the presence of an initially novel second cue (Y), relative to responding to X given the same treatment in the absence of Y (Pavlov, 1927). The degree to which Y will overshadow X depends on their relative saliences; the more salient Y is compared to X, the greater the degree of overshadowing of X (Mackintosh, 1976). When two cues are equally salient, overshadowing is sometimes observed, but is rarely a large effect. Blocking refers to attenuated responding to a cue (X) that is paired with an outcome in the presence of a second cue (Y) when Y was previously paired with the same outcome in the absence of X, relative to responding to X when Y had not been pretrained (Kamin, 1968). That is, learning as a result of the initial Y-outcome association blocks (so to speak) responding to X that the XY-outcome pairings would otherwise support. (Thus, observation of blocking requires good responding to X by the control group, which necessitates the use of parameters that minimize overshadowing of X by Y in the control group.)
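The overshadowing and blocking designs just described can be made concrete with a short simulation. The sketch below uses a simplified error-correction rule in the style of Rescorla and Wagner (1972), in which all cues present on a trial share a single prediction error. It is offered only as one well-known acquisition-focused way to reproduce the basic pattern, not as the account endorsed here; the function name, the single learning rate for reinforced and nonreinforced trials, the salience values, and the trial counts are all assumptions made for illustration.

```python
def rescorla_wagner(trials, saliences, learning_rate=0.3, asymptote=1.0):
    """Return associative strengths after a sequence of trials.

    trials: list of (cues_present, reinforced) pairs.
    saliences: dict mapping each cue name to its assumed salience.
    """
    strengths = {cue: 0.0 for cue in saliences}
    for cues_present, reinforced in trials:
        predicted = sum(strengths[cue] for cue in cues_present)
        error = (asymptote if reinforced else 0.0) - predicted
        # Every cue present on the trial is updated with the shared error.
        for cue in cues_present:
            strengths[cue] += saliences[cue] * learning_rate * error
    return strengths


equal = {"X": 0.5, "Y": 0.5}

# Overshadowing: X trained alone versus X trained in compound with Y.
x_alone = rescorla_wagner([(("X",), True)] * 10, equal)
overshadowed = rescorla_wagner([(("X", "Y"), True)] * 10, equal)
# A more salient companion cue produces a larger deficit in X.
strongly_overshadowed = rescorla_wagner(
    [(("X", "Y"), True)] * 10, {"X": 0.5, "Y": 0.9}
)

# Blocking: Y pretrained before the compound trials versus no pretraining.
blocked = rescorla_wagner(
    [(("Y",), True)] * 20 + [(("X", "Y"), True)] * 10, equal
)
control = overshadowed  # the same compound training without Y pretraining

print(x_alone["X"], overshadowed["X"], strongly_overshadowed["X"], blocked["X"])
```

In this sketch the control group for blocking is itself subject to overshadowing, which mirrors the point above that blocking can only be detected when parameters keep overshadowing in the control group small.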

Both overshadowing and blocking can be observed with a single compound training trial (e.g., Balaz, Kasprow, & Miller, 1982; Mackintosh & Reese, 1979), are usually greatest with a few compound trials, and tend to wane with many compound trials (e.g., Azorlosa & Cicala, 1988). Notably, recovery from each of these cue competition effects can sometimes be obtained without further training trials through various treatments including (a) lengthening the retention interval (i.e., so-called spontaneous recovery; Kraemer, Lariviere, & Spear, 1988); (b) administration of so-called reminder treatments, which consist of presentation of either the outcome alone, the cue alone, or the training context (e.g., Balaz, Gutsin, Cacheiro, & Miller, 1982); and (c) posttraining massive extinction of the overshadowing or blocking stimulus (e.g., Matzel, Schachtman, & Miller, 1985). The theoretical implications of such recovery (paralleling the recovery often observed following the degradation of contingency in the two-stimulus situation) are discussed later in this research paper (see sections entitled “Expression-Focused Models” and “Accounts of Retrospective Revaluation”).

Although competition is far more commonly observed, under certain circumstances the presence of a second cue during training has exactly the opposite effect; that is, it enhances (i.e., facilitates) responding to the target cue. When this effect is observed within the overshadowing procedure, it is called potentiation (Clarke, Westbrook, & Irwin, 1979); and when it is seen in the blocking procedure, it is called augmentation (Batson & Batsell, 2000). Potentiation and augmentation are most readily observed when the outcome is an internal malaise (usually induced by a toxin), the target cue is an odor, and the companion cue is a taste. However, enhancement is not restricted to these modalities (e.g., J. S. Miller, Scherer, & Jagielo, 1995). Another example of enhancement, although possibly with a different underlying mechanism, is superconditioning, which refers to enhanced responding to a cue that is trained in the presence of a cue previously established as a conditioned inhibitor for the outcome, relative to responding to the target cue when the companion cue was novel. In most instances, enhancement appears to be mediated at test by the companion stimulus that was present during training, in that degrading the associative status of the companion stimulus between training and testing often attenuates the enhanced responding (Durlach & Rescorla, 1980).

Cues Trained Apart and Tested Apart. Although theory and research in learning over the past 30 years have focused on the interaction of cues trained together, there is an older literature concerning the interaction of cues with common outcomes trained apart (i.e., X→A, Y→A). This research was conducted largely in the tradition of associationistic studies of human verbal learning that was popular in the mid-twentieth century. A typical example is the attenuated responding to cue X observed when X→A training is either preceded (proactive interference) or followed (retroactive interference) by Y→A training, relative to subjects receiving no Y→A training (e.g., Slamecka & Ceraso, 1960). The stimuli used in the original verbal learning studies were usually consonant trigrams, nonsense syllables, or isolated words. However, recent research using nonverbal preparations has found that such interference effects occur quite generally in both humans (Matute & Pineño, 1998) and nonhumans (Escobar, Matute, & Miller, 2001). Importantly, Y→A presentations degrade the X→A objective contingency because they include presentations of A in the absence of X. This degrading of the X-A contingency sometimes does contribute to the attenuation of responding based on the X→A relationship (as seen in subjects who receive A-alone as the disruptive treatment relative to subjects who receive no disruptive treatment). However, Y→A treatment ordinarily produces a larger deficit, suggesting that, in addition to contingency effects, associations with a common element interact to reduce target stimulus control (e.g., Escobar et al., 2001). Although interference is the more frequent result of the X→A, Y→A design, facilitation is sometimes observed, most commonly when X and Y are similar (e.g., Osgood, 1949).

Cues Trained Apart and Tested Together. When two independently trained cues are compounded at test, responding is usually at least as vigorous as, and often more vigorous than, when only one of the cues is tested (see Kehoe & Gormezano, 1980). When the response to the compound is greater than to either element, the phenomenon is called response summation. Presumably, a major factor limiting response summation is that compounding two cues creates a test situation different from that of training with either cue; thus, attenuated responding to the compound due to generalization decrement is expected. The question is under what conditions generalization decrement will counteract the summation of the tendencies to respond to the two stimuli. Research suggests that when subjects treat the compound as a unique stimulus in itself, distinct from the original stimuli (i.e., configuring), summation will be minimized (e.g., Kehoe, Horne, Horne, & Macrae, 1994). Well-established rules of perception (e.g., gestalt principles; Köhler, 1947) describe the conditions that favor and oppose configuring.

Multiple Outcomes With a Single Cue

Just as Y→A trials can interact with behavior based on X→A training, so too can X→B trials interact with behavior based on X→A training.

Multiple Outcomes Trained Together With a Single Cue. When a cue X is paired with a compound of outcomes (i.e., X→AB), tests of the X→A relationship often yield less responding than that of a control group for which B was omitted, provided A and B are sufficiently different. Such a result might be expected based on either distraction during training or response competition at test, both of which are well-established phenomena. However, some studies have been designed to minimize these two potential sources of outcome competition. For example, Burger, Mallemat, and Miller (2000) used a sensory preconditioning procedure (see this research paper’s section entitled “Second-Order Conditioning and Sensory Preconditioning”) in which the competing outcomes were not biologically significant; and only just before testing did they pair A with a biologically significant stimulus so that the subjects’ learning could be assessed. As neither A nor B was biologically significant during training, (a) distraction by B from A was less apt to occur (although it cannot be completely discounted), and (b) B controlled no behavior that could have produced response competition at test. Despite minimization of distraction and response competition, Burger et al. still observed competition between outcomes (i.e., the presence of B during training attenuated responding based on X and A having been paired). To our knowledge, no one to date has reported facilitation from the presence of B during training. But analogy with the multiple-cue case suggests that facilitation might occur if the two outcomes had strong within-compound links (i.e., A and B were similar or strongly associated to each other).

Multiple Outcomes Trained Apart With a Single Cue: Counterconditioning. Just as multiple cues trained apart with a common outcome can result in an interaction, so too can an interaction be observed when multiple outcomes are trained apart with a common cue. Alternatively stated, responding based on X→A training can be disrupted by X→B training. The best known example of this is counterconditioning (e.g., responding to a cue based on cue→food training is disrupted by cue→footshock training). The interfering training (X→B) can occur before, among, or after the target training trials (X→A). Although response competition is a likely contributing factor, there is good evidence that such interference effects are due to more than simple response competition (e.g., Dearing & Dickinson, 1979). Just as interference produced by Y→A in the X→A, Y→A situation can be due in part to degrading the X-A contingency, so attenuated responding produced by X→B in the X→A, X→B situation can arise in part from the degrading of the X-A contingency that is inherent in the presentations of X during X→B trials. However, research has found that the response attenuation produced by the X→B trials is sometimes greater than that produced by X-alone presentations; hence, this sort of interference cannot be treated as simply an instance of degraded contingency (Escobar, Arcediano, & Miller, 2001).

Resolving Ambiguity

The magnitude of the interference effects described in the two previous sections is readily controlled by conditions at the time of testing. If the target and interfering treatments have been given in different contexts (i.e., competing elements trained apart), presentation at test of contextual cues associated with the interfering treatment enhances interference, whereas presentation of contextual cues associated with target training reduces interference. These contextual cues can be either diffuse background cues or discrete stimuli that were presented with the target (Escobar et al., 2001). Additionally, more recent training experience typically dominates behavior (i.e., a recency effect), all other factors being equal. Such recency effects fade with increasing retention intervals, with the consequence that retroactive interference fades and, correspondingly, proactive interference increases when the posttraining retention interval is increased (Postman, Stark, & Fraser, 1968).

Notably, the contextual and temporal modulation of interference effects is highly similar to the modulation observed with degraded contingency effects (see this research paper’s section entitled “Factors Influencing Acquired Stimulus Control of Behavior”). This similarity is grounds for revisiting the issue of whether interference effects are really different from degraded contingency effects. We previously cited grounds for rejecting the view that interference effects were no more than degraded contingency effects (see this research paper’s section on that topic). However, if the training context is regarded as an element that can become associated with a cue on a cue-alone trial or with an outcome on an outcome-alone trial, contingency-degrading trials could be viewed as target cue-context or context-outcome trials that interfere with behavior promoted by target cue-outcome trials much as Y-outcome or target-B trials do within the interference paradigm. In principle, this allows degraded contingency effects to be viewed as a subset of interference effects. However, due to the vagueness of context as a stimulus, this approach has not received widespread acceptance.

Mediation

Mediated changes in control of behavior by a stimulus refer to situations in which responding to a target cue is at least partially a function of the training history of a second cue that has at one time or another been paired with the target. Depending on the specific situation, mediational interaction between the target and the companion cues can occur either at the time that they are paired during training (e.g., aversively motivated second-order conditioning, see section entitled “Second-Order Conditioning and Sensory Preconditioning”; Holland & Rescorla, 1975) or at test (e.g., sensory preconditioning, see same section; Rizley & Rescorla, 1972). As discussed below, the mediated control transferred to the target can be either consistent with the status of the companion cue (e.g., second-order conditioning) or inverse to the status of the companion cue (e.g., conditioned inhibition, blocking). Testing whether a mediational relationship between two cues exists usually takes the form of presenting the companion cue with or without the outcome in the absence of the target and seeing whether that treatment influences responding to the target. This manipulation of the companion cue can be done before, interspersed among, or after the target training trials. However, sometimes posttarget-training revaluation of the companion does not alter responding to the target, suggesting that the mediational process occurs during training (e.g., aversively motivated second-order conditioning).

Second-Order Conditioning and Sensory Preconditioning

If cue Y is paired with a biologically significant outcome (A) such that Y comes to control responding, and subsequently cue X is paired with Y (i.e., Y→A, X→Y), responding to X will be observed. This phenomenon is called second-order conditioning (Pavlov, 1927). Cue X can similarly be imbued with behavioral control if the two phases of training above are reversed (i.e., X→Y, followed by Y→A). This latter phenomenon is called sensory preconditioning (Brogden, 1939). Second-order conditioning and sensory preconditioning are important for two reasons. First, these phenomena are simple examples of mediated responding—that is, acquired behavior that depends on associations between stimuli that are not of inherent biological significance. Second, these phenomena pose a serious challenge to the principle of contiguity. For example, consider sensory preconditioning: A light is paired with a tone, then the tone is paired with an aversive event (i.e., electric shock); at test, the light evokes a conditioned fear response. Thus, the light is controlling a response appropriate for the aversive event, despite its never having been paired with that event. This is a direct violation of contiguity in its simplest form. Based on the observation of mediated behavior, the law of contiguity must be either abandoned or modified. Given the enormous success of contiguity in describing the conditions that foster acquired behavior, researchers generally have elected to redefine contiguity as spatiotemporal proximity between the cue or its surrogate and the outcome or its surrogate, thereby incorporating mediation within the principle of contiguity.

Mediation appears to occur when two different types of training share a common element (e.g., X→Y, Y→A). Importantly, the mediating stimulus ordinarily does not simply act as a (weak) substitute for the outcome (as might be expected of a so-called simple surrogate). Rather, the mediating stimulus (i.e., first-order cue) carries with it its own spatiotemporal relationship to the outcome, such that the second-order cue supports behavior appropriate for a summation of the mediator-outcome spatiotemporal relationship and the second-order cue-mediator spatiotemporal relationship (for spatial summation, see Etienne, Berlie, Georgakopoulos, & Maurer, 1998; for temporal summation, see Matzel, Held et al., 1988). In effect, subjects appear to integrate the two separately experienced relationships to create a spatiotemporal relationship between the second-order cue and the outcome, despite their never having been physically paired.
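The temporal case of this integration amounts to simple arithmetic, sketched below under the assumption that the two separately trained intervals combine additively. The function name, the convention that a negative interval denotes a backward arrangement, and the interval values are hypothetical and chosen only to illustrate the idea.

```python
def integrated_interval(x_to_y, y_to_outcome):
    """Expected delay (s) from onset of the second-order cue X to the outcome.

    Assumes purely additive integration of two onset-to-onset intervals:
    X to the mediating cue Y, and Y to the outcome. A negative interval
    means the second event preceded the first (a backward arrangement).
    """
    return x_to_y + y_to_outcome


# Hypothetical forward case: X precedes Y by 5 s, Y precedes the outcome by 10 s.
forward = integrated_interval(5.0, 10.0)

# Hypothetical backward case: the outcome occurred 5 s before Y in training,
# so the integrated expectation places the outcome at the onset of X.
backward = integrated_interval(5.0, -5.0)

print(forward, backward)
```

The same additive logic would apply to spatial relationships if the intervals were replaced by displacement vectors, although that extension is not worked out here.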

The mediating process that links two stimuli that were never paired could occur in principle either during training or during testing. To address this issue, researchers have asked what happens to the response potential of a second-order cue X when its first-order cue is extinguished between training and testing. Rizley and Rescorla (1972) reported that such posttraining extinction of Y did not degrade responding to a second-order cue (X), but subsequent research has under some conditions found attenuated responding to X (Cheatle & Rudy, 1978). The basis for this difference is not yet completely clear, but Nairne and Rescorla (1981) have suggested that it depends on the valence of the outcome (i.e., appetitive or aversive).

Conditioned Inhibition

Conditioned inhibition refers to situations in which a subject behaves as if it has learned that a particular stimulus (a so-called inhibitor) signals the omission of an outcome. Conditioned inhibition is ordinarily assessed by a combination of (a) a summation test in which the putative inhibitor is presented in compound with a known conditioned excitor (different from any excitor that was used in training the inhibitor) and seen to reduce responding to the excitor; and (b) a retardation test in which the inhibitor is seen to be slow in coming to serve as a conditioned excitor in terms of required number of pairings with the outcome (Rescorla, 1969). Because the standard tests for conditioned excitation and conditioned inhibition are operationally distinct, stimuli sometimes can pass tests for both excitatory and inhibitory status after identical treatment. The implication is that conditioned inhibition and conditioned excitation are not mutually exclusive (e.g., Matzel, Gladstein, & Miller, 1988), which is contrary to some theoretical formulations (e.g., Rescorla & Wagner, 1972).

There are several different procedures that appear to produce conditioned inhibition (LoLordo & Fairless, 1985). Among them are (a) explicitly unpaired presentations of the cue (inhibitor) and outcome (described in the earlier discussion of objective contingency); (b) Pavlov’s (1927) procedure in which a training excitor (Y) is paired with an outcome, interspersed with trials in which the training excitor and intended inhibitor (X) are presented in nonreinforced compound; and (c) so-called backward pairings of a cue with an outcome (outcome→X; Heth, 1976). What appears similar across these various procedures is that the inhibitor is present at a time that another cue (discrete or contextual) signals that the outcome is apt to occur, but in fact it does not occur. Conditioned inhibition is stimulus-specific in that it generates relatively narrow generalization gradients, similar to conditioned excitation (Spence, 1936). Additionally, it is outcome-specific in that an inhibitor will transfer its response-attenuating influence on behavior between different cues for the same outcome, but not between cues for different outcomes (Rescorla & Holland, 1977). Hence, conditioned inhibition, like conditioned excitation, is a form of stimulus-specific learning about a relationship between a cue and an outcome. But because it is necessarily mediated (the cue and outcome are never paired), conditioned inhibition is more similar to second-order conditioning than it is to simple (first-order) conditioning. Moreover, just as responding to a second-order conditioned stimulus appears as if the subject expects the outcome at a time and place specified conjointly by the spatiotemporal relationships between X and Y and between Y and the outcome (e.g., Matzel, Held et al., 1988), so too does a conditioned inhibitor seemingly signal not only the omission of the outcome but also the time and place of that omission (e.g., Denniston, Blaisdell, & Miller, 1998).
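Pavlov's procedure (b) and the two standard tests for inhibition can be sketched with the same kind of simplified error-correction rule used in accounts such as Rescorla and Wagner (1972). The simulation below is an illustration under stated assumptions, not a claim about the mechanism: it treats inhibition as negative associative strength, which is exactly the kind of formulation that makes excitation and inhibition mutually exclusive. The cue names, learning rate, trial counts, and response criterion are hypothetical.

```python
def train(trials, rate=0.2, asymptote=1.0):
    """Return associative strengths after a list of (cues, reinforced) trials."""
    strengths = {}
    for cues, reinforced in trials:
        for cue in cues:
            strengths.setdefault(cue, 0.0)
        error = (asymptote if reinforced else 0.0) - sum(strengths[c] for c in cues)
        for cue in cues:
            strengths[cue] += rate * error
    return strengths


def trials_to_criterion(start, criterion=0.5, rate=0.2, asymptote=1.0):
    """Count reinforced trials needed for a cue starting at `start` to reach criterion."""
    strength, count = start, 0
    while strength < criterion:
        strength += rate * (asymptote - strength)
        count += 1
    return count


# Interspersed Y+ and XY- trials, plus a separately trained transfer excitor Z+.
schedule = []
for _ in range(50):
    schedule.append((("Y",), True))
    schedule.append((("Y", "X"), False))
    schedule.append((("Z",), True))
strengths = train(schedule)

# Summation test: X compounded with an excitor that was not used to train X.
excitor_alone = strengths["Z"]
excitor_with_inhibitor = strengths["Z"] + strengths["X"]

# Retardation test: X paired with the outcome versus a novel cue starting at zero.
inhibitor_trials = trials_to_criterion(strengths["X"])
novel_trials = trials_to_criterion(0.0)

print(excitor_alone, excitor_with_inhibitor, inhibitor_trials, novel_trials)
```

In this sketch X ends with negative strength, reduces predicted responding to the transfer excitor Z, and needs more reinforced trials than a novel cue to reach the criterion, which corresponds to passing the summation and retardation tests respectively.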

One might ask about the behavioral consequences for conditioned inhibition of posttraining extinction of the mediating cue. Similar to corresponding tests with second-order conditioning, the results have been mixed. For example, Rescorla and Holland (1977) found no alteration of behavior indicative of inhibition, whereas others (e.g., Best, Dunn, Batson, Meachum, & Nash, 1985; Hallam, Grahame, Harris, & Miller, 1992) observed a decrease in inhibition. Yin, Grahame, and Miller (1993) suggested that the critical difference between these studies is that massive posttraining extinction of the mediating stimulus is necessary to obtain changes in behavioral control by an inhibitor.

Despite these operational and behavioral similarities of conditioned inhibition and second-order conditioning, there is one most fundamental difference. Responding to a second-order cue is appropriate for the occurrence of the outcome, whereas responding to an inhibitor is appropriate for the omission of the outcome. In sharp contrast to second-order conditioning (and sensory preconditioning), which are examples of positive mediation (seemingly passing information, so to speak, concerning an outcome from one cue to a second cue), conditioned inhibition is an example of negative mediation (seemingly inverting the expectation of the outcome conveyed by the first-order cue as the information is passed to the second-order cue). Why positive mediation should occur in some situations and negative mediation in other apparently similar situations is not yet fully understood. Rashotte, Marshall, and O’Connell (1981) and Yin, Barnet, and Miller (1994) have suggested that the critical variable may be the number of nonreinforced X-Y trials. A second difference between inhibition and second-order excitation that is likely related to the aforementioned one is that nonreinforced exposure to an excitor produces extinction, whereas nonreinforced exposure to an inhibitor not only does not reduce its inhibitory potential, but also sometimes increases it (DeVito & Fowler, 1987).

Retrospective Revaluation

Mediated changes in stimulus control of behavior can often be achieved by treatment (reinforcement or extinction) of a target cue’s companion stimulus either before, during, or after the pairings of the target and companion stimuli (reinforced or nonreinforced). Recent interest has focused on treatment of the companion stimulus alone after the completion of the compound trials, because in this case the observed effects on responding to the target are particularly problematic to most conventional associative theories of acquired behavior. A change in stimulus control following the termination of training with the target cue is called retrospective revaluation. Importantly, both positive and negative mediation effects have been observed with the retrospective revaluation procedure. Sensory preconditioning is a long-known but frequently ignored example of retrospective revaluation in its simplest form. It is an example of positive retrospective revaluation because the posttarget-training treatment with the companion stimulus produces a change in responding to the target that mimics the change in control by the companion stimulus. Other examples of positive retrospective revaluation include the decrease in responding sometimes seen to a cue trained in compound when its companion cue is extinguished (i.e., mediated extinction; Holland & Forbes, 1982). In contrast, there are also many reports of negative retrospective revaluation, in which the change in control by the target is in direct opposition to the change produced in the companion during retrospective revaluation. Examples of negative retrospective revaluation include recovery from overshadowing as a result of extinction of the overshadowing stimulus (e.g., Matzel et al., 1985), decreases in conditioned inhibition as a result of extinction of the inhibitor’s training excitor (e.g., DeVito & Fowler, 1987), and backward blocking (AX→outcome, followed by A→outcome, e.g., Denniston et al., 1996).

The occurrence of both positive and negative mediation in retrospective revaluation parallels the two opposing effects that are observed when the companion cue is treated before or during the compound stimulus trials. In the section entitled “Multiple Cues With a Common Outcome,” we described not only overshadowing but also potentiation, which, although operationally similar to overshadowing, has a converse behavioral result. Notably, the positive mediation apparent in potentiation can usually be reversed by posttraining extinction of the mediating (potentiating) cue (e.g., Durlach & Rescorla, 1980). Similarly, the negative mediation apparent in overshadowing can sometimes be reversed by massive posttraining extinction of the mediating (overshadowing) cue (e.g., Kaufman & Bolles, 1981; Matzel et al., 1985). However, currently there are insufficient data to specify a rule for the changes in control by a cue that will be observed when its companion cue is reinforced or extinguished. That is to say, we do not know the critical variables that determine whether mediation will be positive or negative. As previously mentioned (see section titled “Conditioned Inhibition”), the two prime candidates for determining the direction of mediation are the number of pairings of the target with the mediating cue and whether those pairings are simultaneous or serial. Whatever the outcome of future studies, research on retrospective revaluation has clearly demonstrated that the previously accepted view (that the response potential of a cue cannot change if it is not presented) was incorrect.

Models of Pavlovian Responding: Theory

Here we turn from our summary of variables that influence acquired behavior based on cue-outcome (Pavlovian) relationships to a review of accounts of this acquired behavior. In this section, we contrast the major variables that differentiate among models, and we refer back to our list of empirical variables (see section titled “Factors Influencing Acquired Stimulus Control of Behavior”) to ask how the different families of models account for the roles of these variables. Citations are provided for the interested reader wishing to pursue the specifics of one or another model.

Units of Analysis

What Is a Stimulus?

Before we review specific theories, we must briefly consider how an organism perceives a stimulus and processes its representation. Different models of acquired behavior use different definitions of stimuli. In some models, the immediate perceptual field is composed of a vast number of microelements (e.g., we learn not about a tree, but each branch, twig, and leaf; Estes & Burke, 1953; McLaren & Mackintosh, 2000). In other models, the perceptual field at any given moment consists of a few integrated sources of receptor stimulation (e.g., the oak tree, the maple tree; Rescorla & Wagner, 1972; Gallistel & Gibbon, 2000). For yet other models, the perceptual field at any given moment is fully integrated and contains only one so-called configured stimulus, which consists of all that immediately impinges on the sensorium (the forest; Pearce, 1987). Although each approach offers its own distinct merits and demerits, they have all proven viable. Generally speaking, the larger the number of elements assumed, the more readily can behavior be explained post hoc, but the more difficult it is to make testable a priori predictions. By increasing the number of stimuli, each of which can have its own associative status, one is necessarily increasing the number of variables and often the number of parameters. Thus, it may be difficult to distinguish between models that are correct in the sense that they faithfully represent some fundamental relationship between acquired behavior and events in the environment, and models that succeed because there is enough flexibility in the model’s parameters to account for virtually any result (i.e., curve fitting). Most models assume that subjects process representations of a small number of integrated stimuli at any one time. That is, the perceptual field might consist of a tone and a light and a tree, each represented as an integrated and inseparable whole.

Worthy of special note here is the McLaren and Mackintosh (2000) model with its elemental approach. This model not only addresses the fundamental phenomena of acquired behavior, but also accounts for perceptual learning, thereby providing an account of how and by what mechanism organisms weave the stimulation provided by many microelements into the perceptual fabric of lay usage. In other words, the model offers an explanation of how experience causes us to merge representations of branches, twigs, and leaves into a compound construct like a tree.

What Is a Response?

In Pavlovian learning, the conditioned response reflects the nature of the outcome, which is ordinarily a biologically significant unconditioned stimulus (but see Holland, 1977). However, this is not sufficient to predict the form of conditioned behavior. Although responding is often of the same form as the unconditioned response to the unconditioned stimulus (i.e., mimetic), it is sometimes in the opposite direction (i.e., compensatory). Examples of mimetic conditioned responding include eyelid conditioning, conditioned salivation, and conditioned release of endogenous endorphins with aversive stimulation as the unconditioned stimulus. Examples of compensatory conditioned responding include conditioned freezing with foot shock as the unconditioned stimulus, and conditioned opiate withdrawal symptoms with opiates as the unconditioned stimulus. The question of under what conditions will conditioned responding be compensatory as opposed to mimetic has yet to be satisfactorily answered. Eikelboom and Stewart (1982) argued that all conditioned responding is mimetic, and that compensatory instances simply reflect our misidentifying the unconditioned stimulus; that is, for unconditioned stimuli that impinge primarily on efferent neural pathways of the peripheral nervous system, the real reinforcer is the feedback to the central nervous system. Thus, what is often called the unconditioned response precedes a later behavior that constitutes the effective unconditioned response. This approach is stimulating, but encounters problems: Most unconditioned stimuli impinge on both afferent and efferent pathways, and there are complex feedback loops at various anatomical levels between these two pathways.

Conditioned responding is not just a reflection of past experience with a cue indicating a change in the probability of an outcome. Acquired behavior reflects not only the likelihood that a reinforcer will occur, but when and where the reinforcer will occur. This is evident in most learning situations (see “Response Topology and Timing”). For example, Clayton and Dickinson (1999) have reported that scrub jays, which cache food, remember not only what food items have been stored, but where and when they were stored. Additionally, there is evidence that subjects can integrate temporal and spatial information from different learning experiences to create spatiotemporal relationships between stimuli that were never paired in actual experience (e.g., Etienne et al., 1998; Savastano & Miller, 1998). Alternatively stated, in mediated learning, not only does the mediating stimulus become a surrogate for the occurrence of the outcome, it carries with it information concerning where and when the outcome will occur, as is evident in the phenomenon of goal tracking (e.g., Burns & Domjan, 1996).

What Mental Links Are Formed?

In the middle of the twentieth century, there was considerable controversy about whether cue-outcome, cue-response, or response-outcome relationships were learned (i.e., associations, links). The major strategies used to resolve this question were to either (a) use test conditions that differed from those of training by pitting one type of association against another (e.g., go towards a specific stimulus, or turn right); or (b) degrade one or another component after training (e.g., satiation or habituation of the outcome or extinction of the eliciting cue) and observe its effect on acquired behavior. The results of such studies indicated that subjects could readily learn all three types of associations, and ordinarily did to various degrees, depending on which allowed the easiest solution of the task facing the subject (reviewed by Kimble, 1961). That is, subjects are versatile in their information processing strategies, opportunistic, and ordinarily adept at using whichever combination of environmental relationships is most adaptive.

Although much stimulus control of behavior can be described in terms of simple associations among cues, responses, and outcomes, occasion setting (described under the section entitled “Objective Contingency”) does not yield to such analyses. One view of how occasion setting works is that occasion setters serve to facilitate (or inhibit) the retrieval of associations (e.g., Holland, 1983b). Thus, they involve hierarchical associations; that is, they are associated with associations rather than with simple representations of stimuli or responses (cf. section entitled “Hierarchical Associations”). Such a view introduces a new type of learning, thereby adding complexity to the compendium of possible learned relationships. The leading alternative to this view of occasion setting is that occasion setters join into configural units with the stimuli that they are modulating (Schmajuk, Lamoureux, & Holland, 1998). This latter approach suffices to explain behavior in most occasion-setting situations, but to date has led to few novel testable predictions. Both approaches appear strained when used to account for transfer of modulation by an occasion setter from the association with which it was trained to another association. Such transfer is successful only if the transfer association itself was previously occasion set (Holland, 1989).

Acquisition-Focused (Associative) Models

All traditional models of acquired behavior have assumed that critical processing of information occurs exclusively when target stimuli occur—that is, at training, at test, or at both. The various contemporary models of acquired behavior can be divided into those that emphasize processing that occurs during training (hereafter called acquisition-focused models) and those that emphasize processing that occurs during testing (hereafter called expression-focused models). For each of these two families of models in their simplest forms, there are phenomena that are readily explained and other phenomena that are problematic. However, theorists have managed to explain most observed phenomena within acquired behavior in either framework (see R. R. Miller & Escobar, 2001) when allowed to modify models after new observations are reported (see section entitled “Where Have the Models Taken Us?”).

The dominant tradition since Thorndike (1932) has been the acquisition-focused approach, which assumes that learning consists of the development of associations. In theoretical terms, each association is characterized by an associative strength or value, which is a kind of summary statistic representing the cumulative history of the subject with the associated events. Hull (1943) and Rescorla and Wagner (1972) provide two examples of acquisition-focused models, with the latter being the most influential model today (see R. R. Miller, Barnet, & Grahame, 1995, for a critical review of this model). Contemporary associative models are perhaps best represented by that of Rescorla and Wagner, who proposed that time was divided into (training) trials and that on each trial for which a cue of interest was present, there was a change in that cue’s association to the outcome equal to the product of the saliences of the cue and outcome, times the difference between the outcome experienced and the expectation of the outcome based on all cues present on that trial. Notably, in acquisition-focused models, subjects are assumed not to recall specific experiences (i.e., training trials) at test; rather they have accessible only the current associative strength between events. Models within this family differ primarily in the rules used to calculate associative strength, and whether other summary statistics are also computed. For example, Pearce and Hall (1980) proposed that on each training trial, subjects not only update the associative strength between stimuli present on that trial, but also recalculate the so-called associability of each stimulus present on that trial. What all contemporary acquisition-focused models share is that new experience causes an updating of associative strength; hence, recent experience is expected to have a greater impact on behavior than otherwise equivalent earlier experience.
The result is that these models are quite adept at accounting for those trial-order effects that can be viewed as recency effects; conversely, they are challenged by primacy effects (which, generally speaking, are far less frequent than recency effects). In the following section, we discuss some of the major variables that differentiate among the various acquisition-focused models. Specifics of individual models are not described here, but relevant citations are provided.
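As a concrete illustration of the trial-based updating attributed above to Rescorla and Wagner (1972), the following Python sketch applies the verbal rule directly: the change in each present cue's strength is the product of the cue and outcome saliences times the difference between the experienced outcome and the summed expectation. It is a minimal sketch under stated assumptions, not the authors' own formulation. The function name `rescorla_wagner_trial`, the salience values, the outcome magnitude of 1.0, and the trial counts are all hypothetical choices made for the example.

```python
def rescorla_wagner_trial(strengths, present_cues, outcome, cue_salience, outcome_salience):
    """Update associative strengths in place for one trial (illustrative sketch)."""
    # The expectation is the summed strength of every cue present on this trial.
    expectation = sum(strengths[cue] for cue in present_cues)
    error = outcome - expectation
    # Only cues present on the trial change; absent cues keep their current strength.
    for cue in present_cues:
        strengths[cue] += cue_salience[cue] * outcome_salience * error
    return strengths


# Hypothetical parameter values chosen only for illustration.
salience = {"A": 0.3, "X": 0.3}
V = {"A": 0.0, "X": 0.0}

for _ in range(50):  # Phase 1: cue A alone is followed by the outcome.
    rescorla_wagner_trial(V, ["A"], 1.0, salience, 0.5)
for _ in range(50):  # Phase 2: the AX compound is followed by the outcome.
    rescorla_wagner_trial(V, ["A", "X"], 1.0, salience, 0.5)

print(V)
```

Under these assumed values, cue A absorbs nearly all of the available associative strength in Phase 1, so the error term is close to zero in Phase 2 and cue X gains very little. Note also that the loop touches only the cues present on a trial, which is why a rule of this form, taken by itself, leaves the strength of an absent cue unchanged.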

Addressing Critical Factors of Acquired Behavior

Stimulus Salience and Attention

Nearly all models (acquisition- and expression-focused) represent the saliences of the cue and outcome through one conjoint parameter (e.g., Bush & Mosteller, 1951) or two independent parameters (one for the cue and the other for the outcome; e.g., Rescorla & Wagner, 1972). A significant departure from this standard treatment of salience and attention is Pearce and Hall’s (1980) model, which sharply differentiates between salience, which is a constant for each cue, and associability, which changes with experience and affects the rate (per trial) at which new information about the cue is encoded.
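The distinction between a fixed salience and an experience-dependent associability can be sketched in Python as follows. This is a simplified illustration that follows the general form usually given for the Pearce and Hall (1980) model, in which a cue's associability on the next trial is set by how surprising the outcome was on the current trial. Inhibitory learning is omitted, and the function name `pearce_hall_trial`, the cue name, the starting associability of 1.0, and the salience of 0.2 are assumptions made for the example.

```python
def pearce_hall_trial(strengths, associability, present_cues, outcome, salience):
    """One simplified Pearce-Hall-style trial; updates both dicts in place."""
    # Expectation is computed before any update, from all cues present.
    expectation = sum(strengths[cue] for cue in present_cues)
    for cue in present_cues:
        # Excitatory learning: constant salience times current associability times outcome.
        strengths[cue] += salience[cue] * associability[cue] * outcome
        # Associability for the next trial tracks how surprising this outcome was.
        associability[cue] = abs(outcome - expectation)


salience = {"tone": 0.2}        # hypothetical value, constant across trials
associability = {"tone": 1.0}   # hypothetical starting value
strengths = {"tone": 0.0}
history = []                    # associability after each trial

for _ in range(30):
    pearce_hall_trial(strengths, associability, ["tone"], 1.0, salience)
    history.append(associability["tone"])

print(strengths["tone"], history[-1])
```

With these assumed values, salience never changes, whereas associability declines across trials as the tone comes to predict the outcome, so later trials produce progressively smaller changes in associative strength.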

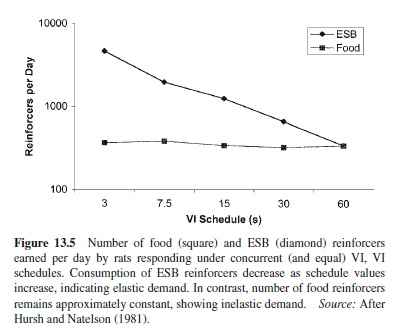

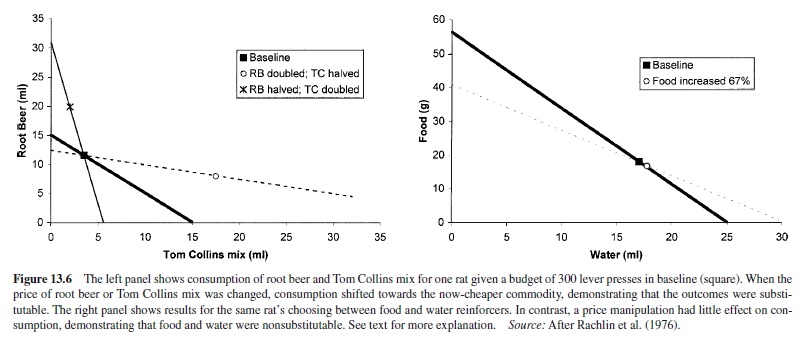

Predispositions: Genetic and Experiential

Behavioral predispositions, which depend on evolutionary history, specific prior experience, or both, are very difficult to capture in models meant to have broad generality across individuals within a species and across species. In fact, most models of acquired behavior (acquisition- and expression-focused) have ignored the issue of predispositions. However, those models that use a single parameter to describe the conjoint associability (growth parameter) for both the cue and outcome (as opposed to separate associabilities for the cue and outcome) can readily incorporate predispositions within this parameter. For example, in the well-known Garcia and Koelling (1966) demonstration of flavors joining into association with gastric distress more readily than with electric shock, and audiovisual cues entering into association more readily with electric shock than with gastric distress, separate (constant) associabilities for the flavor, audiovisual cue, electric shock, and gastric distress cannot account for the observed predispositions. In contrast, this example of cue-to-consequence effects is readily accounted for by high conjoint associabilities for flavor–gastric distress and for audiovisual cues–electric shock, and low conjoint associabilities for flavor–electric shock and for audiovisual cues–gastric distress. However, to require a separate associability parameter for every possible cue-outcome dyad creates a vastly greater number of parameters than simply having a single parameter for each cue and each outcome with changes in behavior being in part a function of these two parameters (usually their product). Hence, we see here the recurring trade-off between oversimplifying (separate parameters for each cue and each outcome) and reality (a unique parameter for each cue-outcome dyad).
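The trade-off described above can be checked with a short Python sketch. It assumes, as the text suggests is usual, that separate cue and outcome parameters combine as a product, and it searches a grid of positive values for any combination that reproduces the crossover pattern (flavor learned faster with gastric distress, audiovisual cues learned faster with shock). All numerical values, the grid, and the helper names `shows_crossover`, `separable_can_cross`, and `parameter_counts` are hypothetical and serve only to illustrate the argument.

```python
from itertools import product

# Hypothetical conjoint associabilities, one per cue-outcome dyad.
conjoint = {
    ("flavor", "gastric distress"): 0.8,
    ("flavor", "electric shock"): 0.1,
    ("audiovisual", "gastric distress"): 0.1,
    ("audiovisual", "electric shock"): 0.8,
}


def shows_crossover(rate):
    """True if the learning rates reproduce the cue-to-consequence pattern."""
    return (rate("flavor", "gastric distress") > rate("flavor", "electric shock")
            and rate("audiovisual", "electric shock") > rate("audiovisual", "gastric distress"))


def separable_can_cross(grid):
    """Search separate cue and outcome parameters whose product shows the crossover."""
    for a_flavor, a_av, b_gastric, b_shock in product(grid, repeat=4):
        cue_p = {"flavor": a_flavor, "audiovisual": a_av}
        out_p = {"gastric distress": b_gastric, "electric shock": b_shock}
        if shows_crossover(lambda cue, out: cue_p[cue] * out_p[out]):
            return True
    return False


def parameter_counts(n_cues, n_outcomes):
    """Return (separate-parameter count, conjoint-parameter count)."""
    return n_cues + n_outcomes, n_cues * n_outcomes


grid = [0.1 * k for k in range(1, 10)]
conjoint_ok = shows_crossover(lambda cue, out: conjoint[(cue, out)])
separable_ok = separable_can_cross(grid)
print(conjoint_ok, separable_ok, parameter_counts(10, 5))
```

The search fails for the product form because the first inequality requires the gastric-distress parameter to exceed the shock parameter, while the second requires the reverse. The conjoint table captures the pattern, but its size grows with the product of the numbers of cues and outcomes instead of their sum.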