Sample Attention Research Paper

We live in a sea of information. The amount of information available to our senses vastly exceeds the information-processing capacity of our brains. How we deal with this overload is the topic of this research paper: attention. Consider your experience as you read this page. You are focused on just a word or two at a time. The rest of the page is available but is not being actively processed. Indeed, quite apart from the other words on the page, many stimuli are impinging on you that you are probably not aware of, such as the pressure of your chair against your back. Of course, as soon as that pressure was mentioned, you probably shifted your attention to it and, most likely, stopped reading briefly. Some external stimuli do not need to be pointed out for you to become aware of them: a mosquito buzzing around your face, for example, or a car backfiring outside your window. These simple observations point to the selectivity of attention, its ability to shift quickly from one stimulus or train of thought to another, the difficulty we have in attending to more than one thing at a time, and the ability of some stimuli to capture attention. All of these aspects of attention will be explored in this research paper.

Perhaps the most fundamental point about attention is its selectivity. Attention permits us to play an active role in our interaction with the world; we are not simply passive recipients of stimuli. Much of the theoretical work on attention has been concerned with how we come to select some information while ignoring the rest. Work in the years immediately following World War II produced a theory holding that information is filtered at an early stage of perceptual processing (Broadbent, 1958). According to this approach, there is a bottleneck in the sequence of processing stages involved in perception. Whereas physical properties such as color or spatial position can be extracted in parallel with no capacity limitations, further perceptual analysis (e.g., identification) can be performed only on selected information. Thus, unattended stimuli, which are filtered out as a result of attentional selection, are not fully perceived.

Subsequent research soon called filter theory into question. One striking result comes from Moray’s (1959) studies using the dichotic listening paradigm, in which headphones are used to present separate messages to the two ears. The subject is instructed to shadow one message (i.e., repeat it back as it is spoken); the other message is unattended. Ordinarily, there is little awareness of the contents of the unattended message (e.g., Cherry, 1953). However, Moray found that when a message in the unattended ear is preceded by the subject’s own name, the likelihood of reporting the unattended message increases. This suggests that the unattended message had not been entirely excluded from further analysis. Treisman (1960) proposed a modification of the filter model designed to handle this problem. She assumed a filter that attenuated the informational content of an unattended input without eliminating it entirely. This model could predict the occasional intrusion of meaningful material from an unattended message. However, the same discrepant data that led Treisman to propose an attenuator instead of an all-or-none filter led other theorists in quite another direction. According to the late-selection view (e.g., Deutsch & Deutsch, 1963), perceptual processing operates in parallel and selection occurs after perceptual processing is complete (e.g., after identification), with capacity limitations arising only from later, response-related processes.
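The three accounts differ mainly in what happens to the unattended channel. The sketch below contrasts them under loudly stated assumptions: each account is reduced to a single gain applied to the unattended input, and a word is assumed to be identified when its effective signal reaches a threshold, with a lower threshold for highly significant words such as one’s own name (a simplification in the spirit of Treisman’s proposal). All gains and thresholds, and the names `GAINS`, `THRESHOLDS`, and `is_identified`, are hypothetical illustrations, not parameters from any of these studies.

```python
# Hypothetical channel gains for each account (illustrative values only).
# "filter":     Broadbent (1958), the unattended channel is blocked entirely.
# "attenuator": Treisman (1960), the unattended channel is weakened, not eliminated.
# "late":       Deutsch & Deutsch (1963), no perceptual filter; selection follows identification.
GAINS = {
    "filter": {"attended": 1.0, "unattended": 0.0},
    "attenuator": {"attended": 1.0, "unattended": 0.3},
    "late": {"attended": 1.0, "unattended": 1.0},
}

# Hypothetical identification thresholds: significant words (e.g., one's own
# name) are assumed to need less input than ordinary words.
THRESHOLDS = {"own_name": 0.2, "ordinary": 0.6}


def is_identified(model: str, channel: str, word_type: str, signal: float = 1.0) -> bool:
    """Return True if a word on the given channel reaches identification under `model`."""
    effective_signal = signal * GAINS[model][channel]
    return effective_signal >= THRESHOLDS[word_type]


if __name__ == "__main__":
    for model in GAINS:
        name = is_identified(model, "unattended", "own_name")
        ordinary = is_identified(model, "unattended", "ordinary")
        print(f"{model:>10}: own name {name}, ordinary word {ordinary}")
```

Looping over the three models with an unattended own name and an unattended ordinary word shows the qualitative pattern each account predicts: nothing gets through the all-or-none filter, only the low-threshold name gets through the attenuator, and everything is identified under late selection, where the limitation lies in later, response-related processes.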

After nearly three decades of intensive research and debate, recent reviews have suggested that the apparent controversy between the two views may stem from the fact that the empirical data supporting each of them have typically been drawn from different paradigms.

For instance, Yantis and Johnston (1990) noted that evidence favoring late selection was typically obtained with divided-attention paradigms (e.g., Duncan, 1980; Miller, 1982). These findings showed only that selection can occur after identification, not that it must. Yantis and Johnston (1990) set out to determine whether early selection is possible at all. By creating optimal conditions for focusing attention, they showed that subjects were able to ignore irrelevant distractors, thus demonstrating the perfect selectivity that is diagnostic of early selection. Yantis and Johnston proposed a hybrid model with a flexible locus for visual selection: an early locus when the task involves filtering out irrelevant objects, and a late locus, after identification, when the task requires processing multiple objects.

Kahneman and Treisman (1984) noted that whereas the early-selection approach initially gained the lion’s share of empirical support (e.g., von Wright, 1968), later studies presented mounting evidence in favor of the late-selection view (e.g., Duncan, 1980). They attributed this dichotomy to a change in paradigm that took place in the field of attention beginning in the late 1970s. Specifically, early studies used the filtering paradigm, in which subjects are typically overloaded with relevant and irrelevant stimuli and required to perform a complex task. Later studies used the selective-set paradigm, in which subjects are typically presented with few stimuli and required to perform a simple task. Thus, based on the observation that the conditions prevailing in the two types of study are very different, Kahneman and Treisman cautioned against any generalization across these paradigms.

Lavie and Tsal (1994) elaborated on this idea by proposing that perceptual load may determine the locus of selection. They showed that early selection is possible only under conditions of high perceptual load (viz., when the task at hand is demanding or when the number of different objects in the display is large), whereas results typical of late selection are obtained under conditions of low perceptual load. In other words, when the task is not demanding, the spare capacity that is unused by that task is automatically diverted to the processing of irrelevant stimuli.
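The load account can be expressed as a simple capacity-allocation rule. The following is a toy sketch, assuming a single fixed pool of capacity in arbitrary units and assuming that any capacity the relevant task leaves unused flows to the distractor automatically; the function name `distractor_processing` and the unit scale are hypothetical.

```python
def distractor_processing(task_load: float, capacity: float = 1.0) -> float:
    """Capacity left over for an irrelevant distractor under a toy load model.

    The relevant task draws what it needs first (up to the whole pool);
    whatever remains is assumed to spill over to the distractor, as the
    perceptual load account proposes. Units are arbitrary.
    """
    if capacity <= 0:
        raise ValueError("capacity must be positive")
    used_by_task = min(max(task_load, 0.0), capacity)
    return capacity - used_by_task
```

With the default capacity of 1.0, a low-load task (say, 0.3) leaves about 0.7 units for the distractor, which predicts the interference usually taken as evidence for late selection, whereas a task demanding 1.0 or more leaves nothing, which predicts the clean selectivity associated with early selection.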

The idea of a fixed locus of selection (whether early or late) implies a distinction between a preattentive stage, in which all information receives a preliminary but superficial analysis, and a subsequent attentive stage, in which only selected parts of the information receive further processing (Neisser, 1967). The preattentive stage has been further characterized as automatic (i.e., triggered by external stimulation), spatially parallel, and unlimited in capacity, whereas the attentive stage is controlled (i.e., guided by the observer’s goals and intentions), spatially restricted to a limited region, and limited in capacity. Within this framework, an important question becomes: To what extent are stimuli processed during the preattentive stage?

One implication of the proposed resolutions of the early-versus-late debate (Kahneman & Treisman, 1984; Lavie & Tsal, 1994; Yantis & Johnston, 1990) is that one cannot draw inferences from findings concerning the locus of selection to the question of how extensive preattentive processing is. That is, how efficient selection can be and what is accomplished during the preattentive stage are separate issues. For instance, the idea of a flexible locus of selection advanced by Yantis and Johnston (1990) implies that the level at which selection can be accomplished reflects task demands rather than intrinsic capacity limitations, and thus reveals nothing about preattentive processing. Similarly, the finding that perceptual load is a major determinant of selection efficiency (Lavie & Tsal, 1994) makes a useful methodological contribution: it shows that a failure of selectivity does not reveal how extensively unattended objects are processed, but may instead reflect the mandatory allocation of unused attentional resources to irrelevant objects.

Efficiency of Selection

Failures of Selectivity

Various factors affect the efficiency of attentional selection. As was mentioned earlier, Lavie and Tsal (1994) proposed that low perceptual load may impair selectivity because spare attentional resources are automatically allocated to irrelevant distractors. Similarly, grouping between target and distractors may impair attentional selectivity. Another case of selectivity failure is evident in the ability of certain known-to-be-irrelevant stimuli to capture attention automatically.

Effects of Grouping on Selection

The principles of perceptual organization articulated by the Gestalt psychologists at the beginning of the last century (e.g., proximity, similarity, good continuation) relate certain stimulus characteristics to the tendency to perceive certain parts of the visual field as belonging together, that is, as forming the same perceptual object. Kahneman and Henik (1981) considered the possibility that such grouping principles may impose strong constraints on visual selection, with attention selecting whole objects rather than unparsed regions of space. Since the early 1980s, this object-based view of selection has gained increasing empirical support from a variety of experimental paradigms.

Rock and Gutman (1981) showed object-specific attentional benefits in an early study. Subjects were presented with a sequence of 10 stimuli, each of which consisted of two overlapping outline drawings of novel shapes, one drawn in red and one in green. Thus, in each of the overlapping pairs, the two shapes occupied essentially the same overall location in space. Subjects were required to make aesthetic judgments concerning only those stimuli in one specific color (e.g., the red stimuli). At the end of the sequence, they were given a surprise recognition test. Subjects were much more likely to report attended items (those about which they had rendered aesthetic judgments) as old than to report unattended items as old. In fact, unattended items were as likely to be recognized as were new items.

This finding shows that attention can be directed to one of two spatially overlapping items. Note, however, that object-based selection was required by the task, which leaves open the possibility that object-based selection may not be mandatory. Moreover, the fact that the unattended stimulus was not recognized does not necessarily entail that it was not perceived; in particular, it may have been forgotten during the interval between presentation and the recognition test.

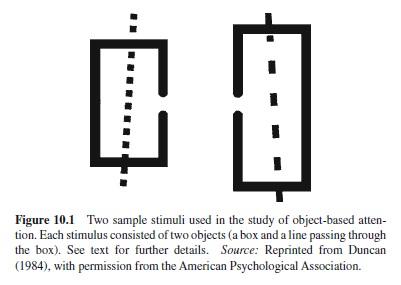

In a later article, Duncan (1984) explicitly laid out the distinction between space-based and object-based views of attention and tested them with a perceptual version of the Rock and Gutman (1981) memory task. In Duncan’s study, object-based selection was no more task relevant than space-based selection. Subjects were presented with displays containing two objects: an outline box and a line that was struck through the box (see Figure 10.1). The box was either short or tall, and had a gap on either its left or right side. The line was dashed or dotted and was slanted either to the right or to the left. Subjects were found to judge two properties of the same object as readily as one property. However, there was a decrement in performance when they had to judge two properties belonging to two different objects. These results showed a difficulty in dividing attention between objects that could not be accounted for by spatial factors, because the objects were superimposed in the same spatial region.

This very influential study has generated a whole body of research on object-based selection, although it has been criticized by several authors (e.g., Baylis & Driver, 1993). Later studies that overcame most of the problems associated with Duncan’s study also demonstrated a cost in dividing attention between two objects (e.g., Baylis & Driver, 1993; but see Davis, Driver, Pavani, & Shepherd, 2000, for a spatial interpretation of object-based effects obtained using divided-attention tasks).

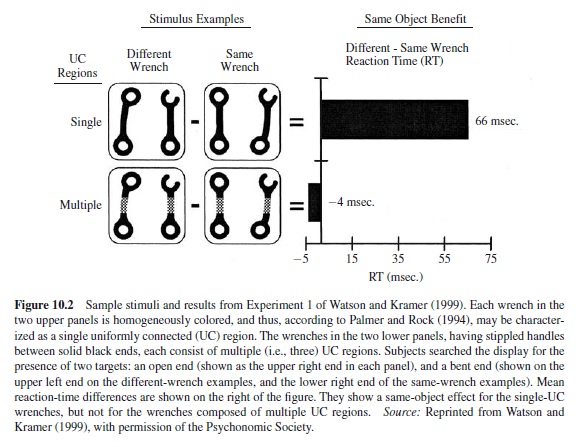

Recently, Watson and Kramer (1999) added an important contribution to this line of research by attempting to specify a priori the stimulus characteristics that define the objects upon which selection takes place. They proposed a framework that allows one to predict whether object-based effects will be found, depending on stimulus characteristics. Borrowing from Palmer and Rock’s (1994) theory of perceptual organization, they distinguished among three hierarchically organized levels of representation: (a) single, uniformly connected (UC) regions, defined as connected regions with uniform visual properties such as color or texture; (b) grouped-UC regions, which are larger representations made up of multiple single-UC regions grouped on the basis of Gestalt principles; and (c) parsed-UC regions, which are smaller representations segregated by parsing single-UC regions at points of concavity (e.g., the pinched middle of an hourglass is such a region of concavity; it permits parsing the hourglass into its two main parts, the upper and lower chambers).

They used complex familiar objects (pairs of wrenches) and had subjects identify whether one or two predefined target properties were present in these objects (see Figure 10.2). They examined under which conditions object-based effects (i.e., a performance cost for trials in which two targets belong to different wrenches rather than to the same wrench) could be obtained for each of the three representational levels. They found that (a) object-based effects are obtained when the to-be-judged object parts belong to the same single-UC region, but not when they belong to separate single-UC regions, and concluded that the default level at which selection occurs is the single-UC level; and (b) selection may occur at the grouped-UC level when it is beneficial to performing the task or when this level has been primed.

The finding that it is easier to divide attention between two properties when these belong to the same object suggests that perceptual organization affects the distribution of attention. Another empirical strategy used to reveal these effects is to show that subjects are unable to ignore distractors when these are grouped with the to-be-attended target (e.g., Banks & Prinzmetal, 1976). Other studies following this line of reasoning used the Eriksen response competition paradigm (Eriksen & Hoffman, 1973), in which distractors that flank the target and are associated with the incorrect response slow choice reactions to the target. These studies demonstrated that distractors grouped with the target (e.g., by common color or contour) slow responses more than do distractors that are not grouped with it, even when target-distractor distance is the same in the two conditions (Kramer & Jacobson, 1991).

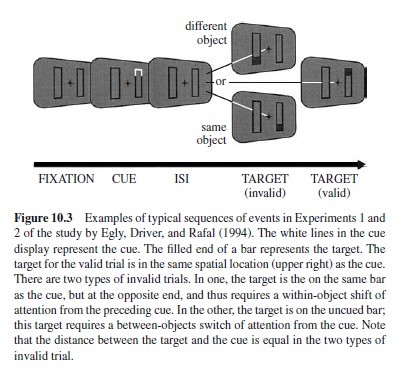

Perhaps the strongest support for the idea that attention selects perceptual groups rather than unparsed locations was provided by Egly, Driver, and Rafal’s (1994) spatial cueing study. Subjects had to detect a luminance change at one of the four ends of two outline rectangles (see Figure 10.3). One end was precued. On valid-cue trials, the target appeared at the cued end of the cued rectangle, whereas on invalid-cue trials, it appeared either at the uncued end of the cued rectangle, or in the uncued rectangle. The distance between the cued location and the location where the target appeared was identical in both invalid-cue conditions. On invalid-cue trials, targets were detected faster when they belonged to the same object as the cue, rather than to the other object. Several replications were reported, with detection (e.g., Lamy & Tsal, 2000; Vecera, 1994) as well as identification tasks (e.g., Lamy & Egeth, 2002; Moore, Yantis, & Vaughan, 1998).
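In this design the key comparison is between the two invalid conditions, because cue-to-target distance is equated across them. A minimal scoring sketch, assuming per-condition lists of correct-trial RTs in milliseconds; the condition labels and the function name `cueing_effects` are hypothetical, and any numbers passed in are the user’s own data, not values from the studies cited.

```python
from statistics import mean


def cueing_effects(rts):
    """Summarize an Egly, Driver, and Rafal (1994)-style two-rectangle cueing design.

    rts maps each condition to a list of correct-trial RTs (ms):
      "valid"              target at the cued end of the cued rectangle
      "invalid_same"       target at the uncued end of the cued rectangle
      "invalid_different"  target in the uncued rectangle, same distance from the cue
    """
    m = {condition: mean(values) for condition, values in rts.items()}
    return {
        # Validity cost within the cued object (attention must move away from the cue).
        "within_object_cost": m["invalid_same"] - m["valid"],
        # Object-based effect: extra cost for crossing into the other object,
        # with cue-to-target distance held constant.
        "object_effect": m["invalid_different"] - m["invalid_same"],
    }
```

A positive `object_effect` is the same-object advantage reported in these studies; a value near zero is the pattern expected if attention selected unparsed regions of space.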

Although some individual studies have been criticized or proved difficult to replicate and limiting conditions for object-based selection have been identified (Lamy & Egeth, in press; Watson & Kramer, 1999), the overall picture that emerges from this selective review is that the segmentation of the visual field into perceptual groups imposes constraints on attentional selection. It is important to note, however, that this conclusion does not necessarily imply that grouping processes are preattentive. Indeed, in all the studies surveyed above, at least one part of the relevant object (i.e., of the perceptual group for which object-based effects were measured) was attended. As a result, it remains possible that attending to one part of an object causes other parts of that object to be attended as well.

For this reason, a safer avenue to investigate whether grouping requires attention may be to measure grouping effects when the relevant perceptual group lies entirely outside the focus of attention. The studies pertaining to this issue will be discussed in the section on “Preattentive and Attentive Processing.”

Capture of Attention by Irrelevant Stimuli

Goal-directed or top-down control of attention refers to the ability of the observer’s goals or intentions to determine which regions, attributes, or objects will be selected for further visual processing. Most current models of attention assume that top-down selectivity is modulated by stimulus-driven (or bottom-up) factors, and that certain stimulus properties are able to attract attention in spite of the observer’s effort to ignore them. Several models, such as the guided search model of Cave and Wolfe (1990), posit that an item’s overall level of attentional priority is the sum of its bottom-up activation level and its top-down activation level. Bottom-up activation is a measure of how different an item is from its neighbors. Top-down activation (Cave & Wolfe, 1990) or inhibition (Treisman & Sato, 1990) depends on the degree of match between an item and the set of target properties specified by task demands. However, the relative weight allocated to each factor and the mechanisms responsible for this allocation are left largely unspecified. Curiously enough, no particular effort has been made to isolate the effects on visual search of bottom-up and top-down factors, which were typically confounded in the experiments held to support these theories (see Lamy & Tsal, 1999, for a detailed discussion). For instance, the fact that search for feature singletons is efficient has been demonstrated repeatedly (e.g., Egeth, Jonides, & Wall, 1972; Treisman & Gelade, 1980) and has been termed pop-out search (or parallel feature search). It is often assumed that this phenomenon reflects automatic capture of attention by the feature singleton. However, in typical pop-out search experiments, the singleton target is both task relevant and unique. Thus, it is not possible to determine in these studies whether efficient search stems from top-down factors, bottom-up factors, or both (see Yantis & Egeth, 1999).
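The additive priority rule described above can be sketched in a few lines. This is not Cave and Wolfe’s implementation; it is a toy version assuming categorical features, a bottom-up term equal to the proportion of other display items that differ from an item on each dimension (every item treated as every other item’s neighbor), and a top-down term equal to the number of task-specified target features the item matches. The function `priorities` and the weights `w_bottom_up` and `w_top_down` are hypothetical; the weights are exposed as parameters because, as noted above, the theories leave their relative values unspecified.

```python
def priorities(items, target, w_bottom_up=1.0, w_top_down=1.0):
    """Return one attentional-priority score per display item (toy guided-search rule).

    items:  list of dicts mapping feature dimensions to values,
            e.g. {"color": "green", "shape": "diamond"}; all items share the same dimensions.
    target: dict of task-specified target features, e.g. {"shape": "diamond"}.
    """
    scores = []
    for i, item in enumerate(items):
        others = [other for j, other in enumerate(items) if j != i]
        # Bottom-up: on each dimension, the share of other items with a different
        # value, summed over dimensions (a crude local-contrast measure).
        bottom_up = sum(
            sum(other[dim] != value for other in others) / max(len(others), 1)
            for dim, value in item.items()
        )
        # Top-down: how many of the target's defining features this item has.
        top_down = sum(item.get(dim) == value for dim, value in target.items())
        scores.append(w_bottom_up * bottom_up + w_top_down * top_down)
    return scores


# A display in the style described below: a shape-singleton target
# plus an irrelevant color singleton among homogeneous nontargets.
display = [
    {"color": "green", "shape": "diamond"},  # target
    {"color": "green", "shape": "circle"},
    {"color": "green", "shape": "circle"},
    {"color": "red", "shape": "circle"},     # irrelevant color singleton
]
```

With `target={"shape": "diamond"}`, the diamond receives the highest score. Setting `w_top_down=0` leaves the diamond and the red circle tied on bottom-up activation alone, which illustrates why displays in which the target is both relevant and unique cannot separate the two sources of priority.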

Recently, new paradigms have been designed that allow one to disentangle bottom-up and top-down effects more rigorously. The general approach has been to determine the extent to which top-down factors may modulate the ability of an irrelevant salient item to capture attention. Discontinuities, such as uniqueness on some dimension (e.g., color, shape, orientation) or abrupt changes in luminance, are typically used as the operational definition of bottom-up factors or stimulus salience. Based on the evidence that has accumulated in the last decade or so, two opposed theoretical proposals have emerged. Some authors have suggested that preattentive processing is driven exclusively by bottom-up factors such as salience, with a role for top-down factors only later in processing (e.g., M. S. Kim & Cave, 1999; Theeuwes, Atchley, & Kramer, 2000). Others have proposed that attentional allocation is always ultimately contingent on top-down attentional settings (e.g., Bacon & Egeth, 1994; Folk, Remington, & Johnston, 1992). A somewhat intermediate viewpoint is that pure, stimulus-driven capture of attention is produced only by the abrupt onset of new objects, whereas other salient stimulus properties do not summon attention when they are known to be irrelevant (e.g., Jonides & Yantis, 1988). Several sets of findings have shaped the current state of the literature on how bottom-up and top-down factors affect attentional priority.

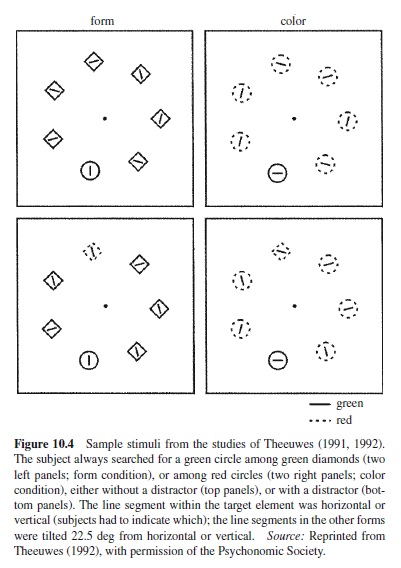

Beginning in the early 1990s, Theeuwes (e.g., 1991, 1992; Theeuwes et al., 2000) carried out several experiments suggesting that attention is captured by the element with the highest bottom-up salience in the display, regardless of whether this element’s salient property is task relevant. Capture was measured as slower performance in parallel search when an irrelevant salient object was present. For instance, Theeuwes (1991, 1992) presented subjects with displays consisting of varying numbers of colored circles and diamonds arranged on the circumference of an imaginary circle (see Figure 10.4). A line segment varying in orientation appeared inside each item, and subjects were required to determine the orientation of the line segment within a target item. In one condition, the target item was defined by its unique form (e.g., it was the single green diamond among green circles). In another condition, it was defined as the color singleton (e.g., it was the single red square among green squares). On half of the trials, a distractor that was unique on an irrelevant dimension was also present. For instance, when the target item was a green diamond among green circles, a red circle was present. Theeuwes (1991) found that the presence of the irrelevant singleton slowed reaction times (RTs) significantly. However, this effect occurred only when the irrelevant singleton was more salient than the singleton target, suggesting that items are selected in order of salience. In a later study, Theeuwes (1992) reported distraction effects even when the target’s unique feature value was known (see Pashler, 1988a, for an earlier report of this effect). Theeuwes concluded that when subjects are engaged in a parallel search, perfect top-down selectivity based on stimulus features (e.g., red or green) or stimulus dimensions (e.g., shape or color) is not possible.

Bacon and Egeth (1994) questioned this conclusion. Using a distinction initially suggested by Pashler (1988a), they proposed that in Theeuwes’s (1992) experiment, two search strategies were available: (a) singleton detection mode, in which attention is directed to the location with the largest local feature contrast, and (b) feature search mode, which entails directing attention to items possessing the target visual feature. Indeed, the target was defined as being a singleton and as possessing the target attribute. If subjects used singleton detection mode, both relevant and irrelevant singletons could capture attention, depending on which exhibited the greatest local feature contrast. To test this hypothesis, Bacon and Egeth (1994) designed conditions in which singleton detection mode was inappropriate for performing the task. As a result, the disruption caused by the unique distractor disappeared. They concluded that irrelevant singletons may or may not cause distraction during parallel search for a known target, depending on the search strategy employed.
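The two strategies can be made concrete as two different orders in which display items are inspected. The sketch below assumes, purely for illustration, a serial read-out in which singleton detection mode visits items in descending order of local feature contrast, whereas feature search mode restricts candidates to items bearing the known target feature. The function name, the salience values, and the serial read-out itself are hypothetical simplifications, not Bacon and Egeth’s model.

```python
def items_inspected_before_target(saliences, has_target_feature, target_index, mode):
    """Count the items attended before the target under a hypothetical serial read-out.

    saliences:          local feature contrast of each item (arbitrary units).
    has_target_feature: whether each item carries the known target feature.
    mode:               "singleton" (singleton detection mode) or "feature" (feature search mode).
    Raises ValueError if the target is not among the candidates for the chosen mode.
    """
    indices = range(len(saliences))
    if mode == "singleton":
        # Attention goes to the largest local contrast first, whatever
        # feature makes an item stand out.
        order = sorted(indices, key=lambda i: saliences[i], reverse=True)
    elif mode == "feature":
        # Only items carrying the target feature are candidates;
        # among them, more salient items are visited first.
        candidates = [i for i in indices if has_target_feature[i]]
        order = sorted(candidates, key=lambda i: saliences[i], reverse=True)
    else:
        raise ValueError(f"unknown mode: {mode!r}")
    return order.index(target_index)
```

With a shape-singleton target of contrast 0.5 (index 0) and a color-singleton distractor of contrast 0.9, singleton detection mode inspects the distractor first (one item before the target), which corresponds to capture, while feature search mode goes straight to the target (zero items before it). If the distractor is made less salient than the target, singleton detection mode no longer shows capture either, in line with Theeuwes’s observation that interference arose only when the irrelevant singleton was the more salient of the two.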

Another set of experiments revealed that abrupt onsets do produce involuntary attentional capture (Hillstrom & Yantis, 1994; Jonides & Yantis, 1988), whereas feature singletons on dimensions such as color and motion do not (e.g., Jonides & Yantis, 1988). These authors concluded that (a) abrupt onsets are unique in their ability to summon attention to their location automatically, and (b) feature singletons do not capture attention when they are task irrelevant.

The idea that the ability of a salient stimulus to capture attention depends on top-down settings (specifically, on whether subjects use singleton detection mode or feature search mode) is consistent with the contingent attentional capture hypothesis (e.g., Folk et al., 1992). According to this theory, attentional capture is ultimately contingent on whether a salient stimulus property is consistent with top-down attentional control settings. The settings are assumed to reflect current behavioral goals determined by the task to be performed. Once the attentional system has been configured with appropriate control settings, a stimulus property that matches the settings will produce “on-line” involuntary capture to its location. Stimuli that do not match the top-down attention settings will not capture attention.

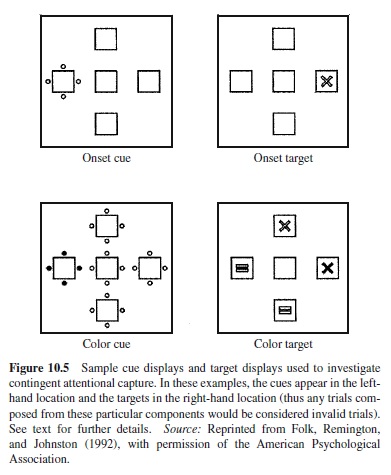

Folk et al. (1992) provided support for this claim using a novel spatial cuing paradigm. In Experiment 3, for instance, subjects saw a cue display followed by a target display (see Figure 10.5). They were required to decide whether the target was an “x” or an “=” sign. The target was defined either as a color singleton target (e.g., the single red item among white items) or as an onset target (i.e., a unique abruptly onset item in the display). Two types of distractors were used. A color distractor consisted of four colored dots surrounding a potential target location, and arrays of white dots surrounded the remaining three locations. An onset distractor consisted of a unique array of four white dots surrounding one of the potential target locations. The two distractor types were factorially combined with the two target types, with each combination presented in a separate block. The locations of the distractor and target were uncorrelated. The authors reasoned that if a distractor were to capture attention, a target sharing its location would be identified more rapidly than a target appearing at a different location. Thus, they measured capture as the difference in performance between conditions in which distractors appeared at the target location versus nontarget locations. The question was whether capture would depend on the match between the salient property of the distractor and the property defining the target. The results showed that it did: Whereas capture was found when the distractor and target shared the same property, virtually no capture was observed when they were defined by different properties.
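The capture measure used here is a simple difference score computed separately for each distractor/target combination. A minimal sketch, assuming trials arrive as `(block, same_location, rt_ms)` tuples with at least one trial in each location cell of every block; the tuple layout, the block labels, and the function name `capture_by_block` are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def capture_by_block(trials):
    """Cueing (capture) effect per block, in the spirit of Folk, Remington, and Johnston (1992).

    trials: iterable of (block, same_location, rt_ms) tuples. `block` names the
    distractor/target combination; `same_location` says whether the distractor
    appeared at the target's location on that trial.
    Capture = mean RT when the distractor was elsewhere minus mean RT when it was
    at the target location; a positive value means the distractor drew attention.
    """
    cells = defaultdict(lambda: {True: [], False: []})
    for block, same_location, rt in trials:
        cells[block][bool(same_location)].append(rt)
    # mean() raises statistics.StatisticsError if a cell is empty.
    return {
        block: mean(by_location[False]) - mean(by_location[True])
        for block, by_location in cells.items()
    }
```

Under the contingent-capture hypothesis, the scores should be clearly positive in blocks where distractor and target share their defining property (for example, color distractor with color target) and near zero in the mismatched blocks.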

The foregoing discussion of attentional capture suggests that the conditions under which involuntary capture occurs remain controversial. Studies that reached incompatible conclusions usually differed in numerous procedural details. For instance, Folk (e.g., Folk et al., 1992) and Yantis (e.g., Yantis, 1993) disagree on what status should be assigned to new (or abruptly onset) objects. Yantis claims that abrupt onsets capture attention irrespective of the observer’s intentions, whereas Folk argues that involuntary capture by abrupt onsets happens only when subjects are set to look for onset targets. Note, however, that Yantis’s experiments typically involved a difficult search, for instance, one in which the target was a specific letter among distracting letters (e.g., Yantis & Jonides, 1990) or a line differing only slightly in orientation from surrounding distractors (e.g., Yantis & Egeth, 1999). In contrast, Folk’s subjects typically searched for, say, a red target among white distractors, that is, for a target that differed sharply from the distractors on a simple dimension (e.g., Folk et al., 1992). Thus, the two groups of studies differed in how much top-down guidance was available to find the target. This factor may account for the better selectivity obtained in Folk’s studies. Further research is needed to settle this issue.

The main point of agreement seems to be that an irrelevant feature singleton will not capture attention automatically when the task does not involve searching for a singleton target. This finding has been obtained using three different paradigms, under which attentional capture was gauged using different measures: a difference between distractor-present versus distractor-absent trials (Bacon & Egeth, 1994); a difference between trials in which the target and cue occupy the same versus different locations in spatial cueing tasks (e.g., Folk et al., 1992); and the difference between trials in which the target and salient item do versus do not coincide (e.g., Yantis & Egeth, 1999). Although most of the evidence provided by Theeuwes (e.g., 1992) for automatic capture was drawn from studies in which the target was a singleton, his position on whether capture occurs when the target is not a singleton is not entirely clear (see, e.g., Theeuwes & Burger, 1998).

Note, however, that in the current state of the literature, the implied distinction between singleton detection mode, in which any salient distractor will capture attention, and feature search mode, in which only singletons sharing a task-relevant feature will capture attention, suffers from two problems.

First, it rests on the as-yet-untested assumption that the singleton detection mode of processing is faster or less cognitively demanding than the feature search mode. Indeed, one observes that subjects will use the feature search mode only if the singleton detection mode is not an option. For instance, when the strategy of searching for the odd one out is not available (e.g., Bacon & Egeth, 1994, Experiments 2 & 3), an irrelevant singleton does not capture attention. However, the same irrelevant singleton does capture attention when subjects search for a singleton target with a known feature (e.g., Bacon & Egeth, 1994, Experiment 1). Capture by the irrelevant singleton occurs despite the fact that using the singleton detection mode will tend to guide attention first toward a salient nontarget on 50% of the trials (or even on 100% of the trials; see M. S. Kim & Cave, 1999), whereas using the feature search mode will tend to guide attention directly to the target on 100% of the trials. The intuitive explanation for the fact that subjects use a strategy that is nominally less efficient is that the singleton detection processing mode itself must be structurally more efficient. Yet no study to date has put this assumption to the test.

Second, in studies in which subjects must look for a unique target with a known feature, there is often an element of circularity in inferring from the data which processing mode subjects use. Indeed, if an irrelevant singleton captures attention, then the conclusion is that subjects used the singleton detection mode. If, in contrast, no capture is observed, the conclusion is that they used the feature search mode. However, the factors that induce subjects to use one mode rather than the other when both modes are available remain unspecified.

Selection by Location and Other Features

The foregoing section was concerned with factors that limit selectivity. We now turn to the mechanisms underlying the different ways in which attention can be directed toward to-be-selected (relevant) areas or objects.

Selection by Location

“Attention is quite independent of the position and accommodation of the eyes, and of any known alteration in these organs; and free to direct itself by a conscious and voluntary effort upon any selected portion of a dark and undifferenced field of view” (von Helmholtz, 1871, p. 741, quoted by James, 1890/1950, p. 438). Since this initial observation was made, a large body of research has investigated people’s ability to shift the locus of their attention to extra-foveal loci without moving their eyes (e.g., Posner, Snyder, & Davidson, 1980), a process called covert visual orienting (Posner, 1980).

Covert visual orienting may be controlled in one of two ways: by peripheral (or exogenous) cues or by central (or endogenous) cues. Peripheral cues traditionally involve abrupt changes in luminance (usually abrupt object onsets), which on a certain proportion of the trials appear at or near the location of the to-be-judged target. With central cues, knowledge of the target’s location is provided symbolically, typically in the center of the display (e.g., an arrow pointing to the target location). Numerous experiments have shown that detection and discrimination of a target displayed shortly after the cue are better on valid trials, that is, when the target appears at the same location as the cue (peripheral cues) or at the location specified by the cue (central cues), than on invalid trials, in which the target appears at a different location. Some studies also include neutral or no-cue trials, in which none of the potential target locations is primed (but see Jonides & Mack, 1984, for problems associated with the choice of neutral cues). Neutral trials typically yield intermediate levels of performance. Peripheral and central cues have been compared along two main avenues.
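Because neutral trials serve as the baseline, cueing effects are usually decomposed into a benefit (neutral minus valid) and a cost (invalid minus neutral). The sketch below shows that arithmetic on mean correct reaction times; the function name and the RT values are hypothetical, chosen only for illustration, and the decomposition is only as meaningful as the chosen neutral cue (the Jonides & Mack caveat above).

```python
from statistics import mean

def cueing_effects(rts):
    """Return (benefit, cost, validity effect) in ms from lists of correct RTs.

    rts maps "valid", "neutral", and "invalid" to lists of reaction times.
    """
    valid = mean(rts["valid"])
    neutral = mean(rts["neutral"])
    invalid = mean(rts["invalid"])
    benefit = neutral - valid    # speed-up relative to the neutral baseline
    cost = invalid - neutral     # slow-down relative to the neutral baseline
    return benefit, cost, invalid - valid

# Hypothetical mean RTs (ms) for one subject; not data from any cited study.
example = {
    "valid": [312, 298, 305],
    "neutral": [330, 325, 335],
    "invalid": [361, 370, 352],
}
print(cueing_effects(example))
```

The overall validity effect (invalid minus valid) does not depend on the neutral condition, which is one reason many studies report it alone.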

Some studies have focused on differences in the way attention is oriented by each type of cue. The results from this line of research have suggested that peripheral cues capture attention automatically (but see the earlier section, “Capture of Attention by Irrelevant Stimuli,” for a discussion of this issue), whereas attentional orienting following a central cue is voluntary (e.g., Müller & Rabbitt, 1989; Nakayama & Mackeben, 1989). Moreover, attentional orienting to the cued location was found to be faster with peripheral cues than with central cues. For instance, in Müller and Rabbitt’s (1989) study, subjects had to find a target (T) among distractors (+) in one of four boxes located around fixation. The central cue was an arrow at fixation, pointing to one of the four boxes. The peripheral cue was a brief increase in the brightness of one of the boxes. With peripheral cues, costs and benefits grew rapidly and reached their peak magnitudes at cue-to-target stimulus onset asynchronies (SOAs) in the range of 100–150 ms. With central cues, maximum costs and benefits were obtained for SOAs of 200–400 ms.

Other studies have focused on differences in information processing that occur as a consequence of the allocation of attention by peripheral versus central cues. Two broad classes of mechanisms have been proposed to describe the effects of spatial cues. According to the signal enhancement hypothesis (e.g., Henderson, 1996), attention strengthens the stimulus representation by allocating the limited capacity available for perceptual processing. In other words, attention facilitates perceptual processing at the cued location. According to the uncertainty or noise reduction hypothesis (e.g., Palmer, Ames, & Lindsay, 1993), spatial cues allow one to exclude distractors from processing by monitoring only the relevant location rather than all possible ones. Thus, cueing attention to a specific location reduces statistical uncertainty or noise effects, which stem from information loss and decision limits, not from changes in perceptual sensitivity or limits of information-processing capacity.

In order to test the two hypotheses against each other, several investigators have sought to determine whether spatial cueing effects would be observed when the target appears in an otherwise empty field. The signal enhancement hypothesis predicts such effects, as the allocation of attentional resources at the cued location should facilitate perceptual processing at that location, even in the absence of noise. In contrast, the noise reduction hypothesis predicts no cueing effects with single-element displays, because no spatial uncertainty or noise reduction should be required in the absence of distractors.
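The contrasting predictions can be made concrete with a small Monte Carlo sketch. It assumes a Gaussian signal detection observer in a two-interval detection task who picks the interval with the larger maximum over the locations it monitors (a max rule). "Noise reduction" is modeled as monitoring only the cued location; "enhancement" as multiplying the target's d′ by a gain while still monitoring every location. The d′ of 1.0 and gain of 1.5 are arbitrary assumptions, not values from the studies cited.

```python
import random

def simulate_2ifc(n_locations, d_prime, monitored, trials=20000, seed=1):
    """Proportion correct in a two-interval forced-choice detection task.

    The target (mean d_prime) is at location 0 in one interval; all other
    locations in both intervals hold unit-variance Gaussian noise. The
    observer chooses the interval whose maximum over the first `monitored`
    locations is larger (max rule).
    """
    rng = random.Random(seed)
    correct = 0
    for _ in range(trials):
        signal = [rng.gauss(0, 1) for _ in range(n_locations)]
        signal[0] += d_prime
        blank = [rng.gauss(0, 1) for _ in range(n_locations)]
        if max(signal[:monitored]) > max(blank[:monitored]):
            correct += 1
    return correct / trials

def predictions(n_locations, d_prime=1.0, gain=1.5):
    """Accuracy without a cue and under each hypothetical cueing mechanism."""
    return {
        "no_cue": simulate_2ifc(n_locations, d_prime, monitored=n_locations),
        # Noise reduction: monitor only the cued location, sensitivity unchanged.
        "noise_reduction": simulate_2ifc(n_locations, d_prime, monitored=1),
        # Enhancement: higher sensitivity at the cued location, all locations monitored.
        "enhancement": simulate_2ifc(n_locations, d_prime * gain, monitored=n_locations),
    }

for n in (1, 4):
    print(n, predictions(n))
```

With a single element, the noise reduction model yields exactly the uncued accuracy, whereas enhancement still improves performance; with four elements both mechanisms produce a cueing benefit.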

This line of research has generated conflicting findings, with reports of small effects (Posner, 1980), significant effects (e.g., Henderson, 1991), or no effect (e.g., Shiu & Pashler, 1994). Relatively subtle methodological differences have turned out to play a crucial role. For instance, Shiu and Pashler (1994) criticized earlier single-target studies (Henderson, 1991) on the grounds that the masks presented at each potential location after the target display may have been confusable with the target, thus making the precue useful in reducing the noise associated with the masks. They compared a condition in which masks were presented at all potential locations with a condition in which a single mask appeared at the target location. Precue effects were found only in the former condition, supporting the idea that they reflect noise reduction rather than perceptual enhancement. However, more recent evidence has shown that spatial cueing effects can be found with a single target and mask, and that they are larger with additional distractors or masks. These findings suggest that attentional allocation by spatial precues leads both to signal enhancement at the cued location and to noise reduction (e.g., Cheal & Gregory, 1997; Henderson, 1996).

Most of the reviewed studies employed informative peripheral cues, which precludes the possibility of determining whether the observed effects of attentional facilitation should be attributed to the exogenous or to the endogenous component of attentional allocation, or to both. Studies that employed non-informative peripheral cues (Henderson, 1996; Luck & Thomas, 1999) showed that these lead to both perceptual enhancement and noise reduction. Recently, Lu and Dosher (2000) directly compared the effects of peripheral and central cues and reported results suggesting a noise reduction mechanism of central precueing and a combination of noise reduction and signal enhancement for peripheral cueing.

To conclude, the current literature points to notable differences in the way attention is oriented by peripheral vs. central cues, as well as differences in information processing when attention is directed by one type of spatial cue vs. the other.

Is Location Special?

The idea that location may deserve a special status in the study of attention has generated a considerable amount of research, and the origins of this debate can be traced back to the notion that attention operates as a spotlight (e.g., Broadbent, 1982; Eriksen & Hoffman, 1973; Posner et al., 1980), which has had a major influence on attention research. According to this model, attention can be directed only to a small contiguous region of the visual field. Stimuli that fall within that region are extensively processed, whereas stimuli located outside that region are ignored. Thus, the spotlight model, as well as models based on similar metaphors such as zoom lenses (e.g., Eriksen & Yeh, 1985) and gradients (e.g., Downing & Pinker, 1985; LaBerge & Brown, 1989), endows location (or space) with a central role in the selection process. Later theories whose assumptions depart markedly from spotlight theories also assign an important role to location in visual attention (see Schneider, 1993, for a review). These include, for instance, Feature Integration Theory (Treisman & Gelade, 1980), the Guided Search model (Cave & Wolfe, 1990; Wolfe, 1994), van der Heijden’s model (1992, 1993), and the FeatureGate model (Cave, 1999).

A comprehensive survey of the debate on whether or not location is special is beyond the scope of the present endeavor (see, for instance, Cave & Bichot, 1999; Lamy & Tsal, 2001, for reviews of this issue). Here, we touch on two aspects of this debate that pertain to the efficiency of selection. First, we briefly review the studies in which selectivity using spatial vs. non-spatial cues is compared. Then, the idea that selection is always ultimately mediated by space, which entails that selection by location is intrinsically more direct, is contrasted with the notion that attention selects space-invariant object-based representations.

Selection by Features Other Than Location. Numerous studies have shown that advance knowledge about a non-spatial property of an upcoming target can improve performance (e.g., Carter, 1982). Results arguing against the idea that attention can be guided by properties other than location are typically open to alternative explanations (see Lamy & Tsal, 2001, for a review). For instance, Theeuwes (1989) presented subjects with two shapes that appeared simultaneously on each side of fixation. The target was defined as the shape containing a line segment, whereas the distractor was the empty shape. Subjects responded to the line’s orientation. The target was cued either by the form of the shape within which it appeared or by its location. Validity effects were obtained with the location cue but not with the form cue. The author concluded that advance knowledge of form cannot guide attention. Note, however, that it may have been easier for subjects to look for the filled shape, that is, to use the “defining attribute” (Duncan, 1985), than to use the form cue. In this case, subjects may simply not have used the cue, which would explain why it had no effect. According to this logic, using the location cue was easier than looking for the filled shape, but looking for the filled shape was easier than using the form cue. Thus, whereas Theeuwes’s finding indicates that location cueing may be more efficient than form cueing, it does not preclude the possibility that form cues may effectively guide attention when no other, more efficient strategy is available.

Whereas it is generally agreed that spatial cueing is more efficient than cueing by other properties, there has been some debate as to whether qualitative differences exist between attentional allocation using one type of cue vs. the other (e.g., Duncan, 1981; Tsal, 1983). It seems that non-spatial cues differ from peripheral spatial cues in that they only prioritize the elements possessing the cued property rather than improving their perceptual representation. Moore and Egeth (1998) recently presented evidence showing that “feature-based attention failed to aid performance under ‘data-limited’ conditions (i.e., those under which performance was primarily affected by the sensory quality of the stimulus), but did affect performance under conditions that were not data-limited.” Moreover, in several physiological studies that compared the event-related potentials (ERPs) elicited by stimuli attended on the basis of location vs. other features, a qualitatively different pattern of activity was found for the two types of cues, which was taken to indicate that selection by location may occur at an earlier stage than selection by other properties (e.g., Hillyard & Münte, 1984; Näätänen, 1986).

Is Selection Mediated by Space? The idea that selection is always ultimately mediated by space, as is assumed in numerous theories of attention, has been challenged by research showing that attention is paid to space-invariant object-based representations rather than to spatial locations. Studies favoring the space-based view typically manipulated only spatial factors. The reasoning was that if spatial effects can be found when space is task irrelevant, then selection must be mediated by space, and does not therefore operate on space-invariant representations. In contrast, in studies supporting the space-invariant view, spatial factors were usually kept constant and objects were separated from their spatial location via motion. In spite of intensive investigation, no consensus has yet emerged.

It is important to note that the body of research on the effects of Gestalt grouping on the distribution of attention, reviewed earlier, is not relevant here. The two issues are often conflated under the umbrella term “object-based selection.” However, whether attention selects spatial or spatially invariant representations concerns the medium of selection, whereas effects of grouping on attention speak to the efficiency of selection (see Lamy & Tsal, 2001; Vecera, 1994; Vecera & Farah, 1994, for further explication of this distinction).

One of the most straightforward methods used to investigate whether selection is fundamentally spatial is to have subjects attend to an object that happens to occupy a certain location in a first display and then attend to a different object occupying either the same or a different location in a subsequent display. With this procedure, sometimes referred to as the “post-display probe technique” (e.g., Kramer, Weber, & Watson, 1997), an advantage in the same-location condition is taken to support the idea that selection is space-based. The crux of this method is that it shows spatial effects in tasks where space is utterly irrelevant to the task at hand. For instance, Tsal and Lavie (1993, Experiment 4) showed that when subjects had to attend to the color of a dot (its location being task irrelevant), they responded faster to a subsequent probe when it appeared in the location previously occupied by the attended dot than in the alternative location (see M. S. Kim & Cave, 1995, for similar results).

Following a related rationale, other authors used rapid serial visual presentation (RSVP) tasks (e.g., McLean, Broadbent, & Broadbent, 1983) or partial report tasks (e.g., Butler, Mewhort, & Tramer, 1987) and showed that when subjects have to report an item with a specific color, near-location errors are the most frequent. In the same vein, Tsal and Lavie (1988) showed that when required to report one letter of a specified color and then any other letters they could remember from a visual display, subjects tended to report letters adjacent to the first-reported letter more often than letters of the same (relevant) color (see van der Heijden, Kurvink, de Lange, de Leeuw, & van der Geest, 1996, for a criticism and Tsal & Lamy, 2000, for a response). These results suggest that selecting an object by any of its properties is mediated by a spatial representation.

Other investigators attempted to demonstrate that selection is mediated by space by showing effects of distance on attention. In early studies, interference was found to be reduced as the distance between target and distractors increased (e.g., Gatti & Egeth, 1978). Attending to two stimuli was also found to be easier when these were close together rather than distant from each other (e.g., Hoffman & Nelson, 1981). More recent studies showed that distance modulates same- vs. different-object effects, as the difficulty in attending to two objects increases with the distance between these objects (e.g., Kramer & Jacobson, 1991; Vecera, 1994; see Vecera & Farah, 1994, for a failure to find distance effects on object selection, and Kramer et al., 1997, and Vecera, 1997, for a discussion of these results). There is some contrary evidence, suggesting that performance gets better as the separation between attended elements increases (e.g., Bahcall & Kowler, 1999; Becker, 2001), and still other findings show that performance is unaffected by the separation between attended stimuli (e.g., Kwak, Dagenbach, & Egeth, 1991).

The experimental strategy of manipulating distance to demonstrate that selection is mediated by space has been criticized on several grounds. For instance, distance effects in divided attention tasks may only reflect the effects of grouping by proximity. That is, when brought closer together, two objects may be perceived as a higher-order object (e.g., Duncan, 1984). Accordingly, distance effects are attributed to effects of grouping on the distribution of attention and say nothing about whether or not the medium of attention is spatial. In tasks involving a shift of attention over small vs. large distances, the assumption underlying the use of a distance manipulation is that attention moves in an analog fashion through visual space, the time needed for attention to move from one location to another being proportional to the distance between them. However, this assumption may be unwarranted (e.g., Sperling & Weichselgartner, 1995).

Support for the Space-Invariant View. Whether attention may select from space-invariant object-based representations has been investigated by separating objects from their locations via motion. Kahneman, Treisman, and Gibbs (1992) found that the focusing of attention on an object selectively activates the recent history of that object (i.e., its previous states) and facilitates recognition when the current and previous states of the object match. They found this matching process, called “reviewing,” to be successful only when the objects in the preview and probing displays shared the same “object-file,” namely, when one object was perceived to move smoothly from one display to the other. This finding is typically taken to show that attention selects object-files, that is, representations that maintain their continuity in spite of location changes (e.g., Kanwisher & Driver, 1992).

Further support for the idea that attention operates in object-based coordinates comes from experiments by Tipper and his colleagues. They used the inhibition of return paradigm (e.g., Tipper, Weaver, Jerreat, & Burak, 1994) and the negative priming paradigm (Tipper, Brehaut, & Driver, 1990), as well as measurements of the performance of neglect patients (Behrmann & Tipper, 1994). Inhibition of return studies show that it is more difficult to return one’s attention to a previously attended location. (Immediately after a spatial location is cued, a stimulus is relatively easy to detect at the cued location. However, after a cue-target SOA of about 300 ms, target detection is relatively difficult at the cued location. This is known as inhibition of return.) Negative priming experiments demonstrate that people are slower to respond to an item if they have just ignored it. Finally, the neurological disorder called unilateral neglect is characterized by the patients’ failure to respond or orient to stimuli on the side contralateral to a lesion. Although early studies suggested that all three phenomena are associated with spatial locations (e.g., Posner & Cohen, 1984; Tipper, 1985; and Farah, Brunn, Wong, Wallace, & Carpenter, 1990, respectively), recent studies using moving displays showed that the attentional effects revealed by each of these experimental methods can be associated with object-centered representations.

Lamy and Tsal (2000, Experiment 3) used a variant of Egly et al.’s (1994) task. Subjects had to detect a target at one of the four ends of two objects that differed in color and shape. A precue appeared at one of the four ends and indicated the location where the target was most likely to appear. To dissociate the cued object from its location, on half of the trials the two objects exchanged locations by moving smoothly between the cueing and target displays. Reaction times were faster at the uncued location within the cued object than at an equally distant location within the uncued object, indicating that attention followed the cued object-file.

Conclusions. To summarize, in studies that measured only space-based effects using either the distance manipulation or the post-display probe technique, it was typically found that selection is mediated by space. In studies that measured the cost of redirecting attention to the same vs. a different object-file using moving objects while keeping spatial factors constant, attention was typically found to follow the object initially attended as it moved. Note that the strongest support for the view that selection is mediated by space comes from studies in which response to a new object was found to be faster if this object occupied the location of a previously attended object even when space was irrelevant to the task. Thus, in these studies, the object initially attended was no longer present in the subsequent display, where attentional effects were measured: A different object typically replaced it. Such findings may therefore only indicate that space-based selection prevails when the task is such that object continuity is systematically disrupted. In other words, selection may be space-based only under this specific condition, which does not abound in a natural environment.

On the other hand, support for the idea that selection operates on space-invariant representations of objects comes from studies showing that attending to an object entails that attentional effects remain associated with this object as it moves. However, space and object-file effects may not be as antithetical as is usually assumed. Finding that attention follows the cued object-file as it moves does not necessarily argue against the idea that selection is mediated by space. Attention may simply accrue to the locations successively occupied by the moving object (e.g., Becker & Egeth, 2000). As yet, no empirical data have been reported that preclude this possibility.

Preattentive and Attentive Processing

As was mentioned in the introductory part of this research paper, inquiring which processes are not contingent on capacity limitations for their execution amounts to inquiring which processes are preattentive, that is, do not require attention. “What does the preattentive world look like? We will never know directly, as it does not seem that we can inquire about our perception of a thing without attending to that thing” (Wolfe, 1998, p. 42). Therefore, it takes ingenious experimental designs to investigate the extent to which unattended portions of the visual field are processed.

Two general empirical strategies have traditionally been used to address this question, and they differ somewhat in the definition of “preattentiveness” they adopt. In some paradigms (e.g., visual search), whatever processes do not require focused attention and can be performed in parallel with attention widely distributed over the visual field are considered to be preattentive. In other paradigms (e.g., dual task), preattentive processes are those processes that can proceed without attention, that is, when attentional resources are exhausted by some other task. As we shall see, interpreting results pertaining to preattentive processing has proved to be tricky.

Distributed Attention Paradigms

Visual Search

In a standard visual search experiment, the subject might be asked to indicate whether a specified target is present or absent, or which of two possible targets is present among an array of distractors. The total number of items in the display, known as the set size or display size, usually varies from trial to trial. The target is typically present on 50% of the trials, the display containing only distractors on the remaining trials. On each trial, subjects have to judge whether a target is present. In studies measuring reaction times, the search display remains visible until subjects respond. Of chief interest is the way reaction times vary as a function of set size on target-present and target-absent trials. In studies measuring accuracy, search displays are presented briefly and then masked. Accuracy can be plotted as a function of set size to reveal the processes underlying search. A common alternative approach is to determine the exposure duration (typically, the asynchrony between the onsets of the search display and of a subsequent masking display) required to achieve some fixed level of accuracy (e.g., 75% correct).
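One simple way to estimate the exposure duration needed for a fixed accuracy level is to interpolate linearly between the tested SOAs. The sketch below assumes sorted SOAs and uses invented accuracy values for two set sizes; many laboratories instead fit a psychometric function, so linear interpolation here is a simplifying assumption, and `threshold_soa` is a hypothetical helper name.

```python
def threshold_soa(soas, accuracies, criterion=0.75):
    """Linearly interpolate the SOA (ms) at which accuracy first reaches criterion.

    Assumes soas are sorted in ascending order. Returns None if the
    criterion is never reached within the tested range.
    """
    points = list(zip(soas, accuracies))
    if points and points[0][1] >= criterion:
        return float(points[0][0])
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        if y0 < criterion <= y1:
            return x0 + (criterion - y0) * (x1 - x0) / (y1 - y0)
    return None

# Hypothetical accuracy data (proportion correct) at each display-mask SOA.
soas = [40, 80, 120, 160]
set_size_4 = [0.55, 0.65, 0.80, 0.90]
set_size_8 = [0.50, 0.58, 0.70, 0.82]
print(threshold_soa(soas, set_size_4), threshold_soa(soas, set_size_8))
```

If search requires attention, the threshold should grow with set size, as it does in these made-up numbers.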

If finding the target (i.e., distinguishing it from the distractors) involves processes that do not require attention and are performed in parallel over the whole display, one expects to observe parallel search. With studies measuring reaction time, this means that the number of distractors present in the display should not affect performance; with studies measuring accuracy, this means that beyond a relatively short SOA, increasing the time available to inspect the display should not improve performance. Thus, parallel search is held to be diagnostic of preattentive (i.e., parallel, resource-free) processing. If, in contrast, distinguishing the target from the distractors involves processes that do require attention, then attention must be directed to the items one at a time (or perhaps to one subset of them at a time), until the target is found. In this case, the time required to find the target increases as the number of distractors increases. Moreover, if search is terminated as soon as the target is found, the target should be found, on average, halfway through the search process. Thus, search slopes for target-absent trials should be twice as large as for target-present trials (Sternberg, 1969). In studies measuring accuracy, if search requires attention, the more items in the display, the longer the exposure time necessary to find the target.
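The 2:1 slope ratio follows from self-termination: on target-present trials the target sits at a random serial position, so on average (n + 1)/2 items are checked, versus all n on target-absent trials. The simulation below is a sketch of that serial self-terminating model; the 50 ms per item and 400 ms base time are arbitrary assumptions, not estimates from any study.

```python
import random

def serial_search_rt(set_size, target_present, rng, ms_per_item=50.0, base_ms=400.0):
    """Reaction time (ms) for one trial of serial, self-terminating search.

    Items are inspected one at a time. On target-present trials the target
    occupies a uniformly random serial position and search stops when it is
    reached; on target-absent trials every item must be checked.
    """
    if target_present:
        items_checked = rng.randint(1, set_size)
    else:
        items_checked = set_size
    return base_ms + ms_per_item * items_checked

def mean_rt(set_size, target_present, trials=20000, seed=0):
    rng = random.Random(seed)
    total = sum(serial_search_rt(set_size, target_present, rng) for _ in range(trials))
    return total / trials

def slope(xs, ys):
    """Least-squares slope of ys on xs (here, ms per item)."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    num = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    den = sum((x - mx) ** 2 for x in xs)
    return num / den

set_sizes = [4, 8, 16]
present_slope = slope(set_sizes, [mean_rt(n, True) for n in set_sizes])
absent_slope = slope(set_sizes, [mean_rt(n, False) for n in set_sizes])
print(f"present: {present_slope:.1f} ms/item, absent: {absent_slope:.1f} ms/item")
```

The present slope comes out near half the per-item time and the absent slope equals it, reproducing the predicted ratio of about 2.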

This rationale was criticized very early on (Luce, 1986; Townsend, 1971; Townsend & Ashby, 1983). On the one hand, the slopes obtained in searches classified as parallel are usually shallow (perhaps 10 ms per item) rather than flat. In principle, they could reflect the operation of a serial mechanism that processes 100 items every second. However, such fast scanning is held to be physiologically not feasible (Crick, 1984).

On the other hand, linear search functions do not necessarily reflect serial processing. They are consistent with capacity-limited parallel processing, in which all items are processed at once, although the rate at which information accumulates at each location for the presence of the target or of a nontarget item decreases as the number of additional comparisons concurrently performed increases (Murdock, 1971; Townsend & Ashby, 1983).
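A classic way to see how capacity-limited parallel processing can mimic serial search is to let a fixed total capacity be shared equally among all unfinished items, with exponentially distributed completion times and reallocation whenever an item finishes. Under those assumptions the gaps between successive completions have the same distribution as in a serial exponential model, so mean RTs grow linearly and the 2:1 absent/present ratio reappears. The sketch below assumes a capacity of 0.02 items per ms, an arbitrary value chosen for illustration.

```python
import random

def limited_capacity_parallel_rt(set_size, target_present, rng, capacity=0.02):
    """Completion time (ms) of a capacity-limited parallel search.

    All unfinished items are processed simultaneously. A fixed total
    capacity (items per ms) is shared equally, so each of the k unfinished
    items finishes at exponential rate capacity / k; when one finishes, its
    share is reallocated to the rest. Item 0 plays the role of the target.
    """
    unfinished = list(range(set_size))
    t = 0.0
    while unfinished:
        k = len(unfinished)
        # Exponential times are memoryless, so redrawing after each completion is valid.
        times = [rng.expovariate(capacity / k) for _ in range(k)]
        winner = min(range(k), key=times.__getitem__)
        t += times[winner]
        done = unfinished.pop(winner)
        if target_present and done == 0:
            break  # self-terminating: stop once the target is identified
    return t

def mean_rt(set_size, target_present, trials=10000, seed=0):
    rng = random.Random(seed)
    return sum(limited_capacity_parallel_rt(set_size, target_present, rng)
               for _ in range(trials)) / trials

present_gain = mean_rt(8, True) - mean_rt(2, True)
absent_gain = mean_rt(8, False) - mean_rt(2, False)
print(f"RT increase from 2 to 8 items: present {present_gain:.0f} ms, absent {absent_gain:.0f} ms")
```

Nothing in this model is serial, yet its mean RTs cannot be distinguished from those of a serial self-terminating scanner, which is the point of the criticism.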

Linear search functions are also compatible with unlimited-capacity parallel processing, in which set size affects the discriminability of elements in the array rather than processing speed per se. According to this view, the risk of confusing the target with a distractor increases as the number of elements increases, due to decision processes or to sensory processes (Palmer et al., 1993).
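One common formalization of this decision-noise account is a max-rule signal detection observer: every item is processed in parallel with the same sensitivity regardless of set size, and the observer responds "present" if any item exceeds a criterion. Each added distractor is another chance for noise to exceed the criterion, so accuracy falls with set size even though per-item d′ is constant. The sketch assumes independent unit-variance Gaussian noise, 50% target-present trials, and d′ = 2 (an arbitrary value), and re-optimizes the criterion over a grid for each set size.

```python
from statistics import NormalDist

STD = NormalDist()  # standard normal distribution

def yes_no_accuracy(set_size, d_prime, criterion):
    """Proportion correct for a max-rule observer in yes/no search.

    Each item yields an independent unit-variance Gaussian value; the target
    adds d_prime. The observer says "present" if any value exceeds criterion.
    """
    correct_rejection = STD.cdf(criterion) ** set_size
    hit = 1 - STD.cdf(criterion - d_prime) * STD.cdf(criterion) ** (set_size - 1)
    return 0.5 * (hit + correct_rejection)

def best_accuracy(set_size, d_prime=2.0):
    """Accuracy with the criterion chosen optimally from a grid."""
    grid = [i / 100 for i in range(-200, 501)]
    return max(yes_no_accuracy(set_size, d_prime, c) for c in grid)

for n in (1, 2, 4, 8):
    print(n, round(best_accuracy(n), 3))
```

Even with the best criterion for each display size, accuracy declines as set size grows, without any change in processing capacity.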

Finally, it has been argued that even the steepest serial slopes cannot reflect serial item-by-item attentional scanning. Whereas these range from 40 to 100 ms per item, Duncan, Ward, and Shapiro (1994) have claimed that attention must remain focused on an object for several hundred milliseconds before being shifted to another object. They referred to this period as the attentional dwell time. However, Moore, Egeth, Berglan, and Luck (1996) have shown that the long estimates of dwell time were caused, at least in part, by the use of masked targets.

Simultaneous versus Successive Presentation

Considering the complexities involved in interpreting search slopes, several investigators have explored the ability of individuals to discriminate between two targets in displays of a fixed size in which the critical manipulation involves the way the stimuli are presented over time. These experiments compare a condition in which all of the stimuli are presented simultaneously with a condition in which they are presented sequentially. (They may be presented one at a time or in larger groups.) Each stimulus is followed by a mask. The logic is that if capacity is limited, then it should be more difficult to detect a target when all of the stimuli are presented at the same time than when they are presented in smaller groups, which would permit more attention to be devoted to each item.
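The logic of the comparison can be illustrated with a sample-size style model, one possible formalization rather than the one used in the studies cited. The assumptions, all hypothetical: each display frame yields a fixed pool of perceptual samples; under limited capacity the pool is divided among the items in that frame, and d′ grows with the square root of the samples an item receives; under unlimited capacity every item receives the full pool. Each successive frame is assumed to be shown for as long as the simultaneous display.

```python
import math

def per_item_dprime(d_full, items_per_frame, limited_capacity):
    """Hypothetical per-item sensitivity in a sample-size model."""
    if not limited_capacity:
        return d_full  # every item gets the full sample pool
    # The pool is split among items shown together; d' scales with sqrt(samples).
    return d_full / math.sqrt(items_per_frame)

def compare_presentation(set_size, d_full=2.0):
    """Per-item d' for simultaneous vs one-at-a-time presentation."""
    return {
        capacity: {
            "simultaneous": per_item_dprime(d_full, set_size, capacity == "limited"),
            "successive": per_item_dprime(d_full, 1, capacity == "limited"),
        }
        for capacity in ("limited", "unlimited")
    }

print(compare_presentation(4))
```

Only the limited-capacity version predicts a successive-presentation advantage; equal performance in the two conditions is therefore read as evidence for unlimited capacity.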

Shiffrin and Gardner (1972) showed that when a fairly simple discrimination was involved, such as indicating whether a T or an F target was present in a display (the nontargets here were hybrid T-F characters), and the number of display elements was small (four), then there was good evidence of parallel processing with unlimited capacity (see also Duncan, 1980). However, when the number of elements in the display was increased (e.g., Fisher, 1984) or the complexity of the stimuli was increased, advantages for successive presentation have been observed (e.g., Duncan, 1987; see also Kleiss & Lane, 1986).

Change Blindness

In an interesting variant of a search task, subjects are presented with a display that is replaced with a second display after a delay filled with a blank field, and have to indicate what, if anything, is different about the second display. The displays can be of any sort, from random displays of dots (Pollack, 1972) to real-life visual events (e.g., Simons & Levin, 1998). These conditions lead to a wide deployment of attention over the visual field. The striking result is that subjects show very poor performance in detecting the change, an effect that has been dubbed change blindness.

The change blindness effect is reminiscent of subjects’ failure to detect changes that occur during a saccadic eye movement (e.g., Bridgeman, Hendry, & Stark, 1975). However, subsequent research has shown that it may occur independently of saccade-specific mechanisms (Rensink, O’Regan, & Clark, 1997). The two paradigms that are most frequently used to investigate the change blindness phenomenon are the flicker paradigm (Rensink et al., 1997) and the forced-choice detection paradigm (e.g., Pashler, 1988b; Phillips, 1974).

In the forced-choice detection paradigm, each trial consists of one presentation each of an original and a modified image. Only some of the trials contain changes, which makes it possible to use signal detection analyses in addition to measuring response latency and accuracy. For instance, Phillips (1974) presented matrices that contained abstract patterns of black and white squares and asked subjects to detect changes between the first and second displays. When the interstimulus interval was short (tens of milliseconds) the task was easy because subjects saw either flicker or motion at the location where a change was made. However, when the interstimulus interval was longer the task became very difficult because offset and onset transients occurred over the entire visual field and thus could not be used to localize the matrix locations that had been changed.
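Because only some trials contain a change, hits (change trials reported as changed) and false alarms (no-change trials reported as changed) can be combined into the standard sensitivity index d′ = z(H) − z(FA). The sketch below computes it with Python's `statistics.NormalDist`; the log-linear correction (adding 0.5 to each count and 1 to each total) is one common convention for avoiding infinite z-scores when a rate is 0 or 1, and is an assumption here rather than the procedure of any study cited.

```python
from statistics import NormalDist

def d_prime(hits, misses, false_alarms, correct_rejections):
    """Sensitivity d' = z(hit rate) - z(false-alarm rate).

    Uses a log-linear correction so perfect scores give finite values.
    """
    z = NormalDist().inv_cdf  # inverse of the standard normal CDF
    hit_rate = (hits + 0.5) / (hits + misses + 1)
    fa_rate = (false_alarms + 0.5) / (false_alarms + correct_rejections + 1)
    return z(hit_rate) - z(fa_rate)

# Hypothetical counts from 100 change and 100 no-change trials.
print(round(d_prime(84, 16, 16, 84), 2))
```

A d′ near zero means subjects cannot tell change from no-change trials, which is how change blindness shows up in this paradigm independently of any response bias.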

In the flicker paradigm, the original and the modified image are presented in rapid alternation with a blank screen between them. Subjects respond as soon as they detect the modification. The results typically show that subjects almost never detect changes during the first cycle of alternation, and it may take up to 1 min of alternation before some changes are detected (Rensink et al., 1997), even though the changes are usually substantial in size (typically about 20 deg²) and once pointed out or detected are extremely obvious to the observers. Moreover, changes to objects in the center of interest of a scene are detected more readily than peripheral, or marginal-interest, changes (Rensink et al.). Rensink et al. concluded that “visual perception of change in an object occurs only when that object is given focused attention; in the absence of such attention, the contents of visual memory are simply overwritten (i.e., replaced) by subsequent stimuli, and so cannot be used to make comparisons” (p. 372). Based on the change blindness finding and the results from studies of visual integration (e.g., Di Lollo, 1980), Rensink (2000) speculated that the preattentive representation of a scene “formed at any fixation can be highly detailed, but will have little coherence, constantly regenerating as long as the light continues to enter the eyes, and being created anew after each eye movement” (p. 22).

However, as Simons (2000) noted, there are other possible accounts of the change blindness effect. “For example, we might retain all of the visual details across views, but never compare the initial representation to the current percept. Or, we might simply lack conscious access to the visual representation (or to the change itself) thereby precluding conscious report of the change” (p. 7). Thus, the finding of change blindness does not necessarily imply that the representation of the initial scene is absent. Further research using implicit measures to assess how well this representation is preserved should expand our knowledge not only of the change blindness phenomenon but also, more generally, of preattentive vision and the role of attention.

Inattention Paradigms: Dual-Task Experiments

In dual-task experiments designed to explore what processes are preattentive, subjects have to execute a primary task and a secondary task. In some cases (e.g., Mack, Tang, Tuma, Kahn, & Rock, 1992; Rock, Linnett, Grant, & Mack, 1992), the primary task is assumed to exhaust subjects’ processing capacities or to ensure optimal focusing of attention. If subjects can successfully perform the secondary task, then it is concluded that the processes involved in that task do not require attention and are therefore preattentive. The studies using this logic usually suffered from memory confounds, as subjects were typically requested to overtly report what they had seen in the secondary task displays after performing the primary task.

In other cases (e.g., Joseph, Chun, & Nakayama, 1997; Braun & Sagi, 1990, 1991), performance is compared between a condition in which subjects have to perform both the primary and the secondary task (a dual-task condition) and a condition in which subjects are required to perform only the secondary task (a single-task condition). Sometimes an additional single-task control condition is used, in which subjects are required to perform only the primary task. When the secondary task is performed equally well in the single- and dual-task conditions, this is taken to indicate that the processes involved in it are preattentive, whereas poorer performance in the dual-task condition is held to show that these processes require attention. One caveat sometimes raised against this rationale is that the performance impairment produced by adding the primary task may reflect the cost of making two responses instead of one, not an inability to process the secondary task preattentively. (The results of the studies cited above are discussed later in this research paper.)
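The single- versus dual-task comparison can be summarized as a dual-task cost on secondary-task accuracy. The sketch below, with a hypothetical function name, computes that cost together with a pooled two-proportion z test. It treats trials as independent binomial observations, which is a simplifying assumption; real analyses usually work with per-subject scores. The statistic cannot address the caveat above: a reliable cost may still reflect the demands of making two responses, and a null cost does not by itself prove that a process is preattentive.

```python
from math import sqrt
from statistics import NormalDist


def dual_task_cost(correct_single, n_single, correct_dual, n_dual):
    """Secondary-task accuracy cost of adding the primary task.

    Returns (cost, z, p): cost = single-task minus dual-task proportion
    correct; z and the two-sided p come from a pooled two-proportion z test.
    """
    p_single = correct_single / n_single
    p_dual = correct_dual / n_dual
    pooled = (correct_single + correct_dual) / (n_single + n_dual)
    se = sqrt(pooled * (1 - pooled) * (1 / n_single + 1 / n_dual))
    z = (p_single - p_dual) / se if se > 0 else 0.0  # no variability: no test possible
    p = 2 * (1 - NormalDist().cdf(abs(z)))
    return p_single - p_dual, z, p


# Hypothetical counts: 95/100 correct alone, 70/100 correct with the primary task.
cost, z, p = dual_task_cost(95, 100, 70, 100)
print(f"cost = {cost:.2f}, z = {z:.2f}, p = {p:.4f}")
```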

We now proceed to present a few examples of efforts to distinguish between processes that require attention and processes that are preattentive.

Further Explorations of Preattentive Processing

Grouping

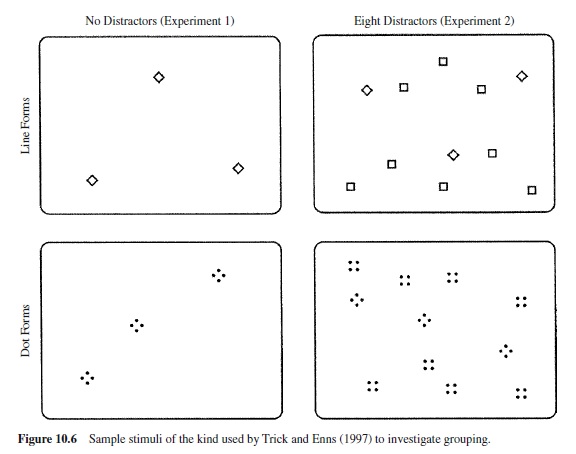

Is perceptual grouping accomplished preattentively? This has proven difficult to answer, in part because grouping itself is a complex concept. For example, Trick and Enns (1997), following Koffka (1935, pp. 125–127), distinguish between element clustering and shape formation. Their research suggests that the former is preattentive, whereas the latter requires attention. Consider the stimuli in Figure 10.6. In two panels the stimuli consist of small diamond shapes made up of continuous lines, while in the other two panels the diamonds are made up of four small dots. Subjects had to determine the number of diamonds present in a display; reaction time was the dependent variable of chief interest. The two panels on the left yielded essentially identical results. The fact that clusters of dots can be counted as quickly as continuous line forms, even for small numbers of elements in the subitizing range (1–3 or 4 items), is consistent with the idea that the dots composing the diamonds were clustered preattentively. For related results, see Bravo and Blake (1990). Interestingly, when shape discrimination was required (counting the diamonds in the face of square distractors, as shown on the right side of Figure 10.6), the continuous line forms were counted more efficiently than the stimuli made of dots. This suggests that the shape formation process may not be preattentive.

That the shape formation component of grouping may require attention is consistent with a number of experiments showing that grouping outside the focus of attention is not perceived (e.g., Ben-Av, Sagi, & Braun, 1992; Mack et al., 1992; Rock et al., 1992). These experiments suggest that attention selects unparsed areas of the visual field and that grouping requires attention. Ben-Av et al. showed that subjects’ performance in discriminating between horizontal and vertical grouping, or simply in detecting the presence or absence of grouping in the display background, was severely impaired when attention was engaged in a concurrent task of identifying the form of a target at the center of the screen. Mack et al. obtained similar results with grouping by proximity and by similarity of lightness.

However, the dependent measure in these studies was subjects’ conscious report of grouping. The fact that grouping cannot be overtly reported when attention is engaged in a demanding concurrent task does not necessarily imply that grouping requires attention. For instance, failure to report grouping may result from memory failure. That is, grouping processes may occur preattentively, with grouping being perceived yet not remembered. In order to test this possibility, Moore and Egeth (1997) conducted a study with displays consisting of a matrix of uniformly scattered white dots on a gray background, in the center of which were two black horizontal lines (see Figure 10.7). Some of the dots were black, and on critical trials they were grouped and formed either the Ponzo illusion (Experiments 1 & 2) or the Müller-Lyer illusion (Experiment 3). Subjects attended to the two horizontal lines and reported which one was longer. Responses were clearly influenced by the two illusions. Therefore, the fact that elements lying entirely outside the focus of attention formed a group did affect behavior, indicating that grouping does not require attention. In a subsequent recognition test, subjects were unable to recognize the illusion patterns. This result confirmed the authors’ hypothesis that implicit measures may reveal that subjects perceive grouping, whereas explicit measures may not.

Visual Processing of Simple Features versus Conjunctions of Features

Treisman’s feature integration theory (FIT; e.g., Treisman & Gelade, 1980; Treisman & Schmidt, 1982) has inspired much of the research on visual search ever since its inception in the early 1980s. According to the theory, input from a visual display is processed in two successive stages. During the preattentive stage, a set of spatiotopically organized maps is extracted in parallel across the visual field, with each map coding the presence of a particular elementary stimulus attribute or feature (e.g., red or vertical). In the second stage, attention becomes spatially focused and serves to glue features occupying the same location into unified objects.
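The two stages of FIT can be illustrated with a toy data structure. Each feature map records the locations where one feature value is present; a feature can be detected by checking whether its map holds any activity, whereas a conjunction can be confirmed only by visiting locations one at a time and checking two maps at each. The representation (a dictionary from feature value to a set of locations) and the function names are illustrative assumptions, not Treisman's own formalization.

```python
from collections import defaultdict


def build_feature_maps(display):
    """Preattentive stage (toy): one map per feature value.

    display maps a location to the tuple of feature values there,
    e.g. {(0, 0): ("red", "vertical")}. Each map is the set of
    locations at which its feature is present.
    """
    maps = defaultdict(set)
    for location, features in display.items():
        for feature in features:
            maps[feature].add(location)
    return maps


def feature_present(maps, feature):
    """Feature search: any activity in a single map suffices; no location is needed."""
    return len(maps.get(feature, ())) > 0


def conjunction_present(maps, display, feature_a, feature_b):
    """Conjunction search: attend each location in turn and check both maps there.

    Returns (found, locations_attended); stops at the first match.
    """
    attended = 0
    for location in display:
        attended += 1
        if location in maps.get(feature_a, ()) and location in maps.get(feature_b, ()):
            return True, attended
    return False, attended


# Hypothetical display: red vertical target among red tilted and green vertical items.
display = {
    (0, 0): ("red", "tilted"),
    (0, 1): ("green", "vertical"),
    (1, 0): ("red", "vertical"),
}
maps = build_feature_maps(display)
print(feature_present(maps, "red"), conjunction_present(maps, display, "red", "vertical"))
```

Neither the "red" map nor the "vertical" map alone says which red item is vertical; only a location-by-location check can, which is why, in this toy, the number of locations attended grows with display size for conjunctions but not for features.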

The phenomenon of illusory conjunctions (e.g., Treisman & Schmidt, 1982; Prinzmetal, Presti, & Posner, 1986; Briand & Klein, 1987) provides empirical support for the FIT. In the experiments of Treisman and Schmidt, displays consisted of several shapes with different colors flanked by two black digits. The primary task was to report the digits, and the secondary task was then to report the colored shapes. Subjects tended to conjoin the different colors and forms erroneously. For instance, they might report seeing a red square and a blue circle when in fact a red circle and a blue square had been present. This finding is thus consistent with the idea that features are “free-floating” at the preattentive stage and that focused attention is needed to correctly conjoin them. However, the fact that subjects were unable to remember how the forms and colors were combined does not necessarily entail that such unified representations were not extracted in the absence of attention. Indeed, an alternative explanation is that illusory conjunctions may not reflect separate coding of different features at the preattentive stage but rather the tendency of memorial representations of unified objects to quickly disintegrate, and more so when no attention is available to maintain these representations in memory (Virzi & Egeth, 1984; Tsal, 1989). More recent studies suggest that rather than deriving from imperfect binding of correctly perceived features, illusory conjunctions may stem from target-nontarget confusions (Donk, 1999), uncertainty about the location of visual features (Ashby, Prinzmetal, Ivry, & Maddox, 1996), or postperceptual factors (Navon & Ehrlich, 1995).

A central source of support for FIT also resides in the finding that searching for a target that is unique in some elementary feature (e.g., searching for a red target among green and blue distractors) yields fast reaction times and low error rates that are largely unaffected by set size (e.g., Treisman & Gelade, 1980; see also Egeth et al., 1972). According to the theory, features can be detected by monitoring in parallel the net activity in the relevant feature map (e.g., red). In contrast, searching for a target that is unique only in its conjunction of features (e.g., searching for a red vertical line among green vertical and red tilted lines) yields slower RTs and higher error rates that increase linearly with set size. Attention needs to be focused serially on each item in order to integrate information across feature modules, because correct feature conjunction is necessary in order to distinguish the target from the distractors. This interpretation of the results has been criticized on numerous grounds.
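Under FIT's original interpretation, the predictions for mean RT can be written down directly. The sketch assumes a parallel feature search whose RT does not depend on set size, and a serial self-terminating conjunction search in which each item costs a fixed time. On target-present trials the target is found on average halfway through the display, so the predicted present slope is half the absent slope. The base time and per-item time are free, hypothetical parameters, not values from the studies cited; and, as the text goes on to note, flat and linearly increasing slopes do not by themselves establish parallel or serial processing.

```python
def predicted_rt(set_size, search, target_present, base_ms, per_item_ms):
    """Mean RT under FIT's original interpretation (toy).

    search="feature": parallel, so RT does not depend on set size.
    search="conjunction": serial self-terminating scan, per_item_ms per item;
    a present target is found after (n + 1) / 2 items on average, while
    an absent target requires checking all n items.
    """
    if search == "feature":
        return base_ms
    if search == "conjunction":
        items_checked = (set_size + 1) / 2 if target_present else set_size
        return base_ms + per_item_ms * items_checked
    raise ValueError(f"unknown search type: {search!r}")


def search_slope(set_sizes, rts):
    """Least-squares slope of RT on set size, in ms per item."""
    n = len(set_sizes)
    mean_x = sum(set_sizes) / n
    mean_y = sum(rts) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(set_sizes, rts))
    sxx = sum((x - mean_x) ** 2 for x in set_sizes)
    return sxy / sxx


# Hypothetical parameters: 450 ms base time, 50 ms per attended item.
sizes = [4, 8, 16]
for present in (True, False):
    rts = [predicted_rt(n, "conjunction", present, base_ms=450, per_item_ms=50) for n in sizes]
    print("present" if present else "absent", search_slope(sizes, rts))
```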

As we mentioned earlier, several alternative models show that parallel and serial processing cannot be directly inferred from flat and linearly increasing search slopes, respectively. Moreover, new findings have seriously challenged the dichotomy between parallel and serial processing originally advocated by FIT. For instance, a number of studies have shown that feature search is not always parallel or effortless. Indeed, feature search was found to yield steep slopes when distractors were similar to the target or dissimilar to each other (e.g., Duncan & Humphreys, 1989; Nagy & Sanchez, 1990). Joseph et al. (1997) further showed that even a simple feature search (detecting an orientation singleton) that produces flat slopes when executed on its own may be impaired by the addition of a primary task with high attentional demands; the data for this experiment appear in the right panel of Figure 10.8. Although Braun (1998; see also Braun & Sagi, 1990) did not replicate Joseph et al.’s (1997) results when subjects were well practiced rather than naive, the Joseph et al. findings nevertheless “seem to rule out a conceivable architecture for the visual system in which all feature differences are processed along a pathway that has a direct route to awareness, without having to pass through an attentional bottleneck” (Joseph et al., 1997, p. 807). Thus, Joseph et al.’s results do not challenge the idea that certain feature differences may be extracted preattentively; they rule out only the notion that these differences may be reported without attention.

Other studies demonstrated that some conjunction searches are parallel (e.g., Duncan & Humphreys, 1989; Egeth, Virzi, & Garbart, 1984; Wolfe, Cave, & Franzel, 1989). For instance, Egeth et al. (1984) showed that subjects were able to limit their searches to items of a specific color or specific form. Wolfe et al. (1989) reported shallow search slopes for targets defined by conjunctions of color and form. Duncan and Humphreys (1989) showed that when target-distractor and distractor-distractor similarity are equated between feature and conjunction search tasks, performance on the two tasks does not differ, and they concluded that there is nothing intrinsically different between feature and conjunction search (see Duncan & Humphreys, 1992, and Treisman, 1992, for a discussion of this idea). Recently, McElree and Carrasco (1999) used the response-signal speed-accuracy trade-off (SAT) procedure to distinguish between the effects of set size on discriminability and on processing speed in both feature and conjunction search, and concluded that both features and conjunctions are detected in parallel.
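In the response-signal SAT procedure, subjects respond at a series of experimenter-imposed signals, and accuracy (d') is plotted against total processing time. Such functions are commonly summarized by an exponential approach to a limit, d'(t) = λ(1 − e^(−β(t − δ))) for t > δ and 0 otherwise, where the asymptote λ indexes discriminability and the rate β and intercept δ index processing speed. We assume this standard form here for illustration; the function names and parameter values are hypothetical. The sketch shows why the separation is useful: the time needed to reach any fixed fraction of the asymptote depends only on β and δ, so a set-size effect confined to λ leaves processing speed unchanged, whereas serial search would be expected to slow the dynamics as set size grows.

```python
import math


def sat_dprime(t, asymptote, rate, intercept):
    """Exponential approach to a limit, a common SAT summary function:
    d'(t) = asymptote * (1 - exp(-rate * (t - intercept))) for t > intercept, else 0."""
    if t <= intercept:
        return 0.0
    return asymptote * (1.0 - math.exp(-rate * (t - intercept)))


def time_to_fraction(fraction, rate, intercept):
    """Processing time at which d' reaches `fraction` of its asymptote.
    Depends only on the dynamics (rate, intercept), not on the asymptote."""
    return intercept - math.log(1.0 - fraction) / rate


# Hypothetical parameters (seconds): the larger display lowers the asymptote only.
small = dict(asymptote=3.0, rate=4.0, intercept=0.3)
large = dict(asymptote=2.0, rate=4.0, intercept=0.3)
t_half = time_to_fraction(0.5, rate=4.0, intercept=0.3)
print(sat_dprime(t_half, **small) / small["asymptote"],
      sat_dprime(t_half, **large) / large["asymptote"])
```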

As a result of this spate of inconsistent findings, FIT has undergone several major modifications, and alternative models have been developed, the most influential of which are the guided search model, proposed by Cave and Wolfe (1990) and periodically revised by Wolfe (e.g., 1994, 1996), and Duncan and Humphreys’ (1989) engagement theory, sometimes known as similarity theory. (The guided search model was described briefly in the section on “Capture of Attention by Irrelevant Stimuli.”)

Stimulus Identification

When a subject searches through a display for a target, the nontarget items obviously must be processed deeply enough to allow their rejection, but this does not necessarily mean that they are fully identified. For example, in a search for a digit among letters, one does not need to know that a character is a G to know that it is not a digit. Thus, some evidence for parallel processing (e.g., Egeth et al., 1972) may not indicate an ability to identify several characters in parallel.

Pashler and Badgio (1985) designed a search task not subject to this shortcoming: they showed several digits simultaneously and asked subjects to name the highest digit. This task clearly requires identification of all of the elements. To assess whether processing was serial or parallel, they did not simply vary the number of stimuli in the display; they also manipulated the quality of the display. That is, on some trials the digits were bright and on others they were dim. The logic of this experimental paradigm, introduced by Sternberg (1967), is as follows. Suppose that a dim digit requires k ms longer to encode than does a bright digit. If the subject performs the task by serially encoding each item in the display, then the reaction time to a dim display with d digits should be kd ms longer than the reaction time to the same display when bright. In other words, display size and visual quality should interact: the cost of dimness should grow in proportion to display size. However, if all the digits are encoded simultaneously, then the k ms should be added only once, regardless of display size. In other words, the effects of display size and visual quality should be additive. It was this latter pattern that Pashler and Badgio observed, suggesting that the identities of several digits could be accessed in parallel.
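The additive-factors logic can be checked numerically. The sketch below encodes the two predictions described above, with k as the extra encoding time for a dim digit and hypothetical values for everything else: a base time, a per-digit encoding time, and, in the parallel case, an assumed per-digit cost that does not depend on display quality. The interaction contrast (the dim-minus-bright difference at the larger display size minus the same difference at the smaller size) equals k times the change in display size under serial encoding and zero under parallel encoding.

```python
from functools import partial


def serial_rt(display_size, dim, base_ms, encode_ms, k_ms):
    """Serial encoding: each of the d digits is encoded in turn, and a dim
    display (dim=1) adds k_ms to every encoding, i.e. k_ms * d in total."""
    return base_ms + display_size * (encode_ms + k_ms * dim)


def parallel_rt(display_size, dim, base_ms, encode_ms, k_ms, per_item_ms):
    """Parallel encoding: all digits are encoded at once, so k_ms is added once.
    per_item_ms is an assumed display-size cost unaffected by display quality."""
    return base_ms + display_size * per_item_ms + encode_ms + k_ms * dim


def interaction_contrast(rt, small, large):
    """(dim - bright) at the larger display size minus (dim - bright) at the smaller."""
    return (rt(large, 1) - rt(large, 0)) - (rt(small, 1) - rt(small, 0))


# Hypothetical parameters in ms; k = 30 ms extra encoding time for a dim digit.
serial = partial(serial_rt, base_ms=400, encode_ms=40, k_ms=30)
parallel = partial(parallel_rt, base_ms=400, encode_ms=40, k_ms=30, per_item_ms=20)
print(interaction_contrast(serial, 2, 4), interaction_contrast(parallel, 2, 4))
```

In this toy, a nonzero contrast signals the serial pattern and a zero contrast the additive one that Pashler and Badgio reported.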

Attention: Types and Tokens

Recently, the notion that attention acts on the outputs of early filters dedicated to processing simple features such as motion, color, and orientation has been challenged by the idea that the units on which attention operates are temporary structures held in a capacity-limited store usually referred to as visual short-term memory (vSTM). Several authors have invoked the existence of such structures in the last 10 years or so.