Language is a means of communicating information from one individual to others. It is a code that human societies have developed for the expression of meaning. Across all societies, the code involves words, as well as rules for linking words together (syntax). Words are arbitrary collections of sounds, produced by articulatory gestures (or manual signing, or writing) that convey particular meanings; a typical speaker acquires a vocabulary of at least 30,000 words in his or her native language. Sentences are combinations of words, governed by the syntax of the language, that encode more complex and, in many cases, novel messages. Comprehension entails recovery of the meaning intended by the speaker (or signer, or writer); production entails translating the message the speaker (or signer, or writer) wishes to convey into a series of words, constrained by the syntax of the language. Most children acquire the spoken words and syntax of their language community within a short period of time, and seemingly effortlessly. Once acquired, language comprehension and production processes appear automatic; people cannot help but understand what they hear in their native language, and they usually produce coherent sentences with little apparent effort, even when they are engaged in other tasks.

Language is a uniquely human capacity. Although there is evidence of learned responses to specific calls in primates, and of brain asymmetries in chimpanzees that may foreshadow the asymmetrical representation of language in the human brain (Gannon, Holloway, Broadfield, & Braun, 1998), other animals do not exhibit communicative behavior that compares with the richness, structure, and combinatorial capacity of human language. Hence the investigation of language function and of language-brain relationships requires the use of human subjects. In recent years, the development of methods for monitoring brain activity as people engage in cognitive tasks has provided a means of studying language-brain relationships in normal individuals. These techniques complement the study of disruptions to language function caused by brain damage (the aphasias), which for some time served as the primary means of investigating the neural substrates of language. The breakdown patterns observed in aphasia will be the focus of much of this research paper. We will also review evidence from studies that examine language activity in the normal brain.

We begin with an overview of relevant brain anatomy, historical treatment of aphasic disorders, and techniques currently being used to investigate brain-behavior relationships. Following this, we present a more detailed discussion of three specific content areas: semantics (the representation of meaning), spoken language comprehension, and spoken language production.

Brain and Language: A Brief Introduction

Brain Anatomy

The human brain is a very complex structure composed of billions of nerve cells (neurons) and their interconnections, which occur at synapses, or points of contact between one neuron and another. The vast majority of these synapses are formed between axons, which may travel short or long distances between neurons, and processes called dendrites, which extend from neuronal cell bodies. Such connections govern the patterns of activation among neurons and, thereby, the physical and mental activity of the organism. While the connectivity patterns are to some extent genetically determined, a major characteristic of the nervous system is the plasticity of neural connections. Organisms must learn to modify their behavior as a function of their experience; the child’s acquisition of the language s/he hears is an especially relevant example.

Insofar as language capability is concerned, the key structures reside in the cerebral cortex—the mantle of cells six layers deep that is the topmost structure of the brain. This tissue has an undulating appearance, composed of hills (gyri) and valleys (fissures or sulci) between them; this pattern reflects the folding of the cortex to fit within the limited space in the skull. In the majority of individuals, whether right- or left-handed, it is the left hemisphere of the cerebral cortex that has primary responsibility for language function.

Each hemisphere is divided into four lobes: the frontal, parietal, occipital, and temporal lobes. The occipital lobe has primary responsibility for visual function, which extends into neighboring areas of the temporal and parietal lobes. The frontal lobe is concerned with motor programming, including speech, as well as high-level cognitive functions. For example, sequential behavior is the province of prefrontal cortex, situated anterior to the frontal motor and premotor areas, which occupy the more posterior portions of the frontal lobe. Auditory processing is performed by areas of the temporal lobe, much of which is also concerned with language. There is evidence that inferior portions of the temporal lobe support semantic functions, whereas structures buried in the medial portions of the temporal lobe (the hippocampus, the amygdala) are concerned with mechanisms of memory and emotion. The parietal lobe is concerned with tactile and other sensory experiences of the body, and has an important role in spatial and attentional functions; it is involved in language, as well.

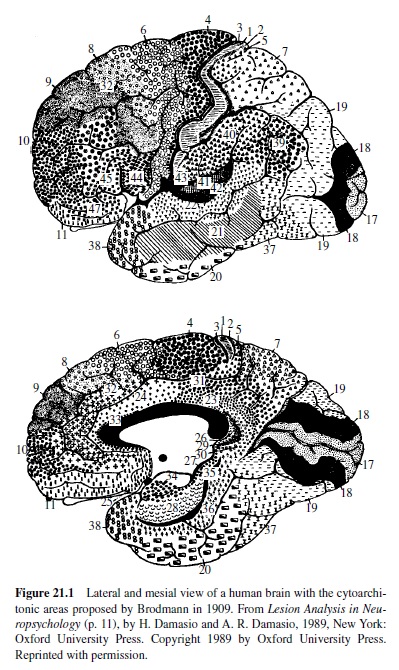

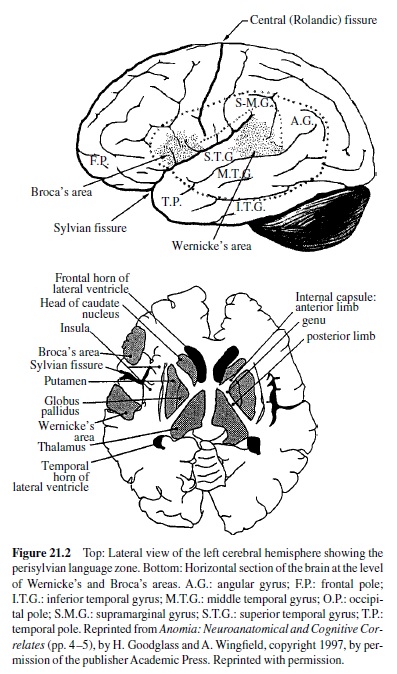

In the early years of the twentieth century, a neuroanatomist named Brodmann examined cortical tissue under the microscope and assigned numbers to areas that appeared different both with respect to types of neurons and their densities. A map of Brodmann’s areas can be found in Figure 21.1. To a large extent, these histological differences are associated with differences in function. The Brodmann numerical scheme is still in use today; for example, functional imaging studies of the human brain employ these numerical designations. Some areas have also been given names. For example, the deep fissure separating the temporal from the frontal and parietal lobes is known as the lateral or Sylvian fissure; areas bordering this fissure in the left hemisphere are essential to language, and the language zone of the left hemisphere is sometimes referred to as the perisylvian area. Another map containing names of regions that are relevant to a discussion of language is presented in Figure 21.2.

Although it has long been known that there are functional differences between the two cerebral hemispheres, it was not until the 1960s that corresponding differences in structure were identified. For example, Geschwind and Levitsky (1968) found that an area called the planum temporale, at the juncture of the temporal and parietal lobes, is generally larger in the left hemisphere than the right; this area is involved in language. However, it has recently been shown that chimpanzee brains contain the same asymmetry (Gannon et al., 1998), a finding that undermines what appeared to be a strong relationship between structural enlargement and functional capability. It may be, however, that this asymmetry foreshadows the dedication of this area to language function.

The cortical role in language depends critically on the receipt of information from lower brain centers, including the thalamus. The thalamus is an egg-shaped structure at the top of the brain stem, divided into regions called nuclei. Thalamic nuclei send projections to areas of the cortex and receive inputs from these areas. For example, auditory input from the medial geniculate nucleus of the thalamus projects to the temporal lobe; fibers from the lateral geniculate nucleus carry visual input to the occipital lobe. An extensive network of white matter, consisting of axons, underlies the six cell layers of the cortex, connecting cortical regions as well as carrying information to and from subcortical regions. The two hemispheres of the cerebral cortex are connected by the corpus callosum, a large band of fibers that is severed in the split brain operation, which is performed to relieve the spread of epileptic discharges that fail to respond to pharmacological intervention (e.g., Gazzaniga, 1983). There is evidence that other structures, such as the basal ganglia, which are located beneath the cerebral cortex, also contribute to language function (e.g., A. R. Damasio, Damasio, Rizzo, Varney, & Gersch, 1982; Naeser et al., 1982). The basal ganglia project to areas of the frontal cortex and are essential to motor activity. The motor aspects of speech production depend on the integrity of connections from motor areas in the frontal cortex to nuclei in the brain stem, which innervate the articulatory musculature.

The nature of the connection patterns is also important. Both hemispheres receive input from both ears and both eyes. However, in the case of vision, the left hemisphere is sensitive to the right half of space (or visual field), which projects from the left half of each retina—and vice versa for the visual projection to the right hemisphere. Thus, if the visual area of the left hemisphere is lesioned, vision from the right visual field is interrupted. The receipt of visual information from both eyes (but from the same visual field) is essential for depth vision. Cases where part or all of a hemifield is lost are termed hemianopias. If the corpus callosum is severed (or the posterior part of it lesioned), the left hemisphere will receive information from only the right visual field, and the right hemisphere from only the left visual field. The connectivity pattern is different for the auditory system, where fibers cross over at several levels from right to left and vice versa; however, the projection to each hemisphere from the ear on the opposite side dominates, reflecting the larger number of fibers that each receives from the contralateral ear. In the case of sensory and motor function, the left hemisphere receives input and exerts motor control over the right half of the body, and the right hemisphere does the same for the left. Because certain language areas lie in close proximity to left hemisphere motor cortex, some aphasic patients will have motor weakness on the right, particularly affecting the upper limb. Lesions of left temporal cortex, which also result in aphasia, may disrupt fibers carrying information to visual cortex, resulting in defects in the right visual field.

Historical Background to the Classification of the Aphasias

Systematic observation of the relationships between brain damage and language dysfunction began in the middle of the nineteenth century. The first steps in this direction are generally credited to a French physician, Paul Broca, who also had an interest in anthropology. Prior to his work, the strongest claims about mind-brain relationships were made by phrenologists, who took bumps on the skull to reflect the development of cortical areas beneath. Phrenologists attempted to localize such functions as spirituality and parental love; they also made claims about language, assigning it to the anterior tips of both frontal lobes, an area that lies just behind the eye. (The basis for this assignment was the acquaintance of phrenology’s founder, Gall, with an articulate schoolmate who had protruding eyes!)

Broca was properly skeptical about these pseudoscientific views, believing that functions must be directly related to the convolutions of the cerebral cortex. He observed a patient (Monsieur Leborgne) with a severe speech production impairment, whose output was limited to a single monosyllable (“tan”); in contrast, the gentleman’s comprehension of language appeared to be well preserved. Leborgne passed away, and Broca was able to examine his brain. He found an area of damage in the left inferior frontal lobe and later confirmed this observation in another patient with an articulatory impairment. Broca observed several additional patients with similar problems, although he did not always have information about the underlying neuropathology. In 1865, he postulated that the left frontal lobe was the substrate for the “faculty of articulate language.” He speculated that this function of the left frontal lobe was related to the left hemisphere’s control of the usually dominant right hand (Broca, 1865). Broca also hypothesized that the right hemisphere was responsible for language in left-handers, a conjecture that later proved to be incorrect, although there is evidence that language is less completely lateralized to the left hemisphere in left-handers (e.g., Rasmussen & Milner, 1977).

Several years later, a German physician, Carl Wernicke, observed a different form of language impairment, characterized by a severe comprehension deficit. In contrast to Broca’s cases, these patients spoke fluently, although their output was often uninterpretable. They frequently produced paraphasias (substituted words or speech segments), sometimes uttered nonwords (neologisms), and relied heavily on pronouns or general terms such as “place” and “thing.” Wernicke was able to examine the brain of one of the patients, and found a lesion in the superior temporal gyrus on the left. The area of damage was located in what would now be called the auditory association area, at the junction of the left temporal and parietal lobes. (Association cortex typically borders primary sensory cortex, which receives input from subcortical nuclei; association areas are involved in the further processing of sensory information.) As auditory comprehension and speech production were both compromised by the lesion, Wernicke (1874) speculated that this region contained “auditory word images” acquired in learning the language. In addition to their role in interpreting speech input, he suggested that these images guided the articulatory functions that were localized in Broca’s area. He further speculated that the anatomical connection between Wernicke’s and Broca’s areas would allow the listener to repeat the speech of others; damage to this pathway, he predicted, should result in a disorder in which production would be error-prone, although comprehension would be preserved. The existence of this disorder (conduction aphasia) was subsequently confirmed, although the locus of damage that gives rise to it is still debated (H. Damasio & Damasio, 1989; Dronkers, Redfern, & Knight, 2000).

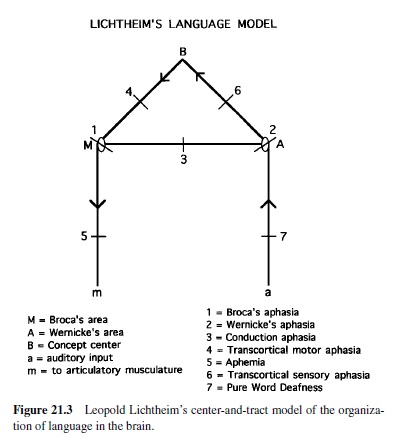

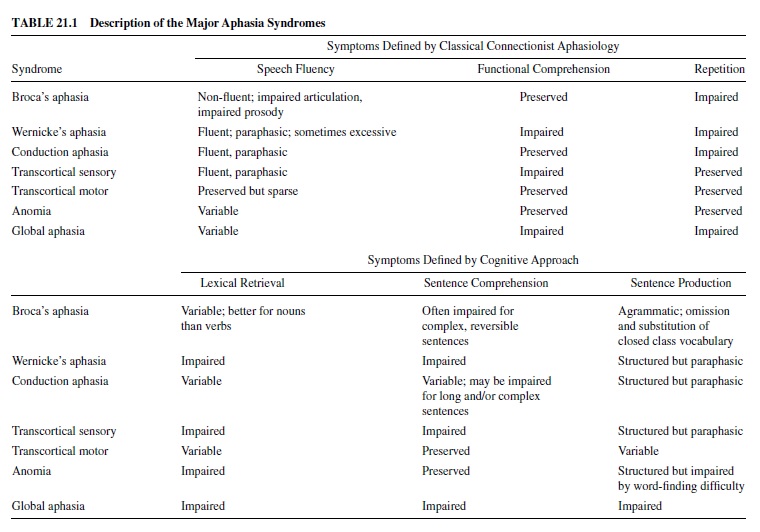

Wernicke’s early theory of brain-language relationships was elaborated by Leopold Lichtheim (1885), whose model is represented in Figure 21.3. Lichtheim added a concept center, which would serve as the seat of understanding for the listener (hence its connection to Wernicke’s area) as well as the source of the messages ultimately expressed by the speaker (it is also connected to Broca’s area). Although he referred to it as a center, he believed that this information was diffusely represented in the brain. Lichtheim’s model predicted several disorders not yet observed. Among these were the transcortical aphasias (sensory and motor), in which repetition was better preserved than spontaneous production due to the isolation of Broca’s or Wernicke’s area from the concept center. Transcortical sensory aphasia also involved a comprehension problem, due to the disconnection between Wernicke’s area and conceptual information. Lichtheim also predicted a receptive disorder (word deafness) resulting from the disconnection of Wernicke’s area from auditory input, and a production disorder (aphemia) resulting from damage to the pathway from Broca’s area to brain centers concerned with motor implementation of spoken output. All of these patterns were later reported. The Wernicke-Lichtheim approach, which relied heavily on connections among brain centers, came to be known as connectionism (not to be confused with current computer models of cognitive function, which use the same term). Table 21.1 shows the taxonomy of aphasia syndromes, which took shape under this approach and is still in use today.

Connectionist aphasiology has always drawn opposition. One of the early critics was Sigmund Freud, who published a monograph on aphasia in 1891. Freud argued that the connectionist view was too simplistic to account for so complex a function as human language. He also showed that it could not explain the symptoms of several cases of aphasia that had been reported in the literature (Freud, 1891/1953). Contemporary language scientists would agree; the connectionist approach focused on word comprehension and production, completely ignoring sentence-level processes. (Famed neurologist Arnold Pick stood apart from this tradition and made outstanding contributions to the study of sentence-level breakdown; see Pick, 1913.) Most of those who studied aphasia in the early to mid-twentieth century adopted a more holistic approach to language-brain relationships (e.g., Head, 1926; Marie, 1906). However, the center-based approach, with emphasis on the importance of connections between areas responsible for particular functions, was revived in the 1960s, largely through the efforts of neurologist Norman Geschwind. He published two celebrated papers that explained a range of cognitive disturbances, in animals and man, in terms of the severing of connections between brain regions (Geschwind, 1965). Insofar as language was concerned, he favored the approach initiated by Wernicke and elaborated by Lichtheim. At about the same time, Schuell and her colleagues at the University of Minnesota conducted large group studies of aphasics, which led them to conclude that the language area of the brain was not differentiated with respect to function, that is, that it operated as a whole (Schuell, Jenkins, & Carroll, 1962). One major difference between these approaches is that Geschwind was looking primarily at cases with restricted lesions, whereas the Minnesota study enrolled patients irrespective of the nature of their lesions and likely included individuals with extensive brain damage.

To a large extent, these polar views—componential or modular versus holistic—continue to characterize the debate among aphasia researchers, and in a somewhat different guise, among language researchers in general. Are language tasks delegated to distinct processing components that operate to a large degree independently of one another (the modular view) or does the system operate with a good deal of interaction? Is there a separate syntactic component, or are syntactic functions subsumed by the lexicon? We will try to address such questions wherever we can. It should be appreciated, however, that in many instances the answers are, at best, provisional. In view of the complexity of language processes, it is not surprising that many issues remain controversial. (To sample contemporary arguments against the modular approach, see Dick et al., 2001, and Van Orden, Pennington, & Stone, 2001.)

Methods Used to Study Brain-Language Relationships

Localizing Brain Lesions

Although encased in the protective skull, the brain is nevertheless susceptible to a wide variety of disorders. Disorders of the circulatory system are the primary cause of aphasic impairments. Most are caused by disruption of the left middle cerebral artery, which supplies the lateral surface of the left hemisphere, including brain regions concerned with language function. Circulatory disorders include those that block an artery (ischemia), hemorrhages involving rupture of a blood vessel, and aneurysms, in which a weak arterial wall ultimately allows blood to leak into brain tissue, a major cause of aphasia in younger individuals. Other disorders include tumors, infections, degenerative diseases (such as Alzheimer’s), and traumatic brain injuries produced by falls, bullets, and vehicular accidents.

Until the early 1970s, techniques for imaging the brain in vivo were rudimentary; radiological techniques did not have much sensitivity. To gain precise information about the location of brain damage, it was necessary to examine the brain after the patient had died, or to rely on descriptions of tissue removed at surgery. The development of the CT scan (CT is an acronym for computer-assisted tomography) improved matters considerably. This is an X-ray procedure that is enhanced by the use of computers; it is possible to acquire images of successive slices through the brain, and due to differences in the density of neural tissue (denser tissue absorbs more radiation), to localize the damage rather precisely. Magnetic resonance imaging (MRI), also developed in the 1970s, led to further advances in brain imaging. One advantage of this technique is that it does not utilize radiation. Instead, a magnetic field is imposed that causes certain atoms (usually hydrogen, a major component of water) to orient in a particular direction; subsequent imposition of a radio frequency signal causes the atoms to spin, giving off radio waves that are registered by a computer. Again, it is possible to examine serial slices of brain tissue. The signal varies with the content of the tissue and yields sharp images that are becoming increasingly refined in their degree of resolution. These techniques have provided a great deal of information on lesion sites, which can then be related to the nature of the language impairment. Computerized methods for compiling lesion data across the brains of patients with similar deficits are proving particularly useful (e.g., Dronkers et al., 2000).

Although aphasia research has yielded much informative data, it must be acknowledged that the evidence is problematic in some respects. The injuries result from disease or trauma, accidents of nature that afford no control over the locus or size of the lesion. Moreover, the damage is often extensive, which makes it difficult to isolate the regions responsible for specific language functions. For example, the lesion that results in chronic Broca’s aphasia extends well beyond the area identified by Broca (Mohr et al., 1978), and it has been claimed that the same applies to Wernicke’s aphasia (Dronkers et al., 2000). Some cases of Broca’s and Wernicke’s aphasias have lesions that completely spare the classical Broca’s and Wernicke’s areas (Dronkers et al.). Furthermore, the delineation of these areas is complicated by the fact that human brains differ in the size and precise localization of particular areas (e.g., Uylings, Malofeeva, Bogolepova, Amunts, & Zilles, 1999).

Some aphasia researchers have tried to interpret aphasic deficits as purely subtractive, taking the residual behavior to represent normalcy minus the damaged component (e.g., Caramazza, 1984). This view is theoretically appealing, but increasingly untenable. Patients with language disorders struggle to communicate. In doing so, they often employ compensatory strategies, which speech pathologists strongly encourage in their attempts to rehabilitate aphasic disorders. This introduces another source of variability: Do different behavior patterns reflect different deficits, or different ways of compensating for the same underlying impairment?

It is also becoming clear that, in some cases at least, regions of the right hemisphere, normally thought to be little involved in basic language functions, provide support for residual language in aphasia. For example, functional imaging studies have shown increased brain activity in areas of the right hemisphere that are homologous to damaged language areas on the left (e.g., Cardebat et al., 1994; Weiller et al., 1995). Other studies have shown that patients whose language has improved subsequent to left hemisphere damage become worse as a result of subsequent right hemisphere lesions (e.g., Basso, Gardelli, Grassi, & Mariotti, 1989). These considerations should be kept in mind as we review the data; where appropriate, we will refer to them explicitly.

There are other reasons to be cautious when drawing inferences about lesion-deficit relations. A functional deficit, even if consistently related to the same locus of injury, may not directly reflect the localization of the impaired function. Much of the brain’s activity depends on connections between sets of neurons, and the deficit may reflect disruption of connectivity patterns as opposed to localization of the function at the site of damage per se. This point is supported by metabolic imaging studies of brain-damaged patients (see next subsection), which have shown hypometabolic changes at sites remote from the structural lesion, and, in some cases, changes in regions of the brain that show no evidence of focal brain damage on CT or MRI (e.g., Breedin, Saffran, & Coslett, 1994).

Imaging Brain Metabolism With Positron Emission Tomography

The imaging methods discussed previously provide static images of brain structures. With positron emission tomography (PET), it has become possible to explore the physiological effects of a structural lesion, for example, by measuring regional metabolism of glucose, the major energy source used by the brain. PET and the less frequently used SPECT are methods that localize and quantify the radiation arising from positron-emitting isotopes, which are injected into the bloodstream and which accumulate in different brain regions in proportion to the metabolic activity of those regions and the demands of this activity for greater cerebral blood flow (for a readable introduction, see Metter, 1987). The use of PET in studies of functional brain activity is discussed next. PET has also been used productively to measure resting-state activity in brains damaged by stroke or other neurological insult. As noted above, such studies have revealed that the areas of brain that are metabolically altered by a structural brain lesion far exceed the boundaries of the structural lesion. Furthermore, the metabolic maps provide a very different picture of function-lesion correlations in patients with aphasia (Metter; Metter et al., 1990).

Imaging Functional Brain Activity

A major innovation in cognitive neuroscience has been the extension of PET and MRI methods to the measurement of regional activation associated with ongoing cognitive behavior. What follows is a brief overview; for further details, the reader is referred to Friston (1997), Rugg (1999), and references therein.

As implied earlier, there is a close coupling between the changes in activity level of a region of brain and changes in its blood supply, such that increased activity leads to an increase in blood supply. In so-called cognitive activation studies with PET, images are acquired while the subject performs two conditions: an experimental condition and a control condition that ideally differs from the experimental condition with respect to only a single cognitive operation. Computerized methods are then used to subtract the activation patterns in the control state from that induced by the experimental state. Regions that achieve above-threshold activation after the subtraction are taken to subserve the cognitive operation(s) of interest.
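The subtraction logic described above can be illustrated with a minimal Python sketch. It is not the procedure used in any particular study: the region names, activation values, and threshold are hypothetical, and real analyses operate on voxel-wise images with statistical thresholds rather than a handful of regional means.

```python
# Illustrative sketch of cognitive subtraction; all values are invented.

def subtraction_map(experimental, control, threshold):
    """Subtract control activation from experimental activation for each
    region and keep only regions whose difference exceeds the threshold."""
    differences = {
        region: experimental[region] - control[region]
        for region in experimental
    }
    return {region: diff for region, diff in differences.items() if diff > threshold}

# Hypothetical mean activation per region (arbitrary units).
experimental = {
    "left_inferior_frontal": 5.5,
    "left_superior_temporal": 4.0,
    "occipital": 3.0,
}
control = {
    "left_inferior_frontal": 3.0,
    "left_superior_temporal": 3.5,
    "occipital": 3.0,
}

# Regions surviving the subtraction are taken to subserve the operation
# that distinguishes the experimental task from the control task.
active = subtraction_map(experimental, control, threshold=1.0)
print(active)
```

In this toy case only the hypothetical left inferior frontal region survives the threshold. A different control condition would change the differences, and with them the surviving regions.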

Functional MRI (fMRI) takes advantage of a hemodynamic effect called BOLD, for blood oxygenation level dependent. It happens that oxygen flows to activated brain areas in excess of the amount needed, so that the oxygen content of blood is higher when it leaves a highly active area compared with a less active one. Dynamic changes in the ratio of oxygenated to nonoxygenated blood as a cognitive task is performed thus provide an index of the changes of brain activity in areas of interest. Signals obtained from certain MRI measures are sensitive to these changes in blood oxygenation and, by extension, regional brain activity.

FMRI has a number of advantages over PET, not the least of which is that it does not require injection of radioactive compounds. This aspect of PET limits the number of scans that can safely be obtained from a single subject, which generally necessitates the pooling of data across subjects. FMRI, in contrast, can be used with single subjects. Moreover, the images can be acquired over shorter time periods (seconds, as opposed to minutes in the case of PET), and have better spatial resolution. On the other hand, fMRI suffers from artifacts introduced by movements, including small head movements such as occur during speech. This has limited fMRI research on speech production; however, methods for correcting such artifacts are continually evolving and we can expect to see more such studies in the future. We can also expect more research on the application of fMRI methods to brain-injured populations. As it stands, the hemodynamic models related to BOLD are of questionable validity when applied to patients with cerebrovascular alterations due to stroke or trauma.

Many fMRI experiments employ the same blocked designs and subtraction logic as are used with PET. This approach has been criticized, in that the results are heavily dependent on the choice of the control task. Indeed, it is arguable whether the idealized single-component difference between experimental and control tasks is ever, in reality, achieved (e.g., Friston, 1997). Other methods currently in use include parametric designs, in which the difficulty of a task is systematically varied and regions are identified that show a corresponding increase in activation; and designs in which trials, rather than blocks of trials, constitute the unit of analysis (Zarahn, Aguirre, & D’Esposito, 1997). Some of these newer methods take advantage of fMRI’s sensitivity to transient signal change in order to examine the dynamic response to a sensory event, similar to the electrophysiological ERP technique, discussed next.

Electrophysiological Methods

Electrophysiological methods have been used both to record events in the brain and, by introducing current, to interfere with brain activity. Potentials temporally linked to sensory stimuli and recorded from the scalp (event-related potentials, or ERPs) have proved extremely useful for mapping the time course of cognitive operations; but as the source of the current is difficult to specify, this approach is less useful for the localization of brain activity (see Kutas, Federmeier, & Sereno, 1999, for a more extensive treatment of this topic). More precise localization data have been acquired from electrode grids placed on the cortex prior to surgical intervention, usually in cases of intractable epilepsy (e.g., Nobre, Allison, & McCarthy, 1994). In some cases, electrodes have been used to apply current to brain regions, which disrupts ongoing brain activity. In the 1950s, such studies were used to map general brain functions (e.g., Penfield & Roberts, 1959); more recently, the technique has been used to identify brain areas associated with specific language functions (e.g., Boatman, Lesser, & Gordon, 1995; Ojemann & Mateer, 1979).

Recently, ERP techniques have been used to investigate aphasic disorders. One advantage of this approach is that it does not require a response on the part of the subject. Overt responses are often delayed or hesitant in patients with aphasia; in some cases, the patient may even say “yes” when he or she means “no,” or vice versa. The ERP methodology utilizes standard electrophysiological responses, such as the one generated by semantic anomaly (e.g., Kutas & Hillyard, 1980). This signal is called the N400: N because it involves a negative change from the baseline of ongoing electrical activity, and 400 because it occurs about 400 msec after the anomalous word (e.g., after “socks,” given the sentence “He spread his warm bread with socks”). The amplitude of the N400 is related to the difficulty in integrating the word into the sentence context; in the following examples, it is smaller in the case of number 1 than number 2 (Hagoort, Brown, & Osterhout, 1999). There is evidence that the N400 is reduced in aphasics with severe comprehension deficits (Swaab, Brown, & Hagoort, 1997).

- The girl put the sweet in her mouth after the lesson.

- The girl put the sweet in her pocket after the lesson.

Magnetic Stimulation and Recording

Electrical activity in the brain generates magnetic changes that can be recorded from the surface of the skull (magnetoencephalography, or MEG; see Rugg, 1999, for more details). With respect to the source of the activity, MEG is more restrictive than ERP, in that the strength of the magnetic field drops off more sharply than that of the electrical field. This technique is now being applied in attempts to localize brain activity related to language function (e.g., Levelt, Praamstra, Meyer, Helenius, & Salmelin, 1998).

The application of a magnetic field to points on the skull, which generates electrical interference, has also been used to disrupt the brain’s electrical activity in the cortex below. This technique can be used to help localize brain activity in relation to ongoing tasks (e.g., Coslett & Monsul, 1994).

Inferences From Patterns of Language Breakdown

In addition to the use of patient data to localize language function in the brain, the patterns of language breakdown in aphasia are of interest for their bearing on the functional organization of language. For example, if it could be shown convincingly that syntactic processes are disrupted independently of lexical functions, and vice versa, it would lend support to the theory that these capacities constitute separate components of the language system. Over the past 25 years or so, this has been the enterprise of the field known as cognitive neuropsychology. Cognitive neuropsychologists study the fractionation of cognitive functions in cases of brain damage, with the aim of informing models of the functional architecture of human cognition. This work extends well beyond language, to research in perception, memory, attention, action, and so forth, but a good deal of this effort has focused on the language-processing system.

Much of this research involves the detailed study of individual cases. There are several reasons for this. One is that characterization of the deficit involves extensive examination, often with tasks devised specifically for the patient. These studies often take months to complete, and would be difficult to conduct with a large group of subjects. The second has to do with variability. The classical syndrome descriptors (e.g., Broca’s aphasia, Wernicke’s aphasia) tolerate a wide range of variation. For example, many patients considered to be Broca’s aphasics exhibit agrammatic production (reduction in syntactic complexity; omission of grammatical morphemes), but not all of them do, at least not to a degree that is clinically apparent. A third reason is that there are some disorders of considerable theoretical interest that are quite rare; examples of those to be discussed include word deafness and semantic dementia. If not studied as single cases, many of these disorders could not be investigated at all. Of course, it is risky to generalize from a single instance, but in the vast majority of cases the patterns have been replicated in other patients. It is also reassuring that recent studies of brain activation in normal subjects have confirmed many of the functions assigned to particular areas on the basis of lesion data.

Computational Models and Simulated Lesions

The area of language study known as psycholinguistics aims to explain language performance in terms of the transformations of the language code that are effected at particular processing stages. Researchers interested in the cognitive neuroscience of language take as their ultimate goal to specify how these encoding-decoding operations are related to specific areas of the brain.

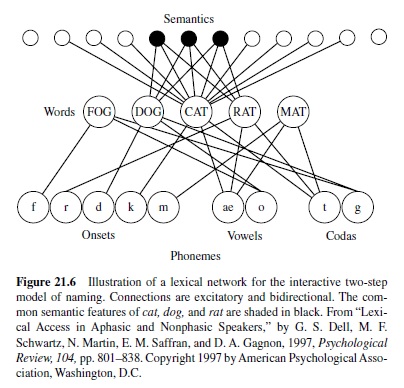

Until relatively recently, the models took the form of box-and-arrow diagrams representing how information flowed from one stage of processing to the next. Over the past decade, there has been an increasing interest in computational models that characterize the processing of information in enough detail that they can be implemented on a computer and experiments can be conducted as computer simulations. These models can also be lesioned; that is, they can be altered in some way (e.g., by increasing noise levels or weakening connections) to simulate effects of brain damage. (See Saffran, Dell, & Schwartz, 2000, for discussion of several such models.) Although vastly simplified in relation to the real language system, computational models seek to capture basic principles of neural function, in that the elements that make up the model receive activation and pass this activation on to other units. Some models employ feedback as well as feed-forward activation, as feedback appears to be a widespread feature of neural systems. Some contain inhibitory as well as excitatory connections between units. One important class of models starts out with random connections from the layer receiving the input to the layer of units that generates output; the model is then trained by strengthening connections that produce the correct output. These are termed parallel distributed processing (PDP) models (e.g., McClelland & Rumelhart, 1986; see Plaut & Shallice, 1993, for one application to the effects of brain damage). In these models, the information about the relationship between input and output units is distributed across elements in a so-called hidden layer (or in some cases, layers) of units, which is intermediate between input and output. For example, consider the fact that there is no consistent relationship between semantics and phonology; cats and dogs share some similarities, but the sounds of the words that denote these entities are completely different. As a result of the inconsistent mapping between semantics and phonology, the relationship between them must be represented in a hidden layer. In other models (called localist models), the modeler specifies the connections among elements.

The attempt to model the effects of brain damage provides another way of testing the adequacy of computational models (in addition to simulating data from psycholinguistic experiments). In other words, it should be possible to take a model capable of generating normal language patterns and damage it so that it produces abnormal patterns that are actually observed following injury to the brain (e.g., Saffran et al., 2000; Haarmann, Just, & Carpenter, 1997). It is also possible that these efforts to simulate effects of lesions will yield insights into the nature of pathological states, and even contribute to approaches to remediating these disorders (Plaut, 1996). We will provide some examples of computer-based lesion studies as we go along.
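As a rough illustration of what lesioning a model involves, the following Python sketch builds a very small localist network, in which the connections are specified by hand rather than learned, and then damages it by weakening connections and adding noise. The lexicon, feature names, connection strengths, and function names are all hypothetical choices made for this example; it is not an implementation of any of the published models cited above.

```python
import random

# Hypothetical toy lexicon: each word unit is linked to a few semantic features.
LEXICON = {
    "cat": ("animal", "furry", "meows"),
    "dog": ("animal", "furry", "barks"),
    "fork": ("utensil", "metal", "prongs"),
    "spoon": ("utensil", "metal", "bowl"),
}


def build_weights(lexicon, strength=1.0):
    """Localist network: one hand-specified connection per (feature, word) pair."""
    return {(f, w): strength for w, feats in lexicon.items() for f in feats}


def lesion(weights, weakening, noise_sd, rng=None):
    """Simulate damage: scale every connection down, then add Gaussian noise."""
    rng = rng or random.Random()
    damaged = {}
    for link, value in weights.items():
        value = value * (1.0 - weakening)
        if noise_sd > 0:
            value += rng.gauss(0.0, noise_sd)
        damaged[link] = value
    return damaged


def name(features, weights, lexicon):
    """Pick the word unit receiving the most activation from the active features."""
    activation = {
        w: sum(weights.get((f, w), 0.0) for f in features) for w in lexicon
    }
    return max(activation, key=activation.get)


def accuracy(weights, lexicon):
    """Proportion of concepts whose own name wins the competition."""
    hits = sum(name(feats, weights, lexicon) == w for w, feats in lexicon.items())
    return hits / len(lexicon)


if __name__ == "__main__":
    rng = random.Random(0)  # seeded generator for the noise draws
    intact = build_weights(LEXICON)
    for noise_sd in (0.0, 0.5, 1.0, 2.0):
        damaged = lesion(intact, weakening=0.3, noise_sd=noise_sd, rng=rng)
        print(noise_sd, accuracy(damaged, LEXICON))
```

In a network of this kind, one would expect naming errors under noise to fall mainly on words that share features with the target (for example, "dog" for "cat"), because those competitors are already partially activated by the shared features.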

The Semantic Representation of Objects

In 1972, psychologist Endel Tulving introduced the term semantic memory to denote the compendium of information that represents one’s knowledge of the world as derived from both linguistic and nonlinguistic sources. Tulving was interested in distinguishing this store of general knowledge from episodic or autobiographical memory, which preserves information about an individual’s personal experiences. That tigers have stripes, that cars have engines, that Egypt is in Africa—these facts are among the contents of semantic memory, whereas personal remembrances such as the site of one’s last vacation or the details of one’s most recent restaurant meal are entered in episodic memory. In this section, we consider how the brain represents one particular aspect of semantic memory—the knowledge that allows one to generate and understand words and pictures. Our major source of evidence will be individuals whose store of semantic knowledge is severely compromised by brain damage.

Semantic Disorders

Semantic Dementia

In the syndrome that has come to be known as semantic dementia, there is progressive erosion of semantic memory with relative sparing of other cognitive functions (Snowden, Goulding, & Neary, 1989; and for earlier cases that conform to this description, Schwartz, Marin, & Saffran, 1979, and Warrington, 1975). Patients initially complain of inability to remember the names of people, places, and things. Formal testing confirms a word retrieval deficit, often accompanied by impairment in word comprehension. As the disorder progresses, most patients also lose the ability to answer questions about real or depicted objects (e.g., regarding their color, size, or country of origin) or to indicate which two of three pictured objects are more closely related (e.g., horse, cow, bear). In other words, the semantic impairment affects nonverbal as well as verbal concepts. On the other hand, the ability of these patients to handle and use objects in practical tasks is generally far better than what their naming and matching performance would predict. This is presumed to reflect their preserved sensorimotor knowledge or practical problem solving (Hodges, Bozeat, Lambon Ralph, Patterson, & Spatt, 2000; and for critical discussion, Buxbaum, Schwartz, & Carew, 1997).

Semantic dementia is the manifestation of a degenerative brain disease (cause unknown) that targets the temporal lobes, in most cases predominantly the left (Hodges, Patterson, Oxbury, & Funnell, 1992). Radiological investigation with CT or MRI often reveals focal atrophy in the anterior and inferior temporal regions of one (the left) or both hemispheres. A quantitative analysis of gray-matter volumetric changes in six cases revealed that the atrophy begins in the temporal pole (Brodmann’s area [BA] 38) and spreads anteriorly into the adjacent ventromedial frontal region, and posteriorly into the inferior and medial temporal gyri (Mummery et al., 2000). In support of this, Breedin, Saffran, and Coslett (1994) found SPECT abnormalities that were maximal in the anterior inferior temporal lobes in a semantic dementia patient who exhibited no structural brain changes on MRI. Other functional imaging studies with SPECT or PET have described hypometabolism outside the regions of atrophy, most notably in the temporo-occipital-parietal area known to be important for object identification and naming (e.g., Mummery et al., 1999). It is likely that this posterior hypometabolism reflects disruption of connections from the damaged anterior temporal lobes (Mummery et al., 2000).

Remarkably, the neuropathology in semantic dementia spares the classical anterior and posterior language zones (the parts damaged in Broca’s and Wernicke’s aphasias, respectively). As a result, aspects of language processing, including word repetition and grammatical encoding, remain largely intact (Schwartz et al., 1979; Breedin & Saffran, 1999). Also spared is the neural substrate for formation of episodic memories, in the medial temporal lobes and hippocampus. Thus, unlike Alzheimer’s disease, in which day-to-day memory loss is often one of the earliest symptoms, semantic dementia leaves recent autobiographical memory well preserved long into the course of the disease (Snowden, Griffiths, & Neary, 1994). Eventually, however, the degenerative process invades other areas and a general dementia sets in, rendering the individual incapable of caring for him- or herself. Autopsy studies of brain tissue reveal a spectrum of non-Alzheimer’s pathological changes, including, in many cases, those indicative of Pick’s disease (Hodges, Garrard, & Patterson, 1998).

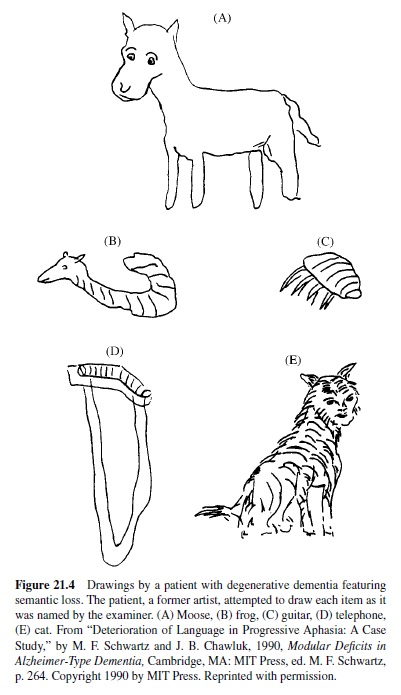

The loss of verbal and nonverbal concepts in semantic dementia happens gradually, in that specific features are lost before more general ones. This can be shown by asking subjects to name objects aloud, match words to pictures, or answer probe questions regarding the physical or other characteristics of objects. Until late in the clinical course, errors are mostly within category, such as naming a fork a “spoon” or a cow a “horse” (e.g., Schwartz et al., 1979). We saw something similar in the drawings of a patient who was formerly an artist. Her early depictions of named objects were generally accurate for category-level information, but not for identifying detail (Figure 21.4).

When asked to define words, semantic dementia patients provide little information about an object’s perceptual characteristics (Lambon Ralph, Graham, & Patterson, 1999). Other than this, most semantic dementia patients demonstrate no striking selectivity in their semantic loss. There are, however, patients whose semantic impairment affects some types of entities more than others. The most impressive instances of selective impairment are the disorders that have been termed category specific. We turn to these next.

Disproportionate Impairment for Living Things

This condition was first described in detail by Warrington and Shallice (1984). They studied four patients, three of whom were suffering the aftereffects of herpes encephalitis, which generally includes dense amnesia (reflecting damage to medial temporal and inferior frontal lobe structures) along with semantic impairment; the fourth patient had semantic dementia. The disproportionate impact on living things emerged clearly on a definitions test. For example, one patient defined a compass as “tools for telling direction you are going,” whereas a snail was “an insect animal.” Another defined submarine as a “ship that goes underneath the sea,” but a spider as “person looking for things, he was a spider for a nation or country.” Warrington and Shallice’s patients were also impaired in their knowledge of foods, a category that includes manufactured items (e.g., bread, pizza) as well as biological entities such as fruits and vegetables. There were also indications of impairment on certain categories of man-made things, such as gemstones, fabrics, and musical instruments.

Warrington and Shallice’s study was followed by a number of others demonstrating similar deficits involving living things and foodstuffs in patients with damage to the temporal lobes (see Saffran & Schwartz, 1994, for a review of cases). The claim that these impairments represent the loss of knowledge for certain categories of object did not go unchallenged, however. In some cases, living and non-living categories were not matched for frequency or familiarity, and there are a few patients whose category differences disappeared when these factors were adequately controlled (Funnell & Sheridan, 1992; Stewart, Parkin, & Hunkin, 1992). This can be a particular problem with animals, which tend to be rated as less familiar than artifacts. On the other hand, foods are more familiar, yet they pattern with animals. Other factors that could contribute to the difficulty of living things include visual complexity and similarity in form, which are generally greater for living things than for artifacts (e.g., Gaffan & Heywood, 1993; Humphreys, Lamote, & Lloyd-Jones, 1995). In most cases, however, control of these factors through stimulus selection (e.g., Funnell & De Mornay Davies, 1997) or statistical analyses (e.g., Farah, Meyer, & McMullen, 1996) has not eliminated category-specific deficits for living things. Moreover, the factors that render living things more difficult cannot explain the occurrence of the opposite pattern—greater impairment on man-made objects than living things.

Disproportionate Impairment for Artifacts

This pattern was described in two case studies by Warrington and McCarthy (1983, 1987), and subsequently in patients studied by Behrmann and Lieberthal (1989), Hillis and Caramazza (1991), and Sacchett and Humphreys (1992). The subjects of these reports were aphasics with left hemisphere lesions. Three of the four cases had lesions involving frontoparietal cortex; in the fourth case (the patient reported by Hillis and Caramazza) the lesion involved the left temporal lobe and basal ganglia, which project to the frontal lobe. Because Warrington and McCarthy’s patients (VER and YOT) were severely aphasic, they could be tested only on word comprehension. On a word-to-picture matching test, YOT scored 67% correct on artifacts, 86% correct on living things, and 83% on food; VER scored 58% correct on artifacts and 88% on food. YOT also proved to be impaired on body parts, scoring only 22% on this highly familiar category. Tested on picture naming, CW (Sacchett & Humphreys) and JJ (Hillis & Caramazza) scored 35% and 45%, respectively, on artifacts and body parts and 95% and 92%, respectively, on living things. Breedin, Martin, and Saffran (1994) have demonstrated a similar pattern in patients with left frontoparietal lesions using a word-similarity judgment task. One consistent finding is that the decrement on artifacts is associated with impaired performance on body parts.

The Weighted-Features Account of Category-Specific Disorders

How can we account for these category-specific semantic disorders? We have already mentioned the possibility that the brain organizes knowledge according to semantic category: animals in one network, foods in another, tools in a third, and so on. (See Caramazza & Shelton, 1998, for a proposal along these lines.) Warrington and her colleagues (Warrington & Shallice, 1984; Warrington & McCarthy, 1987) have taken a different stance, hypothesizing that category specificity in semantic breakdown reflects the properties that figure most importantly in the representations of objects. Warrington and Shallice pointed out that, unlike most plants and animals, artifacts

have clearly defined functions. The evolutionary development of tool use has led to finer and finer functional differentiations of artifacts for an increasing range of purposes. Individual inanimate objects have specific functions that are designed for activities appropriate to their function . . . jar, jug and vase are identified in terms of their function, namely, to hold a particular type of object, but the sensory features of each can vary considerably. By contrast, functional attributes contribute minimally to the identification of living things (e.g., lion, tiger and leopard), whereas sensory attributes provide the definitive characteristics (e.g., plain, striped, or spotted). (p. 849)

The idea here is that perceptual properties are more heavily weighted in differentiating representations of living things, whereas functional information figures more importantly in the representations of artifacts. The perceptual properties of living things are, of course, intrinsic to the entities and largely immutable, whereas, in the case of artifacts, many properties are free to vary. The range of objects that currently serve as radios, for example, necessitates that they be defined in terms of their function as opposed to their shape, color, or composition. The differential weighting of perceptual information in the case of living things was confirmed by Farah and McClelland (1991), who asked subjects to count the number of visual and functional descriptors in dictionary definitions of living and non-living entities. Visual properties dominated in both sets, but more so (a ratio of nearly 8:1) in the case of living things compared with artifacts (1.4:1).

The relative-weighting account does not deny that artifacts may have distinctive visual properties. However, it predicts that the loss of perceptual properties should be particularly damaging to the representations of living things, which are largely distinguished from one another by their physical characteristics. In contrast, artifacts are differentiated in terms of function, as well as by the manner in which they are manipulated. Body parts may pattern with artifacts because they, too, are differentiated by their functions, or possibly as a consequence of their roles in the utilization of these objects. In contrast, manufactured foods would be expected to pattern with living things: Foods serve the same basic function and are in large part distinguished by their sensory properties, such as color, shape, and taste.
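The prediction can be made concrete with a little arithmetic. The Python sketch below takes the visual-to-functional ratios quoted above from Farah and McClelland (1991), treating "nearly 8:1" as 8:1, and asks what share of a representation would be lost if a fixed proportion of one feature type were damaged. It assumes, purely for illustration, that all features are equally weighted and that damage removes the same proportion of every feature of a given type; the function names are hypothetical.

```python
# Visual-to-functional descriptor ratios as quoted in the text from
# Farah and McClelland (1991): nearly 8:1 for living things, 1.4:1 for artifacts.
RATIOS = {"living things": 8.0, "artifacts": 1.4}


def visual_share(ratio):
    """Fraction of descriptors that are visual, given a visual:functional ratio."""
    return ratio / (ratio + 1.0)


def proportion_lost(category, visual_damage, functional_damage):
    """Share of a representation lost under the equal-weighting assumption.

    visual_damage and functional_damage are the proportions (0 to 1) of
    visual and functional features removed by the simulated lesion.
    """
    v = visual_share(RATIOS[category])
    return visual_damage * v + functional_damage * (1.0 - v)


if __name__ == "__main__":
    lesions = (("visual lesion", 0.5, 0.0), ("functional lesion", 0.0, 0.5))
    for label, vis, fun in lesions:
        for category in RATIOS:
            print(label, category, round(proportion_lost(category, vis, fun), 3))
```

Under these assumptions, removing half of the visual features costs a living-thing representation about 44% of its content but an artifact only about 29%, whereas removing half of the functional features costs about 6% and 21%, respectively. The asymmetry runs in opposite directions for the two lesion types, which is the double dissociation the account is meant to explain.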

The differential-weighting account is consistent with a model of semantic memory in which information about an object is distributed across a number of brain subsystems specialized for a particular type of knowledge. Allport (1985) outlined a network model consisting of subsystems that are specialized for particular types of information (visual, tactile, action-orientated). Information about a particular object (e.g., a telephone) is distributed across these subsystems, which are linked to one another by associations among co-occurring features. As a result, activation of features of an object in one subsystem will automatically activate other features of the object in other subsystems. On the assumption that these subsystems are anatomically distinct, it should be possible to disrupt them independently.
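A minimal Python sketch of this kind of network is given below. It is only an illustration of the general idea (features linked by co-occurrence, activation spreading from a cue in one subsystem to features in others, and selective disconnection of one subsystem), not a reconstruction of Allport's model. The objects, the "subsystem:feature" labels, and the function names are hypothetical.

```python
from itertools import combinations

# Hypothetical feature sets; the "subsystem:feature" labels are illustrative only.
OBJECTS = {
    "telephone": ("visual:handset", "visual:keypad", "auditory:rings", "action:dial"),
    "hammer": ("visual:handle", "visual:metal_head", "tactile:heavy", "action:pound"),
}


def learn_associations(objects):
    """Link every pair of features that co-occur in the same object."""
    weights = {}
    for features in objects.values():
        for a, b in combinations(features, 2):
            weights[(a, b)] = weights.get((a, b), 0) + 1
            weights[(b, a)] = weights.get((b, a), 0) + 1
    return weights


def spread(cue, weights, threshold=1):
    """One step of spreading activation from the cued features."""
    received = {}
    for (src, dst), w in weights.items():
        if src in cue:
            received[dst] = received.get(dst, 0) + w
    return set(cue) | {f for f, total in received.items() if total >= threshold}


def disconnect(weights, subsystem):
    """Simulate selective damage by cutting all links into one subsystem."""
    prefix = subsystem + ":"
    return {(s, d): w for (s, d), w in weights.items() if not d.startswith(prefix)}


if __name__ == "__main__":
    weights = learn_associations(OBJECTS)
    cue = {"visual:handset", "visual:keypad"}
    print(sorted(spread(cue, weights)))
    print(sorted(spread(cue, disconnect(weights, "action"))))
```

Cueing the two visual features of the telephone retrieves its auditory and action features as well; after the links into the action subsystem are cut, the same cue retrieves everything except the action feature.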

Warrington and McCarthy (1987) speculated that there might even be a finer-grained differentiation within the semantic system, such that some perceptual features (e.g., shape) figure more heavily in the representations of animals, whereas others (e.g., color, taste) are more salient in the distinctions among fruits and vegetables. As the experience of objects rests on their sensory and sensorimotor properties, it is reasonable to assume that these various characteristics are experienced through different modalities and that they are registered in different subsystems. As Shallice (1988) has put it,

such a developmental process can be viewed as one in which individual units (neurons) within the network come to be most influenced by input from particular input channels, and, in turn, come to have most effect on particular output channels. Complementarily, an individual concept will come to be most strongly represented in the activity of those units that correspond to the pattern of input-output pathways most required in the concept’s identification and use. The sets of units that are most critical for a related group of categories would then come to form semimodules. . . . The capacity to distinguish between members of a particular category would depend on whether there are sufficient neurons preserved in the relevant partially specialised region to allow the network to respond . . . differentially to the different items in the category. (pp. 302–303)

Although this position has sometimes been formulated in terms of distinct visual and verbal semantic systems (e.g., McCarthy & Warrington, 1988), “visual semantic” and “verbal semantic” can be conceptualized as “partially specialized subregions,” to use the terminology suggested by Shallice.

It is possible to account for a number of neuropsychological phenomena within the framework of a distributed model. As we said, disproportionate impairment of living things (and foodstuffs) would reflect damage, or lack of access, to perceptual properties, which are heavily weighted in the representations of these entities. Worse performance on artifacts would reflect the loss of functional or perhaps action-based sensorimotor information (see Buxbaum & Saffran, 1998). The model also allows for the selective disruption of linkages between attribute domains, as well as damage to connections between specific domains and input and output systems. The literature contains descriptions of disorders of the latter type. For example, McCarthy and Warrington (1988) studied a patient (TOB) who was impaired on living things when queried verbally, but who was able to describe living things adequately when provided with pictures. Asked to define the word dolphin, for example, TOB said “a fish or a bird,” but when shown a picture he responded, “lives in water . . . trained to jump up and come out . . . In America during the war years they started to get this particular animal to go through to look into ships.” We have recently tested a patient with a similar deficit. She could not, for example, define the meaning of the word candle, responding that it had something to do with food (from can, perhaps, or candy), but when shown a picture she said, “You put them on the table at dinner, and they provide light.” The same patient performed at normal levels on an associative matching test with pictures but was severely impaired when the same items were presented as words. The model could account for this pattern by disruption of the linkages between lexical representations (word forms in Figure 21.5) and semantic information. Based on Warrington and McCarthy’s (1987) suggestion of finer-grained distinctions, one should also see patients with deficits selective to animals or foods. Such patients have been reported; for example, Hart and Gordon (1992) described a patient who was impaired on animals but not fruits and vegetables, and Hart, Berndt, and Caramazza (1985) have reported the opposite pattern.

Does the living-things deficit go along with poor processing of perceptual features, as the weighted-features model would have it? This issue has been investigated in a number of different studies, with mixed results. Some have been favorable to the model (e.g., Breedin, Martin, et al., 1994; De Renzi & Lucchelli, 1994; Farah, Hammond, Mehta, & Ratcliff, 1989; Forde, Francis, Riddoch, Rumiati, & Humphreys, 1997; Gainotti & Silveri, 1996; Moss, Tyler, & Jennings, 1997), while others have found no difference between perceptual and other features (e.g., Barbarotto, Capitani, Spinnler, & Travelli, 1995; Caramazza & Shelton, 1998; Funnell & De Mornay Davies, 1996). Moreover, the positive findings are not as strong as they could be, in that the loss of perceptual information has generally been restricted to living things (Caramazza & Shelton, 1998). To explain why this feature deficit does not apply to artifacts as well, proponents of the model have proposed that because the features of man-made things are often closely related to their functions, it may be possible to generate perceptual properties for objects whose functional properties are retained (Moss et al., 1997; see also De Renzi & Lucchelli, 1994).

Despite these mixed findings, the weighted-features account, in our view, still merits serious consideration. For one thing, the anatomical locus of the living-things deficit is consistent with impaired processing of perceptual features. These patients tend to have damage in the inferior temporal cortex bilaterally (Breedin, Saffran, et al., 1994; Gainotti & Silveri, 1996; and note that herpes simplex encephalitis preferentially strikes at inferior and medial temporal cortices). The affected area borders on the region of visual association cortex that is concerned with the recognition of objects; and information from other sensory association areas converges on the anterior inferotemporal cortex on its way to medial structures such as the hippocampus. The model also makes sense from an evolutionary perspective. The need to know about the world in which we live is not unique to humans. Although language vastly expands the means for acquiring information, we, like other animals, learn about the world through visual and other sensory input.

Imaging Semantics in the Normal Brain

Recently, functional imaging techniques have been used in association with semantic tasks to identify brain regions involved in semantic operations. The neurologically intact participant is asked to name objects aloud or subvocally, to generate items from particular categories (e.g., names of animals), or to answer probe questions, at the same time that his or her brain activity is being imaged by PET or fMRI. One question addressed in such studies is whether semantic judgments to pictures and words activate a common substrate. The findings are that they do, and that the substrate is distributed within and around the left temporal lobe, specifically the temporoparietal junction, temporal-occipital junction (fusiform gyrus; BA 37), middle temporal gyrus, and inferior frontal gyrus (BA 11/47; Vandenberghe, Price, Wise, Josephs, & Frackowiak, 1996). This corresponds closely to the lesion sites in semantic dementia, except that anterior temporal lobe is not part of the activated network (see Murtha, Chertkow, Beauregard, & Evans, 1999). This raises questions as to whether anterior temporal atrophy affects semantic storage directly (as suggested by Breedin, Saffran, et al., 1994, among others) or indirectly, by interrupting connections to components of the semantic network located farther back in the temporal and occipital lobes. A third possibility, argued by H. Damasio, Grabowski, Tranel, Hichwa, and Damasio (1996), is that the left anterior temporal lobe plays a key role in mediating between semantics and the mental lexicon, such that damage to this area disrupts not semantics but lexical-phonological retrieval (for opposing arguments, see Murtha et al., 1999).

Other activation studies have sought to specify the particular functions of regions in this distributed network, by varying properties of the primary and baseline tasks. One finding is that the temporal-occipital area (fusiform gyrus; BA 37) is involved in the processing of semantics (Murtha et al., 1999), and not low-level perceptual processing (Kanwisher, Woods, Iacoboni, & Mazziotta, 1997). Support for this comes from a study by Beauregard and colleagues (1997), who described left fusiform activity during passive viewing of animal names but not abstract words. The suggestion from this study, and from others reporting enhanced fusiform activation during the processing of living entities (Perani et al., 1995), is that the left fusiform area is an important component of the circuitry involved in the processing of animate entities or visual semantic features.

As to the neural circuitry of inanimate entities, a study by A. Martin, Wiggs, Ungerleider, and Haxby (1996) comports well with the lesion evidence and the weighted-features account. These investigators examined silent and oral naming of animals and tools against a baseline task that involved the viewing of nonsense figures. In this study, both types of objects generated activity in the fusiform gyrus bilaterally; however, tools selectively activated left middle temporal areas and the left premotor area. The premotor area activated in tool naming was also active in a previous study in which subjects imagined grasping objects with the right hand (A. Martin, Haxby, Lalonde, & Ungerleider, 1995). The implication is that sensorimotor circuits involved in grasp programming are activated during the naming of tools, and, hence, that grasp information is part of the semantic representation of tools (see also Grafton, Fadiga, Arbib, & Rizzolatti, 1997). It should be noted that not all neuroimaging studies of tools have described premotor activation. However, there is convergent evidence that whereas the network activated by animals has a bilateral distribution, the network for tools is restricted to the left hemisphere (Cappa, Perani, Schnur, Tettamanti, & Fazio, 1998; Perani et al., 1995; and for lesion evidence, Tranel, Damasio, & Damasio, 1997).

Recent studies have also shed light on why the left prefrontal region (BA 44, 45, 46, 47) is frequently activated in semantic tasks. It appears that these areas are not, as once thought, involved in the storage of semantic information (e.g., Petersen, Fox, Posner, Mintun, & Raichle, 1988). Rather, prefrontal activation in semantic tasks varies as a function of task difficulty and is more likely related to control processes, such as working memory (Murtha et al., 1999) and competitive selection (Thompson-Schill, D’Esposito, Aguirre, & Farah, 1997).

The Comprehension of Spoken Language

The comprehension of spoken input is a complex process, involving (a) analysis of speech sounds via the extraction of spectral and temporal cues from the speech signal; (b) use of the products of this analysis to access entries in the internal lexicon and ultimately the meanings of words; (c) syntactic analysis if the input is sentential; and (d) interpretation of the meaning of the sentence, a process that requires the integration of several different forms of information (lexical, syntactic, and semantic).

Speech Perception and Word Deafness

Speech poses a number of problems for the listener. Much of the information is carried by rapid changes in the speech signal, such as the cues that differentiate consonants. Also, the information in the speech stream is transient; although readers can reexamine a word (and there is evidence that they do; see, e.g., Altmann, Garnham, & Dennis, 1992), listeners cannot, particularly if the current word is followed (and thereby overwritten) by others. There are additional difficulties, identified in the literature as the segmentation and invariance problems. The segmentation problem refers to the fact that there are often no spaces (no silent gaps) to signal the boundaries between the words of an utterance. There is evidence that consistency in the stress patterns of words may be utilized for this purpose; for example, English generally places stress on the first syllable of nouns, a pattern that infants become familiar with during the first year of life (Jusczyk, Cutler, & Redanz, 1993). The invariance problem refers to the variability of the signals associated with a given phoneme, which reflect the influence of the phonemes that surround it (coarticulation). This variation is evident in spectrographic displays of speech stimuli, where it can be seen, for example, that the sound associated with the /b/ in about is not the same as that of the /b/ in table. Although speech perception has been studied extensively, there is no general agreement on how the human auditory system copes with these complexities (see Miller & Eimas, 1995, for a review).

There is also no consensus on the mechanisms that underlie the ability to identify spoken words. Some investigators claim that words are recognized on the basis of auditory properties alone (e.g., Klatt, 1989); others maintain that word perception is phonetically based, or that it utilizes abstract phonological representations, or relies on the analysis of syllabic units (see Miller & Eimas, 1995, for a summary of these views). Across languages, word recognition may depend on different aspects of auditory input; for example, some languages (Thai, Mandarin Chinese) utilize tonal information, although most do not.

There is experimental evidence that contextual information is influential in the perception of speech. For example, partial phonological information is more likely to be filled in by the perceiver if the absent phoneme (replaced by a cough or noise) is part of a word as opposed to a nonword (Ganong, 1980; Warren, 1970). This suggests that there is feedback from partially activated lexical representations to prelexical stages of analysis of the input signal; some models of speech perception incorporate such effects (e.g., McClelland & Elman, 1986), but others manage to accommodate this result without adopting this assumption (Miller & Eimas, 1995). There is also evidence that contextual information from other words in the sentence facilitates word recognition. Listeners are quicker to recognize a previously identified target word in a sentence context if the syntax is correct and the sentence is semantically coherent (e.g., Marslen-Wilson & Tyler, 1980). On the other hand, it has also been shown that word recognition does not require either full or accurate input; remarkably, the identification of a word is seldom impeded by errors on the part of the speaker or partial masking by noise (e.g., Miller & Eimas). It appears that words are activated in parallel on the basis of partial information (e.g., hearing the sound “sih” will activate city, citizen, silly, simple, etc.) and some words can be recognized before they are completed (the cohort theory; Marslen-Wilson & Welsh, 1978) although the presence of activated neighbors can also slow recognition of a given word (Luce, Pisoni, & Goldinger, 1990).
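The parallel-activation idea can be made concrete with a small sketch. The Python fragment below is only an illustration of the cohort notion described above, not an implementation of the Marslen-Wilson and Welsh model: the five-word lexicon, the rough ASCII phoneme codes, and the function names `cohort` and `recognition_point` are all assumptions introduced here. It shows how the set of candidate words shrinks as more of the input is heard, and how a word can become the sole candidate before its final phoneme arrives.

```python
# Toy lexicon: word -> rough phoneme sequence.
# The transcriptions are illustrative assumptions, not a phonetic standard.
LEXICON = {
    "city":    ("s", "I", "t", "i"),
    "citizen": ("s", "I", "t", "I", "z", "@", "n"),
    "silly":   ("s", "I", "l", "i"),
    "simple":  ("s", "I", "m", "p", "@", "l"),
    "table":   ("t", "eI", "b", "@", "l"),
}


def cohort(heard, lexicon=LEXICON):
    """Return (sorted) the words whose initial phonemes match the input so far."""
    heard = tuple(heard)
    return sorted(w for w, ph in lexicon.items() if ph[:len(heard)] == heard)


def recognition_point(word, lexicon=LEXICON):
    """Number of phonemes after which `word` is the only remaining candidate.

    Returns None if the word never becomes unique (e.g., it is a prefix
    of another entry in the lexicon).
    """
    phonemes = lexicon[word]
    for n in range(1, len(phonemes) + 1):
        if cohort(phonemes[:n], lexicon) == [word]:
            return n
    return None


if __name__ == "__main__":
    # Hearing "sih" activates every word that begins with those sounds.
    print(cohort(["s", "I"]))
    # "simple" becomes unique at its third phoneme, before the word is complete.
    print(recognition_point("simple"), "of", len(LEXICON["simple"]))
```

In this toy lexicon, simple is identifiable after three of its six phonemes, whereas city must be heard in full because citizen shares its first three sounds. The sketch treats activation as all-or-none and ignores word frequency, so it does not capture the competition effects reported by Luce et al. (1990).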

Whatever the nature of the mechanisms for speech perception and lexical access, it is clear that they are supported by portions of the temporal lobe, the left temporal lobe in particular. A portion of the superior temporal gyrus (Brodmann’s area 41, or Heschl’s gyrus), which extends medially into the Sylvian fissure, is the location of A1 (primary auditory cortex), the brain region that receives input from earlier processing stations in the auditory pathway. Primary auditory cortex is surrounded by auditory association cortex, where the incoming signals undergo additional processing and identification. Evidence from functional imaging studies indicates that the left temporal lobe is more sensitive than the right when responding to auditory stimuli of brief temporal duration, an important characteristic of speech (e.g., Fitch, Miller, & Tallal, 1997). It is sometimes suggested that the left hemisphere’s dominant role in language function is an outgrowth of its capacity to process rapid changes in auditory signals (J. Schwartz & Tallal, 1980).

Lesions in the left temporal auditory association area (Wernicke’s area), which lies posterior and lateral to A1, give rise to an array of deficits that include impaired comprehension of spoken language as well as disturbances in production (see later discussion of Wernicke’s aphasia). More rare are cases in which the impairment is limited to speech perception. This disorder, known as pure word deafness, results from smaller lesions in the left temporal lobe, or in some cases from damage to the temporal lobes bilaterally (e.g., Takahashi et al., 1992; Yaqub, Gascon, Al-Nosha, & Whitaker, 1988). Both types of lesion are likely to cut off auditory input to the left temporal lobe; the input pathways include fibers from the thalamus (medial geniculate nucleus) and from the homologous area in the right hemisphere that projects to the left temporal lobe via the corpus callosum. One illustration of the effect of bilateral lesions is the case reported by Praamstra, Hagoort, Maasen, and Crul (1991). This patient initially manifested Wernicke’s aphasia as a result of a left temporal lesion; several years later, he suffered a right temporal lesion, which produced word deafness.

Patients with pure word deafness retain the ability to speak and to understand written language; and while they continue to perceive spoken language as such, they have great difficulty comprehending speech and repeating what is said to them. As an English-speaking word-deaf patient remarked to one of us, it seemed to him that people were speaking in a foreign language, and that his ears were disconnected from his voice (Saffran, Marin, & Yeni-Komshian, 1976). Word-deaf patients retain the ability to perceive vowels, which are long lasting and constant in form; but they perform poorly on tests of phoneme discrimination and identification that involve consonants. Consonants involve signals that undergo changes in frequency, and some include components that are very brief in duration.

Although word-deaf patients are severely impaired under most conditions, their comprehension of spoken language improves somewhat if they are allowed to read lips, or if other contextual information is provided (e.g., Saffran et al., 1976; Shindo, Kaga, & Tanaka, 1991). These effects suggest that top-down processes (information fed back from word representations) can be used to disambiguate a signal that is noisy or degraded. Some of these patients have no difficulty identifying nonspeech sounds, such as those produced by animals or musical instruments (e.g., Saffran et al.). Failure to recognize nonspeech stimuli is termed auditory agnosia, a condition generally associated with right temporal lobe lesions (e.g., Fujii et al., 1990). For a recent review and case summaries, see Simons and Lambon Ralph (1999).

Lexical Comprehension and Wernicke’s Aphasia

To comprehend a word, it is necessary for the input signal to contact the appropriate entry in the mental lexicon. The lexical entry provides access to the word’s meaning and to its grammatical properties (whether it is a noun or a verb; if a verb, whether it is transitive or intransitive, etc.), information that is required for the computation of syntactic structure.

Word comprehension failure is a cardinal feature of the syndrome known as Wernicke’s aphasia. These patients typically have large left temporal lobe lesions including not just the classical Wernicke’s area (posterior part of superior temporal gyrus) but also the posterior middle temporal gyrus and underlying white matter (Dronkers et al., 2000). Recent evidence suggests that a lesion restricted to Wernicke’s area will not produce a chronic Wernicke’s aphasia (Basso, Lecours, Moraschini, & Vanier, 1985; Dronkers et al.).

Wernicke’s aphasia is far more common than word deafness, and its impact on language functions is more extensive. The comprehension problem is not limited to spoken language; reading comprehension is usually affected as well, although there are cases in which the patient does much better with printed than spoken input (e.g., Ellis, Miller, & Sin, 1983; Heilman, Rothi, Campanella, & Wolfson, 1979; Hillis, Boatman, Hart, & Gordon, 1999). In addition, there are deficits in language production, written as well as spoken. These patients tend to have difficulty finding the right words. Instead, they may substitute words that are related in meaning, or they may rely on pronouns and general terms such as place and thing. Their production may also be riddled with nonwords (neologisms). In extreme cases, speech is reduced to semantic or neologistic jargon, which is difficult if not impossible to comprehend.

The nature of the word comprehension deficit in Wernicke’s aphasia is not well understood. Although it was earlier thought that the deficit reflects impaired phoneme perception (e.g., Luria, 1966), it is now recognized that there is little correlation between phoneme perception deficits and auditory comprehension impairments in aphasics (Blumstein, 1994). For example, Blumstein and her colleagues have demonstrated that patients with preserved phoneme discrimination may nevertheless be impaired in identifying speech sounds (Blumstein, Cooper, Zurif, & Caramazza, 1977; Blumstein, Tartter, Nigro, & Statlender, 1984), implying that comprehension may falter as a consequence of the speech input’s failing to contact phonemic representations. The evidence is less than definitive, however, since the phonemic identification task requires matching the spoken input to a printed representation, and it is not clear that all the patients tested in this way have been capable of meeting the task demands.

Another likely locus for impaired word comprehension is in the access to semantic representations. Word comprehension is most often assessed by means of word-picture matching tests. Wernicke’s aphasics perform poorly on such tests, and they have particular difficulty when the foils are phonologically similar to the target or belong to the same semantic category. The latter effect implicates semantic processing: If the patient were simply unable to perceive the speech sounds or map them onto a phonemic representation, the semantic similarity of the foils would not matter. That it does matter indicates that the perceived word is not accessing the full set of semantic features that distinguish one category member from another; recall that the same pattern was evident in semantic dementia.

One factor that differentiates at least some aphasics from semantic dementia patients is the aphasics’ relatively well preserved performance on tests that utilize pictorial material exclusively. A task of this nature is the Pyramids and Palm Trees test developed by Howard and Patterson (1992), in which the subject is required to match one of two pictures to a third on the basis of conceptual similarity (e.g., a palm or pine tree to a pyramid). The same task can be administered using word stimuli. Patients who do well on the picture version of the test but poorly on comparable word-based assessments clearly have difficulty accessing meaning from words. The neurological basis for such word-only semantic deficits has not been established. However, Hart and Gordon (1990) performed an anatomical study on 3 aphasic patients with unusually pure semantic comprehension deficits, manifested on tests with spoken and written words and with pictures. Lesion overlap was found in the posterior temporal (BA 22, 21) and inferior parietal (BA 39, 40) regions (Hart & Gordon). It is possible that lesions here disrupt pathways between regions concerned with phonemic or lexical aspects of word recognition and those where semantic information is stored.

The fact that Wernicke’s patients tend to perform better on picture-word matching tests when the foils are unrelated to the target suggests that they retain some knowledge of the meaning of the word. Other tasks provide additional evidence along these lines. One paradigm used to demonstrate partial preservation of semantic information depends on activation mediated by relationships among words, or priming. The subject hears or sees a word (the prime) that bears a relationship to a second word (the target); the task entails a response to the target, such as lexical decision (deciding whether it is a word or not) or pronunciation (if the word is written). Presentation of a semantically related prime normally speeds the response to the target word, in comparison to a prime that bears no relationship to the target. Milberg and Blumstein and their colleagues have shown that Wernicke’s aphasics who perform poorly on word comprehension tasks demonstrate semantic priming effects on tasks such as lexical decision (e.g., Milberg & Blumstein, 1981; Milberg, Blumstein, & Dworetzky, 1988).

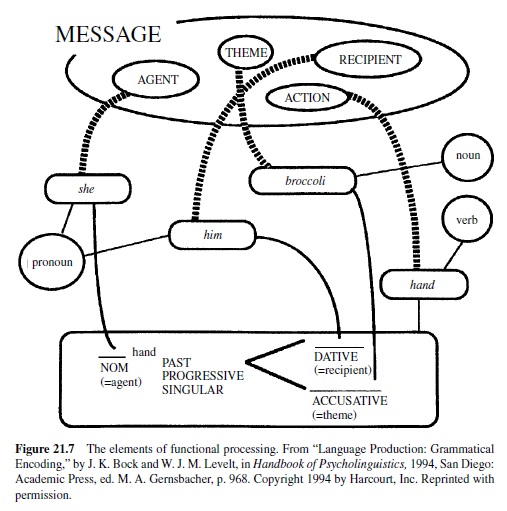

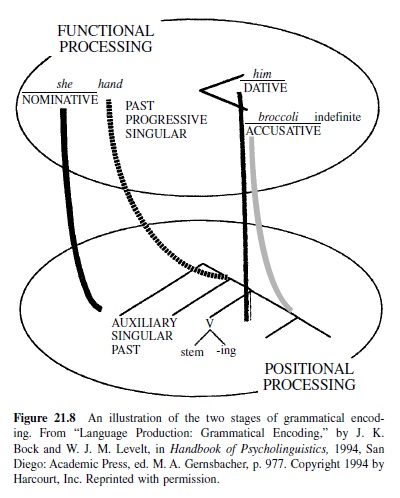

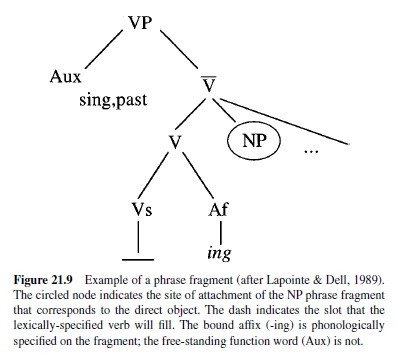

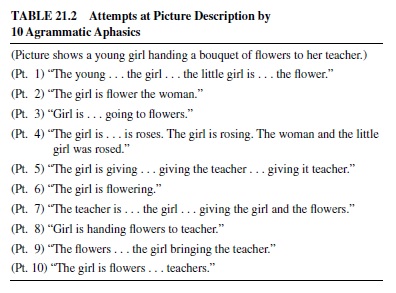

Phonological and Word Processing: Conclusions