View sample psychometric characteristics of assessment procedures research paper. Browse other research paper examples and check the list of psychology research paper topics for more inspiration. If you need a psychology research paper written according to all the academic standards, you can always turn to our experienced writers for help. This is how your paper can get an A! Feel free to contact our custom writing service for professional assistance. We offer high-quality assignments for reasonable rates.

“Whenever you can, count!” advised Sir Francis Galton (as cited in Newman, 1956, p. 1169), the father of contemporary psychometrics. Galton is credited with originating the concepts of regression, the regression line, and regression to the mean, as well as developing the mathematical formula (with Karl Pearson) for the correlation coefficient. He was a pioneer in efforts to measure physical, psychophysical, and mental traits, offering the first opportunity for the public to take tests of various sensory abilities and mental capacities in his London Anthropometric Laboratory. Galton quantified everything from fingerprint characteristics to variations in weather conditions to the number of brush strokes in two portraits for which he sat. At scientific meetings, he was known to count the number of times per minute members of the audience fidgeted, computing an average and deducing that the frequency of fidgeting was inversely associated with level of audience interest in the presentation.

Academic Writing, Editing, Proofreading, And Problem Solving Services

Get 10% OFF with 24START discount code

Of course, the challenge in contemporary assessment is to know what to measure, how to measure it, and whether the measurements are meaningful. In a definition that still remains appropriate, Galton defined psychometry as the “art of imposing measurement and number upon operations of the mind” (Galton, 1879, p. 149). Derived from the Greek psyche (ψνχή, meaning soul) and metro ( μετρώ , meaning measure), psychometry may best be considered an evolving set of scientific rules for the development and application of psychological tests. Construction of psychological tests is guided by psychometric theories in the midst of a paradigm shift. Classical test theory, epitomized by Gulliksen’s (1950) Theory of Mental Tests, has dominated psychological test development through the latter two thirds of the twentieth century. Item response theory, beginning with the work of Rasch (1960) and Lord and Novick’s (1968) Statistical Theories of Mental Test Scores, is growing in influence and use, and it has recently culminated in the “new rules of measurement” (Embretson, 1995).

In this research paper, the most salient psychometric characteristics of psychological tests are described, incorporating elements from both classical test theory and item response theory. Guidelines are provided for the evaluation of test technical adequacy.The guidelines may be applied to a wide array of psychological tests, including those in the domains of academic achievement, adaptive behavior, cognitive-intellectual abilities, neuropsychological functions, personality and psychopathology, and personnel selection. The guidelines are based in part upon conceptual extensions of the Standards for Educational and Psychological Testing (American Educational Research Association, 1999) and recommendations by such authorities as Anastasi and Urbina (1997), Bracken (1987), Cattell (1986), Nunnally and Bernstein (1994), and Salvia and Ysseldyke (2001).

Psychometric Theories

The psychometric characteristics of mental tests are generally derived from one or both of the two leading theoretical approaches to test construction: classical test theory and item response theory. Although it is common for scholars to contrast these two approaches (e.g., Embretson & Hershberger, 1999), most contemporary test developers use elements from both approaches in a complementary manner (Nunnally & Bernstein, 1994).

Classical Test Theory

Classical test theory traces its origins to the procedures pioneered by Galton, Pearson, Spearman, and E. L. Thorndike, and it is usually defined by Gulliksen’s (1950) classic book. Classical test theory has shaped contemporary investigations of test score reliability, validity, and fairness, as well as the widespread use of statistical techniques such as factor analysis.

At its heart, classical test theory is based upon the assumption that an obtained test score reflects both true score and error score. Test scores may be expressed in the familiar equation

Observed Score = True Score + Error

In this framework, the observed score is the test score that was actually obtained.The true scoreis thehypotheticalamount of the designated trait specific to the examinee, a quantity that would be expected if the entire universe of relevant content were assessed or if the examinee were tested an infinite number of times without any confounding effects of such things as practice or fatigue. Measurement error is defined as the difference between true score and observed score. Error is uncorrelated with the true score and with other variables, and it is distributed normally and uniformly about the true score. Because its influence is random, the average measurement error across many testing occasions is expected to be zero.

Many of the key elements from contemporary psychometrics may be derived from this core assumption. For example, internal consistency reliability is a psychometric function of random measurement error, equal to the ratio of the true score variance to the observed score variance. By comparison, validity depends on the extent of nonrandom measurement error. Systematic sources of measurement error negatively influence validity, because error prevents measures from validly representing what they purport to assess. Issues of test fairness and bias are sometimes considered to constitute a special case of validity in which systematic sources of error across racial and ethnic groups constitute threats to validity generalization.As an extension of classical test theory, generalizability theory (Cronbach, Gleser, Nanda, & Rajaratnam, 1972; Cronbach, Rajaratnam, & Gleser, 1963; Gleser, Cronbach, & Rajaratnam, 1965) includes a family of statistical procedures that permits the estimation and partitioning of multiple sources of error in measurement. Generalizability theory posits that a response score is defined by the specific conditions under which it is produced, such as scorers, methods, settings, and times (Cone, 1978); generalizability coefficients estimate the degree to which response scores can be generalized across different levels of the same condition.

Classical test theory places more emphasis on test score properties than on item parameters. According to Gulliksen (1950), the essential item statistics are the proportion of persons answering each item correctly (item difficulties, or p values), the point-biserial correlation between item and total score multiplied by the item standard deviation (reliability index), and the point-biserial correlation between item and criterion score multiplied by the item standard deviation (validity index).

Hambleton, Swaminathan, and Rogers (1991) have identified four chief limitations of classical test theory: (a) It has limited utility for constructing tests for dissimilar examinee populations (sample dependence); (b) it is not amenable for making comparisons of examinee performance on different tests purporting to measure the trait of interest (test dependence); (c) it operates under the assumption that equal measurement error exists for all examinees; and (d) it provides no basis for predicting the likelihood of a given response of an examinee to a given test item, based upon responses to other items. In general, with classical test theory it is difficult to separate examinee characteristics from test characteristics. Item response theory addresses many of these limitations.

Item Response Theory

Item response theory (IRT) may be traced to two separate lines of development. Its origins may be traced to the work of Danish mathematician Georg Rasch (1960), who developed a family of IRT models that separated person and item parameters. Rasch influenced the thinking of leading European and American psychometricians such as Gerhard Fischer and Benjamin Wright. A second line of development stemmed from research at the Educational Testing Service that culminated in Frederick Lord and Melvin Novick’s (1968) classic textbook, including four chapters on IRT written by Allan Birnbaum. This book provided a unified statistical treatment of test theory and moved beyond Gulliksen’s earlier classical test theory work.

IRT addresses the issue of how individual test items and observations map in a linear manner onto a targeted construct (termed latent trait, with the amount of the trait denoted by ). The frequency distribution of a total score, factor score, or other trait estimates is calculated on a standardized scale with a mean of 0 and a standard deviation of 1. An item characteristic curve (ICC) can then be created by plotting the proportion of people who have a score at each level of , so that the probability of a person’s passing an item depends solely on the ability of that person and the difficulty of the item. This item curve yields several parameters, including item difficulty and item discrimination. Item difficulty is the location on the latent trait continuum corresponding to chance responding. Item discrimination is the rate or slope at which the probability of success changes with trait level (i.e., the ability of the item to differentiate those with more of the trait from those with less). A third parameter denotes the probability of guessing. IRT based on the one-parameter model (i.e., item difficulty) assumes equal discrimination for all items and negligible probability of guessing and is generally referred to as the Rasch model. Two-parameter models (those that estimate both item difficulty and discrimination) and three-parameter models (those that estimate item difficulty, discrimination, and probability of guessing) may also be used.

IRT posits several assumptions: (a) unidimensionality and stability of the latent trait, which is usually estimated from an aggregation of individual item; (b) local independence of items,meaningthattheonlyinfluenceonitemresponsesisthe latent trait and not the other items; and (c) item parameter invariance, which means that item properties are a function of the item itself rather than the sample, test form, or interaction between item and respondent. Knowles and Condon (2000) argue that these assumptions may not always be made safely. Despite this limitation, IRT offers technology that makes test development more efficient than classical test theory.

Sampling and Norming

Under ideal circumstances, individual test results would be referenced to the performance of the entire collection of individuals (target population) for whom the test is intended. However, it is rarely feasible to measure performance of every member in a population. Accordingly, tests are developed through sampling procedures, which are designed to estimate the score distribution and characteristics of a target population by measuring test performance within a subset of individuals selected from that population. Test results may then be interpreted with reference to sample characteristics, which are presumed to accurately estimate population parameters. Most psychological tests are norm referenced or criterion referenced. Norm-referenced test scores provide information about an examinee’s standing relative to the distribution of test scores found in an appropriate peer comparison group. Criterion-referenced tests yield scores that are interpreted relative to predetermined standards of performance, such as proficiency at a specific skill or activity of daily life.

Appropriate Samples for Test Applications

When a test is intended to yield information about examinees’ standing relative to their peers, the chief objective of sampling should be to provide a reference group that is representative of the population for whom the test was intended. Sample selection involves specifying appropriate stratification variables for inclusion in the sampling plan. Kalton (1983) notes that two conditions need to be fulfilled for stratification: (a) The population proportions in the strata need to be known, and (b) it has to be possible to draw independent samples from each stratum. Population proportions for nationally normed tests are usually drawn from Census Bureau reports and updates.

The stratification variables need to be those that account for substantial variation in test performance; variables unrelated to the construct being assessed need not be included in the sampling plan. Variables frequently used for sample stratification include the following:

- Race (White, African American, Asian/Pacific Islander, Native American, Other).

- Ethnicity (Hispanic origin, non-Hispanic origin).

- Geographic Region (Midwest, Northeast, South, West).

- Community Setting (Urban/Suburban, Rural).

- Classroom Placement (Full-Time Regular Classroom, Full-Time Self-Contained Classroom, Part-Time Special Education Resource, Other).

- SpecialEducationServices(LearningDisability,Speechand Language Impairments, Serious Emotional Disturbance, Mental Retardation, Giftedness, English as a Second Language,BilingualEducation,andRegularEducation).

- Parent Educational Attainment (Less Than High School Degree,HighSchoolGraduateorEquivalent,SomeCollege or Technical School, Four or MoreYears of College).

The most challenging of stratification variables is socioeconomic status (SES), particularly because it tends to be associated with cognitive test performance and it is difficult to operationally define. Parent educational attainment is often used as an estimate of SES because it is readily available and objective, and because parent education correlates moderately with family income. Parent occupation and income are also sometimes combined as estimates of SES, although income information is generally difficult to obtain. Community estimates of SES add an additional level of sampling rigor, because the community in which an individual lives may be a greater factor in the child’s everyday life experience than his or her parents’ educational attainment. Similarly, the number of people residing in the home and the number of parents (one or two) heading the family are both factors that can influence a family’s socioeconomic condition. For example, a family of three that has an annual income of $40,000 may have more economic viability than a family of six that earns the same income. Also, a college-educated single parent may earn less income than two less educated cohabiting parents. The influences of SES on construct development clearly represent an area of further study, requiring more refined definition.

When test users intend to rank individuals relative to the special populations to which they belong, it may also be desirable to ensure that proportionate representation of those special populations are included in the normative sample (e.g., individuals who are mentally retarded, conduct disordered, or learning disabled). Millon, Davis, and Millon (1997) noted that tests normed on special populations may require the use of base rate scores rather than traditional standard scores, because assumptions of a normal distribution of scores often cannot be met within clinical populations.

Aclassic example of an inappropriate normative reference sample is found with the original Minnesota Multiphasic Personality Inventory (MMPI; Hathaway & McKinley, 1943), which was normed on 724 Minnesota white adults who were, for the most part, relatives or visitors of patients in the University of Minnesota Hospitals. Accordingly, the original MMPI reference group was primarily composed of Minnesota farmers! Fortunately, the MMPI-2 (Butcher, Dahlstrom, Graham, Tellegen, & Kaemmer, 1989) has remediated this normative shortcoming.

Appropriate Sampling Methodology

One of the principal objectives of sampling is to ensure that each individual in the target population has an equal and independent chance of being selected. Sampling methodologies include both probability and nonprobability approaches, which have different strengths and weaknesses in terms of accuracy, cost, and feasibility (Levy & Lemeshow, 1999).

Probability sampling is a random selection approach that permits the use of statistical theory to estimate the properties of sample estimators. Probability sampling is generally too expensive for norming educational and psychological tests, but it offers the advantage of permitting the determination of the degree of sampling error, such as is frequently reported with the results of most public opinion polls. Sampling error may be defined as the difference between a sample statistic and its corresponding population parameter. Sampling error is independent from measurement error and tends to have a systematic effect on test scores, whereas the effects of measurement error by definition is random. When sampling error in psychological test norms is not reported, the estimate of the true score will always be less accurate than when only measurement error is reported.

A probability sampling approach sometimes employed in psychological test norming is known as multistage stratified random cluster sampling; this approach uses a multistage sampling strategy in which a large or dispersed population is divided into a large number of groups, with participants in the groups selected via random sampling. In two-stage cluster sampling, each group undergoes a second round of simple random sampling based on the expectation that each cluster closely resembles every other cluster. For example, a set of schools may constitute the first stage of sampling, with students randomly drawn from the schools in the second stage. Cluster sampling is more economical than random sampling, but incremental amounts of error may be introduced at each stage of the sample selection. Moreover, cluster sampling commonly results in high standard errors when cases from a cluster are homogeneous (Levy & Lemeshow, 1999). Sampling error can be estimated with the cluster sampling approach, so long as the selection process at the various stages involves random sampling.

In general, sampling error tends to be largest when nonprobability-sampling approaches, such as convenience sampling or quota sampling, are employed. Convenience samples involve the use of a self-selected sample that is easily accessible (e.g., volunteers). Quota samples involve the selection by a coordinator of a predetermined number of cases with specific characteristics. The probability of acquiring an unrepresentative sample is high when using nonprobability procedures. The weakness of all nonprobability-sampling methods is that statistical theory cannot be used to estimate sampling precision, and accordingly sampling accuracy can only be subjectively evaluated (e.g., Kalton, 1983).

Adequately Sized Normative Samples

How large should a normative sample be? The number of participants sampled at any given stratification level needs to be sufficiently large to provide acceptable sampling error, stable parameter estimates for the target populations, and sufficient power in statistical analyses. As rules of thumb, group-administeredtestsgenerallysampleover10,000participants per age or grade level, whereas individually administered tests typically sample 100 to 200 participants per level (e.g., Robertson, 1992). In IRT, the minimum sample size is related to the choice of calibration model used. In an integrative review, Suen (1990) recommended that a minimum of 200 participants be examined for the one-parameter Rasch model, that at least 500 examinees be examined for the twoparameter model, and that at least 1,000 examinees be examined for the three-parameter model.

The minimum number of cases to be collected (or clusters to be sampled) also depends in part upon the sampling procedure used, and Levy and Lemeshow (1999) provide formulas for a variety of sampling procedures. Up to a point, the larger the sample, the greater the reliability of sampling accuracy. Cattell (1986) noted that eventually diminishing returns can be expected when sample sizes are increased beyond a reasonable level.

The smallest acceptable number of cases in a sampling plan may also be driven by the statistical analyses to be conducted. For example, Zieky (1993) recommended that a minimum of 500 examinees be distributed across the two groups compared in differential item function studies for groupadministered tests. For individually administered tests, these types of analyses require substantial oversampling of minorities.With regard to exploratory factor analyses, Riese,Waller, and Comrey (2000) have reviewed the psychometric literature and concluded that most rules of thumb pertaining to minimum sample size are not useful. They suggest that when communalities are high and factors are well defined, sample sizes of 100 are often adequate, but when communalities are low, the number of factors is large, and the number of indicators per factor is small, even a sample size of 500 may be inadequate. As with statistical analyses in general, minimal acceptable sample sizes should be based on practical considerations, including such considerations as desired alpha level, power, and effect size.

Sampling Precision

As we have discussed, sampling error cannot be formally estimated when probability sampling approaches are not used, and most educational and psychological tests do not employ probability sampling. Given this limitation, there are no objective standards for the sampling precision of test norms. Angoff (1984) recommended as a rule of thumb that the maximum tolerable sampling error should be no more than 14% of the standard error of measurement. He declined, however, to provide further guidance in this area: “Beyond the general consideration that norms should be as precise as their intended use demands and the cost permits, there is very little else that can be said regarding minimum standards for norms reliability” (p. 79).

In the absence of formal estimates of sampling error, the accuracy of sampling strata may be most easily determined by comparing stratification breakdowns against those available for the target population. The more closely the sample matches population characteristics, the more representative is a test’s normative sample. As best practice, we recommend that test developers provide tables showing the composition of the standardization sample within and across all stratification criteria (e.g., Percentages of the Normative Sample according to combined variables such as Age by Race by Parent Education). This level of stringency and detail ensures that important demographic variables are distributed proportionately across other stratifying variables according to population proportions. The practice of reporting sampling accuracy for single stratification variables “on the margins” (i.e., by one stratification variable at a time) tends to conceal lapses in sampling accuracy. For example, if sample proportions of low socioeconomic status are concentrated in minority groups (instead of being proportionately distributed across majority and minority groups), then the precision of the sample has been compromised through the neglect of minority groups with high socioeconomic status and majority groups with low socioeconomic status. The more the sample deviates from population proportions on multiple stratifications, the greater the effect of sampling error.

Manipulation of the sample composition to generate norms is often accomplished through sample weighting (i.e., application of participant weights to obtain a distribution of scores that is exactly proportioned to the target population representations). Weighting is more frequently used with group-administered educational tests than psychological tests because of the larger size of the normative samples. Educational tests typically involve the collection of thousands of cases, with weighting used to ensure proportionate representation. Weighting is less frequently used with psychological tests, and its use with these smaller samples may significantly affect systematic sampling error because fewer cases are collected and because weighting may thereby differentially affect proportions across different stratification criteria, improving one at the cost of another. Weighting is most likely to contribute to sampling error when a group has been inadequately represented with too few cases collected.

Recency of Sampling

How old can norms be and still remain accurate? Evidence from the last two decades suggests that norms from measures of cognitive ability and behavioral adjustment are susceptible to becoming soft or stale (i.e., test consumers should use older norms with caution). Use of outdated normative samples introduces systematic error into the diagnostic process and may negatively influence decision-making, such as by denying services (e.g., for mentally handicapping conditions) to sizable numbers of children and adolescents who otherwise would have been identified as eligible to receive services. Sample recency is an ethical concern for all psychologists who test or conduct assessments. The American Psychological Association’s (1992) Ethical Principles direct psychologists to avoid basing decisions or recommendations on results that stem from obsolete or outdated tests.

The problem of normative obsolescence has been most robustly demonstrated with intelligence tests. The Flynn effect (Herrnstein & Murray, 1994) describes a consistent pattern of population intelligence test score gains over time and across nations (Flynn, 1984, 1987, 1994, 1999). For intelligence tests, the rate of gain is about one third of an IQ point per year (3 points per decade), which has been a roughly uniform finding over time and for all ages (Flynn, 1999). The Flynn effect appears to occur as early as infancy (Bayley, 1993; S. K. Campbell, Siegel, Parr, & Ramey, 1986) and continues through the full range of adulthood (Tulsky & Ledbetter, 2000). The Flynn effect implies that older test norms may yield inflated scores relative to current normative expectations. For example, the Wechsler Intelligence Scale for Children—Revised (WISC-R; Wechsler, 1974) currently yields higher full scale IQs (FSIQs) than the WISC-III (Wechsler, 1991) by about 7 IQ points.

Systematic generational normative change may also occur in other areas of assessment. For example, parent and teacher reports on the Achenbach system of empirically based behavioral assessments show increased numbers of behavior problems and lower competence scores in the general population of children and adolescents from 1976 to 1989 (Achenbach & Howell, 1993). Just as the Flynn effect suggests a systematic increase in the intelligence of the general population over time, this effect may suggest a corresponding increase in behavioral maladjustment over time.

How often should tests be revised? There is no empirical basis for making a global recommendation, but it seems reasonable to conduct normative updates, restandardizations, or revisions at time intervals corresponding to the time expected to produce one standard error of measurement (SEM) of change. For example, given the Flynn effect and a WISC-III FSIQ SEM of 3.20, one could expect about 10 to 11 years should elapse before the test’s norms would soften to the magnitude of one SEM.

Calibration and Derivation of Reference Norms

In this section, several psychometric characteristics of test construction are described as they relate to building individual scales and developing appropriate norm-referenced scores. Calibration refers to the analysis of properties of gradation in a measure, defined in part by properties of test items. Norming is the process of using scores obtained by an appropriate sample to build quantitative Bibliography: that can be effectively used in the comparison and evaluation of individual performances relative to typical peer expectations.

Calibration

The process of item and scale calibration dates back to the earliest attempts to measure temperature. Early in the seventeenth century, there was no method to quantify heat and cold except through subjective judgment. Galileo and others experimented with devices that expanded air in glass as heat increased; use of liquid in glass to measure temperature was developed in the 1630s. Some two dozen temperature scales were available for use in Europe in the seventeenth century, and each scientist had his own scales with varying gradations and reference points. It was not until the early eighteenth century that more uniform scales were developed by Fahrenheit, Celsius, and de Réaumur.

The process of calibration has similarly evolved in psychological testing. In classical test theory, item difficulty is judged by the p value, or the proportion of people in the sample that passes an item. During ability test development, items are typically ranked by p value or the amount of the trait being measured. The use of regular, incremental increases in item difficulties provides a methodology for building scale gradations. Item difficulty properties in classical test theory are dependent upon the population sampled, so that a sample with higher levels of the latent trait (e.g., older children on a set of vocabulary items) would show different item properties (e.g., higher p values) than a sample with lower levels of the latent trait (e.g., younger children on the same set of vocabulary items).

In contrast, item response theory includes both item properties and levels of the latent trait in analyses, permitting item calibration to be sample-independent. The same item difficulty and discrimination values will be estimated regardless of trait distribution. This process permits item calibration to be “sample-free,” according to Wright (1999), so that the scale transcends the group measured. Embretson (1999) has stated one of the new rules of measurement as “Unbiased estimates of item properties may be obtained from unrepresentative samples” (p. 13).

Item response theory permits several item parameters to be estimated in the process of item calibration. Among the indexes calculated in widely used Rasch model computer programs (e.g., Linacre & Wright, 1999) are item fit-to-model expectations, item difficulty calibrations, item-total correlations, and item standard error. The conformity of any item to expectations from the Rasch model may be determined by examining item fit. Items are said to have good fits with typical item characteristic curves when they show expected patterns near to and far from the latent trait level for which they are the best estimates. Measures of item difficulty adjusted for the influence of sample ability are typically expressed in logits, permitting approximation of equal difficulty intervals.

Item and Scale Gradients

The item gradient of a test refers to how steeply or gradually items are arranged by trait level and the resulting gaps that may ensue in standard scores. In order for a test to have adequate sensitivity to differing degrees of ability or any trait being measured, it must have adequate item density across the distribution of the latent trait. The larger the resulting standard score differences in relation to a change in a single raw score point, the less sensitive, discriminating, and effective a test is.

For example, on the Memory subtest of the Battelle Developmental Inventory (Newborg, Stock, Wnek, Guidubaldi, & Svinicki, 1984), a child who is 1 year, 11 months old who earned a raw score of 7 would have performance ranked at the 1st percentile for age, whereas a raw score of 8 leaps to a percentile rank of 74. The steepness of this gradient in the distribution of scores suggests that this subtest is insensitive to even large gradations in ability at this age.

A similar problem is evident on the Motor Quality index of the Bayley Scales of Infant Development–Second Edition Behavior Rating Scale (Bayley, 1993). A 36-month-old child with a raw score rating of 39 obtains a percentile rank of 66. The same child obtaining a raw score of 40 is ranked at the 99th percentile.

As a recommended guideline, tests may be said to have adequate item gradients and item density when there are approximately three items per Rasch logit, or when passage of a single item results in a standard score change of less than one third standard deviation (0.33 SD) (Bracken, 1987; Bracken & McCallum, 1998). Items that are not evenly distributed in terms of the latent trait may yield steeper change gradients that will decrease the sensitivity of the instrument to finer gradations in ability.

Floor and Ceiling Effects

Do tests have adequate breadth, bottom and top? Many tests yield their most valuable clinical inferences when scores are extreme (i.e., very low or very high). Accordingly, tests used for clinical purposes need sufficient discriminating power in the extreme ends of the distributions.

The floor of a test represents the extent to which an individual can earn appropriately low standard scores. For example, an intelligence test intended for use in the identification of individuals diagnosed with mental retardation must, by definition, extend at least 2 standard deviations below normative expectations (IQ < 70). In order to serve individuals with severe to profound mental retardation, test scores must extend even further to more than 4 standard deviations below the normative mean (IQ < 40). Tests without a sufficiently low floor would not be useful for decision-making for more severe forms of cognitive impairment.

A similar situation arises for test ceiling effects. An intelligence test with a ceiling greater than 2 standard deviations above the mean (IQ > 130) can identify most candidates for intellectually gifted programs. To identify individuals as exceptionally gifted (i.e., IQ > 160), a test ceiling must extend more than 4 standard deviations above normative expectations. There are several unique psychometric challenges to extending norms to these heights, and most extended norms are extrapolations based upon subtest scaling for higher ability samples (i.e., older examinees than those within the specified age band).

As a rule of thumb, tests used for clinical decision-making should have floors and ceilings that differentiate the extreme lowest and highest 2% of the population from the middlemost 96% (Bracken, 1987, 1988; Bracken & McCallum, 1998). Tests with inadequate floors or ceilings are inappropriate for assessing children with known or suspected mental retardation, intellectual giftedness, severe psychopathology, or exceptional social and educational competencies.

Derivation of Norm-Referenced Scores

Item response theory yields several different kinds of interpretable scores (e.g., Woodcock, 1999), only some of which are norm-referenced standard scores. Because most test users are most familiar with the use of standard scores, it is the process of arriving at this type of score that we discuss. Transformation of raw scores to standard scores involves a number of decisions based on psychometric science and more than a little art.

The first decision involves the nature of raw score transformations, based upon theoretical considerations (Is the trait being measured thought to be normally distributed?) and examination of the cumulative frequency distributions of raw scores within age groups and across age groups. The objective of this transformation is to preserve the shape of the raw score frequency distribution, including mean, variance, kurtosis, and skewness. Linear transformations of raw scores are based solely on the mean and distribution of raw scores and are commonly used when distributions are not normal; linear transformation assumes that the distances between scale points reflect true differences in the degree of the measured trait present. Area transformations of raw score distributions convert the shape of the frequency distribution into a specified type of distribution. When the raw scores are normally distributed, then they may be transformed to fit a normal curve, with corresponding percentile ranks assigned in a way so that the mean corresponds to the 50th percentile, – 1 SD and + 1 SD correspond to the 16th and 84th percentiles, respectively, and so forth. When the frequency distribution is not normal, it is possible to select from varying types of nonnormal frequency curves (e.g., Johnson, 1949) as a basis for transformation of raw scores, or to use polynomial curve fitting equations.

Following raw score transformations is the process of smoothing the curves. Data smoothing typically occurs within groups and across groups to correct for minor irregularities, presumably those irregularities that result from sampling fluctuations and error. Quality checking also occurs to eliminate vertical reversals (such as those within an age group, from one raw score to the next) and horizonal reversals (such as those within a raw score series, from one age to the next). Smoothing and elimination of reversals serve to ensure that raw score to standard score transformations progress according to growth and maturation expectations for the trait being measured.

Test Score Validity

Validity is about the meaning of test scores (Cronbach & Meehl, 1955). Although a variety of narrower definitions have been proposed, psychometric validity deals with the extent to which test scores exclusively measure their intended psychological construct(s) and guide consequential decisionmaking. This concept represents something of a metamorphosis in understanding test validation because of its emphasis on the meaning and application of test results (Geisinger, 1992). Validity involves the inferences made from test scores and is not inherent to the test itself (Cronbach, 1971).

Evidence of test score validity may take different forms, many of which are detailed below, but they are all ultimately concerned with construct validity (Guion, 1977; Messick, 1995a, 1995b). Construct validity involves appraisal of a body of evidence determining the degree to which test score inferences are accurate, adequate, and appropriate indicators of the examinee’s standing on the trait or characteristic measured by the test. Excessive narrowness or broadness in the definition and measurement of the targeted construct can threaten construct validity. The problem of excessive narrowness, or construct underrepresentation, refers to the extent to which test scores fail to tap important facets of the construct being measured. The problem of excessive broadness, or construct irrelevance, refers to the extent to which test scores are influenced by unintended factors, including irrelevant constructs and test procedural biases.

Construct validity can be supported with two broad classes of evidence: internal and external validation, which parallel the classes of threats to validity of research designs (D. T. Campbell & Stanley, 1963; Cook & Campbell, 1979). Internal evidence for validity includes information intrinsic to the measure itself, including content, substantive, and structural validation. External evidence for test score validity may be drawn from research involving independent, criterion-related data. External evidence includes convergent, discriminant, criterion-related, and consequential validation. This internalexternal dichotomy with its constituent elements represents a distillation of concepts described by Anastasi and Urbina (1997), Jackson (1971), Loevinger (1957), Messick (1995a, 1995b), and Millon et al. (1997), among others.

Internal Evidence of Validity

Internalsourcesofvalidityincludetheintrinsiccharacteristics of a test, especially its content, assessment methods, structure, and theoretical underpinnings. In this section, several sources of evidence internal to tests are described, including content validity, substantive validity, and structural validity.

Content Validity

Content validity is the degree to which elements of a test, ranging from items to instructions, are relevant to and representative of varying facets of the targeted construct (Haynes, Richard, & Kubany, 1995). Content validity is typically established through the use of expert judges who review test content, but other procedures may also be employed (Haynes et al., 1995). Hopkins and Antes (1978) recommended that tests include a table of content specifications, in which the facets and dimensions of the construct are listed alongside the number and identity of items assessing each facet.

Content differences across tests purporting to measure the same construct can explain why similar tests sometimes yield dissimilar results for the same examinee (Bracken, 1988). For example, the universe of mathematical skills includes varying types of numbers (e.g., whole numbers, decimals, fractions), number concepts (e.g., half, dozen, twice, more than), and basic operations (addition, subtraction, multiplication, division). The extent to which tests differentially sample content can account for differences between tests that purport to measure the same construct.

Tests should ideally include enough diverse content to adequately sample the breadth of construct-relevant domains, but content sampling should not be so diverse that scale coherence and uniformity are lost. Construct underrepresentation, stemming from use of narrow and homogeneous content sampling, tends to yield higher reliabilities than tests with heterogeneous item content, at the potential cost of generalizability and external validity. In contrast, tests with more heterogeneous content may show higher validity with the concomitant cost of scale reliability. Clinical inferences made from tests with excessively narrow breadth of content may be suspect, even when other indexes of validity are satisfactory (Haynes et al., 1995).

Substantive Validity

The formulation of test items and procedures based on and consistent with a theory has been termed substantive validity (Loevinger, 1957). The presence of an underlying theory enhances a test’s construct validity by providing a scaffolding between content and constructs, which logically explains relations between elements, predicts undetermined parameters, and explains findings that would be anomalous within another theory (e.g., Kuhn, 1970). As Crocker and Algina (1986) suggest, “psychological measurement, even though it is based on observable responses, would have little meaning or usefulness unless it could be interpreted in light of the underlying theoretical construct” (p. 7).

Many major psychological tests remain psychometrically rigorous but impoverished in terms of theoretical underpinnings. For example, there is conspicuously little theory associated with most widely used measures of intelligence (e.g., the Wechsler scales), behavior problems (e.g., the Child Behavior Checklist), neuropsychological functioning (e.g., the Halstead-Reitan Neuropsychology Battery), and personality and psychopathology (the MMPI-2). There may be some post hoc benefits to tests developed without theories; as observed by Nunnally and Bernstein (1994), “Virtually every measure that became popular led to new unanticipated theories” (p. 107).

Personality assessment has taken a leading role in theorybased test development, while cognitive-intellectual assessment has lagged. Describing best practices for the measurement of personality some three decades ago, Loevinger (1972) commented, “Theory has always been the mark of a mature science. The time is overdue for psychology, in general, and personality measurement, in particular, to come of age” (p. 56). In the same year, Meehl (1972) renounced his former position as a “dustbowl empiricist” in test development:

I now think that all stages in personality test development, from initial phase of item pool construction to a late-stage optimized clinical interpretive procedure for the fully developed and “validated” instrument, theory—and by this I mean all sorts of theory, including trait theory, developmental theory, learning theory, psychodynamics, and behavior genetics—should play an important role. . . . [P]sychology can no longer afford to adopt psychometric procedures whose methodology proceeds with almost zero reference to what bets it is reasonable to lay upon substantive personological horses. (pp. 149–151)

Leading personality measures with well-articulated theories include the “Big Five” factors of personality and Millon’s “three polarity” bioevolutionary theory. Newer intelligence tests based on theory such as the Kaufman Assessment Battery for Children (Kaufman & Kaufman, 1983) and Cognitive Assessment System (Naglieri & Das, 1997) represent evidence of substantive validity in cognitive assessment.

Structural Validity

Structural validity relies mainly on factor analytic techniques to identify a test’s underlying dimensions and the variance associated with each dimension. Also called factorial validity (Guilford, 1950), this form of validity may utilize other methodologies such as multidimensional scaling to help researchers understand a test’s structure. Structural validity evidence is generally internal to the test, based on the analysis of constituent subtests or scoring indexes. Structural validation approaches may also combine two or more instruments in cross-battery factor analyses to explore evidence of convergent validity.

The two leading factor-analytic methodologies used to establish structural validity are exploratory and confirmatory factor analyses. Exploratory factor analyses allow for empirical derivation of the structure of an instrument, often without a priori expectations, and are best interpreted according to the psychological meaningfulness of the dimensions or factors that emerge (e.g., Gorsuch, 1983). Confirmatory factor analyses help researchers evaluate the congruence of the test data with a specified model, as well as measuring the relative fit of competing models. Confirmatory analyses explore the extent to which the proposed factor structure of a test explains its underlying dimensions as compared to alternative theoretical explanations.

As a recommended guideline, the underlying factor structure of a test should be congruent with its composite indexes (e.g., Floyd & Widaman, 1995), and the interpretive structure of a test should be the best fitting model available. For example, several interpretive indexes for the Wechsler Intelligence Scales (i.e., the verbal comprehension, perceptual organization, working memory/freedom from distractibility, and processing speed indexes) match the empirical structure suggested by subtest-level factor analyses; however, the original Verbal–Performance Scale dichotomy has never been supported unequivocally in factor-analytic studies. At the same time, leading instruments such as the MMPI-2 yield clinical symptom-based scales that do not match the structure suggested by item-level factor analyses. Several new instruments with strong theoretical underpinnings have been criticized for mismatch between factor structure and interpretive structure (e.g., Keith & Kranzler, 1999; Stinnett, Coombs, Oehler-Stinnett, Fuqua, & Palmer, 1999) even when there is a theoretical and clinical rationale for scale composition. A reasonable balance should be struck between theoretical underpinnings and empirical validation; that is, if factor analysis does not match a test’s underpinnings, is that the fault of the theory, the factor analysis, the nature of the test, or a combination of these factors? Carroll (1983), whose factoranalytic work has been influential in contemporary cognitive assessment, cautioned against overreliance on factor analysis as principal evidence of validity, encouraging use of additional sources of validity evidence that move beyond factor analysis (p. 26). Consideration and credit must be given to both theory and empirical validation results, without one taking precedence over the other.

External Evidence of Validity

Evidenceoftestscorevalidityalsoincludestheextenttowhich the test results predict meaningful and generalizable behaviors independent of actual test performance. Test results need to be validated for any intended application or decision-making process in which they play a part. In this section, external classes of evidence for test construct validity are described, including convergent, discriminant, criterion-related, and consequentialvalidity,aswellasspecializedformsofvaliditywithin these categories.

Convergent and Discriminant Validity

In a frequently cited 1959 article, D. T. Campbell and Fiske described a multitrait-multimethod methodology for investigating construct validity. In brief, they suggested that a measure is jointly defined by its methods of gathering data (e.g., self-report or parent-report) and its trait-related content (e.g., anxiety or depression). They noted that test scores should be related to (i.e., strongly correlated with) other measures of the same psychological construct (convergent evidence of validity) and comparatively unrelated to (i.e., weakly correlated with) measures of different psychological constructs (discriminant evidence of validity). The multitraitmultimethod matrix allows for the comparison of the relative strength of association between two measures of the same trait using different methods (monotrait-heteromethod correlations), two measures with a common method but tapping different traits (heterotrait-monomethod correlations), and two measures tapping different traits using different methods (heterotrait-heteromethod correlations), all of which are expected to yield lower values than internal consistency reliability statistics using the same method to tap the same trait.

The multitrait-multimethod matrix offers several advantages, such as the identification of problematic method variance. Method variance is a measurement artifact that threatens validity by producing spuriously high correlations between similar assessment methods of different traits. For example, high correlations between digit span, letter span, phoneme span, and word span procedures might be interpreted as stemming from the immediate memory span recall method common to all the procedures rather than any specific abilities being assessed. Method effects may be assessed by comparing the correlations of different traits measured with the same method (i.e., monomethod correlations) and the correlations among different traits across methods (i.e., heteromethod correlations). Method variance is said to be present if the heterotrait-monomethod correlations greatly exceed the heterotrait-heteromethod correlations in magnitude, assuming that convergent validity has been demonstrated.

Fiske and Campbell (1992) subsequently recognized shortcomings in their methodology: “We have yet to see a really good matrix: one that is based on fairly similar concepts and plausibly independent methods and shows high convergent and discriminant validation by all standards” (p. 394). At the same time, the methodology has provided a useful framework for establishing evidence of validity.

Criterion-Related Validity

How well do test scores predict performance on independent criterion measures and differentiate criterion groups? The relationship of test scores to relevant external criteria constitutes evidence of criterion-related validity, which may take several different forms. Evidence of validity may include criterion scores that are obtained at about the same time (concurrent evidence of validity) or criterion scores that are obtained at some future date (predictive evidence of validity). External criteria may also include functional, real-life variables (ecological validity), diagnostic or placement indexes (diagnostic validity), and intervention-related approaches (treatment validity).

The emphasis on understanding the functional implications of test findings has been termed ecological validity (Neisser, 1978). Banaji and Crowder (1989) suggested, “If research is scientifically sound it is better to use ecologically lifelike rather than contrived methods” (p. 1188). In essence, ecological validation efforts relate test performance to various aspects of person-environment functioning in everyday life, including identification of both competencies and deficits in social and educational adjustment. Test developers should show the ecological relevance of the constructs a test purports to measure, as well as the utility of the test for predicting everyday functional limitations for remediation. In contrast, tests based on laboratory-like procedures with little or no discernible relevance to real life may be said to have little ecological validity.

The capacity of a measure to produce relevant applied group differences has been termed diagnostic validity (e.g., Ittenbach, Esters, & Wainer, 1997). When tests are intended for diagnostic or placement decisions, diagnostic validity refers to the utility of the test in differentiating the groups of concern. The process of arriving at diagnostic validity may be informed by decision theory, a process involving calculations of decision-making accuracy in comparison to the base rate occurrence of an event or diagnosis in a given population. Decision theory has been applied to psychological tests (Cronbach & Gleser, 1965) and other high-stakes diagnostic tests (Swets, 1992) and is useful for identifying the extent to which tests improve clinical or educational decision-making.

The method of contrasted groups is a common methodology to demonstrate diagnostic validity. In this methodology, test performance of two samples that are known to be different on the criterion of interest is compared. For example, a test intended to tap behavioral correlates of anxiety should show differences between groups of normal individuals and individuals diagnosed with anxiety disorders. A test intended for differential diagnostic utility should be effective in differentiating individuals with anxiety disorders from diagnoses that appear behaviorally similar. Decision-making classification accuracy may be determined by developing cutoff scores or rules to differentiate the groups, so long as the rules show adequate sensitivity, specificity, positive predictive power, and negative predictive power. These terms may be defined as follows:

- Sensitivity: the proportion of cases in which a clinical condition is detected when it is in fact present (true positive).

- Specificity: the proportion of cases for which a diagnosis is rejected, when rejection is in fact warranted (true negative).

- Positive predictive power: the probability of having the diagnosis given that the score exceeds the cutoff score.

- Negative predictive power: the probability of not having the diagnosis given that the score does not exceed the cutoff score.

All of these indexes of diagnostic accuracy are dependent upon the prevalence of the disorder and the prevalence of the score on either side of the cut point.

Findings pertaining to decision-making should be interpreted conservatively and cross-validated on independent samples because (a) classification decisions should in practice be based upon the results of multiple sources of information rather than test results from a single measure, and (b) the consequences of a classification decision should be considered in evaluating the impact of classification accuracy. A false negative classification, in which a child is incorrectly classified as not needing special education services, could mean the denial of needed services to a student. Alternately, a false positive classification, in which a typical child is recommended for special services, could result in a child’s being labeled unfairly.

Treatment validity refers to the value of an assessment in selecting and implementing interventions and treatments that will benefit the examinee. “Assessment data are said to be treatmentvalid,”commentedBarrios(1988),“iftheyexpedite the orderly course of treatment or enhance the outcome of treatment” (p. 34). Other terms used to describe treatment validity are treatment utility (Hayes, Nelson, & Jarrett, 1987) and rehabilitation-referenced assessment (Heinrichs, 1990).

Whether the stated purpose of clinical assessment is description, diagnosis, intervention, prediction, tracking, or simply understanding, its ultimate raison d’être is to select and implement services in the best interests of the examinee, that is, to guide treatment. In 1957, Cronbach described a rationale for linking assessment to treatment: “For any potential problem, there is some best group of treatments to use and best allocation of persons to treatments” (p. 680).

The origins of treatment validity may be traced to the concept of aptitude by treatment interactions (ATI) originally proposed by Cronbach (1957), who initiated decades of research seeking to specify relationships between the traits measured by tests and the intervention methodology used to produce change. In clinical practice, promising efforts to match client characteristics and clinical dimensions to preferred therapist characteristics and treatment approaches have been made (e.g., Beutler & Clarkin, 1990; Beutler & Harwood, 2000; Lazarus, 1973; Maruish, 1999), but progress has been constrained in part by difficulty in arriving at consensus for empirically supported treatments (e.g., Beutler, 1998). In psychoeducational settings, test results have been shown to have limited utility in predicting differential responses to varied forms of instruction (e.g., Reschly, 1997). It is possible that progress in educational domains has been constrained by underestimation of the complexity of treatment validity. For example, many ATI studies utilize overly simple modalityspecific dimensions (auditory-visual learning style or verbalnonverbal pBibliography:) because of their easy appeal.

Consequential Validity

In recent years, there has been an increasing recognition that test usage has both intended and unintended effects on individuals and groups. Messick (1989, 1995b) has argued that test developers must understand the social values intrinsic to the purposes and application of psychological tests, especially those that may act as a trigger for social and educational actions. Linn (1998) has suggested that when governmental bodies establish policies that drive test development and implementation, the responsibility for the consequences of test usage must also be borne by the policymakers. In this context, consequential validity refers to the appraisal of value implications and the social impact of score interpretation as a basis for action and labeling, as well as the actual and potential consequences of test use (Messick, 1989; Reckase, 1998).

This new form of validity represents an expansion of traditional conceptualizations of test score validity. Lees-Haley (1996) has urged caution about consequential validity, noting its potential for encouraging the encroachment of politics into science. The Standards for Educational and Psychological Testing (1999) recognize but carefully circumscribe consequential validity:

Evidence about consequences may be directly relevant to validity when it can be traced to a source of invalidity such as construct underrepresentation or construct-irrelevant components. Evidence about consequences that cannot be so traced—that in fact reflects valid differences in performance—is crucial in informing policy decisions but falls outside the technical purview of validity. (p. 16)

Evidence of consequential validity may be collected by test developers during a period starting early in test development and extending through the life of the test (Reckase, 1998). For educational tests, surveys and focus groups have been described as two methodologies to examine consequential aspects of validity (Chudowsky & Behuniak, 1998; Pomplun, 1997). As the social consequences of test use and interpretation are ascertained, the development and determinants of the consequences need to be explored. A measure with unintended negative side effects calls for examination of alternative measures and assessment counterproposals. Consequential validity is especially relevant to issues of bias, fairness, and distributive justice.

Validity Generalization

The accumulation of external evidence of test validity becomes most important when test results are generalized across contexts, situations, and populations, and when the consequences of testing reach beyond the test’s original intent. According to Messick (1995b), “The issue of generalizability of score inferences across tasks and contexts goes to the very heart of score meaning. Indeed, setting the boundaries of score meaning is precisely what generalizability evidence is meant to address” (p. 745).

Hunter and Schmidt (1990; Hunter, Schmidt, & Jackson, 1982; Schmidt & Hunter, 1977) developed a methodology of validity generalization, a form of meta-analysis, that analyzes the extent to which variation in test validity across studies is due to sampling error or other sources of error such as imperfect reliability, imperfect construct validity, range restriction, or artificial dichotomization. Once incongruent or conflictual findings across studies can be explained in terms of sources of error, meta-analysis enables theory to be tested, generalized, and quantitatively extended.

Test Score Reliability

If measurement is to be trusted, it must be reliable. It must be consistent, accurate, and uniform across testing occasions, across time, across observers, and across samples. In psychometric terms, reliability refers to the extent to which measurement results are precise and accurate, free from random and unexplained error. Test score reliability sets the upper limit of validity and thereby constrains test validity, so that unreliable test scores cannot be considered valid.

Reliability has been described as “fundamental to all of psychology” (Li, Rosenthal, & Rubin, 1996), and its study dates back nearly a century (Brown, 1910; Spearman, 1910).

Concepts of reliability in test theory have evolved, including emphasis in IRT models on the test information function as an advancement over classical models (e.g., Hambleton et al., 1991) and attempts to provide new unifying and coherent models of reliability (e.g., Li & Wainer, 1997). For example, Embretson (1999) challenged classical test theory tradition by asserting that “Shorter tests can be more reliable than longer tests” (p. 12) and that “standard error of measurement differs between persons with different response patterns but generalizes across populations” (p. 12). In this section, reliability is described according to classical test theory and item response theory. Guidelines are provided for the objective evaluation of reliability.

Internal Consistency

Determination of a test’s internal consistency addresses the degree of uniformity and coherence among its constituent parts. Tests that are more uniform tend to be more reliable. As a measure of internal consistency, the reliability coefficient is the square of the correlation between obtained test scores and true scores; it will be high if there is relatively little error but low with a large amount of error. In classical test theory, reliability is based on the assumption that measurement error is distributed normally and equally for all score levels. By contrast, item response theory posits that reliability differs between persons with different response patterns and levels of ability but generalizes across populations (Embretson & Hershberger, 1999).

Several statistics are typically used to calculate internal consistency. The split-half method of estimating reliability effectively splits test items in half (e.g., into odd items and even items) and correlates the score from each half of the test with the score from the other half. This technique reduces the number of items in the test, thereby reducing the magnitude of the reliability. Use of the Spearman-Brown prophecy formula permits extrapolation from the obtained reliability coefficient to original length of the test, typically raising the reliability of the test. Perhaps the most common statistical index of internal consistency is Cronbach’s alpha, which provides a lower bound estimate of test score reliability equivalent to the average split-half consistency coefficient for all possible divisions of the test into halves. Note that item response theory implies that under some conditions (e.g., adaptive testing, in which the items closest to an examinee’s ability level need be measured) short tests can be more reliable than longer tests (e.g., Embretson, 1999).

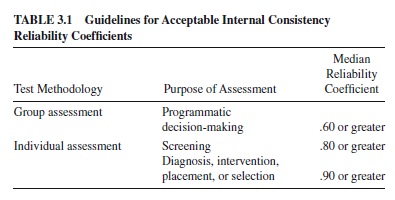

In general, minimal levels of acceptable reliability should be determined by the intended application and likely consequences of test scores. Several psychometricians have proposed guidelines for the evaluation of test score reliability coefficients (e.g., Bracken, 1987; Cicchetti, 1994; Clark & Watson, 1995; Nunnally & Bernstein, 1994; Salvia & Ysseldyke, 2001), depending upon whether test scores are to be used for high- or low-stakes decision-making. High-stakes tests refer to tests that have important and direct consequences such as clinical-diagnostic, placement, promotion, personnel selection, or treatment decisions; by virtue of their gravity, these tests require more rigorous and consistent psychometric standards. Low-stakes tests, by contrast, tend to have only minor or indirect consequences for examinees.

After a test meets acceptable guidelines for minimal acceptable reliability, there are limited benefits to furtheri ncreasing reliability. Clark and Watson (1995) observe that “Maximizing internal consistency almost invariably produces a scale that is quite narrow in content; if the scale is narrower than the target construct, it svalidity is compromised” (pp.316–317). Nunnally and Bernstein (1994, p. 265) state more directly: “Never switch to a less valid measure simply because it is more reliable.”

Local Reliability and Conditional Standard Error

Internal consistency indexes of reliability provide a single average estimate of measurement precision across the full range of test scores. In contrast, local reliability refers to measurement precision at specified trait levels or ranges of scores. Conditional error refers to the measurement variance at a particular level of the latent trait, and its square root is a conditional standard error. Whereas classical test theory posits that the standard error of measurement is constant and applies to all scores in a particular population, item response theory posits that the standard error of measurement varies according to the test scores obtained by the examinee but generalizes across populations (Embretson & Hershberger, 1999).

As an illustration of the use of classical test theory in the determination of local reliability, the Universal Nonverbal Intelligence Test (UNIT; Bracken & McCallum, 1998) presents local reliabilities from a classical test theory orientation. Based on the rationale that a common cut score for classification of individuals as mentally retarded is an FSIQ equal to 70, the reliability of test scores surrounding that decision point was calculated. Specifically, coefficient alpha reliabilities were calculated for FSIQs from – 1.33 and – 2.66 standard deviations below the normative mean. Reliabilities were corrected for restriction in range, and results showed that composite IQ reliabilities exceeded the .90 suggested criterion. That is, the UNIT is sufficiently precise at this ability range to reliably identify individual performance near to a common cut point for classification as mentally retarded.

Item response theory permits the determination of conditional standard error at every level of performance on a test. Several measures, such as the Differential Ability Scales (Elliott, 1990) and the Scales of Independent Behavior— Revised (SIB-R; Bruininks, Woodcock, Weatherman, & Hill, 1996), report local standard errors or local reliabilities for every test score. This methodology not only determines whether a test is more accurate for some members of a group (e.g., high-functioning individuals) than for others (Daniel, 1999), but also promises that many other indexes derived from reliability indexes (e.g., index discrepancy scores) may eventually become tailored to an examinee’s actual performance. Several IRT-based methodologies are available for estimating local scale reliabilities using conditional standard errors of measurement (Andrich, 1988; Daniel, 1999; Kolen, Zeng, & Hanson, 1996; Samejima, 1994), but none has yet become a test industry standard.

Temporal Stability

Are test scores consistent over time? Test scores must be reasonably consistent to have practical utility for making clinical and educational decisions and to be predictive of future performance. The stability coefficient, or test-retest score reliability coefficient, is an index of temporal stability that can be calculated by correlating test performance for a large number of examinees at two points in time. Two weeks is considered a preferred test-retest time interval (Nunnally & Bernstein, 1994; Salvia & Ysseldyke, 2001), because longer intervals increase the amount of error (due to maturation and learning) and tend to lower the estimated reliability.

Bracken (1987; Bracken & McCallum, 1998) recommends that a total test stability coefficient should be greater than or equal to .90 for high-stakes tests over relatively short test-retest intervals, whereas a stability coefficient of .80 is reasonable for low-stakes testing. Stability coefficients may be spuriously high, even with tests with low internal consistency, but tests with low stability coefficients tend to have low internal consistency unless they are tapping highly variable state-based constructs such as state anxiety (Nunnally & Bernstein, 1994). As a general rule of thumb, measures of internal consistency are preferred to stability coefficients as indexes of reliability.

Interrater Consistency and Consensus

Whenever tests require observers to render judgments, ratings, or scores for a specific behavior or performance, the consistency among observers constitutes an important source of measurement precision. Two separate methodological approaches have been utilized to study consistency and consensus among observers: interrater reliability (using correlational indexes to reference consistency among observers) and interrater agreement (addressing percent agreement among observers; e.g., Tinsley & Weiss, 1975). These distinctive approaches are necessary because it is possible to have high interrater reliability with low manifest agreement among raters if ratings are different but proportional. Similarly, it is possible to have low interrater reliability with high manifest agreement among raters if consistency indexes lack power because of restriction in range.

Interrater reliability refers to the proportional consistency of variance among raters and tends to be correlational. The simplest index involves correlation of total scores generated by separate raters. The intraclass correlation is another index of reliability commonly used to estimate the reliability of ratings. Its value ranges from 0 to 1.00, and it can be used to estimate the expected reliability of either the individual ratings provided by a single rater or the mean rating provided by a group of raters (Shrout & Fleiss, 1979). Another index of reliability, Kendall’s coefficient of concordance, establishes how much reliability exists among ranked data. This procedure is appropriate when raters are asked to rank order the persons or behaviors along a specified dimension.

Interrater agreement refers to the interchangeability of judgments among raters, addressing the extent to which raters make the same ratings. Indexes of interrater agreement typically estimate percentage of agreement on categorical and rating decisions among observers, differing in the extent to which they are sensitive to degrees of agreement correct for chance agreement. Cohen’s kappa is a widely used statistic of interobserver agreement intended for situations in which raters classify the items being rated into discrete, nominal categories. Kappa ranges from – 1.00 to +1.00; kappa values of .75 or higher are generally taken to indicate excellent agreement beyond chance, values between .60 and .74 are considered good agreement, those between .40 and .59 are considered fair, and those below .40 are considered poor (Fleiss, 1981).

Interrater reliability and agreement may vary logically depending upon the degree of consistency expected from specific sets of raters. For example, it might be anticipated that people who rate a child’s behavior in different contexts (e.g., school vs. home) would produce lower correlations than two raters who rate the child within the same context (e.g., two parents within the home or two teachers at school). In a review of 13 preschool social-emotional instruments, the vast majority of reported coefficients of interrater congruence were below .80 (range .12 to .89). Walker and Bracken (1996) investigated the congruence of biological parents who rated their children on four preschool behavior rating scales. Interparent congruence ranged from a low of .03 (Temperament Assessment Battery for Children Ease of Management through Distractibility) to a high of .79 (Temperament Assessment Battery for Children Approach/Withdrawal). In addition to concern about low congruence coefficients, the authors voiced concern that 44% of the parent pairs had a mean discrepancy across scales of 10 to 13 standard score points; differences ranged from 0 to 79 standard score points.

Interrater studies are preferentially conducted under field conditions, to enhance generalizability of testing by clinicians “performing under the time constraints and conditions of their work” (Wood, Nezworski, & Stejskal, 1996, p. 4). Cone (1988) has described interscorer studies as fundamental to measurement, because without scoring consistency and agreement, many other reliability and validity issues cannot be addressed.

Congruence Between Alternative Forms

When two parallel forms of a test are available, then correlating scores on each form provides another way to assess reliability. In classical test theory, strict parallelism between forms requires equality of means, variances, and covariances (Gulliksen, 1950). A hierarchy of methods for pinpointing sources of measurement error with alternative forms has been proposed (Nunnally & Bernstein, 1994; Salvia & Ysseldyke, 2001): (a) assess alternate-form reliability with a two-week interval between forms, (b) administer both forms on the same day, and if necessary (c) arrange for different raters to score the forms administered with a two-week retest interval and on the same day. If the score correlation over the twoweek interval between the alternative forms is lower than coefficient alpha by .20 or more, then considerable measurement error is present due to internal consistency, scoring subjectivity, or trait instability over time. If the score correlation is substantially higher for forms administered on the same day, then the error may stem from trait variation over time. If the correlations remain low for forms administered on the same day, then the two forms may differ in content with one form being more internally consistent than the other. If trait variation and content differences have been ruled out, then comparison of subjective ratings from different sources may permit the major source of error to be attributed to the subjectivity of scoring.

In item response theory, test forms may be compared by examining the forms at the item level. Forms with items of comparable item difficulties, response ogives, and standard errors by trait level will tend to have adequate levels of alternate form reliability (e.g., McGrew & Woodcock, 2001). For example, when item difficulties for one form are plotted against those for the second form, a clear linear trend is expected. When raw scores are plotted against trait levels for the two forms on the same graph, the ogive plots should be identical.

At the same time, scores from different tests tapping the same construct need not be parallel if both involve sets of itemsthatareclosetotheexaminee’sabilitylevel.Asreported by Embretson (1999), “Comparing test scores across multiple forms is optimal when test difficulty levels vary across persons” (p. 12).The capacity of IRTto estimate trait level across differing tests does not require assumptions of parallel forms or test equating.

Reliability Generalization

Reliability generalization is a meta-analytic methodology that investigates the reliability of scores across studies and samples (Vacha-Haase, 1998). An extension of validity generalization (Hunter & Schmidt, 1990; Schmidt & Hunter, 1977), reliability generalization investigates the stability of reliability coefficients across samples and studies. In order to demonstrate measurement precision for the populations for which a test is intended, the test should show comparable levels of reliability across various demographic subsets of the population (e.g., gender, race, ethnic groups), as well as salient clinical and exceptional populations.

Test Score Fairness